Inferring Robot Task Plans from Human Team Meetings: A Generative Modeling Approach with Logic-Based Prior

We aim to reduce the burden of programming and deploying autonomous systems to work in concert with people in time-critical domains, such as military field operations and disaster response. Deployment plans for these operations are frequently negotia…

Authors: Been Kim, Caleb M. Chacha, Julie Shah

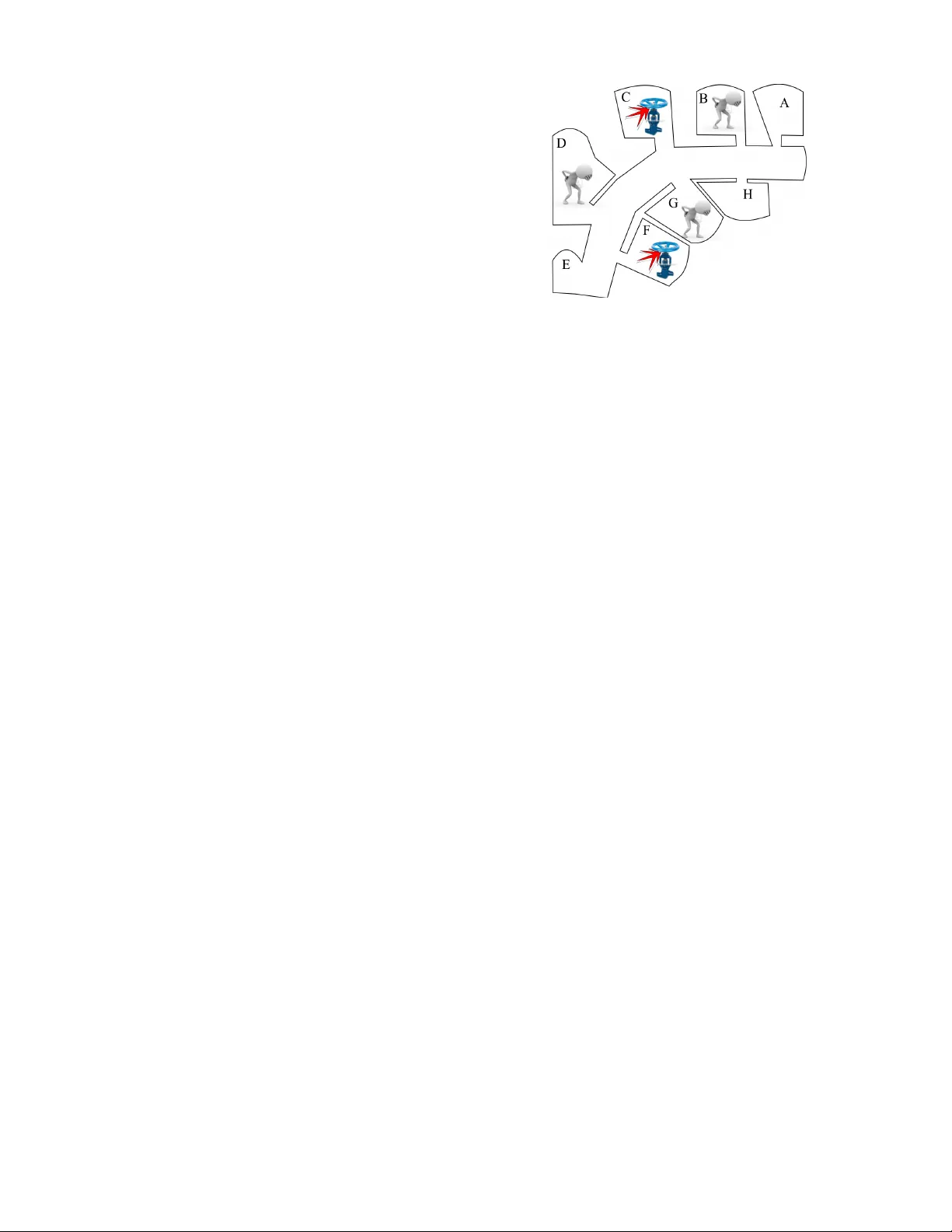

Massachusetts Institute of Technology, Cambridge, Massachusetts 02139
{beenkim, c_chacha, julie_a_shah}@csail.mit.edu

Abstract

We aim to reduce the burden of programming and deploying autonomous systems to work in concert with people in time-critical domains, such as military field operations and disaster response. Deployment plans for these operations are frequently negotiated on-the-fly by teams of human planners. A human operator then translates the agreed-upon plan into machine instructions for the robots. We present an algorithm that reduces this translation burden by inferring the final plan from a processed form of the human team’s planning conversation. Our approach combines probabilistic generative modeling with logical plan validation, which is used to compute a highly structured prior over possible plans. This hybrid approach enables us to overcome the challenge of performing inference over the large solution space with only a small amount of noisy data from the team planning session. We validate the algorithm through human subject experimentation and show we are able to infer a human team’s final plan with 83% accuracy on average. We also describe a robot demonstration in which two people plan and execute a first-response collaborative task with a PR2 robot. To the best of our knowledge, this is the first work that integrates a logical planning technique within a generative model to perform plan inference.

Introduction

Robots are increasingly introduced to work in concert with people in high-intensity domains, such as military field operations and disaster response. They have been deployed to gain access to areas that are inaccessible to people (Casper and Murphy 2003; Micire 2002), and to inform situation assessment (Larochelle et al. 2011).
The human-robot interface has long been identified as a major bottleneck in utilizing these robotic systems to their full potential (Murphy 2004). As a result, we have seen significant research efforts aimed at easing the use of these systems in the field, including careful design and validation of supervisory and control interfaces (Jones et al. 2002; Cummings, Brzezinski, and Lee 2007; Barnes et al. 2011; Goodrich et al. 2009). Much of this prior work has focused on ease of use at “execution time.” However, a significant bottleneck also exists in planning the deployments of autonomous systems and in programming these systems to coordinate their task execution with the rest of the human team. Deployment plans are frequently negotiated by human team members on-the-fly and under time pressure (Casper and Murphy 2002; Casper and Murphy 2003). For a robot to execute part of this plan, a human operator must transcribe and translate the result of the team planning session.

Copyright © 2018, Association for the Advancement of Artificial Intelligence (www.aaai.org). All rights reserved.

In this paper we present an algorithm that reduces this translation burden by inferring the final plan from a processed form of the human team’s planning conversation. Our approach combines probabilistic generative modeling with logical plan validation, which is used to compute a highly structured prior over possible plans. This hybrid approach enables us to overcome the challenge of performing inference over the large solution space with only a small amount of noisy data from the team planning session.

Rather than working with raw natural language, our algorithm takes as input a structured form of the human dialog. Processing human dialog into more machine-understandable forms is an important research area (Kruijff, Janíček, and Lison 2010; Tellex et al. 2011; Koomen et al. 2005; Palmer, Gildea, and Xue 2010; Pradhan et al. 2004), but we view this as a separate problem and do not focus on it in this paper.

The form of input we use preserves many of the challenging aspects of natural human planning conversations and can be thought of as very “noisy” observations of the final plan. Because the team is discussing the plan under time pressure, the planning sessions often consist of a small number of succinct communications. Our approach reliably infers the majority of the final agreed-upon plan, despite the small amount of noisy data.

We validate the algorithm through human subject experimentation and show we are able to infer a human team’s final plan with 83% accuracy on average. We also describe a robot demonstration in which two people plan and execute a first-response collaborative task with a PR2 robot. To the best of our knowledge, this is the first work that integrates a logical planning technique within a generative model to perform plan inference.

Problem Formulation

Disaster response teams are increasingly utilizing web-based planning tools to plan their deployments (Di Ciaccio, Pullen, and Breimyer 2011). Dozens to hundreds of responders log in to plan their deployment using audio/video conferencing, text chat, and annotatable maps. In this work, we focus on inferring the final plan using the text data that can be logged from chat or transcribed speech. This section formally describes our problem formulation, including the inputs and outputs of our inference algorithm.

Planning Problem

A plan consists of a set of actions together with execution timestamps associated with those actions. A plan is valid if it achieves a user-specified goal state without violating user-specified plan constraints. In our work, the full set of possible actions is not necessarily specified a priori, and actions may also be derived from the dialog.

Actions may be constrained to execute in sequence or in parallel with other actions.
Other plan constraints include discrete resource constraints (e.g. there are two medical teams) and temporal deadlines on time-durative actions (e.g. a robot can be deployed for up to one hour at a time due to battery life constraints). Formally, we assume our planning problem may be represented in the Planning Domain Definition Language (PDDL) 2.1.

In this paper, we consider a fictional rescue scenario that involves a radioactive material leakage accident in a building with multiple rooms, as shown in Figure 1. This is the scenario we use in human subject experiments for proof-of-concept of our approach. A room either has a patient that needs to be assessed in person or a valve that needs to be fixed. The scenario is specified by the following information.

Goal State: All patients are assessed in person by a medical crew. All valves are fixed by a mechanic. All rooms are inspected by a robot.

Constraints: For safety, the radioactivity of a room must be inspected by a robot before human crews can be sent to the room (sequence constraint). There are two medical crews, red and blue (discrete resource constraint). There is one human mechanic (discrete resource constraint). There are two robots, red and blue (discrete resource constraint).

Assumption: All tasks (e.g. inspecting a room, fixing a valve) take the same unit of time and there are no hard temporal constraints in the plan. This assumption was made to conduct the initial proof-of-concept experimentation described in this paper. However, the technical approach readily generalizes to variable task durations and temporal constraints, as described later.

The scenario produces a very large number of possible plans (more than $10^{12}$), many of which are valid for achieving the goals without violating the constraints.

We assume that the team reaches an agreement on a final plan.
Techniques introduced by Kim, Bush, and Shah (2013) can be used to detect the strength of agreement, and to encourage the team to discuss further to reach an agreement if necessary. We leave for future study the situation where the team agrees on a flexible plan with multiple options to be decided among later. While we assume that the team is more likely to agree on a valid plan, we do not rule out the possibility that the final plan is invalid.

Figure 1: Fictional rescue scenario

Algorithm Input

Text data from the human team conversation is collected in the form of utterances, where each utterance is one person’s turn in the discussion, as shown in Table 1. The input to our algorithm is a machine-understandable form of human conversation data, as illustrated in the right-hand column of Table 1. This structured form captures the actions discussed and the proposed ordering relations among actions for each utterance.

Although we are not working with raw natural language, this form of data still captures many of the characteristics that make plan inference based on human conversation challenging. Table 1 shows part of the data, using the following shorthand:

ST = SendTo
rr = red robot, br = blue robot
rm = red medical, bm = blue medical
e.g. ST(br, A) = SendTo(blue robot, room A)

Utterance tagging. An utterance is tagged as an ordered tuple of sets of grounded predicates. Each predicate represents an action applied to a set of objects (crew member, robot, room, etc.), as is the standard definition of a dynamic predicate in PDDL. Each set of grounded predicates represents a collection of actions that, according to the utterance, should happen in parallel. The order of the sets of grounded predicates indicates the relative order in which these collections of actions should happen.
For example, ({ST(rr, B), ST(br, G)}, {ST(rm, B)}) corresponds to simultaneously sending the red robot to room B and the blue robot to room G, followed by sending the red medical team to room B.

| | Natural dialogue | Structured form |
|---|---|---|
| U1 | So I suggest using Red robot to cover “upper” rooms (A, B, C, D) and Blue robot to cover “lower” rooms (E, F, G, H). | ({ST(rr,A), ST(br,E), ST(rr,B), ST(br,F), ST(rr,C), ST(br,G), ST(rr,D), ST(br,H)}) |
| U2 | Okay. so first send Red robot to B and Blue robot to G? | ({ST(rr,B), ST(br,G)}) |
| U3 | Our order of inspection would be (B, C, D, A) for Red and then (G, F, E, H) for Blue. | ({ST(rr,B), ST(br,G)}, {ST(rr,C), ST(br,F)}, {ST(rr,D), ST(br,E)}, {ST(rr,A), ST(br,H)}) |
| U4 | Oops I meant (B, D, C, A) for Red. | ({ST(rr,B)}, {ST(rr,D)}, {ST(rr,C)}, {ST(rr,A)}) |
| ··· | | |
| U5 | So we can have medical crew go to B when robot is inspecting C | ({ST(m,B), ST(r,C)}) |
| ··· | | |
| U6 | First, Red robot inspects B | ({ST(r,B)}) |
| U7 | Yes, and then Red robot inspects D, Red medical crew to treat B | ({ST(r,D), ST(rm,B)}) |

Table 1: Dialogue and structured form examples

As one can see, the structured dialog is still very noisy. Each utterance (i.e. U1-U7) discusses a partial plan, and only predicates that are explicitly mentioned in the utterance are tagged (e.g. U6-U7: the “and then” in U7 implies a sequencing constraint with the predicate discussed in U6, but the structured form of U7 does not include ST(r,B)). Typos and misinformation are tagged as they are (e.g. U3), and utterances that revise information are not placed in context (e.g. U4). Utterances that clearly violate ordering constraints (e.g. U1: all actions cannot happen at the same time) are tagged as they are. In addition, the information regarding whether the utterance was a suggestion, rejection, or agreement of a partial plan is not coded.
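As an illustration only, and not the authors' implementation, the structured form described above could be held in Python as an ordered tuple of frozensets. The names `ST`, `Utterance`, and `relative_steps` are hypothetical helpers introduced for this sketch; the utterances are copied from Table 1 and from the example in the text.

```python
from typing import Dict, FrozenSet, Tuple

# A grounded predicate, e.g. ST(rr, B) is stored as ("ST", "rr", "B").
Predicate = Tuple[str, ...]
# An utterance tag: an ordered tuple of sets; each set holds parallel actions.
Utterance = Tuple[FrozenSet[Predicate], ...]


def ST(agent: str, room: str) -> Predicate:
    """Shorthand for SendTo(agent, room), following the Table 1 notation."""
    return ("ST", agent, room)


# Example from the text: red robot to B and blue robot to G, then red medical to B.
EXAMPLE: Utterance = (
    frozenset({ST("rr", "B"), ST("br", "G")}),
    frozenset({ST("rm", "B")}),
)

# U4 in Table 1: "Oops I meant (B, D, C, A) for Red."
U4: Utterance = (
    frozenset({ST("rr", "B")}),
    frozenset({ST("rr", "D")}),
    frozenset({ST("rr", "C")}),
    frozenset({ST("rr", "A")}),
)


def relative_steps(utterance: Utterance) -> Dict[Predicate, int]:
    """Map each predicate to its 1-based relative position within the utterance."""
    return {
        pred: position
        for position, group in enumerate(utterance, start=1)
        for pred in group
    }
```

Frozensets are used here so that the order of predicates inside a parallel group carries no meaning, while the tuple keeps the relative order of the groups.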
Note that the utterance tagging only contains information about the relative ordering between the predicates appearing in that utterance, not the absolute ordering of their appearance in the final plan. For example, U2 specifies that the two grounded predicates happen at the same time. It does not say when the two predicates happen in the final plan or whether other predicates happen in parallel. This simulates how humans perceive the conversation: at each utterance, humans only observe the relative ordering, and infer the absolute order of predicates based on the whole conversation and an understanding of which orderings make a valid plan.

Algorithm Output

The output of the algorithm is an inferred final plan, which is sampled from the probability distribution over final plans. The final plan has a similar representation to the structured utterance tags, and is represented as an ordered tuple of sets of grounded predicates. The predicates in each set represent actions that happen in parallel, and the ordering of sets indicates sequence. Unlike the utterance tags, the sequence ordering relations in the final plan represent the absolute order in which the actions are to be carried out. For example, a plan can be represented as $(\{A_1, A_2\}, \{A_3\}, \{A_4, A_5, A_6\})$, where $A_i$ represents a predicate. In this plan, $A_1$ and $A_2$ will happen at plan time step 1, $A_3$ happens at plan time step 2, and so on.

Technical Approach and Related Work

It is natural to take a probabilistic approach to the plan inference problem since we are working with noisy data. However, the combination of a small amount of noisy data and a very large number of possible plans means that an approach using typical uninformative priors over plans will frequently fail to converge to the team’s plan in a timely manner.

This problem could also be approached as a logical constraint problem of partial order planning, if there were no noise in the utterances.
In other words, if the team discussed only the partial plans relating to the final plan and did not make any errors or revisions, then a plan generator such as a PDDL solver (Coles et al. 2009) could produce the final plan with global sequencing. Unfortunately, human conversation data is sufficiently noisy to preclude this approach.

This motivates a combined approach. We build a probabilistic generative model for the structured utterance observations. We use a logic-based plan validator (Howey, Long, and Fox 2004) to compute a highly structured prior distribution over possible plans, which encodes our assumption that the final plan is likely, but not required, to be a valid plan. This combined approach naturally deals with noise in the data and the challenge of performing inference over plans with only a small amount of data. We perform sampling inference in the model using Gibbs sampling, with Metropolis-Hastings steps within it, to approximate the posterior distribution over final plans. We show through empirical validation with human subject experiments that the algorithm achieves 83% accuracy on average.

Combining a logical approach with probabilistic modeling has gained interest in recent years. Getoor and Mihalkova (2011) introduce a language for describing statistical models over typed relational domains and demonstrate model learning using noisy and uncertain real-world data. Poon and Domingos (2006) introduce statistical sampling to improve the efficiency of search for satisfiability testing. Markov logic networks (Richardson and Domingos 2006; Singla and Domingos 2007; Poon and Domingos 2009; Raedt 2008) form the joint distribution of a probabilistic graphical model by weighting the formulas in a first-order logic.

Our approach shares with Markov logic networks the philosophy of combining logical tools with probabilistic modeling.
Markov logic networks utilize a general first-order logic to help infer relationships among objects, but they do not explicitly address the planning problem, and we note that the formulation and solution of planning problems in first-order logic is often inefficient. Our approach instead exploits the highly structured planning domain by integrating a widely used logical plan validator within the probabilistic generative model.

Algorithm

Generative Model

In this section, we explain the generative model of human team planning dialog (Figure 2) that we use to infer the team’s final plan. We start with a plan latent variable that must be inferred by observing utterances in the planning session. The model generates each utterance in the conversation by sampling a subset of the predicates in plan and computing the relative ordering in which they appear in the utterance. This mapping from the absolute ordering in plan to the relative ordering of predicates in an utterance is described in more detail below. Since the conversation is short and the noise level is high, our model does not distinguish utterances based on the order in which they appear in the conversation.

We describe the generative model step by step:

1. Variable plan: The plan variable in Figure 2 is defined as an ordered tuple of sets of grounded predicates and represents the final plan agreed upon by the team. The prior distribution over the plan variable is given by:

$$p(\mathit{plan}) \propto \begin{cases} e^{\alpha} & \text{if plan is valid} \\ 1 & \text{if plan is invalid,} \end{cases} \tag{1}$$

where $\alpha$ is a positive number. This models our assumption that the final plan is more likely, but not necessarily required, to be a valid plan. We describe in the PDDL Validator section how we evaluate the validity of a plan. Each set of predicates in plan is assigned a consecutive absolute plan step index $s$, starting at $s = 1$ and working from left to right in the ordered tuple.
For example, given $\mathit{plan} = (\{A_1, A_2\}, \{A_3\}, \{A_4, A_5, A_6\})$, where each $A_i$ is a predicate, $A_1$ and $A_2$ occur in parallel at plan step $s = 1$ and $A_6$ occurs at plan step $s = 3$.

Figure 2: The generative model (variables plan, $s_t^n$, $s'_t$, $p_t^n$; parameters $\alpha$, $\beta$, $w_p$; plates $N$ and $T$)

2. Variable $s_t^n$: Each of the $n$ predicates in an utterance $t$ is assigned a step index $s_t^n$. $s_t^n$ is given by the absolute plan step $s$ where the corresponding predicate $p_t^n$ (explained later) appears in plan.

$s_t^n$ is sampled as follows. For each utterance, $n$ predicates are sampled from plan. For example, consider $n = 2$, where the first sampled predicate appears in the second set of plan and the second sampled predicate appears in the fourth set of plan. Then $s_t^1 = 2$ and $s_t^2 = 4$. The probability of a set being sampled is proportional to the number of predicates in the set. For example, given $\mathit{plan} = (\{A_1, A_2\}, \{A_3\}, \{A_4, A_5, A_6\})$, the probability of selecting the first set $\{A_1, A_2\}$ is $2/6$. This models that people are more likely to discuss plan steps that include many predicates. Formally,

$$p(s_t^n = i \mid \mathit{plan}) = \frac{\#\ \text{predicates in set } i \text{ in plan}}{\text{total } \#\ \text{predicates in plan}}. \tag{2}$$

3. Variable $s'_t$: The variable $s'_t$ is an array of size $n$ that specifies, for each utterance $t$, the relative ordering of predicates as they appear in plan. $s'_t$ is generated from $s_t$ as follows:

$$p(s'_t \mid s_t) \propto \begin{cases} e^{\beta} & \text{if } s'_t = f(s_t) \\ 1 & \text{if } s'_t \neq f(s_t), \end{cases} \tag{3}$$

where $\beta > 0$. The function $f$ is a deterministic mapping from the absolute ordering $s_t$ to the relative ordering $s'_t$. $f$ takes as input a vector of absolute plan step indices and produces a vector of consecutive indices. For example, $f$ maps $s_t = (2, 4)$ to $s'_t = (1, 2)$, and $s_t = (5, 7, 2)$ to $s'_t = (2, 3, 1)$.
This variable models the way predicates and their orders appear in human conversation; people do not often refer to the absolute ordering, and frequently use relative terms such as “before” and “after” to describe partial sequences of the full plan. People also make mistakes or otherwise incorrectly specify an ordering, hence our model allows for inconsistent relative orderings with nonzero probability.

4. Variable $p_t^n$: The variable $p_t^n$ represents the $n$th predicate that appears in the $t$th utterance. It is sampled given $s_t^n$, the plan variable, and a noise parameter $w_p$.

With probability $w_p$, we sample the predicate $p_t^n$ uniformly from the “correct” set $s_t^n$ in plan as follows:

$$p(p_t^n = i \mid \mathit{plan}, s_t^n = j) = \begin{cases} \dfrac{1}{\#\ \text{pred. in set } j} & \text{if } i \text{ is in set } j \\ 0 & \text{otherwise.} \end{cases}$$

With probability $1 - w_p$, we sample the predicate $p_t^n$ uniformly from “any” set in plan (i.e. from all predicates mentioned in the dialog), therefore

$$p(p_t^n = i \mid \mathit{plan}, s_t^n = j) = \frac{1}{\text{total } \#\ \text{predicates}}. \tag{4}$$

In other words, with probability $w_p$ we sample a value for $p_t^n$ that is consistent with $s_t^n$, but we allow nonzero probability that $p_t^n$ is sampled from a random set in the plan. This allows the model to incorporate the noise in the planning conversation, including mistakes or plans that are later revised.

PDDL Validator

We use the Planning Domain Definition Language (PDDL) 2.1 plan validator tool (Howey, Long, and Fox 2004) to evaluate the prior distribution over possible plans. The plan validator is a standard tool that takes as input a planning problem described in PDDL and a proposed solution plan, and checks if the plan is valid. Some validators can also compute a value for plan quality, if the problem specification includes a plan metric. Validation is often much cheaper than generating a valid plan, and gives us a way to compute $p(\mathit{plan})$ up to proportionality in a computationally efficient manner.
Leveraging this efficiency, we use Metropolis-Hastings sampling to sample the plan without calculating the partition function, as described in the next section.

Many elements of the PDDL domain and problem specification are defined by the capabilities and resources at a particular response team’s disposal. The PDDL specification may largely be reused from mission to mission, but some elements will change or may be specified incorrectly. For example, the plan specification may misrepresent the number of responders available or may leave out implicit constraints that people take for granted. In the Experimental Evaluation section we demonstrate the robustness of our approach using both complete and degraded PDDL plan specifications.

Gibbs Sampling

We use Gibbs sampling to perform inference on the generative model. There are two latent variables to sample: plan and the collection of variables $s_t^n$. We iterate between sampling plan given all other variables and sampling the $s_t^n$ variables given all other variables. The PDDL validator is used when the plan variable is sampled.

Unlike $s_t^n$, for which we can write down an analytic form to sample from the posterior, it is intractable to directly resample the plan variable, as doing so requires calculating the number of all possible valid and invalid plans. Therefore we use a Metropolis-Hastings (MH) algorithm to sample from the plan posterior distribution within the Gibbs sampling steps. In this section, we explain how plan and the $s_t^n$ variables are sampled.

Sampling plan using Metropolis-Hastings

The posterior of plan can be represented as the product of the prior and likelihood as follows:

$$\begin{aligned} p(\mathit{plan} \mid s, p) &\propto p(\mathit{plan})\, p(s, p \mid \mathit{plan}) \\ &= p(\mathit{plan}) \prod_{t=1}^{T} \prod_{n=1}^{N} p(s_t^n, p_t^n \mid \mathit{plan}) \\ &= p(\mathit{plan}) \prod_{t=1}^{T} \prod_{n=1}^{N} p(s_t^n \mid \mathit{plan})\, p(p_t^n \mid \mathit{plan}, s_t^n) \end{aligned} \tag{5}$$

The MH sampling algorithm is widely used to sample from a distribution when direct sampling is difficult.
The typical MH algorithm defines a proposal distribution, $Q(x' \mid x_t)$, which samples a new point (i.e. $x'$: a value of the plan variable in our case) given the current point $x_t$. The new point is obtained by randomly selecting one of the possible moves, as defined below. The proposed point is accepted or rejected with probability $\min(1, \text{acceptance ratio})$.

Unlike simple cases where a Gaussian distribution can be used as a proposal distribution, our proposal distribution needs to be defined over the plan space. Recall that plan is represented as an ordered tuple of sets of predicates. First, a new point is proposed by performing one of the following moves:

• Select a predicate. If it is in the current plan, either 1) move it to the next set of predicates, 2) move it to the previous set, or 3) remove it from the current plan. If it is not in the current plan, move it to one of the existing sets.
• Select two sets in plan, and switch their order.

These moves are sufficient to reach any plan from any other plan. The probability of each of these moves is chosen such that the proposal distribution is symmetric: $Q(x' \mid x_t) = Q(x_t \mid x')$. This symmetry simplifies the calculation of the acceptance ratio.

Second, the ratio of the unnormalized posterior at the proposed and current points is calculated. When plan is valid, $p(\mathit{plan})$ is proportional to $e^{\alpha}$; when it is invalid, $p(\mathit{plan})$ is proportional to 1, as described in Equation 1. Plan validity is evaluated using the PDDL validation tool. The remaining term, $p(s_t^n \mid \mathit{plan})\, p(p_t^n \mid \mathit{plan}, s_t^n)$, is calculated using Equations 2 and 4.

Third, the proposed plan is accepted with the following probability: $\min\left(1, \frac{p^*(\mathit{plan} = x' \mid s, p)}{p^*(\mathit{plan} = x_t \mid s, p)}\right)$, where $p^*$ is a function that is proportional to the posterior distribution.
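The acceptance step can be sketched in Python as follows. This is a minimal sketch under stated assumptions, not the authors' code: `is_valid` is a hypothetical callable standing in for the external PDDL validator, only the set-swap move is implemented (it is symmetric by construction), and the two cases of Equation 4 are combined into a single mixture weighted by $w_p$, which is our reading of the text.

```python
import math
import random
from typing import Callable, FrozenSet, Sequence, Tuple

Plan = Tuple[FrozenSet[str], ...]   # ordered tuple of sets of predicates
Observation = Tuple[int, str]       # (absolute step s, predicate p); s is 1-based


def log_prior(plan: Plan, is_valid: Callable[[Plan], bool], alpha: float) -> float:
    """Equation 1 in log form: alpha for a valid plan, 0 for an invalid one."""
    return alpha if is_valid(plan) else 0.0


def log_likelihood(plan: Plan, obs: Sequence[Observation], w_p: float) -> float:
    """Sum over observations of log p(s | plan) + log p(p | plan, s)."""
    total = sum(len(group) for group in plan)
    result = 0.0
    for s, p in obs:
        if not 1 <= s <= len(plan) or not plan[s - 1]:
            return -math.inf                      # step s is absent from this plan
        group = plan[s - 1]
        p_s = len(group) / total                  # Equation 2
        in_set = 1.0 / len(group) if p in group else 0.0
        p_p = w_p * in_set + (1.0 - w_p) / total  # assumed mixture of both Eq. 4 cases
        if p_p == 0.0:
            return -math.inf
        result += math.log(p_s) + math.log(p_p)
    return result


def propose_swap(plan: Plan, rng: random.Random) -> Plan:
    """Symmetric move: choose two positions uniformly and swap the sets there."""
    if len(plan) < 2:
        return plan
    i, j = rng.sample(range(len(plan)), 2)
    sets = list(plan)
    sets[i], sets[j] = sets[j], sets[i]
    return tuple(sets)


def mh_step(plan: Plan, obs: Sequence[Observation],
            is_valid: Callable[[Plan], bool], rng: random.Random,
            alpha: float = 10.0, w_p: float = 0.8) -> Plan:
    """One Metropolis-Hastings step; the proposal ratio cancels by symmetry."""
    def log_post(x: Plan) -> float:
        return log_prior(x, is_valid, alpha) + log_likelihood(x, obs, w_p)

    candidate = propose_swap(plan, rng)
    log_ratio = log_post(candidate) - log_post(plan)
    # Accept with probability min(1, ratio), evaluated in log space.
    if log_ratio >= 0 or rng.random() < math.exp(log_ratio):
        return candidate
    return plan


def robot_first(plan: Plan) -> bool:
    """Toy stand-in for the validator: the robot must inspect B before the medic."""
    step = {p: i for i, group in enumerate(plan) for p in group}
    return step["inspect_B"] < step["medic_B"]


start: Plan = (frozenset({"medic_B"}), frozenset({"inspect_B"}))
observations = [(1, "inspect_B"), (2, "medic_B")]
after = mh_step(start, observations, robot_first, random.Random(0))
```

Working with log probabilities avoids underflow when many observation terms are multiplied. The predicate moves from the bullet list above are omitted because their selection probabilities must be tuned to keep the proposal symmetric, and the text does not give those values.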
Sampling $s_t^n$

Fortunately, there exists an analytic expression for the posterior of $s_t^n$:

$$\begin{aligned} p(s_t \mid \mathit{plan}, p_t, s'_t) &\propto p(s_t \mid \mathit{plan})\, p(p_t, s'_t \mid \mathit{plan}, s_t) \\ &= p(s_t \mid \mathit{plan})\, p(p_t \mid \mathit{plan}, s_t)\, p(s'_t \mid s_t) \\ &= p(s'_t \mid s_t) \prod_{n=1}^{N} p(s_t^n \mid \mathit{plan})\, p(p_t^n \mid \mathit{plan}, s_t^n) \end{aligned}$$

Note that this analytic expression can be expensive to evaluate if the number of possible values of $s_t^n$ is large. In that case one can marginalize out $s_t^n$, as the one variable we truly care about is the plan variable.

Experimental Evaluation

In this section we evaluate the performance of our plan inference algorithm through initial proof-of-concept human subject experimentation and show we are able to infer a human team’s final plan with 83% accuracy on average. We also describe a robot demonstration in which two people plan and execute a first-response collaborative task with a PR2 robot.

Human Team Planning Data

We designed a web-based collaboration tool that is modeled after the NICS system (Di Ciaccio, Pullen, and Breimyer 2011) used by first response teams, but with a modification that requires the team to communicate solely via text chat. Twenty-three teams of two (46 participants in total) were recruited through Amazon Mechanical Turk and the greater Boston area. Recruitment was restricted to participants located in the US to increase the probability that participants were fluent in English. Each team was provided the fictional rescue scenario described in this paper, and was asked to collaboratively plan a rescue mission. At the completion of the planning session, each participant was asked to summarize the final agreed-upon plan in the structured form described previously. An independent analyst reviewed the planning sessions to resolve discrepancies between the two members’ final plan descriptions, when necessary.
Utterance tagging was performed by three analysts: the first and second authors, and an independent analyst. Two of the three analysts tagged and reviewed each team planning session. On average, 19% of predicates mentioned per data set did not end up in the final plan.

Algorithm Implementation

The algorithm is implemented in Python, and the VAL PDDL 2.1 plan validator (Howey, Long, and Fox 2004) is used. We perform 3000 Gibbs sampling steps on the data from each planning session. Within one Gibbs sampling step, we perform 400 steps of the Metropolis-Hastings (MH) algorithm to sample the plan. Every 20th sample is selected to measure the accuracy. $w_p$ is set to 0.8, $\alpha$ to 10, and $\beta$ to 5.

Results

We evaluate the quality of the final plan produced by our algorithm in terms of (1) accuracy of task allocation among agents (e.g. which medic travels to which room), and (2) accuracy of plan sequence.

Two metrics for task allocation accuracy are evaluated: 1) [% Inferred] the percent of inferred plan predicates that appear in the team’s final plan, and 2) [% Noise Rej] the percent noise rejection of extraneous predicates that are discussed but do not appear in the team’s final plan.

We evaluate the accuracy of the plan sequence as follows. We say a pair of predicates is correctly ordered if it is consistent with the relative ordering in the true final plan. We measure the percent accuracy of sequencing [% Seq] by

$$\frac{\#\ \text{correctly ordered pairs of correct predicates}}{\text{total } \#\ \text{of pairs of correct predicates}}.$$

Only correctly estimated predicates are compared, as there is no ground truth relation for predicates that are not in the true final plan. We use this relative sequencing measure because it does not compound the sequence errors, as an absolute difference measure would (e.g. where the error in the ordering of one predicate early in the plan shifts the position of all subsequent predicates).
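The [% Inferred] and [% Seq] measures defined above can be sketched in Python as shown below. The function names are hypothetical, and the sketch assumes that each predicate appears at most once in a plan and that a pair counts as correctly ordered when "before", "after", or "in parallel" matches between the inferred and true plans. Returning 0.0 when there is nothing to compare is also an assumption; the text does not cover that case.

```python
from itertools import combinations
from typing import Dict, FrozenSet, Tuple

Plan = Tuple[FrozenSet[str], ...]   # ordered tuple of sets of predicates


def step_index(plan: Plan) -> Dict[str, int]:
    """Map each predicate to its 1-based absolute plan step."""
    return {pred: s for s, group in enumerate(plan, start=1) for pred in group}


def _sign(x: int) -> int:
    """Return -1, 0, or 1 according to the sign of x."""
    return (x > 0) - (x < 0)


def percent_inferred(inferred: Plan, truth: Plan) -> float:
    """Percent of inferred predicates that appear in the true final plan."""
    inf, tru = step_index(inferred), step_index(truth)
    if not inf:
        return 0.0                    # assumed convention for an empty plan
    return 100.0 * sum(p in tru for p in inf) / len(inf)


def percent_seq(inferred: Plan, truth: Plan) -> float:
    """Percent of pairs of correct predicates whose relative order matches."""
    inf, tru = step_index(inferred), step_index(truth)
    correct = sorted(set(inf) & set(tru))
    pairs = list(combinations(correct, 2))
    if not pairs:
        return 0.0                    # assumed convention when no pair exists
    matches = sum(
        _sign(inf[a] - inf[b]) == _sign(tru[a] - tru[b]) for a, b in pairs
    )
    return 100.0 * matches / len(pairs)


truth: Plan = (frozenset({"A1", "A2"}), frozenset({"A3"}), frozenset({"A4"}))
inferred: Plan = (frozenset({"A1"}), frozenset({"A2", "A3"}), frozenset({"X"}))
```

In this toy case three of the four inferred predicates are in the true plan, and only the pair (A1, A3) keeps its relative order, since A1 and A2 are parallel in the true plan but sequential in the inferred one.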
Overall plan accuracy is computed as the arithmetic mean of the two task allocation and one plan sequence accuracy measures. Our algorithm is evaluated under four conditions: 1) [PDDL] perfect PDDL files, 2) [PDDL-1] PDDL problem file with missing goals/constants (delete one patient and one robot), 3) [PDDL-2] PDDL domain file missing a constraint (delete the precondition that a robot needs to inspect the room before a human crew can enter), and 4) [No PDDL] using an uninformative prior over possible plans.

Results, shown in Table 2, show that our algorithm infers final plans with greater than 80% accuracy on average under all three PDDL conditions, and achieves good noise rejection of extraneous predicates discussed in conversation. We also show our approach is relatively robust to degraded PDDL specifications.

| | Task Allocation: % Inferred | Task Allocation: % Noise Rej | % Seq | Avg. |
|---|---|---|---|---|
| PDDL | 92.2 | 71 | 85.8 | 83.0 |
| PDDL-1 | 91.4 | 67.9 | 84.1 | 81.1 |
| PDDL-2 | 90.8 | 68.5 | 84.2 | 81.1 |
| No PDDL | 88.8 | 68.2 | 73.4 | 76.8 |

Table 2: Plan Accuracy Results

Concept-of-Operations Robot Demonstration

We illustrate the use of our plan inference algorithm with a robot demonstration in which two people plan and execute a first-response collaborative task with a PR2 robot. Two participants plan an impending deployment using the web-based collaborative tool that we developed. Once the planning session is complete, the dialog is manually tagged. The plan inferred from this data is confirmed with the human planners, and is provided to the robot to execute. The registration of predicates to robot actions, and room names to map locations, is performed offline in advance. While the first responders are on their way to the accident scene, the PR2 autonomously navigates to each room, performing online localization, path planning, and obstacle avoidance. The robot informs the rest of the team as it inspects each room and confirms it is safe for the human team members to enter. Video of this demo is here: http://tiny.cc/uxhcrw .
Future Work

Our aim is to reduce the burden of programming a robot to work in concert with a human team. We present an algorithm that combines a probabilistic approach with logical plan validation to infer a plan from human team conversation. We empirically demonstrate that this hybrid approach enables us to infer the team's final plan with about 83% accuracy on average. Next, we plan to investigate the speed and ease with which a human planner can "fix" the inferred plan to achieve near 100% plan accuracy. We are also investigating automatic mechanisms for translating raw natural dialog into the structured form our algorithm takes as input.

Acknowledgement

The authors thank Matt Johnson and James Saunderson for helpful discussions and feedback. This work is sponsored by ASD (R&E) under Air Force Contract FA8721-05-C-0002. Opinions, interpretations, conclusions and recommendations are those of the authors and are not necessarily endorsed by the United States Government.
