GPU-accelerated generation of correctly-rounded elementary functions

The IEEE 754-2008 standard recommends the correct rounding of some elementary functions. This requires solving the Table Maker’s Dilemma, which implies a huge amount of CPU computation time. In this paper we consider accelerating such computations, namely Lefèvre's algorithm, on Graphics Processing Units (GPUs), which are massively parallel architectures with partial SIMD (Single Instruction, Multiple Data) execution. We first propose an analysis of Lefèvre's hard-to-round argument search using the concept of continued fractions. We then propose a new parallel search algorithm that is much more efficient on GPUs thanks to its more regular control flow. We also present an efficient hybrid CPU-GPU deployment of the generation of the polynomial approximations required in Lefèvre's algorithm. In the end, we obtain overall speedups of up to 53.4x on one GPU over a sequential CPU execution, and up to 7.1x over a multi-core CPU, which enables much faster solving of the Table Maker’s Dilemma for the double precision format.

💡 Research Summary

The IEEE 754-2008 standard recommends correctly rounded results for some elementary functions, a requirement that leads to the Table Maker’s Dilemma (TMD). The TMD arises when a function’s exact value lies extremely close to a rounding boundary: an approximation of ordinary accuracy cannot then decide the rounding direction, and the precision needed to decide is not known in advance. Lefèvre’s algorithm is a well-known method for solving the TMD: it searches for “hard-to-round” arguments by exploiting continued-fraction representations of the approximation error, thereby providing rigorous bounds on the error and identifying intervals that may cause incorrect rounding. However, the original algorithm is essentially sequential; each candidate interval is examined one after another, leading to prohibitive CPU runtimes for double-precision libraries.
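To make "hard to round" concrete, here is a minimal Python sketch that scans a toy floating-point format exhaustively. It is not Lefèvre's algorithm, which exists precisely to avoid such a scan. The function names, the toy precision `p`, and the use of `math.exp` (whose double-precision result is treated as exact because `p` is far below 53 bits) are all assumptions made for illustration.

```python
from fractions import Fraction
import math


def midpoint_distance_ulps(y: Fraction, p: int) -> Fraction:
    """Distance, in ulps of a p-bit format, from y > 0 to the nearest
    round-to-nearest breakpoint (midpoint of two adjacent p-bit floats)."""
    # Locate the binade: find e with 2**e <= y < 2**(e + 1).
    e = y.numerator.bit_length() - y.denominator.bit_length()
    if Fraction(2) ** e > y:
        e -= 1
    ulp = Fraction(2) ** (e - p + 1)
    frac = (y / ulp) % 1  # position inside one ulp, in [0, 1)
    return abs(frac - Fraction(1, 2))


def hardest_to_round(f, p: int):
    """Scan every p-bit float x in [1, 2) and return (distance, x) for the
    argument whose image f(x) lies closest to a rounding breakpoint."""
    step = Fraction(1, 2 ** (p - 1))  # spacing of p-bit floats in [1, 2)
    best = None
    for k in range(2 ** (p - 1)):
        x = 1 + k * step
        # Assumption: the double result is accurate enough to stand in
        # for the exact value at this toy precision.
        y = Fraction(f(float(x)))
        d = midpoint_distance_ulps(y, p)
        if best is None or d < best[0]:
            best = (d, x)
    return best


if __name__ == "__main__":
    d, x = hardest_to_round(math.exp, 8)
    print(f"hardest argument: {float(x)}, distance: {float(d):.6f} ulp")
```

A distance near 0 means the exact value almost coincides with a breakpoint, so many extra bits are needed to round it correctly. The scan costs 2^(p-1) evaluations per binade, which is why an exhaustive approach is out of reach for double precision.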

This paper addresses the performance bottleneck by redesigning both the mathematical search and its implementation for modern graphics processing units (GPUs). First, the authors revisit the continued-fraction analysis, showing that the error bound tightens rapidly as the number of partial quotients in the expansion grows. By formalising an early-pruning rule (discard any candidate as soon as its lower bound exceeds the rounding threshold), the search space can be reduced substantially without sacrificing correctness. The derived pruning criteria are expressed as simple integer operations that are inexpensive to evaluate on a GPU.
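For readers unfamiliar with the vocabulary, the sketch below computes the partial quotients and convergents of a rational number with integer operations only. It illustrates the standard continued-fraction recurrences, not the authors' pruning rule; the function names and the sample value 415/93 are chosen here for illustration.

```python
from fractions import Fraction


def partial_quotients(x: Fraction) -> list[int]:
    """Continued-fraction expansion [a0; a1, a2, ...] of a rational x."""
    quotients = []
    num, den = x.numerator, x.denominator
    while den != 0:
        a, rem = divmod(num, den)  # one Euclidean division per quotient
        quotients.append(a)
        num, den = den, rem
    return quotients


def convergents(quotients: list[int]) -> list[Fraction]:
    """Convergents h_n/k_n, using h_n = a_n*h_{n-1} + h_{n-2} (same for k_n)."""
    h_prev, h = 1, quotients[0]
    k_prev, k = 0, 1
    result = [Fraction(h, k)]
    for a in quotients[1:]:
        h_prev, h = h, a * h + h_prev
        k_prev, k = k, a * k + k_prev
        result.append(Fraction(h, k))
    return result


if __name__ == "__main__":
    x = Fraction(415, 93)
    qs = partial_quotients(x)   # [4, 2, 6, 7]
    for c in convergents(qs):   # 4, 9/2, 58/13, 415/93
        print(c, "error:", abs(x - c))
```

Each additional partial quotient yields a convergent whose error is at most 1/(k_n k_{n+1}), where k_n and k_{n+1} are consecutive convergent denominators. This is the sense in which bounds tighten as the expansion is carried further.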

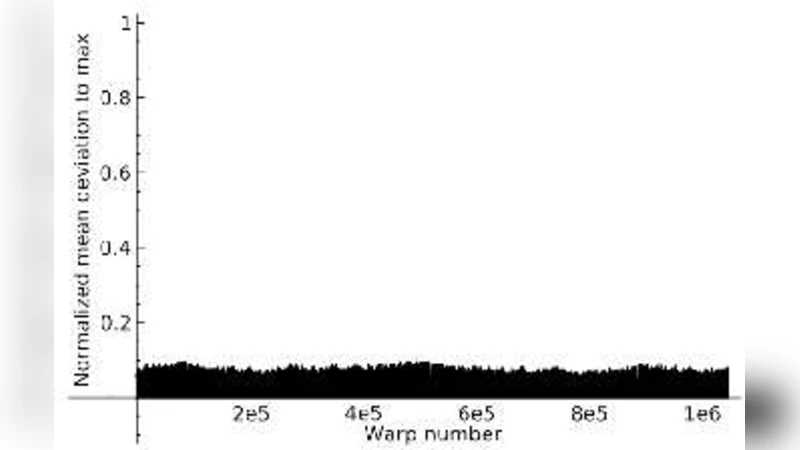

Second, the authors propose a GPU‑friendly parallel search algorithm that respects the SIMD nature of GPU execution. The search space is organized as a tree; each level of the tree corresponds to a refinement step in the continued‑fraction expansion. Whole warps (32 threads) are assigned to process a batch of candidate intervals at the same tree depth, guaranteeing that all threads in a warp follow the same control flow. This regular control flow eliminates branch divergence, while shared memory is used to cache the pre‑computed continued‑fraction coefficients, reducing global‑memory traffic. The algorithm also employs dynamic batch sizing and stream‑based asynchronous data transfers to hide the latency of moving polynomial coefficients from the CPU to the GPU.
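A toy cost model helps show why regular control flow matters on SIMD hardware. The sketch below assumes that the lanes of a warp run in lock-step, so a warp is busy for as long as its slowest lane. The comparison between a subtraction-based and a division-based Euclidean loop is an illustrative assumption; the summary does not state which formulation the authors' kernel uses, and all names here are hypothetical.

```python
from fractions import Fraction


def subtractive_steps(a: int, b: int) -> int:
    """Count subtractions in a subtraction-only Euclidean loop (a, b > 0)."""
    steps = 0
    while b != 0:
        if a >= b:
            a -= b
            steps += 1
        else:
            a, b = b, a
    return steps


def division_steps(a: int, b: int) -> int:
    """Count divisions in the division-based Euclidean loop."""
    steps = 0
    while b != 0:
        a, b = b, a % b
        steps += 1
    return steps


def simd_efficiency(step_counts: list[int], warp_size: int = 32) -> Fraction:
    """Useful lane-steps divided by issued lane-steps, assuming each warp
    runs for as many steps as its slowest lane (toy lock-step model)."""
    useful = sum(step_counts)
    issued = 0
    for i in range(0, len(step_counts), warp_size):
        warp = step_counts[i:i + warp_size]
        issued += max(warp) * len(warp)
    return Fraction(useful, issued) if issued else Fraction(1)


if __name__ == "__main__":
    pairs = [(415, 93), (89, 55), (1000, 1), (64, 63)]
    sub = [subtractive_steps(a, b) for a, b in pairs]
    div = [division_steps(a, b) for a, b in pairs]
    print("subtractive:", sub, "efficiency", float(simd_efficiency(sub, 4)))
    print("division:   ", div, "efficiency", float(simd_efficiency(div, 4)))
```

In this model the trip count of the subtractive loop is the sum of the partial quotients, which can vary wildly between lanes, whereas the division-based loop takes one step per partial quotient. A single large quotient in one lane therefore stalls the whole warp far longer in the subtractive form.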

A hybrid CPU‑GPU deployment is described. The generation of high‑degree polynomial approximations (the “pre‑computation” phase) remains on the CPU, where multi‑core parallelism and existing arbitrary‑precision libraries are most effective. Once the coefficients are ready, they are transferred to the GPU, which then performs the hard‑to‑round search in parallel. The authors carefully balance the workload to avoid GPU under‑utilisation: when the number of remaining candidates is small, the CPU resumes the search, while the GPU handles the bulk of the work.
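The hand-over between the two devices can be pictured with a small scheduling sketch. It assumes a fixed GPU batch size and a fixed minimum batch below which the GPU is considered under-utilised; both thresholds and the function name `plan_dispatch` are hypothetical, and the summary does not describe the authors' actual balancing policy in this detail.

```python
def plan_dispatch(n_candidates: int, gpu_batch: int, min_gpu_batch: int):
    """Split a candidate count into (device, count) work items.

    Full or sufficiently large batches go to the GPU; a small tail that
    would under-utilise the GPU is left to the CPU (assumed policy).
    """
    if gpu_batch < 1 or min_gpu_batch < 1:
        raise ValueError("batch sizes must be positive")
    plan = []
    remaining = n_candidates
    while remaining >= min_gpu_batch:
        take = min(gpu_batch, remaining)
        plan.append(("gpu", take))
        remaining -= take
    if remaining > 0:
        plan.append(("cpu", remaining))
    return plan


if __name__ == "__main__":
    # Two full GPU batches, then a tail of 808 candidates for the CPU.
    print(plan_dispatch(9000, gpu_batch=4096, min_gpu_batch=1024))
```

In a real deployment the thresholds would be tuned by measurement, since the break-even point depends on kernel launch cost and transfer latency.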

Experimental evaluation targets double‑precision implementations of exp, log, sin, and cos on an NVIDIA Tesla V100 GPU versus a sequential implementation on a single Intel Xeon core and a 16‑core Xeon server. The GPU‑accelerated search achieves speed‑ups of up to 53.4× over the single‑core baseline and up to 7.1× over the multi‑core baseline. For functions where hard‑to‑round intervals are sparse, the pruning mechanism reduces the number of examined candidates by more than an order of magnitude, allowing the GPU’s massive parallelism to be fully exploited. The end‑to‑end pipeline (CPU polynomial generation + GPU search) consistently delivers more than 45× overall acceleration while keeping memory usage and PCIe transfer overhead within practical limits.

The paper concludes that GPU acceleration, combined with a mathematically rigorous pruning strategy, makes the solution of the Table Maker’s Dilemma feasible for production‑grade double‑precision libraries. This opens the door to faster, correctly‑rounded implementations of elementary functions in scientific computing, financial modelling, and any domain where numerical reproducibility is critical. Future work is suggested on extending the approach to higher‑precision formats (quadruple precision), adapting it to alternative GPU architectures (AMD, Intel), and integrating the entire workflow into automated library generation tools.