Learning a Factor Model via Regularized PCA

We consider the problem of learning a linear factor model. We propose a regularized form of principal component analysis (PCA) and demonstrate, through experiments with synthetic and real data, that the resulting estimates are superior to those produced by existing factor analysis approaches. We also establish theoretical results that explain how our algorithm corrects the biases induced by conventional approaches. An important feature of our algorithm is that its computational requirements are similar to those of PCA, which enjoys wide use in large part due to its efficiency.

💡 Research Summary

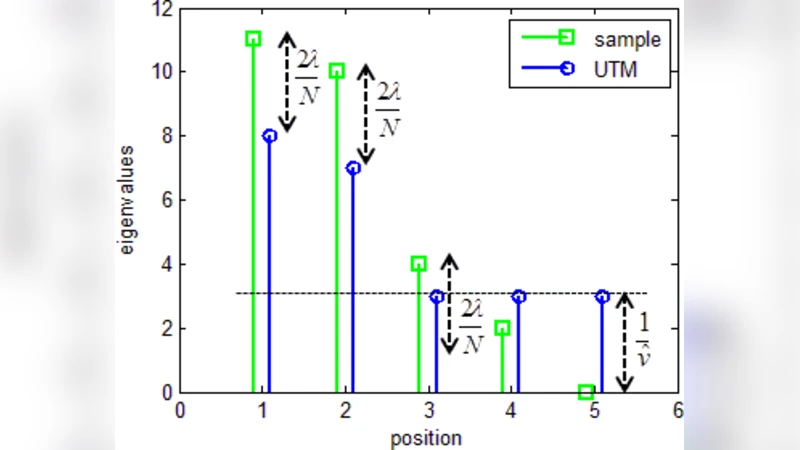

The paper addresses the problem of learning a linear factor model from observed data, where each observation vector x ∈ ℝ^M is generated as x = F^{1/2} z + w, with z ∈ ℝ^K a standard normal factor vector and w ∈ ℝ^M a residual noise vector. The population covariance is Σ* = F* + R*, where F* is low‑rank (rank K ≪ M) and R* is diagonal. The authors aim to estimate a covariance matrix Σ = F + R that maximizes the expected out‑of‑sample log‑likelihood L(Σ, Σ*) = E_{x∼N(0,Σ*)}
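The setup described above can be illustrated with a minimal NumPy sketch. It is not the paper's regularized PCA algorithm. It only samples data from a factor model with covariance Σ* = F* + R* and scores a candidate covariance against it. Several things here are assumptions rather than details from the paper: the sizes `M`, `K`, `N`; the loading matrix `B` (with F* = B Bᵀ standing in for the F^{1/2} term); the helper name `expected_gaussian_loglik`; and the reading of the criterion as the expectation of the zero-mean Gaussian log-density under Σ, for which the standard closed form is −½ (M log 2π + log det Σ + tr(Σ⁻¹ Σ*)).

```python
import numpy as np


def expected_gaussian_loglik(sigma, sigma_star):
    """Closed form of E_{x ~ N(0, sigma_star)}[log N(x; 0, sigma)].

    Hypothetical helper: assumes the evaluation criterion is the expected
    log-density of a zero-mean Gaussian with covariance `sigma`.
    """
    m = sigma.shape[0]
    sign, logdet = np.linalg.slogdet(sigma)
    if sign <= 0:
        raise ValueError("sigma must be positive definite")
    # tr(sigma^{-1} sigma_star), computed without forming an explicit inverse
    trace_term = np.trace(np.linalg.solve(sigma, sigma_star))
    return -0.5 * (m * np.log(2.0 * np.pi) + logdet + trace_term)


rng = np.random.default_rng(0)
M, K, N = 20, 3, 200  # illustrative sizes (assumed), with K much smaller than M

# Assumed parameterization: F* = B B^T has rank K, R* is diagonal.
B = rng.normal(size=(M, K))
F_star = B @ B.T
R_star = np.diag(rng.uniform(0.5, 1.5, size=M))
Sigma_star = F_star + R_star

# Draw N observations x = B z + w with z ~ N(0, I_K) and w ~ N(0, R*).
z = rng.normal(size=(N, K))
w = rng.normal(size=(N, M)) * np.sqrt(np.diag(R_star))
X = z @ B.T + w

# Baseline estimate: the (zero-mean) sample covariance.
Sigma_sample = X.T @ X / N

score_true = expected_gaussian_loglik(Sigma_star, Sigma_star)
score_sample = expected_gaussian_loglik(Sigma_sample, Sigma_star)
print(f"true covariance:   {score_true:.3f}")
print(f"sample covariance: {score_sample:.3f}")
```

Under this reading, the true covariance Σ* attains the highest possible score, so the gap between the two printed values measures how much an estimate loses out of sample. Any structured estimator of the form F + R could be scored the same way.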
