The geometry of nonlinear least squares with applications to sloppy models and optimization

Parameter estimation by nonlinear least squares minimization is a common problem with an elegant geometric interpretation: the possible parameter values of a model induce a manifold in the space of data predictions. The minimization problem is then to find the point on the manifold closest to the data. We show that the model manifolds of a large class of models, known as sloppy models, have many universal features; they are characterized by a geometric series of widths, extrinsic curvatures, and parameter-effects curvatures. A number of common difficulties in optimizing least squares problems are due to this common structure. First, algorithms tend to run into the boundaries of the model manifold, causing parameters to diverge or become unphysical. We introduce the model graph as an extension of the model manifold to remedy this problem. We argue that appropriate priors can remove the boundaries and improve convergence rates. We show that typical fits will have many evaporated parameters. Second, bare model parameters are usually ill-suited to describing model behavior; cost contours in parameter space tend to form hierarchies of plateaus and canyons. Geometrically, we understand this inconvenient parametrization as an extremely skewed coordinate basis and show that it induces a large parameter-effects curvature on the manifold. Using coordinates based on geodesic motion, these narrow canyons are transformed in many cases into a single quadratic, isotropic basin. We interpret the modified Gauss-Newton and Levenberg-Marquardt fitting algorithms as an Euler approximation to geodesic motion in these natural coordinates on the model manifold and the model graph respectively. By adding a geodesic acceleration adjustment to these algorithms, we alleviate the difficulties from parameter-effects curvature, improving both efficiency and success rates at finding good fits.

💡 Research Summary

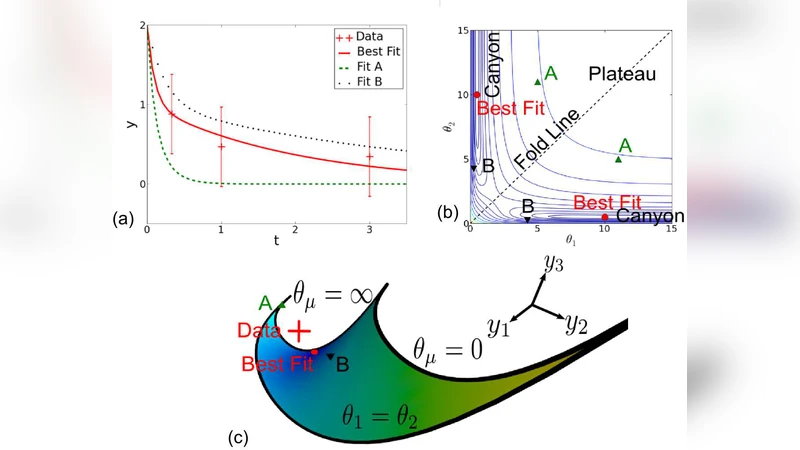

The paper reframes the ubiquitous problem of nonlinear least-squares fitting in a purely geometric language. A model with parameters θ maps each point in parameter space to a prediction vector y(θ) in the space of observable data. The collection of all such predictions forms a low-dimensional surface – the model manifold – embedded in the high-dimensional data space. The least-squares objective, the sum of squared residuals at θ, is simply the squared Euclidean distance between the data point d and the prediction y(θ), so minimizing it is equivalent to finding the point on the manifold closest to d.
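As a minimal sketch of this picture, the snippet below evaluates predictions and the least-squares cost for a toy model. The sum-of-exponentials model, the sample times `t`, and the names `predictions` and `cost` are illustrative assumptions, not taken from the summary.

```python
import numpy as np

def predictions(theta, t):
    """Hypothetical toy model: y_m(theta) = sum_k exp(-theta_k * t_m).

    Each theta gives one point y(theta) in data space; sweeping theta
    traces out the model manifold.
    """
    return np.exp(-np.outer(t, theta)).sum(axis=1)

def cost(theta, t, d):
    """Sum of squared residuals = squared Euclidean distance |y(theta) - d|^2."""
    r = predictions(theta, t) - d
    return float(r @ r)

t = np.linspace(0.1, 5.0, 50)          # assumed sampling times
theta_true = np.array([1.0, 2.0])      # assumed "true" decay rates
d = predictions(theta_true, t)         # noiseless synthetic data lies on the manifold

cost_at_truth = cost(theta_true, t, d)                 # distance from d to itself
cost_elsewhere = cost(np.array([0.5, 3.0]), t, d)      # another point on the manifold
```

Because the synthetic data point here sits exactly on the manifold, the closest point is `y(theta_true)` itself; with noisy data the minimum cost would be the squared distance from `d` to the manifold.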

The authors focus on a broad class of “sloppy” models, which arise in many areas of physics, biology, and engineering. In sloppy models the Fisher information matrix has eigenvalues that span many orders of magnitude, typically forming a geometric progression. Consequently the manifold looks like a hyper-ribbon rather than a round blob: its widths form a geometric series, so it is relatively wide along a few stiff directions and extremely narrow along the many sloppy ones. This universal geometry explains several persistent difficulties encountered by standard optimization algorithms.
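The eigenvalue spread can be checked numerically on a small example. The sketch below assumes a hypothetical sum-of-exponentials model with unit measurement errors, so that the Fisher information matrix reduces to JᵀJ; the decay rates and sample times are arbitrary illustrative choices.

```python
import numpy as np

def jacobian(theta, t):
    """Jacobian of the toy model y_m = sum_k exp(-theta_k * t_m).

    J[m, k] = d y_m / d theta_k = -t_m * exp(-theta_k * t_m)
    """
    return -t[:, None] * np.exp(-np.outer(t, theta))

t = np.linspace(0.1, 5.0, 50)              # assumed sampling times
theta = np.array([1.0, 2.0, 3.0, 4.0])     # assumed decay rates

J = jacobian(theta, t)

# Eigenvalues of J^T J are the squared singular values of J.
# Going through the SVD avoids the precision loss of forming J^T J explicitly.
singular_values = np.linalg.svd(J, compute_uv=False)
fim_eigenvalues = singular_values ** 2     # sorted from stiffest to sloppiest

decades_spanned = np.log10(fim_eigenvalues[0] / fim_eigenvalues[-1])
print("FIM eigenvalues:", fim_eigenvalues)
print("orders of magnitude spanned:", decades_spanned)
```

Even with only four parameters the eigenvalues are spread over several decades; adding more exponentials widens the spread further.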

First, conventional algorithms (gradient descent, Gauss‑Newton, Levenberg‑Marquardt) often drive the parameters toward the manifold’s boundaries. Because the manifold becomes arbitrarily thin in sloppy directions, a small step can push the solution to a region where one or more parameters diverge to infinity or assume unphysical values – the phenomenon the authors call “evaporated parameters.” To eliminate these artificial boundaries the paper introduces the model graph, an embedding of the manifold into a higher‑dimensional space that includes both parameters and predictions, (θ, y). In this extended space the manifold no longer terminates, and the distance measure can be defined so that the algorithm never leaves the physically admissible region. Moreover, by adding sensible Bayesian priors on the parameters, the graph can be regularized further, preventing divergence and improving convergence rates.
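One way to make the model graph concrete, assumed here rather than spelled out in the summary, is to embed each parameter vector as the pair (y(θ), √λ·θ). The metric induced by this embedding is JᵀJ + λI, the damped matrix that appears in a Levenberg-Marquardt step. The sketch below uses a hypothetical sum-of-exponentials model and an arbitrary damping value `lam` to show that the graph metric is bounded below by λ even where the manifold metric is nearly singular.

```python
import numpy as np

def jacobian(theta, t):
    """Jacobian of the toy model y_m = sum_k exp(-theta_k * t_m)."""
    return -t[:, None] * np.exp(-np.outer(t, theta))

def graph_jacobian(theta, t, lam):
    """Jacobian of the assumed graph embedding theta -> (y(theta), sqrt(lam) * theta)."""
    J = jacobian(theta, t)
    return np.vstack([J, np.sqrt(lam) * np.eye(theta.size)])

t = np.linspace(0.1, 5.0, 50)              # assumed sampling times
theta = np.array([1.0, 2.0, 3.0, 4.0])     # assumed decay rates
lam = 1e-3                                 # assumed damping strength

J = jacobian(theta, t)
Jg = graph_jacobian(theta, t, lam)

g_manifold = J.T @ J        # metric on the model manifold
g_graph = Jg.T @ Jg         # metric on the model graph: J^T J + lam * I

smallest_manifold = np.linalg.eigvalsh(g_manifold).min()
smallest_graph = np.linalg.eigvalsh(g_graph).min()
```

A Gaussian prior centered at θ₀ with width σ can be sketched the same way, by appending the extra residuals (θ − θ₀)/σ to the residual vector; this is a common construction and not necessarily the specific prior the authors advocate.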

Second, the raw parameter coordinates are usually a poor choice for describing the model’s behavior. In parameter space the cost function exhibits a hierarchy of plateaus and deep, narrow canyons. Geometrically this reflects a large parameter-effects curvature: the coordinate basis is highly skewed relative to the intrinsic geometry of the manifold. The authors propose to replace the original coordinates with geodesic coordinates, obtained by following geodesics (the manifold’s analogue of straight lines) outward from a point. In geodesic coordinates the cost landscape is dramatically simplified: in many cases the narrow canyons become a single, nearly isotropic quadratic basin. This transformation makes the problem amenable to standard second-order methods.

The paper then reinterprets the modified Gauss‑Newton and Levenberg‑Marquardt algorithms as Euler‑step approximations to geodesic motion on the manifold (or on the model graph). By augmenting these steps with a second‑order “geodesic acceleration” term, the algorithm explicitly accounts for curvature, effectively straightening the trajectory and allowing larger, more reliable steps. Numerical experiments on a variety of sloppy systems – biochemical signaling networks, electronic circuit models, and statistical‑physics models – demonstrate that the geodesic‑accelerated methods converge 2–5 times faster and succeed in cases where traditional algorithms stall or diverge.
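A rough sketch of such a step follows, under several assumptions that the summary does not confirm: the toy sum-of-exponentials model, a finite-difference estimate of the second directional derivative with step `h`, the acceptance test 2|a| ≤ α|v|, and the damping update factors. The velocity `v` is the usual Levenberg-Marquardt step, the acceleration `a` is the second-order correction, and the proposed update is v + a/2.

```python
import numpy as np

def residuals(theta, t, d):
    """Residuals of the hypothetical model y_m = sum_k exp(-theta_k * t_m)."""
    return np.exp(-np.outer(t, theta)).sum(axis=1) - d

def jacobian(theta, t):
    return -t[:, None] * np.exp(-np.outer(t, theta))

def geodesic_lm_fit(theta0, t, d, lam=1.0, h=0.1, alpha=0.75, max_iter=100):
    """Levenberg-Marquardt with a geodesic acceleration term (illustrative sketch)."""
    theta = np.asarray(theta0, dtype=float)
    r = residuals(theta, t, d)
    for _ in range(max_iter):
        J = jacobian(theta, t)
        g = J.T @ J + lam * np.eye(theta.size)   # damped metric
        v = -np.linalg.solve(g, J.T @ r)         # first-order (Euler) step
        v_norm = np.linalg.norm(v)
        if v_norm < 1e-12:                       # step is negligible: stop
            break
        # Second directional derivative of r along v, from
        # r(theta + h v) ~ r + h J v + (h^2 / 2) r_vv.
        r_vv = 2.0 / h * ((residuals(theta + h * v, t, d) - r) / h - J @ v)
        a = -np.linalg.solve(g, J.T @ r_vv)      # geodesic acceleration
        trial = theta + v + 0.5 * a
        r_trial = residuals(trial, t, d)
        # Assumed acceptance rule: acceleration small relative to velocity
        # and the cost must decrease.
        small_accel = 2.0 * np.linalg.norm(a) <= alpha * v_norm
        if small_accel and r_trial @ r_trial < r @ r:
            theta, r = trial, r_trial
            lam /= 3.0                           # trust the model more
        else:
            lam *= 5.0                           # damp more strongly
    return theta, float(r @ r)

t = np.linspace(0.1, 5.0, 50)
d = residuals(np.array([1.0, 2.0]), t, np.zeros_like(t))   # noiseless synthetic data
theta_start = np.array([0.5, 3.0])
initial_cost = float(residuals(theta_start, t, d) @ residuals(theta_start, t, d))
theta_fit, final_cost = geodesic_lm_fit(theta_start, t, d)
```

The acceleration costs one extra residual evaluation per iteration and no extra Jacobian, which is why it is cheap relative to a full second-order method.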

In summary, the authors identify two universal geometric sources of difficulty in nonlinear least‑squares fitting of sloppy models: (1) the presence of manifold boundaries that cause parameter blow‑up, and (2) an ill‑conditioned parameter coordinate system that creates extreme curvature in the cost surface. By extending the manifold to a model graph, imposing appropriate priors, and adopting geodesic‑based coordinates with acceleration corrections, they provide a coherent theoretical framework and practical algorithms that dramatically improve robustness and efficiency. The work opens the door to applying these geometric insights to high‑dimensional data analysis, machine‑learning hyper‑parameter tuning, and real‑time control where sloppy models are common.
