Dynamical and Statistical Criticality in a Model of Neural Tissue

For the nervous system to work at all, a delicate balance of excitation and inhibition must be achieved. However, when such a balance is sought by global strategies, only a few modes remain balanced close to instability, and all other modes are strongly stable. Here we present a simple model of neural tissue in which this balance is sought locally by neurons following “anti-Hebbian” behavior: *all* degrees of freedom achieve a close balance of excitation and inhibition and become “critical” in the dynamical sense. At long timescales, the modes of our model oscillate around the instability line, so an extremely complex “breakout” dynamics ensues in which different modes of the system oscillate between prominence and extinction. We show that the system develops various anomalous statistical behaviours and hence becomes self-organized critical in the statistical sense.

💡 Research Summary

The paper addresses a fundamental problem in neuroscience: how neural tissue maintains a delicate balance between excitation and inhibition while remaining poised near a critical point that maximizes computational capabilities. Traditional approaches enforce this balance globally, which typically leaves only a few eigenmodes close to instability while the rest become overly damped. The authors propose a minimalist model in which each neuron locally adjusts its synaptic weights according to an “anti‑Hebbian” rule: synaptic efficacy decreases when the activities of the two connected neurons are positively correlated and increases when they are anticorrelated. Mathematically, the weight dynamics follow $dw_{ij}/dt = -\eta\, x_i x_j$, where $x_i$ is the activity of neuron $i$ and $\eta$ is a (positive) learning rate.
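To make the sign convention of the rule concrete, here is a minimal NumPy sketch of a single forward-Euler step of $dw_{ij}/dt = -\eta\, x_i x_j$. The function name `anti_hebbian_step` and the step size `dt` are hypothetical illustration choices, and the activity dynamics that would generate $x$ are deliberately left out; this is not the authors' code.

```python
import numpy as np


def anti_hebbian_step(W: np.ndarray, x: np.ndarray, eta: float, dt: float) -> np.ndarray:
    """One forward-Euler step of dw_ij/dt = -eta * x_i * x_j.

    W   : (N, N) synaptic weight matrix
    x   : (N,) current activity vector
    eta : learning rate
    dt  : integration step (hypothetical discretization choice)
    """
    # np.outer(x, x)[i, j] == x_i * x_j, so every weight is updated at once.
    return W - eta * dt * np.outer(x, x)


# Usage: two neurons with opposite-sign activity.
W = np.zeros((2, 2))
x = np.array([1.0, -2.0])
W_new = anti_hebbian_step(W, x, eta=0.5, dt=0.1)
print(W_new)
# Diagonal entries (x_i * x_i > 0) are weakened;
# the off-diagonal entries (x_1 * x_2 < 0) are strengthened.
```

Because $x x^\top$ is positive semidefinite, each step taken alone can only lower $v^\top W v$ for every vector $v$; an individual off-diagonal weight grows only when the two activities have opposite signs.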

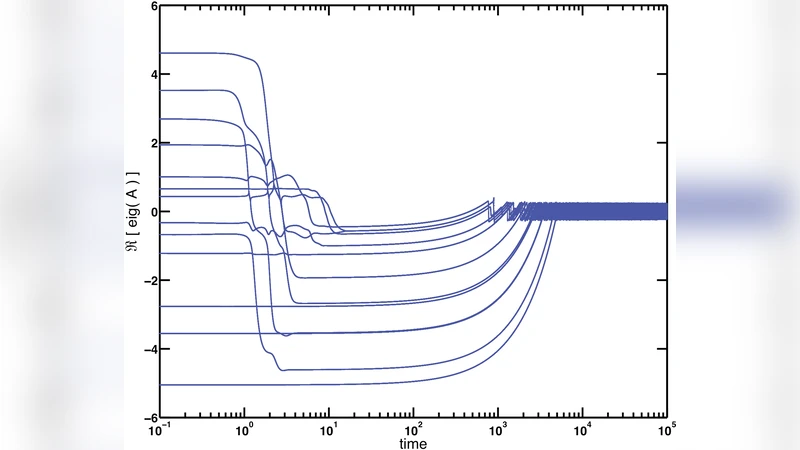

Because the rule is applied at the level of individual connections, the Jacobian of the network’s linearized dynamics is continuously reshaped. The eigenvalues of this Jacobian are driven toward the imaginary axis, where a mode neither grows nor decays (its real part, the growth rate, is zero), so every degree of freedom hovers near the instability line. In other words, the whole system becomes “dynamically critical” without any external fine‑tuning of global parameters.
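One simple way to quantify the claim that every mode sits near the instability line is to compute the eigenvalues of the Jacobian $J$ and inspect their real parts, which are the modal growth rates. The sketch below assumes linearized dynamics $dx/dt = Jx$; `criticality_report` and the tolerance `tol` are hypothetical names and choices, not taken from the paper.

```python
import numpy as np


def criticality_report(J: np.ndarray, tol: float = 0.05) -> dict:
    """Summarize how close the modes of dx/dt = J x are to instability.

    A mode with eigenvalue lam evolves like exp(lam * t), so Re(lam) is its
    growth rate and Re(lam) = 0 (the imaginary axis) separates decaying from
    growing modes. `tol` is a hypothetical cutoff for "near critical".
    """
    eigvals = np.linalg.eigvals(J)
    growth_rates = eigvals.real
    return {
        "max_growth_rate": float(growth_rates.max()),
        "fraction_near_critical": float(np.mean(np.abs(growth_rates) < tol)),
        "eigenvalues": eigvals,
    }


# A pure rotation: both eigenvalues (+i and -i) lie on the imaginary axis.
rotation = np.array([[0.0, 1.0], [-1.0, 0.0]])
# A strongly damped mode (-1) next to a weakly damped one (-0.01).
mixed = np.diag([-1.0, -0.01])

print(criticality_report(rotation)["fraction_near_critical"])  # 1.0
print(criticality_report(mixed)["fraction_near_critical"])     # 0.5
```

Under a global balancing strategy one would expect `fraction_near_critical` to be small, with only a few eigenvalues close to the axis; the summary's claim corresponds to this fraction approaching one.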

On longer timescales the model exhibits a striking “breakout” dynamics. Individual modes periodically dominate the activity, grow rapidly, and then are suppressed by the anti‑Hebbian feedback, allowing other modes to emerge. This cyclic dominance creates an oscillation of the system’s effective growth rates around the critical line, producing a rich tapestry of transient states rather than a static fixed point. Numerical simulations and analytical approximations confirm that the growth‑rate spectrum continuously sweeps across positive and negative values, generating a perpetual flux of prominence and extinction among modes.
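The “breakout” picture, in which different modes take turns dominating the activity, can be monitored by projecting the activity onto the eigenvectors of the Jacobian and recording which mode has the largest amplitude at each time step. This is a sketch under stated assumptions: $J$ is taken to be diagonalizable and fixed over the analysed window (in the model it keeps evolving, so one would recompute it periodically), and counting changes of the dominant mode is only a crude, hypothetical proxy for breakout events. The function name `dominant_modes` is illustrative.

```python
import numpy as np


def dominant_modes(J: np.ndarray, X: np.ndarray):
    """Track which eigenmode of J carries the most activity at each time step.

    J : (N, N) Jacobian / connectivity matrix (assumed diagonalizable)
    X : (T, N) activity trajectory, one row per time step
    Returns the eigenvalue of the dominant mode at each step and the number
    of times the dominant mode changes.
    """
    eigvals, V = np.linalg.eig(J)           # columns of V are unit eigenvectors
    # Modal coordinates c solve V c = x for every row x of X (hence the transposes).
    C = np.linalg.solve(V, X.T).T            # shape (T, N), complex in general
    winners = np.argmax(np.abs(C), axis=1)   # largest-amplitude mode per step
    switches = int(np.count_nonzero(winners[1:] != winners[:-1]))
    return eigvals[winners], switches


# Toy example: three decoupled modes; activity hands off from mode to mode.
J = np.diag([-1.0, -2.0, -3.0])
X = np.array([
    [5.0, 1.0, 0.0],
    [1.0, 5.0, 0.0],
    [0.0, 1.0, 5.0],
    [0.0, 1.0, 4.0],
])
dominant, switches = dominant_modes(J, X)
print(dominant, switches)
```

Returning the dominant eigenvalue rather than a raw index keeps the result independent of the order in which `np.linalg.eig` happens to list the modes.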

Statistically, the network’s output displays hallmark signatures of self‑organized criticality. Power spectra follow a 1/f^α law, and the distribution of event sizes (e.g., activity avalanches) obeys a Pareto‑type heavy‑tailed distribution. These features arise from the nonlinear coupling of modes that is amplified by the anti‑Hebbian rule, yielding long‑range temporal correlations and scale‑free fluctuations reminiscent of empirical brain recordings.
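Both statistical signatures can be estimated from a simulated or recorded activity trace. The sketch below uses three generic, standard estimators, not necessarily those used by the authors: a least-squares fit of the periodogram in log-log space for the spectral exponent α, contiguous supra-threshold excursions as “avalanches”, and the continuous maximum-likelihood estimator $\hat\tau = 1 + n / \sum_i \ln(s_i/s_{\min})$ for a tail $p(s) \propto s^{-\tau}$. The function names, the threshold, `f_max`, and `s_min` are hypothetical analysis choices, and the checks run on synthetic data with known answers, not on model output.

```python
import numpy as np


def spectral_exponent(signal, dt=1.0, f_max=0.1):
    """Estimate alpha in S(f) ~ 1/f**alpha from a least-squares fit of the
    raw periodogram in log-log space, using only 0 < f <= f_max."""
    x = np.asarray(signal, dtype=float)
    x = x - x.mean()                                  # remove the DC component
    power = np.abs(np.fft.rfft(x)) ** 2               # raw (unnormalized) periodogram
    freqs = np.fft.rfftfreq(x.size, d=dt)
    keep = (freqs > 0) & (freqs <= f_max)
    slope, _ = np.polyfit(np.log(freqs[keep]), np.log(power[keep]), 1)
    return -slope


def avalanche_sizes(activity, threshold):
    """Size (summed activity) of each contiguous run of samples above threshold."""
    a = np.asarray(activity, dtype=float)
    above = a > threshold
    # Pad with False on both sides so every run has a detectable start and end.
    edges = np.diff(np.concatenate(([False], above, [False])).astype(int))
    starts = np.flatnonzero(edges == 1)               # first index of each run
    ends = np.flatnonzero(edges == -1)                # one past the last index
    return np.array([a[s:e].sum() for s, e in zip(starts, ends)])


def tail_exponent_mle(sizes, s_min):
    """Continuous MLE of tau for p(s) ~ s**(-tau), using only s >= s_min."""
    s = np.asarray(sizes, dtype=float)
    s = s[s >= s_min]
    return 1.0 + s.size / np.sum(np.log(s / s_min))


# Sanity checks on synthetic data with known answers.
rng = np.random.default_rng(0)
white = rng.standard_normal(2**14)                    # flat spectrum: alpha near 0
walk = np.cumsum(white)                               # random walk: alpha near 2
print(spectral_exponent(white), spectral_exponent(walk))

print(avalanche_sizes([0, 2, 3, 0, 0, 5, 0, 1, 1], threshold=0.5))

pareto = 1.0 + rng.pareto(1.5, size=100_000)          # classical Pareto, tau = 2.5
print(tail_exponent_mle(pareto, s_min=1.0))
```

Raw periodogram fits are noisy and sensitive to the chosen frequency range, so averaged spectral estimates and explicit goodness-of-fit tests for the power-law tail are common refinements.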

The authors also examine how the behavior depends on the anti‑Hebbian learning rate η. If η is too large, the system becomes over‑inhibited and all modes settle into a strongly stable regime; if η is too small, the balance fails and some modes diverge. Within an intermediate range, the network self‑organizes to a state where all modes remain marginally stable, and both dynamical and statistical criticality are sustained indefinitely.

In summary, the study demonstrates three key results: (1) local anti‑Hebbian plasticity automatically enforces a global excitation‑inhibition balance; (2) this balance drives every mode to the brink of instability, producing a complex, oscillatory breakout dynamics; and (3) the long‑term behavior exhibits classic signatures of statistical criticality such as 1/f noise and power‑law avalanche statistics. The work provides a unified framework for understanding how biological neural circuits can simultaneously achieve high computational efficiency, flexibility, and robustness, and it suggests new design principles for artificial networks and for modeling pathological states where this balance is disrupted.
