Harmonic Analysis of Boolean Networks: Determinative Power and Perturbations

Consider a large Boolean network with a feed forward structure. Given a probability distribution on the inputs, can one find, possibly small, collections of input nodes that determine the states of most other nodes in the network? To answer this ques…

Authors: Reinhard Heckel, Steffen Schober, Martin Bossert

Reinhard Heckel∗, Steffen Schober† and Martin Bossert†

Abstract

Consider a large Boolean network with a feed forward structure. Given a probability distribution on the inputs, can one find, possibly small, collections of input nodes that determine the states of most other nodes in the network? To answer this question, a notion that quantifies the determinative power of an input over the states of the nodes in the network is needed. We argue that the mutual information (MI) between a given subset of the inputs $\mathbf{X} = \{X_1, ..., X_n\}$ of some node $i$ and its associated function $f_i(\mathbf{X})$ quantifies the determinative power of this set of inputs over node $i$. We compare the determinative power of a set of inputs to the sensitivity to perturbations to these inputs, and find that, maybe surprisingly, an input that has large sensitivity to perturbations does not necessarily have large determinative power. However, for unate functions, which play an important role in genetic regulatory networks, we find a direct relation between MI and sensitivity to perturbations. As an application of our results, we analyze the large-scale regulatory network of Escherichia coli. We identify the most determinative nodes and show that a small subset of those reduces the overall uncertainty of the network state significantly. Furthermore, the network is found to be tolerant to perturbations of its inputs.

1 Introduction

A Boolean network (BN) is a discrete dynamical system, which is, for example, used to study and model a variety of biochemical networks such as genetic regulatory networks. BNs were introduced in the late 1960s by Kauffman [1, 2], who proposed to study random BNs as models of gene regulatory networks. Kauffman investigated their dynamical behavior and a phenomenon called self-organization.
Aside from their original purpose, BNs were also used to model (small-scale) genetic regulatory networks; for example, in [3, 4, 5], it was demonstrated that BNs are capable of reproducing the underlying biological processes (i.e., the cell cycle) well. BNs are also used to model large-scale networks, such as the Escherichia coli regulatory network [6], which is analyzed in section 6. This network is, in contrast to Kauffman's automata and the regulatory networks considered in [3, 4, 5], not an autonomous system, since the genes' states are determined by external factors.

In the literature addressing the analysis of BNs, it is common to consider measures that quantify the effect of perturbations. Whether a random BN operates in the so-called ordered or disordered regime is determined by whether a single perturbation, i.e., flipping the state of a node, is expected to spread or die out eventually. Kauffman [2] argues that biological networks must operate at the border of the ordered and disordered regime; hence, they must be tolerant to perturbations to some extent.

∗ Department of Information Technology and Electrical Engineering, ETH Zurich
† Institute of Telecommunications and Applied Information Theory, University of Ulm

In contrast to measures of perturbations, determinative power in BNs has not received much attention, even though there are several settings where such a notion is of interest. For example, given a feed forward network where the states of the nodes are controlled by the states of nodes in the input layer, we might ask whether a possibly small set of inputs suffices to determine most states, i.e., reduces the uncertainty about the network's states significantly. This can be addressed by quantifying the determinative power of the input nodes. For example, in the E.
coli regulatory network, it turns out that a small set of metabolites and other inputs determine most genes that account for E. coli's metabolism (see section 6).

In this paper, we view the state of each node in the network as an independent random variable. This modeling assumption applies for networks with a tree-like topology, e.g., a feed forward network, and is often applied when studying the effect of perturbations. For this setting, determinative power of nodes and perturbation-related measures are properties of single functions; hence, the analysis of the BN reduces to the analysis of single functions.

Our main tool for the theoretical results is Fourier analysis of Boolean functions. Fourier analytic techniques were first applied to BNs by Kesseli et al. [7, 8]. In [7, 8], results related to Derrida plots and convergence of trajectories in random BNs were derived. Ribeiro et al. [9] considered the pairwise mutual information in time series of random BNs, under a different setup than the one we use. Specifically, in [9], the functions are random; whereas here, the functions are deterministic, but the argument is random. Finally, note that part of this paper was presented at the 2012 International Workshop on Computational Systems Biology [10].

1.1 Contributions

Mutual information between a set of inputs to a node and the state of this node is a measure of the determinative power of this set of inputs, as mutual information quantifies mutual dependence of random variables. In order to understand the determinative power and mutual dependencies in Boolean networks, we systematically study the mutual information of sets of inputs and the state of a node. We relate mutual information to a measure of perturbations and prove that (maybe surprisingly) a set of inputs that is highly sensitive to perturbations might not necessarily have determinative power.
Conversely, a set of inputs which has determinative power must be sensitive to perturbations. To prove those results, we show that the concentration of weight in the Fourier domain on certain sets of inputs characterizes a function in terms of tolerance to perturbations and determinative power of input nodes. Furthermore, we generalize a result by Xiao and Massey [11], which gives a necessary and sufficient condition for statistical independence of a set of inputs and a function's output in terms of the Fourier coefficients. This result can for instance be applied to decide for which classes of functions the algorithm presented in [12], which detects functional dependencies based on estimating mutual information, can succeed and for which it fails. For unate functions, we show that any input and the function's output are statistically dependent and provide a direct relation between the mutual information and the influence of a variable. The class of unate functions is especially relevant for biological networks, as it includes all linear threshold functions and all nested canalizing functions, and describes functional dependencies in gene regulatory networks well [13].

As an application of the theoretical results in this paper, we show that mutual information can be used to identify the determinative nodes in the large-scale model of the control network of E. coli's metabolism [6].

1.2 Outline

The paper is organized as follows. Boolean networks and Fourier analysis of Boolean functions are reviewed in section 2. In section 3, the influence and average sensitivity as measures of perturbations are reviewed, and their relation to the Fourier spectrum is discussed. In section 4, we study the mutual information of sets of inputs and the function's output. Section 5 is devoted to unate functions. Section 6 contains an analysis of the large-scale E.
coli regulatory network, using the tools and ideas developed in previous sections.

2 Preliminaries

We start with a short introduction to Boolean networks and Fourier analysis of Boolean functions, and introduce notation.

2.1 Boolean networks

A (synchronous) BN can be viewed as a collection of $n$ nodes with memory. The state of a node $i$ is described by a binary state $x_i(t) \in \{-1,+1\}$ at discrete time $t \in \mathbb{N}$. Choosing the alphabet to be $\{-1,+1\}$ rather than $\{0,1\}$, as is more common in the literature on BNs, will turn out to be advantageous later. However, both choices are equivalent. The state of the network at time $t$ can be described by the vector $\mathbf{x}(t) = [x_1(t), ..., x_n(t)] \in \{-1,+1\}^n$. The network dynamics are defined by

$$x_i(t+1) = f_i(\mathbf{x}(t)), \tag{1}$$

where $f_i : \{-1,+1\}^n \to \{-1,+1\}$ is the Boolean function associated with node $i$. At time $t = 0$, an initial state $\mathbf{x}(0) = \mathbf{x}_0$ is chosen.

In general, not all arguments $x_1, ..., x_n$ of a function $f_i(\mathbf{x})$ need to be relevant. The variable $x_j$, $j \in \{1, ..., n\}$, is said to be relevant for $f_i$ if there exists at least one $\mathbf{x} \in \{-1,+1\}^n$ such that changing $x_j$ to $-x_j$ changes the function's value. In most of the BN models in biology, the functions depend on a small subset of their arguments only. Furthermore, not every state must have a function associated with it; states can also be external inputs to the network.

To study the determinative power and tolerance to perturbations, a probabilistic setup is needed. In our analysis, we assume that each state is an independent random variable $X_i$ with distribution $P[X_i = x_i]$, $x_i \in \{-1,+1\}$. The assumption of independence holds for networks with tree-like topology, but is not feasible for networks with strong local dependencies and feedback loops. However, in many relevant settings, a BN has a tree-like topology, for instance the E.
coli network analyzed in section 6. For a network with few local dependencies, assuming independence will lead to a small modeling error. Major results concerning the analysis of BNs have been obtained under the assumptions as stated above, e.g., the annealed approximation [14], an important result on the spread of perturbations in random BNs. Several important results on random BNs, e.g., [14], let the network size $n$ tend to infinity; hence, there are no local dependencies.

2.2 Notation

We use $[n]$ for the set $\{1, 2, ..., n\}$, and all sets are subsets of $[n]$. With $\sum_{S \subseteq A}(\cdot)$, we mean the sum over all sets $S$ that are subsets of $A$. Throughout this paper, we use capital letters for random variables, e.g., $X$, and lower case letters for their realizations, e.g., $x$. Boldface letters denote vectors, e.g., $\mathbf{X}$ is a random vector, and $\mathbf{x}$ its realization. For a vector $\mathbf{x}$ and a set $A \subseteq [n]$, $\mathbf{x}_A$ denotes the subvector of $\mathbf{x}$ corresponding to the entries indexed by $A$.

2.3 Fourier analysis of Boolean functions

In the following, we give a short introduction to Fourier analysis of Boolean functions. Let $\mathbf{X} = (X_1, ..., X_n)$ be a binary, product distributed random vector, i.e., the entries of $\mathbf{X}$ are independent random variables $X_i$, $i \in [n]$, with distribution $P[X_i = x_i]$, $x_i \in \{-1,+1\}$. Throughout this paper, probabilities $P[\cdot]$ and expectations $E[\cdot]$ are with respect to the distribution of $\mathbf{X}$. We denote $p_i \triangleq P[X_i = 1]$, the variance of $X_i$ by $\mathrm{Var}(X_i)$, its standard deviation by $\sigma_i \triangleq \sqrt{\mathrm{Var}(X_i)}$, and finally $\mu_i \triangleq E[X_i]$. The inner product of $f, g : \{-1,+1\}^n \to \{-1,+1\}$ with respect to the distribution of $\mathbf{X}$ is defined as

$$\langle f, g \rangle \triangleq E[f(\mathbf{X}) g(\mathbf{X})] = \sum_{\mathbf{x} \in \{-1,1\}^n} P[\mathbf{X} = \mathbf{x}]\, f(\mathbf{x})\, g(\mathbf{x}), \tag{2}$$

which induces the norm $\|f\| = \sqrt{\langle f, f \rangle}$.
An orthonormal basis with respect to the distribution of $\mathbf{X}$ is

$$\Phi_S(\mathbf{x}) = \prod_{i \in S} \frac{x_i - \mu_i}{\sigma_i}, \quad S \subseteq [n],\ S \neq \emptyset, \tag{3}$$

and $\Phi_S(\mathbf{x}) = 1$ for $S = \emptyset$. This basis was first proposed by Bahadur [15]. Thus, each Boolean function $f : \{-1,+1\}^n \to \{-1,+1\}$ can be uniquely expressed as

$$f(\mathbf{x}) = \sum_{S \subseteq [n]} \hat{f}(S)\, \Phi_S(\mathbf{x}), \tag{4}$$

where $\hat{f}(S) \triangleq \langle f, \Phi_S \rangle$ are the Fourier coefficients of $f$. Note that (4) is a representation of $f$ as a multilinear polynomial. As an example, consider the AND2 function defined as $f_{\mathrm{AND2}}(\mathbf{x}) = 1$ if and only if $x_1 = x_2 = 1$, and let $p_1 = p_2 = 1/2$. According to (4),

$$f_{\mathrm{AND2}}(\mathbf{x}) = -\frac{1}{2} + \frac{1}{2} x_1 + \frac{1}{2} x_2 + \frac{1}{2} x_1 x_2.$$

As a second example, consider PARITY2, i.e., the XOR function, defined as $f_{\mathrm{PARITY2}}(\mathbf{x}) = 1$ if $x_1 = x_2 = 1$ or if $x_1 = x_2 = -1$, and $f_{\mathrm{PARITY2}}(\mathbf{x}) = -1$ for all other choices of $\mathbf{x}$. Written as a polynomial, $f_{\mathrm{PARITY2}}(\mathbf{x}) = x_1 x_2$.

We conclude this section by listing properties of the basis functions which are used frequently throughout this paper.

Decomposition: Let $A \subseteq [n]$ and $S \subset A$, and denote $\bar{S} = A \setminus S$. Then, $\Phi_A(\mathbf{x}) = \Phi_S(\mathbf{x})\, \Phi_{\bar{S}}(\mathbf{x})$.

Orthonormality: For $A, B \subseteq [n]$, $E[\Phi_A(\mathbf{X}) \Phi_B(\mathbf{X})] = 1$ if $A = B$, and $0$ otherwise.

Parseval's identity: For $f : \{-1,+1\}^n \to \{-1,+1\}$, $E[f(\mathbf{X})^2] = \|f\|^2 = \sum_{S \subseteq [n]} \hat{f}(S)^2 = 1$.

3 Influence and average sensitivity

Next, we discuss measures of perturbations and their relation to the Fourier spectrum. We start with a measure of the perturbation of a single input.

Definition 1 ([16]). Define the influence of variable $i$ on the function $f$ as

$$I_i(f) = P[f(\mathbf{X}) \neq f(\mathbf{X} \oplus e_i)],$$

where $\mathbf{x} \oplus e_i$ is the vector obtained from $\mathbf{x}$ by flipping its $i$th entry.

By definition, the influence of variable $i$ is the probability that perturbing, i.e., flipping, input $i$ changes the function's output. Influence can be viewed as the capability of input $i$ to change the output of $f$.
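The expansion (4) can be checked numerically for small functions. The following is a minimal sketch, not part of the original paper: the helper name `fourier_coefficients` and the 0-based variable indexing are assumptions made for illustration. It evaluates $\hat{f}(S) = \langle f, \Phi_S \rangle$ by brute-force enumeration of all $2^n$ inputs, so it is only practical for small $n$.

```python
from itertools import combinations, product
from math import prod, sqrt


def fourier_coefficients(f, p):
    """Return {S: f_hat(S)} for f: {-1,+1}^n -> {-1,+1}, where P[X_i = +1] = p[i].

    Subsets S are tuples of 0-based indices; requires 0 < p[i] < 1.
    """
    n = len(p)
    mu = [2 * q - 1 for q in p]              # E[X_i]
    sigma = [sqrt(1 - m * m) for m in mu]    # standard deviation of X_i
    coeffs = {}
    for d in range(n + 1):
        for S in combinations(range(n), d):
            total = 0.0
            for x in product((-1, 1), repeat=n):
                weight = prod(p[i] if x[i] == 1 else 1 - p[i] for i in range(n))
                phi = prod((x[i] - mu[i]) / sigma[i] for i in S)  # basis function (3)
                total += weight * f(x) * phi
            coeffs[S] = total
    return coeffs


def and2(x):
    return 1 if x[0] == 1 and x[1] == 1 else -1


coeffs_uniform = fourier_coefficients(and2, [0.5, 0.5])
for S, value in coeffs_uniform.items():
    print(S, round(value, 6))
```

For uniform inputs this reproduces the AND2 polynomial given above, and for any product distribution the squared coefficients sum to one, as Parseval's identity requires.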
In BNs, usually, the sum of all influences, i.e., the average sensitivity, is studied.

Definition 2. The average sensitivity of $f$ to the variables in the set $A$ is defined as

$$I_A(f) = \sum_{i \in A} I_i(f).$$

The average sensitivity of $f$ is defined as $\mathrm{as}(f) \triangleq I_{\{1,...,n\}}(f)$.

$I_A(f)$ captures whether flipping an input chosen uniformly at random from $A$ affects the function's output. Most commonly, all inputs are taken into account, i.e., the average sensitivity $\mathrm{as}(f)$ is studied. As an example, for uniformly distributed inputs, $\mathrm{as}(f_{\mathrm{PARITY2}}) = 2$ and $\mathrm{as}(f_{\mathrm{AND2}}) = 1$; hence, PARITY2 is more sensitive to single perturbations than AND2. Influence and average sensitivity have the following convenient expressions in terms of Fourier coefficients.

Proposition 1 (Lemma 4.1 of [17]). For any Boolean function $f$,

$$I_i(f) = \frac{1}{\sigma_i^2} \sum_{S \subseteq [n] : i \in S} \hat{f}(S)^2. \tag{5}$$

Proposition 2. For any Boolean function $f$,

$$I_A(f) = \sum_{S \subseteq [n]} \hat{f}(S)^2 \sum_{i \in S \cap A} \frac{1}{\sigma_i^2}. \tag{6}$$

[Figure 1: The Fourier spectrum of AND3 and PARITY3 (horizontal axis: degree $d$; vertical axis: $\sum_{|S| = d} \hat{f}(S)^2$).]

Proposition 2 follows directly from Proposition 1 and the definition of $I_A(f)$. From (6), we see that $\mathrm{as}(f)$ is large if the Fourier weight is concentrated on the coefficients of high degree $d = |S|$, i.e., if $\sum_{S : |S| \geq d} \hat{f}(S)^2$ is large (i.e., close to one). For this case, Parseval's identity implies that the $\hat{f}(S)^2$ with $|S| < d$ must be small. As an example, suppose $p_1 = p_2 = p_3 = 1/2$ and consider the AND3 function, i.e., $f_{\mathrm{AND3}}(x_1, x_2, x_3) = 1$ if and only if $x_1 = x_2 = x_3 = 1$. $f_{\mathrm{AND3}}$ is tolerant to perturbations since $\mathrm{as}(f_{\mathrm{AND3}}) = 0.75$, and as Figure 1 shows, its spectrum is concentrated on the coefficients of low degree. In contrast, for $f_{\mathrm{PARITY3}}(x_1, x_2, x_3) \triangleq x_1 x_2 x_3$, $\mathrm{as}(f_{\mathrm{PARITY3}}) = 3$. Hence, PARITY3 is maximally sensitive to perturbations.
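The average sensitivities quoted in the examples can be reproduced directly from Definitions 1 and 2. The sketch below is illustrative only; the function names and 0-based indexing are assumptions, and the computation enumerates all inputs rather than using the Fourier expressions (5) and (6).

```python
from itertools import product
from math import prod


def influence(f, p, i):
    """I_i(f) = P[f(X) != f(X with entry i flipped)], with P[X_k = +1] = p[k]."""
    total = 0.0
    for x in product((-1, 1), repeat=len(p)):
        flipped = x[:i] + (-x[i],) + x[i + 1:]
        if f(x) != f(flipped):
            total += prod(p[k] if x[k] == 1 else 1 - p[k] for k in range(len(p)))
    return total


def average_sensitivity(f, p):
    """as(f): the sum of the influences of all variables."""
    return sum(influence(f, p, i) for i in range(len(p)))


def and_n(x):
    return 1 if all(v == 1 for v in x) else -1


def parity(x):
    return prod(x)


results = {
    "AND2": average_sensitivity(and_n, [0.5] * 2),
    "PARITY2": average_sensitivity(parity, [0.5] * 2),
    "AND3": average_sensitivity(and_n, [0.5] * 3),
    "PARITY3": average_sensitivity(parity, [0.5] * 3),
}
print(results)
```

Passing a non-uniform `p` gives the influences under a general product distribution, which is the setting of Propositions 1 and 2.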
Figure 1 shows that the spectrum of PARITY3 is maximally concentrated on the coefficient of highest degree.

According to (6), $\mathrm{as}(f)$ is small only if the Fourier weight is concentrated on the coefficients of low degree. This is the case either if $f$ is strongly biased (i.e., if $f(\mathbf{x}) = a$ for most inputs $\mathbf{x}$, where $a \in \{-1, 1\}$ is a constant) or if $f$ depends on few variables only. This is in accordance with the results of Kauffman [1]; he found that a random BN operates in the ordered regime if the functions in the network depend on average on few variables.

We will state our results for measures of single perturbations. However, these results also apply to other noise models, specifically to the noise sensitivity of $f$. This is because the noise sensitivity of $f$ is small if $f$ is tolerant to single perturbations. The noise sensitivity of a Boolean function is defined as the probability that the function's output changes if each input is flipped independently with probability $\epsilon$. For uniformly distributed $\mathbf{X}$, $\epsilon\, \mathrm{as}(f)$ is an upper bound for the noise sensitivity; for small values of $\epsilon$, $\epsilon\, \mathrm{as}(f)$ approximates the noise sensitivity well. For the $X_i$ being equally but possibly nonuniformly distributed and a slightly different noise model, it was found in [18] that $\epsilon\, \mathrm{as}(f)$ still upper bounds the noise sensitivity. This result was generalized to product distributed $\mathbf{X}$ in [19].

4 Mutual information and uncertainty

In this section, we study the determinative power of a subset of variables $\mathbf{X}_A$, where $\mathbf{X}_A$ consists of the entries of $\mathbf{X}$ corresponding to the indices in the set $A \subseteq [n]$, over the function's output $f(\mathbf{X})$. As a measure of determinative power, we take the mutual information $\mathrm{MI}(f(\mathbf{X}); \mathbf{X}_A)$ between $f(\mathbf{X})$ and $\mathbf{X}_A$, since $\mathrm{MI}(f(\mathbf{X}); \mathbf{X}_A)$ quantifies the statistical dependence between the random variable $\mathbf{X}_A$ and $f(\mathbf{X})$. Hence, this section is devoted to the study of $\mathrm{MI}(f(\mathbf{X}); \mathbf{X}_A)$.
Before giving a formal definition of mutual information, let us start with an example. Consider the PARITY2 function and let its inputs $X_1, X_2$ be uniformly distributed. Intuitively, if $X_1$ has determinative power, knowledge about $X_1$ should provide us with information about $f_{\mathrm{PARITY2}}(\mathbf{X})$. Suppose we know the value of $X_1$, say $X_1 = 1$. Since $f_{\mathrm{PARITY2}}(\mathbf{x}) = x_1 x_2$, we have with $P[X_2 = 1] = 1/2$ that $P[f_{\mathrm{PARITY2}}(\mathbf{X}) = 1] = P[f_{\mathrm{PARITY2}}(\mathbf{X}) = 1 \mid X_1 = 1] = 1/2$. Hence, knowledge of $X_1$ does not help to predict the value of $f_{\mathrm{PARITY2}}(\mathbf{X})$. Therefore, $X_1$ has no determinative power over $f_{\mathrm{PARITY2}}(\mathbf{X})$. We indeed have $\mathrm{MI}(f_{\mathrm{PARITY2}}(\mathbf{X}); X_1) = 0$.

We next define mutual information. Mutual information is the reduction of uncertainty of a random variable $Y$ due to the knowledge of $X$; therefore, we need to define a measure of uncertainty first, which is entropy. As a reference for the following definitions, see [20].

Definition 3. The entropy $H(X)$ of a discrete random variable $X$ with alphabet $\mathcal{X}$ is defined as

$$H(X) \triangleq -\sum_{x \in \mathcal{X}} P[X = x] \log_2 P[X = x].$$

Definition 4. The conditional entropy $H(Y \mid X)$ of a pair of discrete and jointly distributed random variables $(Y, X)$ is defined as

$$H(Y \mid X) \triangleq \sum_{x \in \mathcal{X}} P[X = x]\, H(Y \mid X = x).$$

Definition 5. The mutual information $\mathrm{MI}(Y; X)$ is the reduction of uncertainty of the random variable $Y$ due to the knowledge of $X$:

$$\mathrm{MI}(Y; X) \triangleq H(Y) - H(Y \mid X).$$

For a binary random variable $X$ with alphabet $\mathcal{X} = \{x_1, x_2\}$ and $p \triangleq P[X = x_1]$, we have $H(X) = h(p)$, where $h(p)$ is the binary entropy function, defined as

$$h(p) \triangleq -p \log_2 p - (1 - p) \log_2 (1 - p).$$
(7)

The properties of mutual information are what we intuitively expect from a measure of determinative power: If knowledge of $X_i$ reduces the uncertainty of $f(\mathbf{X})$, then $X_i$ determines the state of $f(\mathbf{X})$ to some extent, because then, knowledge about the state of $X_i$ helps in predicting $f(\mathbf{X})$. Furthermore, we require from a measure of determinative power that not all variables can have large determinative power simultaneously. This is guaranteed for mutual information as

$$\sum_{i=1}^{n} \mathrm{MI}(f(\mathbf{X}); X_i) \leq \mathrm{MI}(f(\mathbf{X}); \mathbf{X}) \leq 1, \tag{8}$$

which follows from the chain rule of mutual information (as a reference, see [20]) and independence of the $X_i$, $i \in [n]$. Hence, if $\mathrm{MI}(f(\mathbf{X}); X_i)$ is large, i.e., close to 1, we can be sure that $X_i$ has determinative power over $f(\mathbf{X})$, since (8) implies that $\mathrm{MI}(f(\mathbf{X}); X_j)$ for $j \neq i$ must be small then.

4.1 Mutual information and the Fourier spectrum

In order to study determinative power, its relation to measures of perturbations, and statistical dependencies, we start by characterizing the mutual information in terms of Fourier coefficients. Our results are based on the following novel characterization of entropy in terms of Fourier coefficients.

Theorem 1. Let $f$ be a Boolean function, let $\mathbf{X}$ be product distributed, and let $\mathbf{X}_A = \{X_i : i \in A\}$ be a fixed set of arguments, where $A \subseteq [n]$. Then,

$$H(f(\mathbf{X}) \mid \mathbf{X}_A) = E\left[ h\left( \frac{1}{2} \left( 1 + \sum_{S \subseteq A} \hat{f}(S)\, \Phi_S(\mathbf{X}_A) \right) \right) \right],$$

where $h(\cdot)$ is the binary entropy function as defined in (7).

Proof. See Appendix B. For the special case of uniformly distributed $\mathbf{X}$, a proof appears in [21], in the context of designing S-boxes.

Using the definition of mutual information, an immediate corollary of Theorem 1 is the following:

Corollary 1. Let $f$ be a Boolean function, $\mathbf{X}$ be product distributed, and $\mathbf{X}_A = \{X_i : i \in A\}$. Then,

$$\mathrm{MI}(f(\mathbf{X}); \mathbf{X}_A) = h\left( \frac{1}{2} \left( 1 + \hat{f}(\emptyset) \right) \right) - E\left[ h\left( \frac{1}{2} \left( 1 + \sum_{S \subseteq A} \hat{f}(S)\, \Phi_S(\mathbf{X}_A) \right) \right) \right].$$
(9)

Theorem 1 (and Corollary 1) shows that the conditional entropy $H(f(\mathbf{X}) \mid \mathbf{X}_A)$ and the mutual information $\mathrm{MI}(f(\mathbf{X}); \mathbf{X}_A)$ are functions of the coefficients $\{\hat{f}(S) : S \subseteq A\}$ only. This already hints at a fundamental difference to the average sensitivity, since the average sensitivity depends on the coefficients $\{\hat{f}(S) : |S \cap A| > 0\}$, according to (6).

We next discuss $\mathrm{MI}(f(\mathbf{X}); X_i)$ based on (9). First, note that $\mathrm{MI}(f(\mathbf{X}); X_i)$ has previously been studied under the notion of information gain as a measure of 'goodness' for split variables in greedy tree learners [22] and also under the notion of informativeness to quantify voting power [23]. According to (9), the mutual information $\mathrm{MI}(f(\mathbf{X}); X_i)$ just depends on $\hat{f}(\{i\})$, $\hat{f}(\emptyset)$, and $p_i$. In contrast, the influence $I_i(f)$ is a function of the coefficients $\{\hat{f}(S) : S \subseteq [n], i \in S\}$, according to (5). In Figure 2, we depict $\mathrm{MI}(f(\mathbf{X}); X_i)$ for $p_i = 0.3$ as a function of $\hat{f}(\{i\})$ and $\hat{f}(\emptyset)$. It can be seen that $\mathrm{MI}(f(\mathbf{X}); X_i) = 0$, i.e., $f(\mathbf{X})$ and $X_i$ are statistically independent, if and only if $\hat{f}(\{i\}) = 0$. That can be formalized as follows: $\mathrm{MI}(f(\mathbf{X}); X_i)$ is convex in $\hat{f}(\{i\})$. This can be proven by taking the second derivative of (9) and observing that it is larger than zero for all pairs of values $(\hat{f}(\emptyset), \hat{f}(\{i\}))$ for which $\mathrm{MI}(f(\mathbf{X}); X_i)$ is defined. Next, from (9), we see that $\mathrm{MI}(f(\mathbf{X}); X_i) = 0$ if $\hat{f}(\{i\}) = 0$. Since a nonnegative, strictly convex function can attain the value zero at no more than one point, it follows that $\mathrm{MI}(f(\mathbf{X}); X_i) = 0$ if and only if $\hat{f}(\{i\}) = 0$, which proves the following result:

Corollary 2. Let $f$ be a Boolean function, and $\mathbf{X}$ be product distributed. $X_i$ and $f(\mathbf{X})$ are statistically independent if and only if $\hat{f}(\{i\}) = 0$.

[Figure 2: $\mathrm{MI}(f(\mathbf{X}); X_i)$ as a function of $\hat{f}(\{i\})$ and $\hat{f}(\emptyset)$ for $p_i = 0.3$.]
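As a numerical illustration of (9) for a single variable, the sketch below computes $\mathrm{MI}(f(\mathbf{X}); X_i)$ twice: once from $\hat{f}(\emptyset)$, $\hat{f}(\{i\})$ and $p_i$ alone, and once directly from the joint distribution via Definition 5. The helper names (`mi_fourier`, `mi_direct`) and the 0-based indexing are assumptions for illustration, and $0 < p_i < 1$ is required.

```python
from itertools import product
from math import log2, prod, sqrt


def h(q):
    """Binary entropy function (7), with h(0) = h(1) = 0; clamps rounding noise."""
    return 0.0 if q <= 0.0 or q >= 1.0 else -q * log2(q) - (1 - q) * log2(1 - q)


def weight(x, p):
    """P[X = x] for independent entries with P[X_k = +1] = p[k]."""
    return prod(p[k] if x[k] == 1 else 1 - p[k] for k in range(len(p)))


def mi_fourier(f, p, i):
    """MI(f(X); X_i) from f_hat(empty set), f_hat({i}) and p_i, following (9)."""
    mu = 2 * p[i] - 1
    sigma = sqrt(1 - mu * mu)
    points = list(product((-1, 1), repeat=len(p)))
    f0 = sum(weight(x, p) * f(x) for x in points)
    fi = sum(weight(x, p) * f(x) * (x[i] - mu) / sigma for x in points)
    cond = sum(q * h(0.5 * (1 + f0 + fi * (xi - mu) / sigma))
               for xi, q in ((1, p[i]), (-1, 1 - p[i])))
    return h(0.5 * (1 + f0)) - cond


def mi_direct(f, p, i):
    """MI(f(X); X_i) = H(f(X)) - H(f(X) | X_i), from the joint distribution."""
    points = list(product((-1, 1), repeat=len(p)))
    p_one = sum(weight(x, p) for x in points if f(x) == 1)
    cond = 0.0
    for xi, q in ((1, p[i]), (-1, 1 - p[i])):
        joint = sum(weight(x, p) for x in points if x[i] == xi and f(x) == 1)
        cond += q * h(joint / q)
    return h(p_one) - cond


def and2(x):
    return 1 if x[0] == 1 and x[1] == 1 else -1


def parity2(x):
    return x[0] * x[1]


print(mi_fourier(parity2, [0.5, 0.5], 0))   # no determinative power
print(mi_fourier(and2, [0.3, 0.6], 0), mi_direct(and2, [0.3, 0.6], 0))
```

For uniformly distributed inputs, PARITY2 has $\hat{f}(\{1\}) = 0$ and the computed mutual information vanishes, in line with Corollary 2.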
Corollary 2 also follows immediately from a more general result, namely Theorem 5, which is presented later. Recall that for PARITY2, $\mathrm{MI}(f_{\mathrm{PARITY2}}(\mathbf{X}); X_1) = 0$ and $\hat{f}(\{1\}) = 0$; hence, Corollary 2 comes as no surprise.

From Figure 2, it can be seen that the larger $|\hat{f}(\{i\})|$, the larger $\mathrm{MI}(f(\mathbf{X}); X_i)$ becomes. Formally, it follows from the convexity of $\mathrm{MI}(f(\mathbf{X}); X_i)$ and Corollary 2 that $\mathrm{MI}(f(\mathbf{X}); X_i)$ is increasing in $|\hat{f}(\{i\})|$. Hence, $X_i$ has large determinative power, i.e., $\mathrm{MI}(f(\mathbf{X}); X_i)$ is large, if and only if $|\hat{f}(\{i\})|$ is large (i.e., close to one). $|\hat{f}(\{i\})|$ is trivially maximized for the dictatorship function, i.e., for $f(\mathbf{x}) = x_i$, or its negation, i.e., $f(\mathbf{x}) = -x_i$. The output $f(\mathbf{X})$ of the dictatorship function is fully determined by $x_i$.

Next, let us consider the (trivial) case where $A = [n]$ and hence $\mathbf{X}_A = \mathbf{X}$. Then, $\mathrm{MI}(f(\mathbf{X}); \mathbf{X}) = h\left(\frac{1}{2}(1 + \hat{f}(\emptyset))\right)$. It follows that $\mathrm{MI}(f(\mathbf{X}); \mathbf{X})$ is maximized for $\hat{f}(\emptyset) = 0$, i.e., $P[f(\mathbf{X}) = 1] = 1/2$, i.e., if the variance of $f(\mathbf{X})$ is 1. In general, the closer to zero $\hat{f}(\emptyset)$ is, the larger the mutual information between a function's output and all its inputs becomes.

Let us finally relate the conditional entropy $H(f(\mathbf{X}) \mid \mathbf{X}_A)$ to the concentration of the Fourier weight on the coefficients $\{\hat{f}(S) : S \subseteq A\}$, $A \subseteq [n]$.

Theorem 2. Let $f$ be a Boolean function, let $\mathbf{X}$ be product distributed, and let $\mathbf{X}_A = \{X_i : i \in A\}$ be a fixed set of arguments, where $A \subseteq [n]$. Then,

$$\left( 1 - \sum_{S \subseteq A} \hat{f}(S)^2 \right)^{1/\ln(4)} \geq H(f(\mathbf{X}) \mid \mathbf{X}_A) \geq 1 - \sum_{S \subseteq A} \hat{f}(S)^2.$$

Proof. See Appendix C.

Theorem 2 shows that $H(f(\mathbf{X}) \mid \mathbf{X}_A)$ can be approximated with $1 - \sum_{S \subseteq A} \hat{f}(S)^2$. It further shows that $H(f(\mathbf{X}) \mid \mathbf{X}_A)$ is small if the Fourier weight is concentrated on the variables in the set $A$, i.e., if $\sum_{S \subseteq A} \hat{f}(S)^2$ is close to one.
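The two bounds of Theorem 2 can be checked numerically on small examples. The following sketch is an illustration under stated assumptions, not the authors' code: the helper names, the 0-based indexing, and the choice of a three-input majority function with a non-uniform input distribution are all hypothetical. It computes the conditional entropy by enumeration and the Fourier weight on subsets of $A$ from the definition of $\hat{f}(S)$.

```python
from itertools import combinations, product
from math import log, log2, prod, sqrt


def h(q):
    """Binary entropy function, with h(0) = h(1) = 0; clamps rounding noise."""
    return 0.0 if q <= 0.0 or q >= 1.0 else -q * log2(q) - (1 - q) * log2(1 - q)


def weight(x, p):
    return prod(p[k] if x[k] == 1 else 1 - p[k] for k in range(len(p)))


def cond_entropy(f, p, A):
    """H(f(X) | X_A), by enumerating all inputs."""
    total = 0.0
    for xa in product((-1, 1), repeat=len(A)):
        pa = pa_one = 0.0
        for x in product((-1, 1), repeat=len(p)):
            if all(x[k] == v for k, v in zip(A, xa)):
                w = weight(x, p)
                pa += w
                pa_one += w * (f(x) == 1)
        total += pa * h(pa_one / pa)
    return total


def fourier_weight(f, p, A):
    """Sum of f_hat(S)^2 over all subsets S of A."""
    mu = [2 * q - 1 for q in p]
    sigma = [sqrt(1 - m * m) for m in mu]
    total = 0.0
    for d in range(len(A) + 1):
        for S in combinations(A, d):
            coeff = sum(weight(x, p) * f(x) * prod((x[k] - mu[k]) / sigma[k] for k in S)
                        for x in product((-1, 1), repeat=len(p)))
            total += coeff ** 2
    return total


def theorem2_bounds(f, p, A):
    """Return (lower bound, H(f(X)|X_A), upper bound) from Theorem 2."""
    rest = max(0.0, 1.0 - fourier_weight(f, p, A))  # guard against rounding below 0
    return rest, cond_entropy(f, p, A), rest ** (1 / log(4))


def maj3(x):
    return 1 if sum(x) > 0 else -1


for A in [(), (0,), (0, 1), (0, 1, 2)]:
    print(A, theorem2_bounds(maj3, [0.3, 0.5, 0.7], A))
```

In each printed triple the middle value should lie between the outer two, and the gap closes as $A$ grows to include all inputs.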
In contrast, as mentioned previously, for $I_A(f)$, it is relevant whether the Fourier weight is concentrated on the coefficients with high degree.

4.2 Relation to measures of perturbation

Mutual information and average sensitivity are related as follows.

Theorem 3. For any Boolean function $f$, for any product distributed $\mathbf{X}$,

$$I_A(f) \geq \left( \min_{i \in A} \frac{1}{\sigma_i^2} \right) \left( \mathrm{MI}(f(\mathbf{X}); \mathbf{X}_A) - \Psi(\mathrm{Var}(f(\mathbf{X}))) \right) \tag{10}$$

with

$$\Psi(x) \triangleq x^{1/\ln(4)} - x. \tag{11}$$

Proof. See Appendix D.

Note that the term $\Psi(\mathrm{Var}(f(\mathbf{X})))$ is close to zero. Specifically, for any $f(\mathbf{X})$ we have $0 \leq \Psi(\mathrm{Var}(f(\mathbf{X}))) < 0.12$, and for settings of interest, $\Psi(\mathrm{Var}(f(\mathbf{X})))$ is very close to zero, as explained in more detail in the following. Theorem 3 shows that if $\mathrm{MI}(f(\mathbf{X}); \mathbf{X}_A)$ is large (i.e., close to one), $f$ must be sensitive to perturbations of the entries of $\mathbf{X}_A$. Moreover, if $I_A(f)$ is small (i.e., if $f$ is tolerant to perturbations of the entries of $\mathbf{X}_A$), then $\mathrm{MI}(f(\mathbf{X}); \mathbf{X}_A)$ must be small (i.e., the entries of $\mathbf{X}_A$ do not have determinative power). For the case that $A = [n]$, Theorem 3 states that the average sensitivity $\mathrm{as}(f)$ is lower-bounded by $\mathrm{MI}(f(\mathbf{X}); \mathbf{X})$ minus some small term.

We next discuss the special case that $A = \{i\}$. Theorem 3 evaluated for $A = \{i\}$ yields a lower bound on the influence of a variable in terms of the mutual information of that variable, namely

$$I_i(f) \geq \frac{1}{\sigma_i^2} \left( \mathrm{MI}(f(\mathbf{X}); X_i) - \Psi(\mathrm{Var}(f(\mathbf{X}))) \right). \tag{12}$$

Again, $\Psi(\mathrm{Var}(f(\mathbf{X})))$ is close to zero for settings of interest, as the following argument explains. Equation (12) will not be evaluated for small $\mathrm{Var}(f(\mathbf{X}))$, since then $f(\mathbf{X})$ is close to a constant function (i.e., close to $f(\mathbf{X}) = 1$ or $f(\mathbf{X}) = -1$), and $I_i(f)$ and $\mathrm{MI}(f(\mathbf{X}); X_i)$ must both be small (i.e., close to zero) anyway.
Hence, (12) is of interest when $\mathrm{Var}(f(\mathbf{X}))$ is large, i.e., close to 1; for this case, the term $\Psi(\mathrm{Var}(f(\mathbf{X})))$ is small (e.g., for $\mathrm{Var}(f(\mathbf{X})) > 0.8$, $\Psi(\mathrm{Var}(f(\mathbf{X})))$ is at most about $0.05$).

Observe that, according to (12), if $\mathrm{MI}(f(\mathbf{X}); X_i)$ is large, then $I_i(f)$ is also large. That proves the intuitive idea that if an input determines $f(\mathbf{X})$ to some extent, then $f$ must be sensitive to perturbations of this input. Conversely, as mentioned previously, an input $i$ can have large influence and still $\mathrm{MI}(f(\mathbf{X}); X_i) = 0$. For example, for the PARITY2 function with uniformly distributed inputs, we have $I_i(f) = 1$ and $\mathrm{MI}(f(\mathbf{X}); X_i) = 0$.

Interestingly, the influence also has an information theoretic interpretation. The following theorem generalizes Theorem 1 in [23].

Theorem 4. For any Boolean function $f$, for any product distributed $\mathbf{X}$,

$$I_i(f) = \frac{H\left( f(\mathbf{X}) \mid \mathbf{X}_{[n] \setminus \{i\}} \right)}{H(X_i)}.$$

Proof. See Appendix E. For uniformly distributed $\mathbf{X}$, a proof appears in [23].

Theorem 4 shows that the influence of a variable is a measure for the uncertainty of the function's output that remains if all variables except variable $i$ are set.

4.3 Statistical independence of inputs to a Boolean function

Next, we characterize statistical independence of $f(\mathbf{X})$ and a set of its arguments $\mathbf{X}_A$ in terms of Fourier coefficients. This result generalizes a theorem derived by Xiao and Massey [11] from uniform to product distributed $\mathbf{X}$.

Theorem 5. Let $A \subseteq [n]$ be fixed, $f$ be a Boolean function, and $\mathbf{X}$ be product distributed. Then, $f(\mathbf{X})$ and the inputs $\mathbf{X}_A = \{X_i : i \in A\}$ are statistically independent if and only if $\hat{f}(S) = 0$ for all $S \subseteq A$, $S \neq \emptyset$.

Proof. See Appendix F.

For uniformly distributed $\mathbf{X}$, i.e., $P[X_i = 1] = 1/2$ for all $i \in [n]$, Theorem 5 has been derived by Xiao and Massey [11]. Note that the proof provided here is also conceptually different from the proof for the uniform case in [11], as it does not rely on the Xiao-Massey lemma.
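Theorem 5 can be illustrated on the three-input parity function, whose only nonzero Fourier coefficient under the uniform distribution is the one of degree three. The sketch below is a hypothetical illustration (helper names and 0-based indexing are assumptions): it computes $\mathrm{MI}(f(\mathbf{X}); \mathbf{X}_A) = H(f(\mathbf{X})) - H(f(\mathbf{X}) \mid \mathbf{X}_A)$ by enumeration for every subset $A$.

```python
from itertools import combinations, product
from math import log2, prod


def h(q):
    """Binary entropy function, with h(0) = h(1) = 0; clamps rounding noise."""
    return 0.0 if q <= 0.0 or q >= 1.0 else -q * log2(q) - (1 - q) * log2(1 - q)


def cond_entropy(f, p, A):
    """H(f(X) | X_A) for independent inputs with P[X_k = +1] = p[k]."""
    total = 0.0
    for xa in product((-1, 1), repeat=len(A)):
        pa = pa_one = 0.0   # P[X_A = xa] and P[X_A = xa, f(X) = +1]
        for x in product((-1, 1), repeat=len(p)):
            if all(x[k] == v for k, v in zip(A, xa)):
                w = prod(p[k] if x[k] == 1 else 1 - p[k] for k in range(len(p)))
                pa += w
                pa_one += w * (f(x) == 1)
        total += pa * h(pa_one / pa)
    return total


def mutual_information(f, p, A):
    """MI(f(X); X_A); conditioning on the empty set gives H(f(X))."""
    return cond_entropy(f, p, ()) - cond_entropy(f, p, A)


def parity3(x):
    return x[0] * x[1] * x[2]


def and3(x):
    return 1 if all(v == 1 for v in x) else -1


uniform = [0.5, 0.5, 0.5]
mi_parity = {A: mutual_information(parity3, uniform, A)
             for d in range(4) for A in combinations(range(3), d)}
print(mi_parity)
print(mutual_information(and3, uniform, (0,)))
```

Every proper subset of the inputs is independent of the parity output, while AND3, whose spectrum has weight on degree-one coefficients, already shows dependence on a single input.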
Theorem 5 shows that a function and small sets of its inputs are statistically independent if the spectrum is concentrated on the coefficients of high degree $d = |S|$. The most prominent example is the parity function of $n$ variables, i.e., $f_{\mathrm{PARITYN}}(\mathbf{x}) = x_1 x_2 \cdots x_n$: For uniformly distributed $\mathbf{X}$, each subset of $n - 1$ or fewer arguments and $f_{\mathrm{PARITYN}}(\mathbf{X})$ are statistically independent. Conversely, if the spectrum of a function is concentrated on the coefficients of low degree $d = |S|$, which is the case for functions that are tolerant to perturbations, then small sets of inputs and the function's output are statistically dependent.

Theorem 5 also has an important implication for algorithms that detect functional dependencies in a BN based on estimating the mutual information from observations of the network's states, such as the algorithm presented in [12]. Theorem 5 characterizes the classes of functions for which such an algorithm may succeed and for which it will fail. Moreover, Theorem 5 shows that in a Boolean model of a genetic regulatory network, a functional dependency between a gene and a regulator cannot be detected based on statistical dependence of a regulator $X_i$ and a gene's state $f_j(\mathbf{X})$, unless the regulatory functions are restricted to those for which $|\hat{f}(\{i\})| > 0$ holds for each relevant input $i$.

5 Unate functions

In this section, we discuss unate, i.e., locally monotone functions.

Definition 6. A Boolean function $f$ is said to be unate in $x_i$ if for each $\mathbf{x} = (x_1, ..., x_n) \in \{-1,+1\}^n$ and for some fixed $a_i \in \{-1,+1\}$,

$$f(x_1, ..., x_i = -a_i, ..., x_n) \leq f(x_1, ..., x_i = a_i, ..., x_n)$$

holds. $f$ is said to be unate if $f$ is unate in each variable $x_i$, $i \in [n]$.

Each linear threshold function and nested canalizing function is unate. Moreover, most, if not all, regulatory interactions in a biological network are considered to be unate.
That can be deduced from [13, 24], and the basic argument is the following: If an element acts either as a repressor or an activator for some gene, but never as both (which is a reasonable assumption for regulatory interactions [13, 24]), then the function determining the gene's state is unate by definition. For unate functions, the following property holds:

Proposition 3. Let $f : \{-1,+1\}^n \to \{-1,+1\}$ be unate. Then,

$$\hat{f}(\{i\}) = a_i \sigma_i I_i(f), \quad \forall i \in [n], \tag{13}$$

where $a_i \in \{-1,+1\}$ is the parameter in Definition 6.

Proof. Goes along the same lines as the proof for monotone functions in Lemma 4.5 of [17].

Note that conversely, if (13) holds for each $x_i$, $i \in [n]$, $f$ is not necessarily unate. Inserting (13) into (9) yields

$$\mathrm{MI}(f(\mathbf{X}); X_i) = h\left( \frac{1}{2} \left( 1 + \hat{f}(\emptyset) \right) \right) - E\left[ h\left( \frac{1}{2} \left( 1 + \hat{f}(\emptyset) + a_i \sigma_i I_i(f)\, \frac{X_i - \mu_i}{\sigma_i} \right) \right) \right], \tag{14}$$

where the expectation in (14) is over $X_i$. Based on (14), the discussion from section 4.1 on $\mathrm{MI}(f(\mathbf{X}); X_i)$ applies by using $\hat{f}(\{i\})$ and $a_i \sigma_i I_i(f)$ synonymously. Hence, for unate functions, the mutual information $\mathrm{MI}(f(\mathbf{X}); X_i)$ is increasing in the influence $I_i(f)$. Moreover, if $f$ is unate and $x_i$ is a relevant variable, i.e., a variable on which the function actually depends, then $|\hat{f}(\{i\})| > 0$. From this fact and the same arguments as given in section 4.1, the following result follows:

Theorem 6. Let $f : \{-1,+1\}^n \to \{-1,+1\}$ be unate. Then $x_i$ is a relevant variable if and only if $\mathrm{MI}(f(\mathbf{X}); X_i) \neq 0$.

In a Boolean model of a biological regulatory network, this implies that if the functions in the network are unate, then a regulator and the target gene must be statistically dependent.

6 E. coli regulatory network

In [6], the authors presented a complex computational model of the E. coli transcriptional regulatory network that controls central parts of the E. coli metabolism. The network consists of 798 nodes and 1160 edges.
Of the nodes, 636 represent genes, and of the remaining 162 nodes, most (103) are external metabolites. The rest are stimuli and state variables such as internal metabolites. The network has a layered feed-forward structure, i.e., no feedback loops exist. The elements in the first layer can be viewed as the inputs of the system, and the elements in the following seven layers are interacting genes that represent the internal state of the system. Our experiments revealed that all functions are unate; therefore, the properties derived in section 5 apply. Note that all functions being unate is a special property of the network, since a function chosen uniformly at random is unlikely to be unate, in particular if the number of inputs $n$ is large.

6.1 Determinative nodes in the E. coli network

We first identify the input nodes that have large determinative power (we will define what that means in a network setting shortly) and then show that a small number thereof reduces the uncertainty of the network's state significantly. Specifically, we show that on average, the entropy of the nodes' states, conditioned on a small set of determinative input nodes, is small. To put this result into perspective, we perform the same experiment for random networks with the same topology as the E. coli network and with a different topology.

We denote by $X = \{X_1, \dots, X_n\}$, $n = 145$, the set of inputs of the feed-forward network and assume that the $X_i$ are independent and uniformly distributed. The remaining variables are denoted by $Y = \{Y_1, \dots, Y_m\}$, $m = 653$, and are functions of the inputs and the network's states, i.e., $Y_i = f'_i(X, Y)$. For our analysis, the distributions of the random variables $Y_1, \dots, Y_m$ need to be computed, since some of those variables are arguments to other functions.
This can be circumvented by defining a collapsed network, i.e., a network where the state of each node is given as a function of the input nodes only, i.e., $Y_i = f_i(X)$. The collapsed network is obtained by consecutively inserting functions into each other, until each function only depends on states of nodes in the input layer, i.e., on $X$. The collapsed network reveals the dependencies of each node on the input variables. Interestingly, in the collapsed network, it is seen that the variables chol xt > 0, salicylate, 2ddglcn xt > 0, mnnh > 0, altrh > 0, and his-l xt > 0 (here, and in the following, we adopt the names from the original dataset), which appear to be inputs when considering the original E. coli network, turn out not to be inputs. Consider, for example, the node salicylate. The only node dependent on salicylate is mara = ((NOT arca OR NOT fnr) OR oxyr OR salicylate). However, arca = (fnr AND NOT oxyr), and it is easily seen that mara simplifies to mara = 1.

Next, we identify the determinative nodes. As argued in section 4, $\mathrm{MI}(f_i(X); X_j)$ is a measure of the determinative power of $X_j$ over $Y_i = f_i(X)$. This motivates the definition of the determinative power of input $X_j$ over the states in the network as

$$D(j) \triangleq \sum_{i=1}^{m} \mathrm{MI}(f_i(X); X_j).$$

Note that a small value of $D(j)$ implies that $X_j$ alone does not have large determinative power over the network's states, but $X_j$ may have large determinative power over the network's states in conjunction with other variables. In principle, $\sum_{i=1}^{m} \mathrm{MI}(f_i(X); X_j, X_k)$ can be large for some $j, k \in [n]$, even though $D(j)$ and $D(k)$ are equal to zero. This is, however, not possible in the E. coli network since the functions are unate. Specifically, $\mathrm{MI}(f_i(X); X_j, X_k) \neq 0$ implies that $x_j$ or $x_k$ is a relevant variable, and according to Theorem 6, $\mathrm{MI}(f_i(X); X_j) \neq 0$ or $\mathrm{MI}(f_i(X); X_k) \neq 0$.
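The definition of $D(j)$ can be illustrated on a toy example. The following Python sketch is not the code used for the E. coli analysis; it assumes a hypothetical collapsed network with two inputs and three nodes (`toy_and`, `toy_or`, `toy_xor`), uses the encoding $\{0, 1\}$ instead of $\{-1, +1\}$ (which does not change the mutual information), and computes all probabilities by exhaustive enumeration under independent, uniformly distributed inputs. All function names are illustrative choices.

```python
import itertools
import math


def binary_entropy(p):
    """Binary entropy h(p) in bits, with h(0) = h(1) = 0."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1.0 - p) * math.log2(1.0 - p)


def prob_one(f, inputs):
    """P[f(X) = 1] when X is uniform over the given list of input tuples."""
    return sum(f(x) for x in inputs) / len(inputs)


def mutual_information(f, n, subset):
    """MI(f(X); X_subset) in bits for independent, uniform X on {0, 1}^n."""
    inputs = list(itertools.product((0, 1), repeat=n))
    h_cond = 0.0
    # Average the entropy of f(X) over all assignments of the inputs in `subset`.
    for values in itertools.product((0, 1), repeat=len(subset)):
        matching = [x for x in inputs
                    if all(x[j] == v for j, v in zip(subset, values))]
        h_cond += binary_entropy(prob_one(f, matching)) / 2 ** len(subset)
    return binary_entropy(prob_one(f, inputs)) - h_cond


def determinative_power(functions, n, j):
    """D(j): sum over all nodes of MI(f_i(X); X_j)."""
    return sum(mutual_information(f, n, (j,)) for f in functions)


# Hypothetical collapsed toy network with n = 2 inputs.
def toy_and(x):
    return x[0] & x[1]


def toy_or(x):
    return x[0] | x[1]


def toy_xor(x):
    return x[0] ^ x[1]


if __name__ == "__main__":
    n = 2
    print("D(0), unate nodes:", determinative_power([toy_and, toy_or], n, 0))
    print("D(0), parity node:", determinative_power([toy_xor], n, 0))
    print("MI(xor; X_0, X_1):", mutual_information(toy_xor, n, (0, 1)))
```

In this sketch, the parity node contributes nothing to $D(0)$ although both inputs together determine it completely, whereas the two unate nodes each contribute a strictly positive amount, in line with Theorem 6. Exhaustive enumeration is only feasible here because the toy functions depend on very few inputs.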
We computed $D(j)$ for each input variable and found that $D(j)$ is large only for a few inputs, such as o2 xt (37 bit), leu-l xt (20.9 bit), glc-d xt (19.3 bit), and glcn xt > 0 (17 bit), but is small for most other variables. Partly, this can be explained by the out-degree (i.e., the number of outgoing edges of a node) distribution of the input nodes. However, having a large out-degree does not necessarily result in large values of $D(j)$. In fact, in the E. coli network, glc-d xt, glcn xt > 0, and o2 xt have 99, 93, and 73 outgoing edges, respectively. On the other hand, D(glc-d xt) = 19.3 bit and D(glcn xt > 0) = 17 bit, whereas D(o2 xt) = 37 bit.

Let $\tau$ denote a permutation on $[n]$ such that $D(\tau(1)) \ge D(\tau(2)) \ge \dots \ge D(\tau(n))$, i.e., $\tau$ orders the input nodes in descending order of their determinative power. We next consider $H(Y \mid X_{\tau(1)}, \dots, X_{\tau(l)})$ as a function of $l$ to see whether knowledge of a small set of input nodes reduces the entropy of the overall network state significantly. $H(Y \mid X_{\tau(1)}, \dots, X_{\tau(l)})$ has an interesting interpretation which arises as a consequence of the so-called asymptotic equipartition property [20] (as discussed in greater detail in [25]): Consider a sequence $y_1, \dots, y_k$ of $k$ samples of the random variable $Y$. For $\epsilon > 0$ and $k$ sufficiently large, there exists a set $A_\epsilon^{(k)}$ of typical sequences $y_1, \dots, y_k$, such that

$$|A_\epsilon^{(k)}| \le 2^{k(H(Y) + \epsilon)} \quad \text{and} \quad \mathrm{P}\bigl[Y \in A_\epsilon^{(k)}\bigr] > 1 - \epsilon,$$

where $|A_\epsilon^{(k)}|$ denotes the cardinality of the set $A_\epsilon^{(k)}$. This shows that the sequences obtained as samples of $Y$ are likely to fall in a set of size determined by the uncertainty of $Y$. Since the output layer consists of 653 nodes, the network's state space has maximal size $2^{653}$. Since $Y$ is a function of $X$, $H(Y) \le H(X) = 145$ bit, where for the last equality, we assume uniformly distributed inputs.
Thus, without knowing the state of any input variable, the network's state is likely to be in a set of size roughly $2^{145}$. Given the knowledge about the states $X_{\tau(1)}, \dots, X_{\tau(l)}$, the state of the network is likely to be in a set of size roughly $2^{H(Y \mid X_{\tau(1)}, \dots, X_{\tau(l)})}$. For a large network, however, $H(Y \mid X_{\tau(1)}, \dots, X_{\tau(l)})$ is expensive to compute, as by definition

$$H(Y \mid X_A) = -\sum_{x_A} \mathrm{P}[X_A = x_A] \sum_{y} \mathrm{P}[Y = y \mid X_A = x_A] \log_2 \mathrm{P}[Y = y \mid X_A = x_A]. \tag{15}$$

Hence, the number of terms in the sum is exponential in $n$ and $|A|$. An estimate of (15) can be obtained by sampling uniformly at random over $x_A$ and $y$. Instead, we will consider the following upper bound, which is inexpensive to compute:

$$H(Y \mid X_{\tau(1)}, \dots, X_{\tau(l)}) \le A(l) \quad \text{with} \quad A(l) \triangleq \sum_{i=1}^{m} H(Y_i \mid X_{\tau(1)}, \dots, X_{\tau(l)}).$$

The bound above follows from the chain rule for entropy [20]. $H(Y_i \mid X_{\tau(1)}, \dots, X_{\tau(l)})$ is inexpensive to compute, since $Y_i$ depends on few variables only (in the E. coli network, on $\le 8$). For the E. coli network, $A(l)$ is depicted in Figure 3 as a function of $l$. Figure 3 shows that knowledge of the states of the most determinative nodes reduces the uncertainty about the network's states significantly. In fact, the upper bound $A(l)$ is loose; hence, we even expect $H(Y \mid X_{\tau(1)}, \dots, X_{\tau(l)})$ to lie significantly below $A(l)$. Also, note that when $A(l)$ is small, $H(Y_i \mid X_{\tau(1)}, \dots, X_{\tau(l)})$ must be small on average; hence, $\mathrm{P}[Y_i = 1 \mid X_{\tau(1)}, \dots, X_{\tau(l)}]$ is close to one or zero on average.

To put $A(l)$ for the E. coli network in Figure 3 into perspective, we compute $A(l)$ for random networks. First, we took the E. coli network and exchanged each function with one chosen uniformly at random from the set of all Boolean functions of corresponding degree.
We also exchanged each function with one chosen uniformly at random from all unate functions. We performed this experiment on the original E. coli network for 25 choices of random and random unate functions, respectively. The mean of $A(l)$, along with one standard deviation from the mean (dashed lines), is plotted in Figure 3 for random and random unate functions. It is seen that fewer inputs determine the output of the original E. coli network, compared to its random counterparts. For example, to obtain $A(l) = 50$, about twice as many inputs need to be known if the functions in the E. coli network are exchanged for functions chosen uniformly at random. Next, we generated random feed-forward networks with $m = 653$ outputs and $n = 145$ inputs, each with out-degree 8, i.e., the average out-degree of the inputs in the collapsed E. coli network. Again, we computed $A(l)$ for 25 choices of random and random unate functions, respectively. The mean and one standard deviation from the mean are depicted in Figure 3. The results show that, as expected, for a random feed-forward network, there seems to be no small set of inputs that determines the outputs.

Figure 3: The upper bound $A(l)$ on $H(Y \mid X_{\tau(1)}, \dots, X_{\tau(l)})$ as a function of $l$ for the E. coli network and random networks. (Plotted curves: Random NW, Random unate NW, Random functions, Random unate functions, Original functions.)

6.2 Tolerance to perturbations

Finally, we discuss the average sensitivity of individual functions in the E. coli network. In section 3, we found that the average sensitivity is small if the Fourier spectrum is concentrated on the coefficients of low degree. This appears to be the case for functions that are highly biased and for functions that depend on few variables only. Figure 4 shows pairs of values $(\mathrm{as}(f), \Pr[f(X) = 1])$ for each function in the E. coli network, again assuming that the $X_i$ are independent and uniformly distributed. We can see from Figure 4 that the average sensitivity of all functions is close to the lower bound on the average sensitivity. Note that the functions with high in-degree $K$ (i.e., number of relevant input variables), which could have average sensitivity up to $K$, also have small average sensitivity, because those functions are highly biased. We can therefore conclude that the functions have small average sensitivity either because they depend on few variables only or because they are highly biased. For other input distributions, i.e., other values of $p = \mathrm{P}[X_i = 1]$, $\forall i \in [n]$, we obtained the same results.

Figure 4: Average sensitivity in the E. coli network. Pairs of values $(\mathrm{as}(f), \Pr[f(X) = 1])$ of each function in the E. coli network for different in-degrees $K$ ($K = 2, \dots, 11$) and uniformly distributed $X$. Moreover, a lower bound on the average sensitivity $\mathrm{as}(f)$, i.e., Poincaré's inequality, is plotted (L. Bound).

7 Conclusion

In a Boolean network, tolerance to perturbations, determinative power, and statistical dependencies between nodes are properties of single functions in a probabilistic setting. Hence, we analyzed single functions with product distributed arguments. We used Fourier analysis of Boolean functions to study the mutual information between a function $f(X)$ and a set of its inputs $X_A$, as a measure of determinative power of $X_A$ over $f(X)$. We related the mutual information to the Fourier spectrum and proved that the mutual information lower bounds the influence, a measure of perturbation. We also gave necessary and sufficient conditions for statistical independence of $f(X)$ and $X_A$. For the class of unate functions, which are particularly interesting for biological networks, we found that mutual information and influence are directly related (not just via an inequality). We also found that $\mathrm{MI}(f(X); X_i) > 0$ for each relevant input $i$, which, as an application, implies that in a unate regulatory network, a gene and its regulator must be statistically dependent. As an application of our results, we analyzed the large-scale regulatory network of E. coli. We identified the most determinative input nodes in the network and found that it is sufficient to know only a small subset of those in order to reduce the uncertainty of the overall network state significantly. This, in turn, significantly reduces the size of the state space in which the network is likely to be found.

A possible direction for future work is to provide an analysis similar to that of the E. coli regulatory network for other Boolean models of biological networks and to see whether conclusions similar to those in section 6 can be reached. One of the main assumptions in our work is the independence among the input variables of the network. It would be interesting to provide methods that can be used beyond this setup. However, deriving such results is challenging because for dependent inputs, the basis functions $\Phi_S(x)$ do not factorize as in (3), and many results cited and derived in this paper make use of this particular form of the basis functions. In this paper, we focused on generic properties of information-processing networks that may help identify possible principles that underlie biological networks. Assessing our findings from a biological perspective would be an interesting next step.

Appendices

A Lemma 1

For the proof of Theorems 1 and 5, we will need the following lemma:

Lemma 1. Let $f$ be a Boolean function, let $X$ be product distributed, and let $A \subseteq [n]$ and some fixed $x_A \in \{-1,+1\}^{|A|}$ be given.
Then,

$$\mathbb{E}[f(X) \mid X_A = x_A] = \sum_{S \subseteq A} \hat{f}(S)\,\Phi_S(x_A). \tag{16}$$

Proof. Inserting the Fourier expansion of $f(X)$ given by (4) in the left-hand side of (16) and utilizing the linearity of conditional expectation yields

$$\mathbb{E}[f(X) \mid X_A = x_A] = \sum_{S \subseteq [n]} \hat{f}(S)\,\mathbb{E}[\Phi_S(X) \mid X_A = x_A].$$

For $S \subseteq A$, $\mathbb{E}[\Phi_S(X) \mid X_A = x_A] = \Phi_S(x_A)$. Conversely, for $S \not\subseteq A$, $\mathbb{E}[\Phi_S(X) \mid X_A = x_A] = 0$. To see this, assume without loss of generality that $S = A \cup \{j\}$ and $j \notin A$. Using the decomposition property of the basis functions as given in section 2.3,

$$\mathbb{E}[\Phi_S(X) \mid X_A = x_A] = \mathbb{E}\Bigl[\prod_{i \in S} \Phi_{\{i\}}(X) \,\Big|\, X_A = x_A\Bigr] = \prod_{i \in S} \mathbb{E}[\Phi_{\{i\}}(X) \mid X_A = x_A],$$

which is equal to zero as $\mathbb{E}[\Phi_{\{j\}}(X) \mid X_A = x_A] = \mathbb{E}[\Phi_{\{j\}}(X)] = 0$.

B Proof of Theorem 1

First,

$$\mathrm{P}[f(X) = 1 \mid X_A = x_A] = \tfrac{1}{2}\bigl(1 + \mathbb{E}[f(X) \mid X_A = x_A]\bigr) = \underbrace{\tfrac{1}{2}\Bigl(1 + \sum_{S \subseteq A} \hat{f}(S)\,\Phi_S(x_A)\Bigr)}_{q(x_A)}, \tag{17}$$

where (17) follows from an application of Lemma 1. By definition of the conditional entropy,

$$H(f(X) \mid X_A) = \sum_{x_A \in \{-1,1\}^{|A|}} \mathrm{P}[X_A = x_A]\, H(f(X) \mid X_A = x_A)$$

$$= \sum_{x_A \in \{-1,1\}^{|A|}} \mathrm{P}[X_A = x_A]\, h\bigl(\mathrm{P}[f(X) = 1 \mid X_A = x_A]\bigr)$$

$$= \sum_{x_A \in \{-1,1\}^{|A|}} \mathrm{P}[X_A = x_A]\, h(q(x_A)) \tag{18}$$

$$= \mathbb{E}[h(q(X_A))], \tag{19}$$

where $h(\cdot)$ is the binary entropy function as defined in (7). To obtain (18), we used (17). The expectation in (19) is with respect to the distribution of $X_A$. Inserting $q(X_A)$ as given by (17) in (19) concludes the proof.

C Proof of Theorem 2

First, note that with $q(\cdot)$ as defined in (17), we have

$$\mathbb{E}[4 q(X_A)(1 - q(X_A))] = \mathbb{E}\Bigl[1 - \Bigl(\sum_{S \subseteq A} \hat{f}(S)\,\Phi_S(X_A)\Bigr)^2\Bigr]$$

$$= 1 - \sum_{S \subseteq A} \sum_{U \subseteq A} \hat{f}(S)\,\hat{f}(U)\,\mathbb{E}[\Phi_S(X_A)\,\Phi_U(X_A)]$$

$$= 1 - \sum_{S \subseteq A} \hat{f}(S)^2, \tag{20}$$

where (20) follows from the orthogonality of the basis functions. We start with proving the lower bound in Theorem 2.
Applying the lower bound on the binary entropy function $h(p) \ge 4p(1-p)$, given in Theorem 1.2 of [26], on (19) yields

$$H(f(X) \mid X_A) = \mathbb{E}[h(q(X_A))] \ge \mathbb{E}[4 q(X_A)(1 - q(X_A))],$$

and the lower bound in Theorem 2 follows using (20). Next, we prove the upper bound in Theorem 2. Applying the upper bound on the binary entropy function $h(p) \le (4p(1-p))^{1/\ln(4)}$, given in Theorem 1.2 of [26], on (19) yields

$$H(f(X) \mid X_A) = \mathbb{E}[h(q(X_A))] \le \mathbb{E}\bigl[(\underbrace{4 q(X_A)(1 - q(X_A))}_{Y})^{1/\ln(4)}\bigr]. \tag{21}$$

The term $Y$ in (21) is a random variable, and the function $Y^{1/\ln(4)}$ is concave in $Y$. An application of Jensen's inequality (see, e.g., [20]) yields $\mathbb{E}[Y^{1/\ln(4)}] \le (\mathbb{E}[Y])^{1/\ln(4)}$; hence, the right-hand side of (21) can be bounded further, which gives

$$H(f(X) \mid X_A) \le \bigl(\mathbb{E}[4 q(X_A)(1 - q(X_A))]\bigr)^{1/\ln(4)}. \tag{22}$$

Finally, the upper bound in Theorem 2 follows from combining (22) and (20).

D Proof of Theorem 3

According to Proposition 2,

$$I_A(f) = \sum_{S \subseteq [n]} \hat{f}(S)^2 \sum_{i \in S \cap A} \frac{1}{\sigma_i^2} \ge \sum_{\substack{S \subseteq [n] \\ S \neq \emptyset}} \hat{f}(S)^2\, |S \cap A| \min_{i \in A} \frac{1}{\sigma_i^2} \ge \min_{i \in A} \frac{1}{\sigma_i^2} \sum_{\substack{S \subseteq A \\ S \neq \emptyset}} \hat{f}(S)^2. \tag{23}$$

Next, we rewrite the lower bound on $H(f(X) \mid X_A)$ given by Theorem 2 as

$$\sum_{\substack{S \subseteq A \\ S \neq \emptyset}} \hat{f}(S)^2 \ge 1 - \hat{f}(\emptyset)^2 - H(f(X) \mid X_A). \tag{24}$$

By adding $H(f(X)) - H(f(X))$ on the right-hand side of (24) and using the definition of mutual information, (24) becomes

$$\sum_{\substack{S \subseteq A \\ S \neq \emptyset}} \hat{f}(S)^2 \ge \mathrm{MI}(f(X); X_A) - H(f(X)) + 1 - \hat{f}(\emptyset)^2. \tag{25}$$

With $\mathrm{Var}(f(X)) = 1 - \hat{f}(\emptyset)^2$ and by using the inequality $H(f(X)) \le (\mathrm{Var}(f(X)))^{1/\ln(4)}$, given in Theorem 1.2 of [26], (25) becomes

$$\sum_{\substack{S \subseteq A \\ S \neq \emptyset}} \hat{f}(S)^2 \ge \mathrm{MI}(f(X); X_A) - \Psi(\mathrm{Var}(f(X))), \tag{26}$$

with $\Psi(\cdot)$ as defined in (11). Finally, Theorem 3 follows by combining (23) and (26).

E Proof of Theorem 4

For notational convenience, let $A = [n] \setminus \{i\}$.
By definition of the conditional entropy,

$$H(f(X) \mid X_A) = \sum_{x_A \in \{-1,1\}^{|A|}} \mathrm{P}[X_A = x_A]\, H(f(X) \mid X_A = x_A) = \sum_{x_A \in \{-1,1\}^{|A|}} \mathrm{P}[X_A = x_A]\, h\bigl(\mathrm{P}[f(X) = 1 \mid X_A = x_A]\bigr), \tag{27}$$

where $h(\cdot)$ is the binary entropy function as defined in (7). Observe that $h(\mathrm{P}[f(X) = 1 \mid X_A = x_A]) = h(\mathrm{P}[X_i = 1])$ if $f(X_1 = x_1, \dots, X_i = 1, \dots, X_n = x_n) \neq f(X_1 = x_1, \dots, X_i = -1, \dots, X_n = x_n)$, and $h(\mathrm{P}[f(X) = 1 \mid X_A = x_A]) = 0$ otherwise. Hence, (27) becomes

$$H(f(X) \mid X_A) = \sum_{x_A \in \{-1,1\}^{|A|}} \mathrm{P}[X_A = x_A]\, h(p_i)\, \mathbb{1}\{f(x) \neq f(x \oplus e_i)\},$$

where $x \oplus e_i$ is the vector obtained from $x$ by flipping its $i$th entry, and Theorem 4 follows by using the definition of the influence.

F Proof of Theorem 5

By definition, $f(X)$ and $X_A$ are statistically independent if and only if for all $x_A \in \{-1,+1\}^{|A|}$

$$\mathrm{P}[f(X) = 1 \mid X_A = x_A] = \mathrm{P}[f(X) = 1]. \tag{28}$$

With $\mathrm{P}[f(X) = 1 \mid X_A = x_A] = \tfrac{1}{2} + \tfrac{1}{2}\mathbb{E}[f(X) \mid X_A = x_A]$ and application of Lemma 1 given in Appendix A, (28) becomes

$$\sum_{S \subseteq A} \hat{f}(S)\,\Phi_S(x_A) = \hat{f}(\emptyset) \quad \Leftrightarrow \quad \sum_{\substack{S \subseteq A \\ S \neq \emptyset}} \hat{f}(S)\,\Phi_S(x_A) = 0. \tag{29}$$

It follows from the Fourier expansion (4) that (29) holds for all $x_A \in \{-1,+1\}^{|A|}$ if and only if $\hat{f}(S) = 0$ for all nonempty $S \subseteq A$, which proves the theorem.

Acknowledgments

We would like to thank Sara Al-Sayed and Dejan Lazich for their helpful discussions and careful reading of the manuscript.

References

[1] S. Kauffman, Metabolic stability and epigenesis in randomly constructed genetic nets. J. Theor. Biol. 22(3), 437–467 (1969)
[2] S. Kauffman, Homeostasis and differentiation in random genetic control networks. Nature 224(5215), 177–178 (1969)
[3] M.I. Davidich, S. Bornholdt, Boolean network model predicts cell cycle sequence of fission yeast. PLoS ONE 3(2), e1672 (2008)
[4] J. Saez-Rodriguez, L. Simeoni, J.A. Lindquist, R. Hemenway, U. Bommhardt, B.
Arndt, U. Haus, R. Weismantel, E.D. Gilles, S. Klamt, B. Schraven, A logical model provides insights into T cell receptor signaling. PLoS Comput. Biol. 3(8), e163 (2007)
[5] F. Li, T. Long, Y. Lu, Q. Ouyang, C. Tang, The yeast cell-cycle network is robustly designed. Proc. Natl. Acad. Sci. USA 101(14), 4781–4786 (2004)
[6] M.W. Covert, E.M. Knight, J.L. Reed, M.J. Herrgard, B.O. Palsson, Integrating high-throughput and computational data elucidates bacterial networks. Nature 429(6987), 92–96 (2004)
[7] J. Kesseli, P. Rämö, O. Yli-Harja, On spectral techniques in analysis of Boolean networks. Physica D: Nonlinear Phenomena 206(1–2), 49–61 (2005)
[8] J. Kesseli, P. Rämö, O. Yli-Harja, Tracking perturbations in Boolean networks with spectral methods. Phys. Rev. E 72(2), 026137 (2005)
[9] A.S. Ribeiro, S.A. Kauffman, J. Lloyd-Price, B. Samuelsson, J.E.S. Socolar, Mutual information in random Boolean models of regulatory networks. Phys. Rev. E 77, 011901 (2008)
[10] R. Heckel, S. Schober, M. Bossert, Determinative power and tolerance to perturbations in Boolean networks. Paper presented at the 9th international workshop on computational systems biology, Ulm, Germany, 4–6 June 2012
[11] G. Xiao, J. Massey, A spectral characterization of correlation-immune combining functions. Inf. Theory IEEE Trans. 34(3), 569–571 (1988)
[12] S. Liang, S. Fuhrman, R. Somogyi, Reveal, a general reverse engineering algorithm for inference of genetic network architectures. Pacific Symposium on Biocomputing 3, 18–29 (1998)
[13] J. Grefenstette, S. Kim, S. Kauffman, An analysis of the class of gene regulatory functions implied by a biochemical model. Bio. Syst. 84(2), 81–90 (2006)
[14] B. Derrida, Y. Pomeau, Random networks of automata: a simple annealed approximation. Europhysics. Lett. 1(2), 45–49 (1986)
[15] R.R. Bahadur, A representation of the joint distribution of responses to n dichotomous items.
in Studies in Item Analysis and Prediction, ed. by H. Solomon (Stanford University Press, Stanford, 1961), pp. 158–168
[16] M. Ben-Or, N. Linial, Collective coin flipping, robust voting schemes and minima of Banzhaf values. Paper presented at the 26th annual symposium on foundations of computer science, Portland, Oregon, USA, 21–23 October 1985
[17] N.H. Bshouty, C. Tamon, On the Fourier spectrum of monotone functions. J. ACM 43(4), 747–770 (1996)
[18] S. Schober, About Boolean networks with noisy inputs. Paper presented at the fifth international workshop on computational systems biology, Leipzig, Germany, 11–13 June 2008
[19] M.T. Matache, V. Matache, On the sensitivity to noise of a Boolean function. J. Math. Phys. 50(10), 103512 (2009)
[20] T.M. Cover, J.A. Thomas, Elements of Information Theory, 2nd edn. (Wiley-Interscience, New York, 2006)
[21] R. Forre, Methods and instruments for designing S-boxes. J. Cryptology 2(3), 115–130 (1990)
[22] B. Rosell, L. Hellerstein, S. Ray, Why skewing works: learning difficult Boolean functions with greedy tree learners. Paper presented at the 22nd international conference on machine learning, Bonn, Germany, 7–11 August 2005
[23] A. Diskin, M. Koppel, Voting power: an information theory approach. Soc. Choice Welfare 34, 105–119 (2010)
[24] L. Raeymaekers, Dynamics of Boolean networks controlled by biologically meaningful functions. J. Theor. Biol. 218(3), 331–341 (2002)
[25] S. Schober, Analysis and Identification of Boolean Networks using Harmonic Analysis (Der Andere Verlag, Germany, 2011)
[26] F. Topsoe, Bounds for entropy and divergence for distributions over a two-element set. Inequalities Appl. Pure Math. 2 (2001)

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

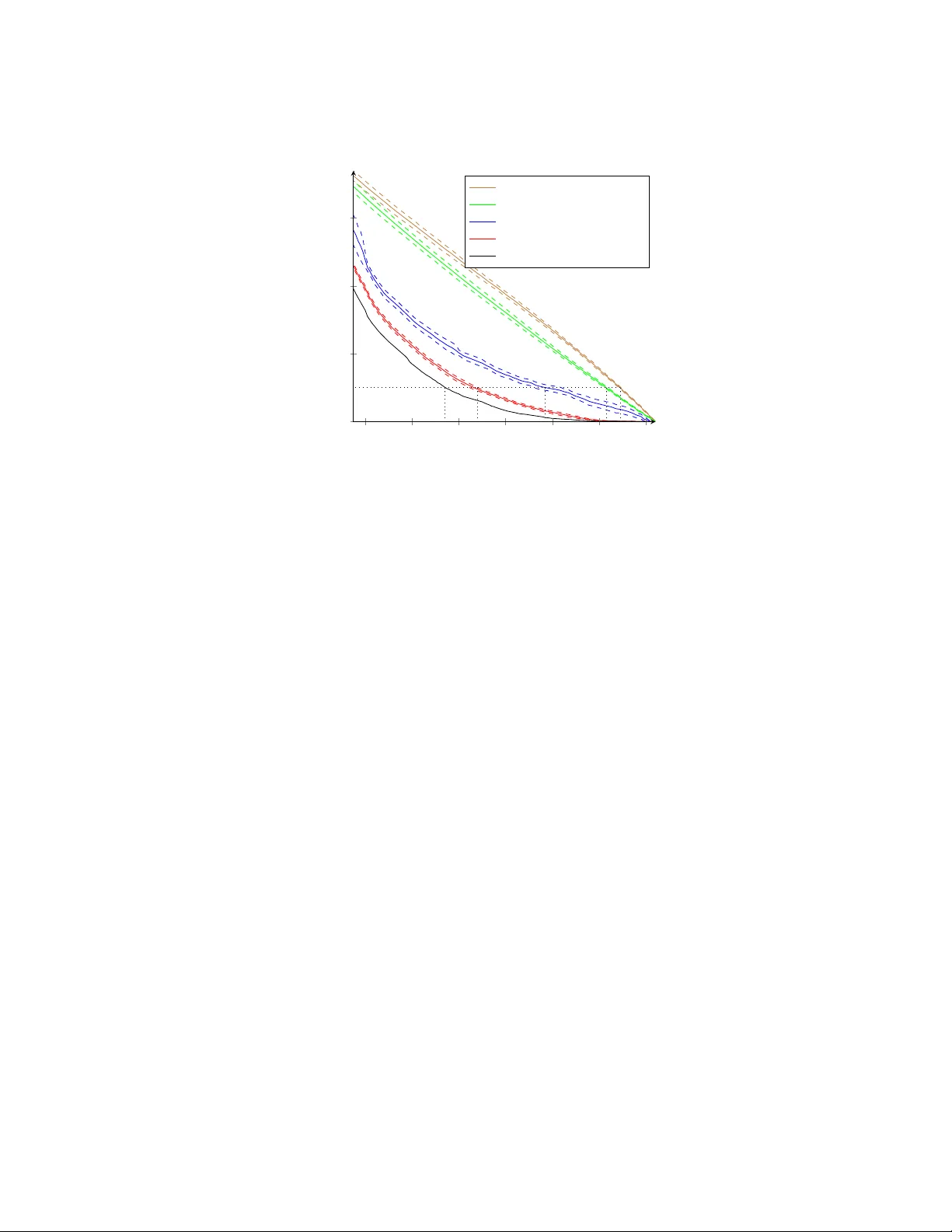

Leave a Comment