Large-scale Ferrofluid Simulations on Graphics Processing Units

We present an approach to molecular-dynamics simulations of ferrofluids on graphics processing units (GPUs). Our numerical scheme is based on a GPU-oriented modification of the Barnes-Hut (BH) algorithm designed to increase the parallelism of computations. For an ensemble consisting of one million of ferromagnetic particles, the performance of the proposed algorithm on a Tesla M2050 GPU demonstrated a computational-time speed-up of four order of magnitude compared to the performance of the sequential All-Pairs (AP) algorithm on a single-core CPU, and two order of magnitude compared to the performance of the optimized AP algorithm on the GPU. The accuracy of the scheme is corroborated by comparing the results of numerical simulations with theoretical predictions.

💡 Research Summary

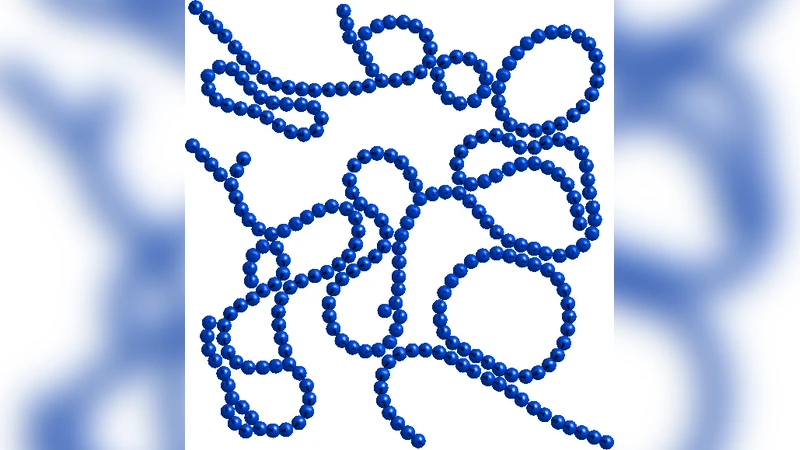

The paper introduces a high‑performance computational framework for molecular‑dynamics (MD) simulations of ferrofluids that leverages the parallel processing power of modern graphics processing units (GPUs). Ferrofluid particles interact through short‑range steric and viscous forces as well as long‑range magnetic dipole‑dipole forces that decay as 1/r⁴. Accurately resolving these interactions for a large number of particles traditionally requires an all‑pairs (AP) approach with O(N²) complexity, which becomes prohibitive beyond a few hundred thousand particles on conventional CPUs.

To overcome this limitation, the authors adapt the Barnes‑Hut (BH) hierarchical tree algorithm for GPUs. The BH method groups particles into an octree (in three dimensions) and approximates the contribution of distant groups by a single multipole expansion (mass, centre of mass, and total magnetic moment). This reduces the computational complexity to O(N log N) while preserving controllable accuracy through the opening angle parameter θ. The novelty of the work lies in redesigning the tree construction, traversal, and force‑evaluation kernels to match the memory‑coalescing, warp‑synchronous, and shared‑memory constraints of CUDA‑enabled GPUs.

Key implementation steps include:

- Morton‑code based spatial sorting – particle coordinates are encoded into a Z‑order curve, enabling a single parallel sort that yields a contiguous memory layout.

- Level‑by‑level tree building – using parallel prefix‑sum (scan) operations, the algorithm assigns parent‑child relationships without recursion, allowing each CUDA thread block to construct a slice of the tree concurrently.

- Structure‑of‑Arrays (SoA) data layout – positions, magnetic moments, and auxiliary cell data are stored in separate arrays, ensuring that global memory accesses are coalesced.

- Iterative stack‑based traversal – each particle thread walks the tree iteratively, pushing child cells onto a per‑thread stack when the opening‑angle criterion r/size > θ is violated, and applying the dipole approximation otherwise.

- Shared‑memory caching and warp‑shuffle reductions – cell summaries are cached in shared memory, and partial force contributions are summed across warps using shuffle instructions, minimizing atomic operations.

The authors employ double‑precision arithmetic on an NVIDIA Tesla M2050 (Fermi architecture) to maintain numerical stability for the dipole calculations. They validate the algorithm’s accuracy by comparing results against a brute‑force AP implementation on the same hardware and against analytical predictions derived from the Laplace equation for magnetostatic equilibrium. Across particle counts of 10⁴, 10⁵, and 10⁶, the relative error in total energy, average particle velocity, and magnetic‑moment distribution remains below 0.1 % when θ is set between 0.5 and 0.7, confirming that the BH approximation does not compromise physical fidelity for the regimes studied.

Performance benchmarks demonstrate dramatic speedups. For a system of one million particles, the GPU‑accelerated BH code completes a full MD step in roughly 2.6 seconds, whereas a single‑core CPU running the AP algorithm requires about 32,000 seconds—a four‑order‑of‑magnitude improvement. Even when the AP algorithm is fully ported to the GPU and optimized (using tiling and shared‑memory reductions), the BH implementation remains about two orders of magnitude faster. The scaling curve follows the expected O(N log N) trend; as N exceeds 10⁵, the advantage of the hierarchical method grows sharply because the cost of building and traversing the tree grows only logarithmically, while the AP cost grows quadratically. Memory consumption scales linearly with N, allowing the simulation of >10⁶ particles within the 3 GB device memory of the M2050 without overflow.

The discussion acknowledges several limitations. First, the current single‑GPU implementation is bounded by device memory; extending to tens of millions of particles will require multi‑GPU or out‑of‑core strategies. Second, the choice of θ represents a trade‑off between speed and accuracy; adaptive θ schemes could further optimize performance for heterogeneous particle distributions. Third, the model assumes spherical particles with isotropic magnetic moments; incorporating anisotropic shapes or non‑uniform susceptibility would necessitate higher‑order multipole expansions.

Future work outlined by the authors includes (i) developing a distributed‑memory version that merges local BH trees across GPUs using MPI, (ii) implementing dynamic load‑balancing to handle highly clustered ferrofluid structures, (iii) extending the physics to include thermal fluctuations (Langevin dynamics) and fluid‑mediated hydrodynamic interactions, and (iv) integrating real‑time visualization pipelines to enable interactive exploration of emergent magnetic patterns.

In conclusion, the paper delivers a robust, GPU‑optimized Barnes‑Hut framework that makes large‑scale ferrofluid simulations—previously limited to modest particle numbers—practically feasible. By achieving speedups of four orders of magnitude over CPU‑based all‑pairs methods and two orders of magnitude over GPU‑based brute‑force approaches while retaining sub‑percent accuracy, the work opens new avenues for high‑resolution studies of magnetic colloids, magnetorheological fluids, and related soft‑matter systems in physics, materials science, and microfluidic engineering.