Genetic Soundtracks: Creative Matching of Audio to Video

The matching of the soundtrack in a movie or a video can have an enormous influence in the message being conveyed and its impact, in the sense of involvement and engagement, and ultimately in their aesthetic and entertainment qualities. Art is often associated with creativity, implying the presence of inspiration, originality and appropriateness. Evolutionary systems provides us with the novelty, showing us new and subtly different solutions in every generation, possibly stimulating the creativity of the human using the system. In this paper, we present Genetic Soundtracks, an evolutionary approach to the creative matching of audio to a video. It analyzes both media to extract features based on their content, and adopts genetic algorithms, with the purpose of truncating, combining and adjusting audio clips, to align and match them with the video scenes.

💡 Research Summary

**

The paper introduces “Genetic Soundtracks,” an automated framework that matches music to video by extracting simple yet perceptually relevant features from both media and then employing a genetic algorithm (GA) to compose, truncate, and align audio snippets with video scenes. The motivation stems from the growing volume of user‑generated video content, where manual soundtrack creation is time‑consuming and often lacks creative diversity. By leveraging the stochastic nature of evolutionary computation, the system can generate multiple distinct solutions from the same input, thereby stimulating user creativity.

Feature Extraction

Two low‑level features are extracted for each modality. For video, frame‑differencing is applied to successive frames; the resulting values are aggregated into 500 ms blocks to produce (i) a continuous movement metric and (ii) scene‑cut timestamps (detected when a block’s average exceeds the recent mean by a threshold). For audio, the signal is sampled every 10 ms, transformed with FFT, and the energy in ten octave‑wide frequency bands (≈21 Hz–22 kHz) is computed. These band energies are averaged over the same 500 ms blocks, yielding a time‑varying loudness curve and a set of band‑level vectors used to locate significant musical changes (both absolute level shifts and variance spikes). Thresholds for change detection are dynamically adjusted based on current loudness, elapsed time since the last cut, and a user‑defined scaling factor.

Genetic Representation

Each 500 ms interval of the target video corresponds to a gene. A chromosome is a sequence of genes whose total length matches the video duration; genes store references to audio clips (including start and end positions) or silence. The initial population is generated semi‑randomly by concatenating randomly chosen audio snippets and silence intervals until the chromosome length is satisfied. This seeding strategy ensures diversity while respecting the temporal constraint.

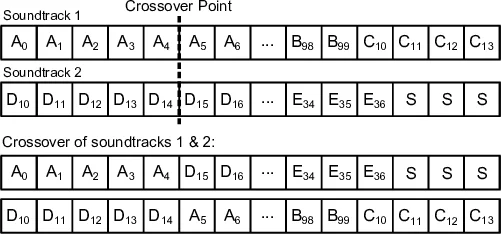

Genetic Operators

Selection uses tournament selection to pick fitter individuals. Crossover selects a random temporal point and swaps the tail portions of two parent chromosomes, producing two offspring. Mutation operates at the snippet level with a configurable probability and can (i) shift a snippet’s position, (ii) replace it with another snippet, (iii) stretch or shrink its duration, or (iv) delete it (creating silence). A decimation step removes chromosomes that violate two hard constraints: (a) each snippet must be longer than a minimum duration to avoid excessive fragmentation, and (b) snippet start points must align with detected musical transition points to keep audio transitions smooth.

Fitness Function

The fitness (or adequacy) score is a weighted sum of four criteria, all of which can be tuned by the user:

- Audio‑Video Correlation – Pearson correlation between video movement and audio loudness, which the algorithm seeks to maximise.

- Snippet Continuity – Encourages longer audio segments and penalises a high number of cuts, reducing abrupt changes.

- Silence Ratio – Prevents over‑use of silence by penalising solutions with excessive silent portions.

- Temporal Alignment – Rewards alignment of audio segment boundaries with detected scene cuts, discouraging starts in the middle of a shot.

By adjusting the weights, users can bias the system toward “dramatic” (high correlation, few cuts), “rhythmic” (strong alignment with musical changes), or “ambient” (more silence) styles.

Experimental Evaluation

The authors tested the system on a 70‑second excerpt from a Johan Hex film, which contains a calm dialogue segment (first 44 s) followed by an action‑packed shoot‑out. Two songs were supplied: (i) Bittersweet (melodic, cello‑driven) and (ii) Fuel (aggressive thrash metal). The GA produced several plausible matchings. One notable solution used the soft opening of Bittersweet for the dialogue portion and switched to the high‑energy segment of Fuel for the shoot‑out, matching low video movement with low audio level and high movement with high audio level. Other runs selected different Fuel excerpts (e.g., 126‑143 s, 193‑212 s) for the action part, demonstrating the system’s ability to generate diverse yet sensible soundtracks from the same inputs. Transition artefacts were mitigated with fade‑in/out effects, though occasional unnatural cuts remained, suggesting the need for interactive fitness evaluation.

Conclusions and Future Work

The study confirms that simple movement and loudness features, combined with a GA, can automatically produce coherent video‑music pairings and generate multiple creative alternatives. Limitations include the reliance on low‑level cues (ignoring emotional content, lyrics, genre, or higher‑level semantic information) and the residual unnaturalness of some audio transitions. Future directions propose (a) incorporating interactive fitness where users provide real‑time subjective feedback, (b) expanding the feature set to include colour, illumination, tempo, harmony, and metadata, and (c) exploring novel evolutionary operators that better respect musical phrasing and cinematic pacing. By involving users more directly in the evolutionary loop, the authors aim to create a hybrid system that balances automatic optimisation with human artistic judgement.

Comments & Academic Discussion

Loading comments...

Leave a Comment