Parsimonious module inference in large networks

We investigate the detectability of modules in large networks when the number of modules is not known in advance. We employ the minimum description length (MDL) principle, which seeks to minimize the total amount of information required to describe the network and thereby avoids overfitting. According to this criterion, we obtain general bounds on the detectability of any prescribed block structure, given the number of nodes and edges in the sampled network. We also find that the maximum number of detectable blocks scales as $\sqrt{N}$, where $N$ is the number of nodes in the network, for a fixed average degree $

💡 Research Summary

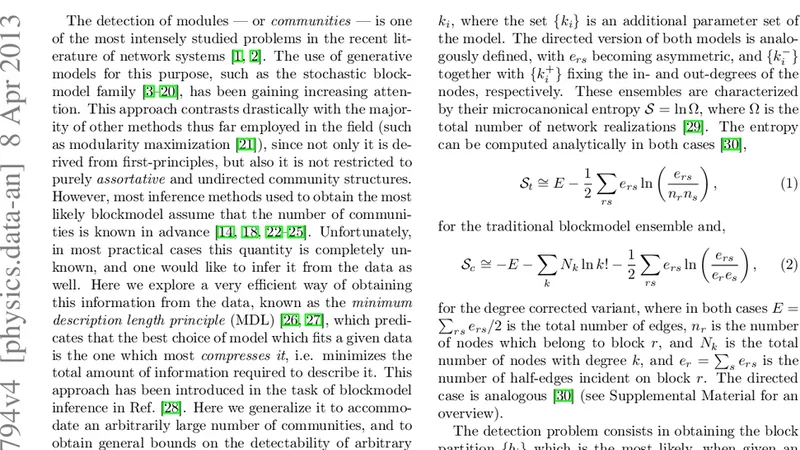

The paper tackles the fundamental problem of community (or block) detection in networks without assuming prior knowledge of the number of blocks. It adopts the Minimum Description Length (MDL) principle, which seeks the most compact encoding of both the data (the observed network) and the model (the stochastic block model, SBM). By minimizing the total number of bits required to describe the network, MDL naturally penalizes overly complex models and thus avoids over‑fitting.
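To make the two-part idea concrete, the following is a minimal sketch of a description length for a partitioned graph. It is an illustration under stated assumptions, not the paper's expression: the data cost is the maximum-likelihood code length of a Bernoulli SBM, and the model cost is a generic BIC-style penalty. The function name `description_length_bits` and the toy graph are hypothetical.

```python
import math
from itertools import combinations


def description_length_bits(n_nodes, edges, labels):
    """Toy two-part code length, in bits, of an undirected simple graph.

    Assumption: Bernoulli SBM with maximum-likelihood edge probabilities
    for the data part, plus a BIC-style penalty for the model part.
    This is NOT the exact formula used in the paper.
    """
    blocks = sorted(set(labels))
    index = {b: i for i, b in enumerate(blocks)}
    num_blocks = len(blocks)
    sizes = [0] * num_blocks
    for lab in labels:
        sizes[index[lab]] += 1

    # Count edges between each unordered pair of blocks.
    edge_counts = {}
    for u, v in edges:
        r, s = sorted((index[labels[u]], index[labels[v]]))
        edge_counts[(r, s)] = edge_counts.get((r, s), 0) + 1

    # Data cost: bits needed to say which node pairs are connected,
    # given the per-block-pair edge densities.
    data_bits = 0.0
    for r in range(num_blocks):
        for s in range(r, num_blocks):
            if r == s:
                pairs = sizes[r] * (sizes[r] - 1) // 2
            else:
                pairs = sizes[r] * sizes[s]
            m = edge_counts.get((r, s), 0)
            if 0 < m < pairs:  # fully empty or fully dense pairs cost 0 bits
                p = m / pairs
                data_bits -= m * math.log2(p) + (pairs - m) * math.log2(1 - p)

    # Model cost: one block label per node, plus one density per block pair.
    partition_bits = n_nodes * math.log2(num_blocks)
    total_pairs = n_nodes * (n_nodes - 1) / 2
    parameter_bits = 0.5 * (num_blocks * (num_blocks + 1) / 2) * math.log2(total_pairs)
    return data_bits + partition_bits + parameter_bits


# Toy graph (hypothetical): two disjoint 6-cliques.
toy_edges = list(combinations(range(0, 6), 2)) + list(combinations(range(6, 12), 2))

one_block = description_length_bits(12, toy_edges, [0] * 12)
two_blocks = description_length_bits(12, toy_edges, [0] * 6 + [1] * 6)
singletons = description_length_bits(12, toy_edges, list(range(12)))
```

On this toy graph the two-block partition yields the shortest code. A single block pays heavily in the data term, while one block per node pays heavily in the model term, which is the over-fitting penalty described above.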

The authors first derive a general “detectability bound” for any prescribed block structure. Using information‑theoretic arguments they show that, for a network with N nodes and average degree ⟨k⟩ (or equivalently a total of E edges), the number of blocks B that can be statistically distinguished is limited. In particular, when ⟨k⟩ is held constant, the maximal detectable B scales as √N. Beyond this threshold the description‑length gain from adding more blocks is too small to overcome the penalty for extra parameters, making the finer partition indistinguishable from random fluctuations. This result provides a clean limit, stated purely in terms of the numbers of nodes and edges, that complements earlier spectral and belief‑propagation thresholds.
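As rough intuition for the $\sqrt{N}$ scaling, consider a heuristic parameter-counting argument (an assumption made here for illustration, not the paper's derivation). A model with $B$ blocks needs one edge count per unordered pair of blocks, that is $B(B+1)/2$ numbers, while the network supplies only $E = N\langle k\rangle/2$ edges from which to estimate them. Requiring no more parameters than edges gives

$$
\frac{B(B+1)}{2} \lesssim E = \frac{N\langle k\rangle}{2}
\quad\Longrightarrow\quad
B \lesssim \sqrt{N\langle k\rangle},
$$

which grows as $\sqrt{N}$ when $\langle k\rangle$ is fixed. The prefactor is illustrative only; the summary above states the scaling, not the constant.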

To operationalize MDL‑based inference, the paper introduces a multilevel Markov‑chain Monte Carlo (MCMC) algorithm. The algorithm proceeds in a simulated‑annealing fashion: an initial high‑temperature phase explores a broad range of partitions, while a gradual cooling refines the solution toward the MDL optimum. Crucially, the number of blocks B is treated as a dynamic variable; proposals that split or merge blocks are accepted according to the MDL change, allowing the method to discover B automatically when it is unknown. The computational cost is O(τ N) when B is fixed, and O(τ N log N) when B must be inferred, where τ denotes the mixing time of the Markov chain. Empirical measurements show τ to be modest even for very large graphs, so the algorithm scales well to millions of edges.
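The annealing loop described above can be sketched as follows. This is a deliberately simplified illustration, not the paper's multilevel algorithm: it uses single-node moves instead of explicit split/merge proposals, lets the number of blocks vary only by allowing blocks to empty out (up to a hypothetical cap `max_blocks`), and recomputes a toy description length from scratch at every step, so it does not achieve the O(τ N) cost quoted above. The names `cost_bits` and `anneal` are assumptions.

```python
import math
import random


def cost_bits(n, edges, labels):
    """Toy two-part description length in bits (not the paper's formula)."""
    used = sorted(set(labels))  # empty blocks are not counted
    idx = {b: i for i, b in enumerate(used)}
    num_blocks = len(used)
    sizes = [0] * num_blocks
    for lab in labels:
        sizes[idx[lab]] += 1
    counts = {}
    for u, v in edges:
        key = tuple(sorted((idx[labels[u]], idx[labels[v]])))
        counts[key] = counts.get(key, 0) + 1
    # Model cost: node labels plus one density per block pair.
    bits = n * math.log2(num_blocks)
    bits += 0.5 * (num_blocks * (num_blocks + 1) / 2) * math.log2(n * (n - 1) / 2)
    # Data cost: Bernoulli code length for each block pair.
    for r in range(num_blocks):
        for s in range(r, num_blocks):
            pairs = sizes[r] * (sizes[r] - 1) // 2 if r == s else sizes[r] * sizes[s]
            m = counts.get((r, s), 0)
            if 0 < m < pairs:
                p = m / pairs
                bits -= m * math.log2(p) + (pairs - m) * math.log2(1 - p)
    return bits


def anneal(n, edges, max_blocks, sweeps=200, t_start=2.0, t_end=0.01, seed=0):
    """Simulated annealing over node labels with Metropolis acceptance."""
    rng = random.Random(seed)
    labels = [rng.randrange(max_blocks) for _ in range(n)]
    cost = cost_bits(n, edges, labels)
    best_labels, best_cost = labels[:], cost
    for sweep in range(sweeps):
        # Geometric cooling from t_start down to t_end.
        t = t_start * (t_end / t_start) ** (sweep / max(1, sweeps - 1))
        for _ in range(n):
            node = rng.randrange(n)
            old = labels[node]
            new = rng.randrange(max_blocks)  # symmetric proposal
            if new == old:
                continue
            labels[node] = new
            new_cost = cost_bits(n, edges, labels)
            delta = new_cost - cost
            # Always accept improvements; accept increases with
            # probability exp(-delta / t).
            if delta <= 0 or rng.random() < math.exp(-delta / t):
                cost = new_cost
                if cost < best_cost:
                    best_labels, best_cost = labels[:], cost
            else:
                labels[node] = old  # reject: undo the move
    return best_labels, best_cost
```

Calling `anneal(n, edges, max_blocks)` returns the lowest-cost labelling visited and its cost. A practical implementation would update the cost incrementally per move rather than recomputing it, which is what makes near-linear running times possible.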

The methodology is validated on a real‑world bipartite network of actors and films containing over one million edges. The MDL‑driven inference recovers the natural bipartite structure (actors vs. films) with high fidelity, demonstrating that the approach can capture disassortative patterns that many traditional community‑detection algorithms (which often assume assortative mixing) would miss or mischaracterize.

Beyond the specific experiments, the authors discuss extensions. Because MDL is a generic model‑selection criterion, it can be combined with more sophisticated SBMs (e.g., degree‑corrected, hierarchical, or overlapping variants) without altering the core algorithmic framework. Moreover, the multilevel MCMC scheme can be adapted for dynamic networks, enabling online updating of block assignments as new edges arrive.

In summary, the paper makes three interrelated contributions: (1) a rigorous information‑theoretic bound on the number of detectable blocks, showing a √N scaling for fixed average degree; (2) a principled MDL formulation that simultaneously selects the optimal number of blocks and avoids over‑fitting; and (3) an efficient, scalable MCMC inference engine that operates in near‑linear time for large graphs. These advances provide a solid statistical foundation for community detection in massive, heterogeneous networks and open the door to robust, automated analysis across a wide range of scientific domains.
