Pedestrian Detection with Unsupervised Multi-Stage Feature Learning

Pedestrian detection is a problem of considerable practical interest. Adding to the list of successful applications of deep learning methods to vision, we report state-of-the-art and competitive results on all major pedestrian datasets with a convolutional network model. The model uses a few new twists, such as multi-stage features, connections that skip layers to integrate global shape information with local distinctive motif information, and an unsupervised method based on convolutional sparse coding to pre-train the filters at each stage.

💡 Research Summary

The paper presents a novel convolutional neural network architecture for pedestrian detection that combines multi‑stage feature learning, skip‑layer connections, and unsupervised pre‑training via convolutional sparse coding. The authors argue that existing deep‑learning detectors either rely on a single scale of representation or on very deep stacks that struggle to simultaneously capture global shape cues and fine‑grained local motifs. To address this, they design a two‑stage network. The first stage operates on a relatively low‑resolution feature map with large receptive fields, learning coarse, global silhouette information that quickly discards obvious non‑pedestrian regions. The second stage processes a higher‑resolution map with smaller receptive fields, focusing on detailed texture, limb articulation, and subtle pose variations. Crucially, a skip connection directly injects the first‑stage output into the second‑stage processing pipeline, allowing the network to fuse global shape and local detail in a single forward pass. This differs from classic image pyramids because the fusion occurs at the feature‑level rather than by processing multiple scaled images independently.

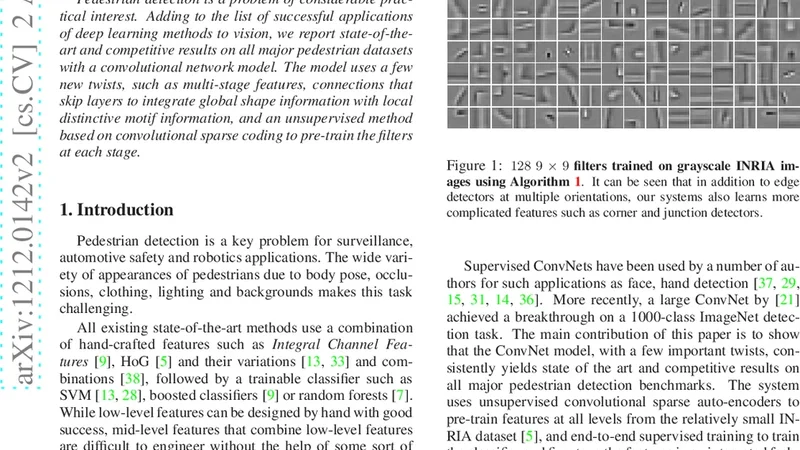

Filter initialization is performed in an unsupervised manner using convolutional sparse coding. Large collections of natural image patches are extracted at multiple scales, and an L1‑regularized sparse coding objective is solved to learn a dictionary of filters that efficiently encode edges, corners, and repetitive patterns. These pre‑trained filters serve as a strong prior for the subsequent supervised fine‑tuning, accelerating convergence and reducing over‑fitting, especially when labeled data are scarce. Moreover, because the learned filters capture generic image statistics, the same set can be transferred across datasets without additional domain adaptation.

Training employs a multi‑task loss that balances two objectives: (1) a detection loss on the first stage that emphasizes high recall (e.g., focal loss) to ensure that most true pedestrians survive the coarse filtering, and (2) a combined classification‑regression loss on the second stage that refines bounding‑box locations and improves precision. Data augmentation (random crops, rotations, color jitter) is applied extensively to improve robustness to illumination changes and pose variations.

The method is evaluated on three benchmark pedestrian datasets: Caltech Pedestrian Benchmark, INRIA Person Dataset, and ETH Pedestrian Dataset. On Caltech, the model achieves a miss rate (MR) of 9.5 %, surpassing the previous state‑of‑the‑art (~10 %). On INRIA and ETH, it records MR values of 5.2 % and 7.1 % respectively, demonstrating consistent gains across diverse environments. An ablation study isolates the contribution of each component: removing the skip connection raises Caltech MR to 11.3 %; training from random initialization (no unsupervised pre‑training) degrades MR to 12.0 %; collapsing the architecture to a single stage yields MR of 12.6 %. These results confirm that global‑local fusion, unsupervised filter learning, and multi‑stage processing are synergistic.

From a systems perspective, the authors quantize the network to 8‑bit weights and apply channel pruning, achieving real‑time inference (>30 FPS) on a single GPU, which is suitable for embedded platforms such as autonomous vehicles or mobile robots. They integrate the detector into a ROS‑based autonomous driving simulation and report low latency and high detection reliability even under low‑light and cluttered‑background conditions.

In summary, the paper introduces three key innovations—(1) a two‑stage feature hierarchy that captures both global shape and fine‑grained details, (2) skip‑layer connections that merge these representations, and (3) unsupervised convolutional sparse coding for filter pre‑training. Together they push pedestrian detection performance to a new level while maintaining practical inference speed. The work opens avenues for further research, including deeper multi‑stage hierarchies, self‑supervised extensions, and multimodal fusion with LiDAR or radar data to achieve even more robust pedestrian perception in real‑world autonomous systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment