Deterministic Sequencing of Exploration and Exploitation for Multi-Armed Bandit Problems

In the Multi-Armed Bandit (MAB) problem, there is a given set of arms with unknown reward models. At each time, a player selects one arm to play, aiming to maximize the total expected reward over a horizon of length T. An approach based on a Determin…

Authors: Sattar Vakili, Keqin Liu, Qing Zhao

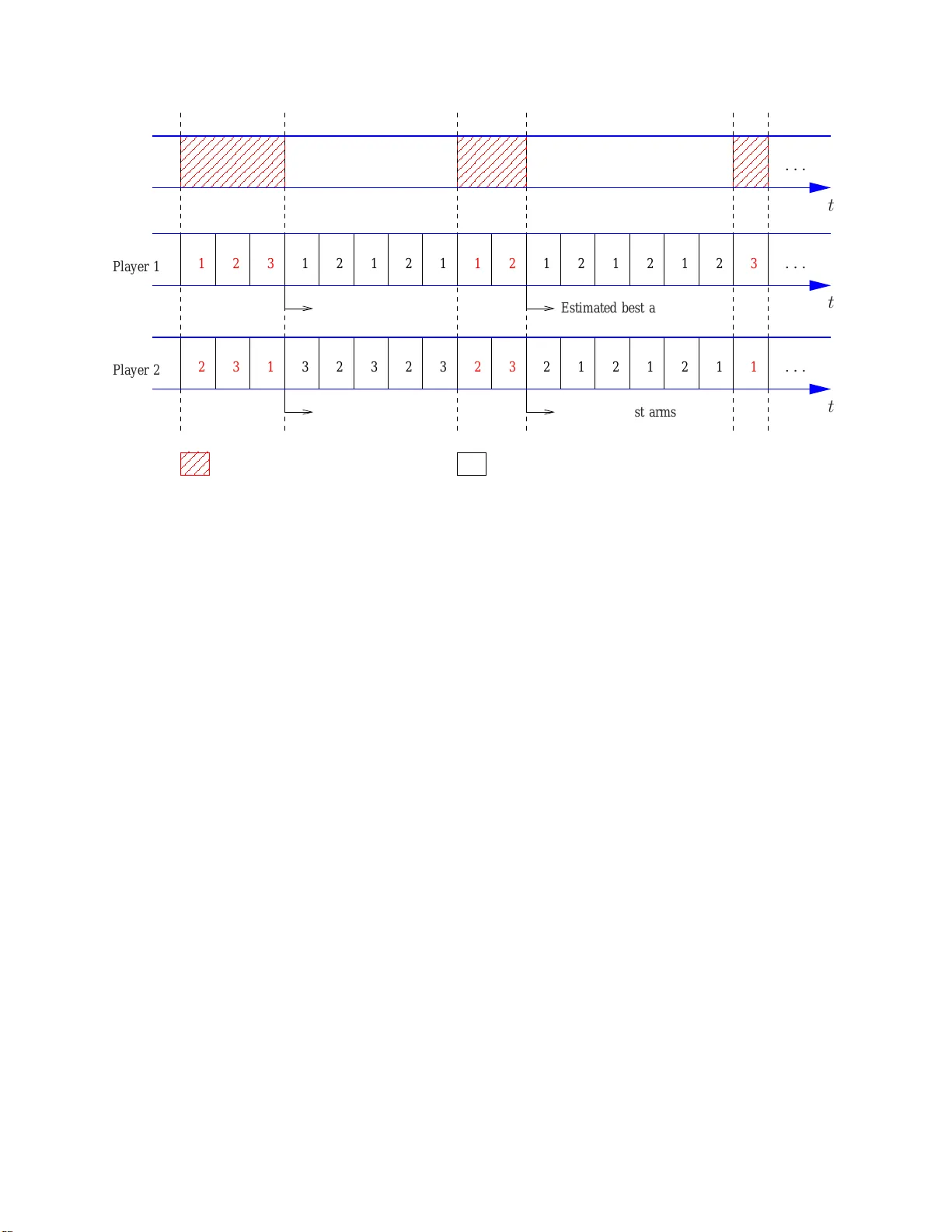

Abstract

In the Multi-Armed Bandit (MAB) problem, there is a given set of arms with unknown reward models. At each time, a player selects one arm to play, aiming to maximize the total expected reward over a horizon of length $T$. An approach based on a Deterministic Sequencing of Exploration and Exploitation (DSEE) is developed for constructing sequential arm selection policies. It is shown that for all light-tailed reward distributions, DSEE achieves the optimal logarithmic order of the regret, where regret is defined as the total expected reward loss against the ideal case with known reward models. For heavy-tailed reward distributions, DSEE achieves $O(T^{1/p})$ regret when the moments of the reward distributions exist up to the $p$th order for $1 < p \le 2$ and $O(T^{1/(1+p/2)})$ for $p > 2$. With the knowledge of an upper bound on a finite moment of the heavy-tailed reward distributions, DSEE offers the optimal logarithmic regret order. The proposed DSEE approach complements existing work on MAB by providing corresponding results for general reward distributions. Furthermore, with a clearly defined tunable parameter (the cardinality of the exploration sequence), the DSEE approach is easily extendable to variations of MAB, including MAB with various objectives, decentralized MAB with multiple players and incomplete reward observations under collisions, MAB with unknown Markov dynamics, and combinatorial MAB with dependent arms that often arise in network optimization problems such as the shortest path, the minimum spanning tree, and the dominating set problems under unknown random weights.
Index Terms

Multi-armed bandit, regret, deterministic sequencing of exploration and exploitation, decentralized multi-armed bandit, restless multi-armed bandit, combinatorial multi-armed bandit.

This work was supported by the Army Research Office under Grant W911NF-12-1-0271. Part of this work was presented at the Allerton Conference on Communications, Control, and Computing, September, 2011. Sattar Vakili, Keqin Liu, and Qing Zhao are with the Department of Electrical and Computer Engineering, University of California, Davis, CA, 95616, USA. Email: {svakili, kqliu, qzhao}@ucdavis.edu.

I. INTRODUCTION

A. Multi-Armed Bandit

Multi-armed bandit (MAB) is a class of sequential learning and decision problems with unknown models. In the classic MAB, there are $N$ independent arms and a single player. At each time, the player chooses one arm to play and obtains a random reward drawn i.i.d. over time from an unknown distribution. Different arms may have different reward distributions. The design objective is a sequential arm selection policy that maximizes the total expected reward over a horizon of length $T$. The MAB problem finds a wide range of applications including clinical trials, target tracking, dynamic spectrum access, Internet advertising and Web search, and social economical networks (see [1]–[3] and references therein).

In the MAB problem, each received reward plays two roles: increasing the wealth of the player, and providing one more observation for learning the reward statistics of the arm. The tradeoff between exploration and exploitation is thus clear: which role should be emphasized in arm selection, an arm less explored and thus holding potential for the future, or an arm with a good history of rewards? In 1952, Robbins addressed the two-armed bandit problem [1].
He showed that the same maximum average reward achievable under a known model can be obtained by dedicating two arbitrary sublinear sequences for playing each of the two arms. In 1985, Lai and Robbins proposed a finer performance measure, the so-called regret, defined as the expected total reward loss with respect to the ideal scenario of known reward models (under which the best arm is always played) [4]. Regret not only indicates whether the maximum average reward under known models is achieved, but also measures the convergence rate of the average reward, or the effectiveness of learning. Although all policies with sublinear regret achieve the maximum average reward, the difference in their total expected reward can be arbitrarily large as $T$ increases. The minimization of the regret is thus of great interest. Lai and Robbins showed that the minimum regret has a logarithmic order in $T$ and constructed explicit policies to achieve the minimum regret growth rate for several reward distributions including Bernoulli, Poisson, Gaussian, and Laplace [4] under the assumption that the distribution type is known. In [5], Agrawal developed simpler index-type policies in explicit form for the above four distributions as well as the exponential distribution, assuming known distribution type. In 2002, Auer et al. [6] developed order-optimal index policies for any unknown distribution with bounded support, assuming the support range is known.

In these classic policies developed in [4]–[6], arms are prioritized according to two statistics: the sample mean $\bar{\theta}(t)$ calculated from past observations up to time $t$ and the number $\tau(t)$ of times that the arm has been played up to $t$. The larger $\bar{\theta}(t)$ is or the smaller $\tau(t)$ is, the higher the priority given to this arm in arm selection.
The tradeoff between exploration and exploitation is reflected in how these two statistics are combined for arm selection at each given time $t$. This is most clearly seen in the UCB (Upper Confidence Bound) policy proposed by Auer et al. in [6], in which an index $I(t)$ is computed for each arm and the arm with the largest index is chosen. The index has the following simple form:

$$I(t) = \bar{\theta}(t) + \sqrt{\frac{2 \log t}{\tau(t)}}. \tag{1}$$

This index form is intuitive in the light of Lai and Robbins's result on the logarithmic order of the minimum regret, which indicates that each arm needs to be explored on the order of $\log t$ times. For an arm sampled at a smaller order than $\log t$ times, its index, dominated by the second term (referred to as the upper confidence bound), will be sufficiently large for large $t$ to ensure further exploration.

B. Deterministic Sequencing of Exploration and Exploitation

In this paper, we develop a new approach to the MAB problem. Based on a Deterministic Sequencing of Exploration and Exploitation (DSEE), this approach differs from the classic policies proposed in [4]–[6] by separating in time the two objectives of exploration and exploitation. Specifically, time is divided into two interleaving sequences: an exploration sequence and an exploitation sequence. In the former, the player plays all arms in a round-robin fashion. In the latter, the player plays the arm with the largest sample mean (or a properly chosen mean estimator). Under this approach, the tradeoff between exploration and exploitation is reflected in the cardinality of the exploration sequence. It is not difficult to see that the regret order is lower bounded by the cardinality of the exploration sequence, since a fixed fraction of the exploration sequence is spent on bad arms. Nevertheless, the exploration sequence needs to be chosen sufficiently dense to ensure effective learning of the best arm.
The key issue here is to find the minimum cardinality of the exploration sequence that ensures a reward loss in the exploitation sequence, caused by an incorrectly identified arm rank, having an order no larger than the cardinality of the exploration sequence.

We show that when the reward distributions are light-tailed, DSEE achieves the optimal logarithmic order of the regret using an exploration sequence with $O(\log T)$ cardinality. For heavy-tailed reward distributions, DSEE achieves $O(T^{1/p})$ regret when the moments of the reward distributions exist up to the $p$th order for $1 < p \le 2$ and $O(T^{1/(1+p/2)})$ for $p > 2$. With the knowledge of an upper bound on a finite moment of the heavy-tailed reward distributions, DSEE offers the optimal logarithmic regret order.

We point out that both the classic policies in [4]–[6] and the DSEE approach developed in this paper require certain knowledge on the reward distributions for policy construction. The classic policies in [4]–[6] apply to specific distributions with either known distribution types [4], [5] or known finite support range [6]. The advantage of the DSEE approach is that it applies to any distribution without knowing the distribution type. The caveat is that it requires the knowledge of a positive lower bound on the difference in the reward means of the best and the second best arms. This can be a more demanding requirement than the distribution type or the support range of the reward distributions. By increasing the cardinality of the exploration sequence, however, we show that DSEE achieves a regret arbitrarily close to the logarithmic order without any knowledge of the reward model. We further emphasize that the sublinear regret for reward distributions with heavy tails is achieved without any knowledge of the reward model (other than a lower bound on the order of the highest finite moment).

C. Extendability to Variations of MAB

Different from the classic policies proposed in [4]–[6], the DSEE approach has a clearly defined tunable parameter (the cardinality of the exploration sequence) which can be adjusted according to the "hardness" (in terms of learning) of the reward distributions and observation models. It is thus more easily extendable to handle variations of MAB, including decentralized MAB with multiple players and incomplete reward observations under collisions, MAB with unknown Markov dynamics, and combinatorial MAB with dependent arms that often arise in network optimization problems such as the shortest path, the minimum spanning tree, and the dominating set problems under unknown random weights.

Consider first a decentralized MAB problem in which multiple distributed players learn from their local observations and make decisions independently. While other players' observations and actions are unobservable, players' actions affect each other: conflicts occur when multiple players choose the same arm at the same time, and conflicting players can only share the reward offered by the arm, not necessarily with conservation. Such an event is referred to as a collision and is unobservable to the players. In other words, a player does not know whether it is involved in a collision, or equivalently, whether the received reward reflects the true state of the arm. Collisions thus not only result in immediate reward loss, but also corrupt the observations that a player relies on for learning the arm rank. Such decentralized learning problems arise in communication networks where multiple distributed users share the access to a common set of channels, each with unknown communication quality. If multiple users access the same channel at the same time, no one transmits successfully or only one captures the channel through certain signaling schemes such as carrier sensing.
Another application is multi-agent systems in which $M$ agents search or collect targets in $N$ locations. When multiple agents choose the same location, they share the reward in an unknown way that may depend on which player comes first or the number of colliding agents.

The deterministic separation of exploration and exploitation in DSEE, however, can ensure that collisions are contained within the exploitation sequence. Learning in the exploration sequence is thus carried out using only reliable observations. In particular, we show that under the DSEE approach, the system regret, defined as the total reward loss with respect to the ideal scenario of known reward models and centralized scheduling among players, grows at the same orders as the regret in the single-player MAB under the same conditions on the reward distributions. These results hinge on the extendability of DSEE to targeting arms with arbitrary ranks (not necessarily the best arm) and the sufficiency of learning the arm rank solely through the observations from the exploration sequence.

The DSEE approach can also be extended to MAB with unknown Markov reward models and the so-called combinatorial MAB, where there is a large number of arms dependent through a smaller number of unknowns. Since these two extensions are more involved and require separate investigations, they are not included in this paper and can be found in [7], [8].

D. Related Work

There have been a number of recent studies on extending the classic policies of MAB to more general settings. In [9], the UCB policy proposed by Auer et al. in [6] was extended to achieve logarithmic regret order for heavy-tailed reward distributions when an upper bound on a finite moment is known. The basic idea is to replace the sample mean in the UCB index with a truncated mean estimator, which allows a mean concentration result similar to the Chernoff-Hoeffding bound.
The computational complexity and memory requirement of the resulting UCB policy, however, are much higher since all past observations need to be stored and truncated differently at each time $t$. The result of achieving the logarithmic regret order for heavy-tailed distributions under DSEE in Sec. III-C2 is inspired by [9]. However, the focus of this paper is to present a general approach to MAB, which not only provides a different policy for achieving logarithmic regret order for both light-tailed and heavy-tailed distributions, but also offers solutions to various MAB variations as discussed in Sec. I-C. DSEE also offers the option of a sublinear regret order for heavy-tailed distributions with a constant memory requirement, sublinear complexity, and no requirement on any knowledge of the reward distributions (see Sec. III-C1).

In the context of decentralized MAB with multiple players, the problem was formulated in [10] with a simpler collision model: regardless of the occurrence of collisions, each player always observes the actual reward offered by the selected arm. In this case, collisions affect only the immediate reward but not the learning ability. It was shown that the optimal system regret has the same logarithmic order as in the classic MAB with a single player, and a Time-Division Fair Sharing (TDFS) framework for constructing order-optimal decentralized policies was proposed. Under the same complete observation model, decentralized MAB was also addressed in [11], [12], where the single-player policy UCB1 was extended to the multi-player setting under a Bernoulli reward model. In [13], Tekin and Liu addressed decentralized learning under general interference functions and light-tailed reward models. In [14], [15], Kalathil et al. considered a more challenging case where arm ranks may be different across players and addressed both i.i.d. and Markov reward models.
They proposed a decentralized policy that achieves near-$O(\log^2 T)$ regret for distributions with bounded support. Different from this paper, all the above referenced work assumes complete reward observation under collisions and focuses on specific light-tailed distributions.

II. THE CLASSIC MAB

Consider an $N$-arm bandit and a single player. At each time $t$, the player chooses one arm to play. Playing arm $n$ yields an i.i.d. random reward $X_n(t)$ drawn from an unknown distribution $f_n(s)$. Let $\mathcal{F} = (f_1(s), \cdots, f_N(s))$ denote the set of the unknown distributions. We assume that the reward mean $\theta_n \triangleq \mathbb{E}[X_n(t)]$ exists for all $1 \le n \le N$.

An arm selection policy $\pi$ is a function that maps from the player's observation and decision history to the arm to play. Let $\sigma$ be a permutation of $\{1, \cdots, N\}$ such that $\theta_{\sigma(1)} \ge \theta_{\sigma(2)} \ge \cdots \ge \theta_{\sigma(N)}$. The system performance under policy $\pi$ is measured by the regret $R_T^{\pi}(\mathcal{F})$ defined as

$$R_T^{\pi}(\mathcal{F}) \triangleq T\theta_{\sigma(1)} - \mathbb{E}_{\pi}\left[\sum_{t=1}^{T} X_{\pi}(t)\right],$$

where $X_{\pi}(t)$ is the random reward obtained at time $t$ under policy $\pi$, and $\mathbb{E}_{\pi}[\cdot]$ denotes the expectation with respect to policy $\pi$. The objective is to minimize the rate at which $R_T^{\pi}(\mathcal{F})$ grows with $T$ under any distribution set $\mathcal{F}$ by choosing an optimal policy $\pi^*$. We say that a policy is order-optimal if it achieves a regret growing at the same order as the optimal one. We point out that any policy with a sublinear regret order achieves the maximum average reward $\theta_{\sigma(1)}$.

III. THE DSEE APPROACH

In this section, we present the DSEE approach and analyze its performance for both light-tailed and heavy-tailed reward distributions.

A. The General Structure

Time is divided into two interleaving sequences: an exploration sequence and an exploitation sequence. In the exploration sequence, the player plays all arms in a round-robin fashion.
In the exploitation sequence, the player plays the arm with the largest sample mean (or a properly chosen mean estimator) calculated from past reward observations. It is also possible to use only the observations obtained in the exploration sequence in computing the sample mean. This leads to the same regret order with a significantly lower complexity, since the sample mean of each arm only needs to be updated at the same sublinear rate as the exploration sequence. A detailed implementation of DSEE is given in Fig. 1.

In DSEE, the tradeoff between exploration and exploitation is balanced by choosing the cardinality of the exploration sequence. To minimize the regret growth rate, the cardinality of the exploration sequence should be set to the minimum that ensures a reward loss in the exploitation sequence having an order no larger than the cardinality of the exploration sequence. The detailed regret analysis is given in the next subsection.

The DSEE Approach

• Notations and Inputs: Let $\mathcal{A}(t)$ denote the set of time indices that belong to the exploration sequence up to (and including) time $t$. Let $|\mathcal{A}(t)|$ denote the cardinality of $\mathcal{A}(t)$. Let $\bar{\theta}_n(t)$ denote the sample mean of arm $n$ computed from the reward observations at times in $\mathcal{A}(t-1)$. For two positive integers $k$ and $l$, define $k \oslash l \triangleq ((k-1) \bmod l) + 1$, which is an integer taking values from $1, 2, \cdots, l$.
• At time $t$,
  1. if $t \in \mathcal{A}(t)$, play arm $n = |\mathcal{A}(t)| \oslash N$;
  2. if $t \notin \mathcal{A}(t)$, play arm $n^* = \arg\max\{\bar{\theta}_n(t),\ 1 \le n \le N\}$.

Fig. 1. The DSEE approach for the classic MAB.

B. Under Light-Tailed Reward Distributions

In this section, we construct an exploration sequence in DSEE to achieve the optimal logarithmic regret order for all light-tailed reward distributions. We recall the definition of light-tailed distributions below.
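The general structure of Fig. 1 can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the names `round_robin_index`, `run_dsee`, `pull`, `in_exploration`, and `log_rule` are hypothetical, and the rule deciding which times are exploration times is passed in as a callback because the figure leaves the cardinality of the exploration sequence open. Sample means are computed from exploration observations only, as the figure specifies.

```python
import math


def round_robin_index(k, l):
    """Return ((k - 1) mod l) + 1, an integer in 1..l (the round-robin operator of Fig. 1)."""
    return ((k - 1) % l) + 1


def run_dsee(pull, num_arms, horizon, in_exploration):
    """Play `horizon` rounds following the structure of Fig. 1.

    pull(n): hypothetical callback returning the reward of arm n (1-based).
    in_exploration(t, size_prev): hypothetical rule deciding whether time t
        joins the exploration sequence, given size_prev = |A(t - 1)|.
    Returns the list of arms played and the final exploration count |A(T)|.
    """
    sums = [0.0] * num_arms   # reward totals from exploration times only
    counts = [0] * num_arms   # exploration plays per arm
    explored = 0              # current |A(t)|
    plays = []
    for t in range(1, horizon + 1):
        if in_exploration(t, explored):
            explored += 1
            arm = round_robin_index(explored, num_arms)
            reward = pull(arm)
            sums[arm - 1] += reward
            counts[arm - 1] += 1
        else:
            # Exploit: largest sample mean; arms never explored rank last.
            means = [s / c if c else float("-inf") for s, c in zip(sums, counts)]
            arm = 1 + max(range(num_arms), key=means.__getitem__)
            pull(arm)  # the reward is collected but not used for learning
        plays.append(arm)
    return plays, explored


def log_rule(num_arms):
    """Illustrative exploration rule (an assumption, not taken from Fig. 1)."""
    return lambda t, size_prev: size_prev < num_arms * math.ceil(math.log(t + 1))
```

With deterministic rewards such as `{1: 0.1, 2: 0.9, 3: 0.5}`, every exploitation time after the first round of exploration selects arm 2, so the only plays of other arms are the round-robin exploration plays.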
Definition 1: A random variable $X$ is light-tailed if its moment-generating function exists, i.e., there exists a $u_0 > 0$ such that for all $|u| \le u_0$,

$$M(u) \triangleq \mathbb{E}[\exp(uX)] < \infty.$$

Otherwise $X$ is heavy-tailed.

For a zero-mean light-tailed random variable $X$, we have [16],

$$M(u) \le \exp(\zeta u^2/2), \quad \forall\, |u| \le u_0,\ \ \zeta \ge \sup\{M^{(2)}(u),\ -u_0 \le u \le u_0\}, \tag{2}$$

where $M^{(2)}(\cdot)$ denotes the second derivative of $M(\cdot)$ and $u_0$ the parameter specified in Definition 1. We observe that the upper bound in (2) is the moment-generating function of a zero-mean Gaussian random variable with variance $\zeta$. Thus, light-tailed distributions are also called locally sub-Gaussian distributions. If the moment-generating function exists for all $u$, the corresponding distributions are referred to as sub-Gaussian. From (2), we have the following extended Chernoff-Hoeffding bound on the deviation of the sample mean.

Lemma 1 (Chernoff-Hoeffding Bound [17]): Let $\{X(t)\}_{t=1}^{\infty}$ be i.i.d. random variables drawn from a light-tailed distribution. Let $\bar{X}_s = \left(\sum_{t=1}^{s} X(t)\right)/s$ and $\theta = \mathbb{E}[X(1)]$. We have, for all $\delta \in [0, \zeta u_0]$, $a \in (0, \frac{1}{2\zeta}]$,

$$\Pr(|\bar{X}_s - \theta| \ge \delta) \le 2\exp(-a\delta^2 s). \tag{3}$$

Proven in [17], Lemma 1 extends the original Chernoff-Hoeffding bound given in [18], which considers only random variables with a bounded support. Based on Lemma 1, we show in the following theorem that DSEE achieves the optimal logarithmic regret order for all light-tailed reward distributions.

Theorem 1: Construct an exploration sequence as follows. Let $a, \zeta, u_0$ be the constants such that (3) holds. Define $\Delta_n \triangleq \theta_{\sigma(1)} - \theta_{\sigma(n)}$ for $n = 2, \ldots, N$. Choose a constant $c \in (0, \Delta_2)$, a constant $\delta = \min\{c/2, \zeta u_0\}$, and a constant $w > \frac{1}{a\delta^2}$. For each $t > 1$, if $|\mathcal{A}(t-1)| < N\lceil w \log t\rceil$, then include $t$ in $\mathcal{A}(t)$.
Under this exploration sequence, the resulting DSEE policy $\pi^*$ has regret, $\forall T$,

$$R_T^{\pi^*}(\mathcal{F}) \le \sum_{n=2}^{N} \lceil w \log T\rceil \Delta_n + 2N\Delta_N\left(1 + \frac{1}{a\delta^2 w - 1}\right). \tag{4}$$

Proof: Without loss of generality, we assume that $\{\theta_n\}_{n=1}^{N}$ are distinct. Let $R_{T,O}^{\pi^*}(\mathcal{F})$ and $R_{T,I}^{\pi^*}(\mathcal{F})$ denote, respectively, the regret incurred during the exploration and the exploitation sequences. From the construction of the exploration sequence, it is easy to see that

$$R_{T,O}^{\pi^*}(\mathcal{F}) \le \sum_{n=2}^{N} \lceil w \log T\rceil \Delta_n. \tag{5}$$

During the exploitation sequence, a reward loss happens if the player incorrectly identifies the best arm. We thus have

$$R_{T,I}^{\pi^*}(\mathcal{F}) \le \mathbb{E}\left[\sum_{t \notin \mathcal{A}(T),\, t \le T} \mathbb{I}(\pi^*(t) \ne \sigma(1))\right]\Delta_N = \sum_{t \notin \mathcal{A}(T),\, t \le T} \Pr(\pi^*(t) \ne \sigma(1))\,\Delta_N. \tag{6}$$

For $t \notin \mathcal{A}(T)$, define the following event

$$\mathcal{E}(t) \triangleq \{|\bar{\theta}_n(t) - \theta_n| \le \delta,\ \forall\, 1 \le n \le N\}. \tag{7}$$

From the choice of $\delta$, it is easy to see that under $\mathcal{E}(t)$, the arm ranks are correctly identified. Writing $\overline{\mathcal{E}(t)}$ for the complement of $\mathcal{E}(t)$, we thus have

$$\begin{aligned} R_{T,I}^{\pi^*}(\mathcal{F}) &\le \sum_{t \notin \mathcal{A}(T),\, t \le T} \Pr(\overline{\mathcal{E}(t)})\,\Delta_N \\ &= \sum_{t \notin \mathcal{A}(T),\, t \le T} \Pr(\exists\, 1 \le n \le N \text{ s.t. } |\bar{\theta}_n(t) - \theta_n| > \delta)\,\Delta_N \\ &\le \sum_{t \notin \mathcal{A}(T),\, t \le T} \sum_{n=1}^{N} \Pr(|\bar{\theta}_n(t) - \theta_n| > \delta)\,\Delta_N, \end{aligned} \tag{8}$$

where (8) results from the union bound. Let $\tau_n(t)$ denote the number of times that arm $n$ has been played during the exploration sequence up to time $t$. Applying Lemma 1 to (8), we have

$$\begin{aligned} R_{T,I}^{\pi^*}(\mathcal{F}) &\le 2\Delta_N \sum_{t \notin \mathcal{A}(T),\, t \le T} \sum_{n=1}^{N} \exp(-a\delta^2 \tau_n(t)) \\ &\le 2\Delta_N \sum_{t \notin \mathcal{A}(T),\, t \le T} \sum_{n=1}^{N} \exp(-a\delta^2 w \log t) \end{aligned} \tag{9}$$

$$\begin{aligned} &= 2\Delta_N \sum_{t \notin \mathcal{A}(T),\, t \le T} \sum_{n=1}^{N} t^{-a\delta^2 w} \\ &\le 2N\Delta_N \sum_{t=1}^{\infty} t^{-a\delta^2 w} \\ &\le 2N\Delta_N\left(1 + \frac{1}{a\delta^2 w - 1}\right), \end{aligned} \tag{10}$$

where (9) comes from $\tau_n(t) \ge w \log t$ and (10) from $a\delta^2 w > 1$. Combining (5) and (10), we arrive at the theorem.

The choice of the exploration sequence given in Theorem 1 is not unique. In particular, when the horizon length $T$ is given, we can choose a single block of exploration followed by a single block of exploitation.
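The exploration rule of Theorem 1 and the right-hand side of (4) can be evaluated numerically. The sketch below is illustrative only: the function names are hypothetical, the constants $a$, $\delta$, and $w$ are supplied by the caller and assumed to satisfy the conditions of the theorem, and $\log$ is taken as the natural logarithm, which is what the step $\exp(-a\delta^2 w \log t) = t^{-a\delta^2 w}$ in the proof uses.

```python
import math


def exploration_times(num_arms, w, horizon):
    """Exploration times up to `horizon` under the rule of Theorem 1.

    Time t is included when |A(t - 1)| < N * ceil(w * log t). At t = 1 the
    threshold is 0, so the first exploration time is t = 2.
    """
    times = []
    for t in range(1, horizon + 1):
        if len(times) < num_arms * math.ceil(w * math.log(t)):
            times.append(t)
    return times


def regret_bound(gaps, w, a, delta, horizon):
    """Right-hand side of (4).

    gaps: [Delta_2, ..., Delta_N] in nondecreasing order, so gaps[-1] = Delta_N.
    """
    exponent = a * delta ** 2 * w
    if exponent <= 1:
        raise ValueError("Theorem 1 requires w > 1 / (a * delta^2)")
    num_arms = len(gaps) + 1
    explore = sum(math.ceil(w * math.log(horizon)) * gap for gap in gaps)
    exploit = 2 * num_arms * gaps[-1] * (1 + 1 / (exponent - 1))
    return explore + exploit
```

The cardinality of the list returned by `exploration_times` never exceeds $N\lceil w \log T\rceil$, which is the quantity that drives the first term of (4).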
In the case of infinite horizon, we can follow the standard technique of partitioning the time horizon into epochs with geometrically growing lengths and applying the finite-$T$ scheme to each epoch.

We point out that the logarithmic regret order requires certain knowledge about the differentiability of the best arm. Specifically, we need a lower bound (parameter $c$ defined in Theorem 1) on the difference in the reward mean of the best and the second best arms. We also need to know the bounds on parameters $\zeta$ and $u_0$ such that the Chernoff-Hoeffding bound (3) holds. These bounds are required in defining $w$, which specifies the minimum leading constant of the logarithmic cardinality of the exploration sequence necessary for identifying the best arm. However, we show that when no knowledge on the reward models is available, we can increase the cardinality of the exploration sequence of $\pi^*$ by an arbitrarily small amount to achieve a regret arbitrarily close to the logarithmic order.

Theorem 2: Let $f(t)$ be any positive increasing sequence with $f(t) \to \infty$ as $t \to \infty$. Construct an exploration sequence as follows. For each $t > 1$, include $t$ in $\mathcal{A}(t)$ if $|\mathcal{A}(t-1)| < N\lceil f(t) \log t\rceil$. The resulting DSEE policy $\pi^*$ has regret

$$R_T^{\pi^*}(\mathcal{F}) = O(f(T) \log T).$$

Proof: Recall constants $a$ and $\delta$ defined in Theorem 1. Note that since $f(t) \to \infty$ as $t \to \infty$, there exists a $t_0$ such that for any $t > t_0$, $a\delta^2 f(t) \ge b$ for some $b > 1$. Similar to the proof of Theorem 1, we have, following (8),

$$\begin{aligned} R_{T,I}^{\pi^*}(\mathcal{F}) &\le 2N\Delta_N \sum_{t \notin \mathcal{A}(T),\, t \le T} \exp(-a\delta^2 f(t) \log t) \\ &\le 2N\Delta_N \left(\sum_{t=1}^{t_0} \exp(-a\delta^2 f(t) \log t) + \sum_{t=t_0+1}^{\infty} t^{-b}\right) \\ &\le 2N\Delta_N \left(t_0 + \frac{1}{b-1}\, t_0^{1-b}\right). \end{aligned} \tag{11}$$

It is easy to see that

$$R_{T,O}^{\pi^*}(\mathcal{F}) \le \sum_{n=2}^{N} \lceil f(T) \log T\rceil \Delta_n. \tag{12}$$

Combining (11) and (12), we have

$$R_T^{\pi^*}(\mathcal{F}) \le \sum_{n=2}^{N} \lceil f(T) \log T\rceil \Delta_n + 2N\Delta_N \left(t_0 + \frac{1}{b-1}\, t_0^{1-b}\right). \tag{13}$$

From the proof of Theorem 2, we observe a tradeoff between the regret order and the finite-time performance. While one can arbitrarily approach the logarithmic regret order by reducing the diverging rate of $f(t)$, the price is a larger additive constant as shown in (13).

C. Under Heavy-Tailed Reward Distributions

In this subsection, we consider the regret performance of DSEE under heavy-tailed reward distributions.

1) Sublinear Regret with Sublinear Complexity and No Prior Knowledge: For heavy-tailed reward distributions, the Chernoff-Hoeffding bound does not hold in general. A weaker bound on the deviation of the sample mean from the true mean is established in the lemma below.

Lemma 2: Let $\{X(t)\}_{t=1}^{\infty}$ be i.i.d. random variables drawn from a distribution with finite $p$th moment ($p > 1$). Let $\bar{X}_t = \frac{1}{t}\sum_{k=1}^{t} X(k)$ and $\theta = \mathbb{E}[X(1)]$. We have, for all $\delta > 0$,

$$\Pr(|\bar{X}_t - \theta| \ge \delta) \le \begin{cases} (3\sqrt{2})^p\, p^{p/2}\, \dfrac{\mathbb{E}[|X(1) - \theta|^p]}{\delta^p}\, t^{1-p} & \text{if } p \le 2 \\[2ex] (3\sqrt{2})^p\, p^{p/2}\, \dfrac{\mathbb{E}[|X(1) - \theta|^p]}{\delta^p}\, t^{-p/2} & \text{if } p > 2 \end{cases} \tag{14}$$

Proof: By Chebyshev's inequality we have,

$$\begin{aligned} \Pr(|\bar{X}_t - \theta| \ge \delta) &\le \frac{\mathbb{E}[|\bar{X}_t - \theta|^p]}{\delta^p} = \frac{\mathbb{E}\left[\left|\sum_{k=1}^{t} (X(k) - \theta)\right|^p\right]}{t^p \delta^p} \\ &\le \frac{B_p\, \mathbb{E}\left[\left(\sum_{k=1}^{t} (X(k) - \theta)^2\right)^{p/2}\right]}{t^p \delta^p}, \end{aligned} \tag{15}$$

where (15) holds by the Marcinkiewicz-Zygmund inequality for some $B_p$ depending only on $p$. The best constant in the Marcinkiewicz-Zygmund inequality was shown in [19] to be $B_p \le (3\sqrt{2})^p p^{p/2}$. Next, we prove Lemma 2 by considering the two cases of $p$.

• $p \le 2$: Considering the inequality $\left(\sum_{k=1}^{t} a_k\right)^{\alpha} \le \sum_{k=1}^{t} a_k^{\alpha}$ for $a_k \ge 0$ and $\alpha \le 1$ (which can be easily shown using induction), we have, from (15),

$$\Pr(|\bar{X}_t - \theta| \ge \delta) \le \frac{B_p\, \mathbb{E}\left[\sum_{k=1}^{t} |X(k) - \theta|^p\right]}{t^p \delta^p} = B_p\, \frac{\mathbb{E}[|X(1) - \theta|^p]}{\delta^p}\, t^{1-p}. \tag{16}$$

• $p > 2$: Using Jensen's inequality, we have, from (15),

$$\Pr(|\bar{X}_t - \theta| \ge \delta) \le \frac{B_p\, \mathbb{E}\left[t^{p/2-1} \sum_{k=1}^{t} |X(k) - \theta|^p\right]}{t^p \delta^p} = B_p\, \frac{\mathbb{E}[|X(1) - \theta|^p]}{\delta^p}\, t^{-p/2}. \tag{17}$$

Based on Lemma 2, we have the following results on the regret performance of DSEE under heavy-tailed reward distributions.

Theorem 3: Assume that the reward distributions have finite $p$th order moment ($p > 1$). Construct an exploration sequence as follows. Choose a constant $v > 0$. For each $t > 1$, include $t$ in $\mathcal{A}(t)$ if $|\mathcal{A}(t-1)| < v t^{1/p}$ for $1 < p \le 2$ or $|\mathcal{A}(t-1)| < v t^{\frac{1}{1+p/2}}$ for $p > 2$. Under this exploration sequence, the resulting DSEE policy $\pi^p$ has regret

$$R_T^{\pi^p}(\mathcal{F}) \le \begin{cases} O(T^{1/p}) & \text{if } 1 < p \le 2 \\ O(T^{\frac{1}{1+p/2}}) & \text{if } p > 2 \end{cases} \tag{18}$$

An upper bound on the regret for each $T$ is given in (20) in the proof.

Proof: We prove the theorem for the case of $p > 2$; the other case can be shown similarly. Following a similar line of arguments as in the proof of Theorem 1, we can show, by applying Lemma 2 to (8),

$$\begin{aligned} R_{T,I}^{\pi^p}(\mathcal{F}) &\le \Delta_N (3\sqrt{2})^p\, p^{p/2}\, \frac{\mathbb{E}[|X(1) - \theta|^p]}{\delta^p}\, v^{-p/2} \sum_{t=1}^{T} t^{-\frac{p/2}{1+p/2}} \\ &\le \Delta_N (3\sqrt{2})^p\, p^{p/2}\, \frac{\mathbb{E}[|X(1) - \theta|^p]}{\delta^p}\, v^{-p/2} \left[(1 + p/2)\left(T^{\frac{1}{1+p/2}} - 1\right) + 1\right]. \end{aligned} \tag{19}$$

Considering the cardinality of the exploration sequence, we have, $\forall T$,

$$R_T^{\pi^p}(\mathcal{F}) \le \begin{cases} \Delta_N (3\sqrt{2})^p\, p^{p/2}\, \dfrac{\mathbb{E}[|X(1) - \theta|^p]}{(\Delta_2/2)^p}\, v^{-p/2} \left[p\left(T^{\frac{1}{p}} - 1\right) + 1\right] + \lceil v T^{\frac{1}{p}}\rceil & \text{if } p \le 2 \\[2ex] \Delta_N (3\sqrt{2})^p\, p^{p/2}\, \dfrac{\mathbb{E}[|X(1) - \theta|^p]}{(\Delta_2/2)^p}\, v^{-p/2} \left[(1 + p/2)\left(T^{\frac{1}{1+p/2}} - 1\right) + 1\right] + \lceil v T^{\frac{1}{1+p/2}}\rceil & \text{if } p > 2 \end{cases} \tag{20}$$

The regret order given in Theorem 3 is thus readily seen.

2) Logarithmic Regret Using Truncated Sample Mean: Inspired by the work by Bubeck, Cesa-Bianchi, and Lugosi [9], we show in this subsection that using the truncated sample mean, DSEE can offer logarithmic regret order for heavy-tailed reward distributions with a carefully chosen cardinality of the exploration sequence.
Similar to the UCB variation developed in [9], this logarithmic regret order is achieved at the price of prior information on the reward distributions and higher computational and memory requirements. These requirements, however, are significantly lower than those of the UCB variation in [9], since the DSEE approach only needs to store samples from, and compute the truncated sample mean at, the exploration times, whose number is of order $O(\log T)$, rather than at each time instant. The main idea is based on the following result on the truncated sample mean given in [9].

Lemma 3 ([9]): Let $\{X(t)\}_{t=1}^{\infty}$ be i.i.d. random variables satisfying $E[|X(1)|^p] \le u$ for some constants $u > 0$ and $p \in (1, 2]$. Let $\theta = E[X(1)]$. Consider the truncated empirical mean $\hat{\theta}(s, \epsilon)$ defined as
$$\hat{\theta}(s, \epsilon) = \frac{1}{s} \sum_{t=1}^{s} X(t)\, \mathbb{1}\left\{ |X(t)| \le \left( \frac{ut}{\log(\epsilon^{-1})} \right)^{1/p} \right\}. \tag{21}$$
Then for any $\epsilon \in (0, \frac{1}{2}]$,
$$\Pr\left( |\hat{\theta}(s, \epsilon) - \theta| > 4 u^{1/p} \left( \frac{\log(\epsilon^{-1})}{s} \right)^{\frac{p-1}{p}} \right) \le 2\epsilon. \tag{22}$$

Based on Lemma 3, we have the following result on the regret of DSEE.

Theorem 4: Assume that the reward of each arm satisfies $E[|X_n(1)|^p] \le u$ for some constants $u > 0$ and $p \in (1, 2]$. Let $a = 4^{\frac{p}{1-p}} u^{\frac{1}{1-p}}$. Define $\Delta_n \triangleq \theta_{\sigma(1)} - \theta_{\sigma(n)}$ for $n = 2, \ldots, N$. Construct an exploration sequence as follows. Choose a constant $\delta \in (0, \Delta_2/2)$ and a constant $w > 1/(a\delta^{p/(p-1)})$. For each $t > 1$, if $|\mathcal{A}(t-1)| < N \lceil w \log t \rceil$, then include $t$ in $\mathcal{A}(t)$. At an exploitation time $t$, play the arm with the largest truncated sample mean given by
$$\hat{\theta}_n(\tau_n(t), \epsilon_n(t)) = \frac{1}{\tau_n(t)} \sum_{k=1}^{\tau_n(t)} X_{n,k}\, \mathbb{1}\left\{ |X_{n,k}| \le \left( \frac{uk}{\log(\epsilon_n(t)^{-1})} \right)^{1/p} \right\}, \tag{23}$$
where $X_{n,k}$ denotes the $k$th observation of arm $n$ during the exploration sequence, $\tau_n(t)$ the total number of such observations, and $\epsilon_n(t)$ in the truncator for each arm at each time $t$ is given by
$$\epsilon_n(t) = \exp\left( -a \delta^{\frac{p}{p-1}} \tau_n(t) \right). \tag{24}$$
The resulting DSEE policy $\pi^*$ has regret
$$R_T^{\pi^*}(F) \le \sum_{n=2}^{N} \lceil w \log T \rceil \Delta_n + 2N\Delta_N \left( 1 + \frac{1}{a\delta^{p/(p-1)} w - 1} \right). \tag{25}$$

Proof: Following the same line of arguments as in the proof of Theorem 1, we have, following (8),
$$R_{T,I}^{\pi^*}(F) \le \sum_{t \notin \mathcal{A}(T),\, t \le T} \sum_{n=1}^{N} \Pr\left( |\hat{\theta}_n(\tau_n(t), \epsilon_n(t)) - \theta_n| > \delta \right) \Delta_N. \tag{26}$$
Based on Lemma 3, we have, by substituting $\epsilon_n(t)$ given in (24) into (22),
$$\Pr\left( |\hat{\theta}_n(\tau_n(t), \epsilon_n(t)) - \theta_n| > \delta \right) \le 2 \exp\left( -a \delta^{\frac{p}{p-1}} \tau_n(t) \right). \tag{27}$$
Substituting the above equation into (26), we have
$$\begin{aligned}
R_{T,I}^{\pi^*}(F) &\le 2\Delta_N \sum_{t \notin \mathcal{A}(T),\, t \le T} \sum_{n=1}^{N} \exp\left( -a \delta^{\frac{p}{p-1}} \tau_n(t) \right) \\
&\le 2\Delta_N \sum_{t \notin \mathcal{A}(T),\, t \le T} \sum_{n=1}^{N} \exp\left( -a \delta^{\frac{p}{p-1}} w \log t \right) \\
&= 2\Delta_N \sum_{t \notin \mathcal{A}(T),\, t \le T} \sum_{n=1}^{N} t^{-a\delta^{p/(p-1)} w} \\
&\le 2N\Delta_N \sum_{t=1}^{\infty} t^{-a\delta^{p/(p-1)} w} \\
&\le 2N\Delta_N \left( 1 + \frac{1}{a\delta^{p/(p-1)} w - 1} \right).
\end{aligned} \tag{28}$$
We then arrive at the theorem, considering $R_{T,O}^{\pi^*}(F) \le \sum_{n=2}^{N} \lceil w \log T \rceil \Delta_n$.

We point out that to achieve the logarithmic regret order under heavy-tailed distributions, an upper bound on $E[|X_n(1)|^p]$ for a certain $p$ needs to be known. The range constraint $p \in (1, 2]$ in Theorem 4 can be easily addressed: if we know $E[|X_n(1)|^p] \le u$ for a certain $p > 2$, then $E[|X|^2] \le u + 1$. Similar to Theorem 2, we can show that when no knowledge on the reward models is available, we can increase the cardinality of the exploration sequence by an arbitrarily small amount (any diverging sequence $f(t)$) to achieve a regret arbitrarily close to the logarithmic order. One necessary change to the policy is that the constant $\delta$ in Theorem 4, used in (24) for calculating the truncated sample mean, should be replaced by $f(t)^{\gamma}$ for some $\gamma \in (\frac{1-p}{p}, 0)$.

IV. VARIATIONS OF MAB

In this section, we extend the DSEE approach to several MAB variations including MAB with various objectives, decentralized MAB with multiple players and incomplete reward observations under collisions, MAB with unknown Markov dynamics, and combinatorial MAB with dependent arms.

A. MAB under Various Objectives

Consider a generalized MAB problem in which the desired arm is the $m$th best arm for an arbitrary $m$. Such objectives may arise when there are multiple players (see the next subsection) or other constraints/costs in arm selection. The classic policies in [4]–[6] cannot be directly extended to handle this new objective. For example, for the UCB policy proposed by Auer et al. in [6], simply choosing the arm with the $m$th ($1 < m \le N$) largest index cannot guarantee an optimal solution. This can be seen from the index form given in (1): when the index of the desired arm is too large to be selected, its index tends to become even larger due to the second term of the index. The rectification proposed in [20] is to combine the upper confidence bound with a symmetric lower confidence bound. Specifically, the arm selection is completed in two steps at each time: the upper confidence bound is first used to filter out arms with a lower rank, and the lower confidence bound is then used to filter out arms with a higher rank. It was shown in [20] that under the extended UCB, the expected time that the player does not play the targeted arm has a logarithmic order.

The DSEE approach, however, can be directly extended to handle this general objective. Under DSEE, all arms, regardless of their ranks, are sufficiently explored by carefully choosing the cardinality of the exploration sequence. As a consequence, this general objective can be achieved by simply choosing the arm with the $m$th largest sample mean in the exploitation sequence.
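The exploration rule and truncated sample mean of Theorem 4, together with the $m$th-largest selection rule just described, can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names (`theorem4_eps`, `truncated_mean`, `is_exploration_time`, `mth_largest_arm`, `dsee`), the round-robin order within the exploration sequence, the 0-based arm indices, and the choice to treat $t = 1$ as an exploration time are all hypothetical conveniences introduced here.

```python
import math


def theorem4_eps(tau, u, p, delta):
    """epsilon_n(t) as in (24): exp(-a * delta**(p/(p-1)) * tau),
    with a = 4**(p/(1-p)) * u**(1/(1-p)) as defined in Theorem 4."""
    a = 4.0 ** (p / (1.0 - p)) * u ** (1.0 / (1.0 - p))
    return math.exp(-a * delta ** (p / (p - 1.0)) * tau)


def truncated_mean(samples, u, p, eps):
    """Truncated sample mean in the form of (23).

    The k-th sample (k = 1, 2, ...) contributes only if
    |x_k| <= (u * k / log(1/eps)) ** (1/p); the sum is divided by the
    total number of samples, whether kept or not.
    """
    if not samples:
        return 0.0
    if not 0.0 < eps < 1.0:
        raise ValueError("eps must lie in (0, 1)")
    log_inv_eps = math.log(1.0 / eps)
    total = 0.0
    for k, x in enumerate(samples, start=1):
        if abs(x) <= (u * k / log_inv_eps) ** (1.0 / p):
            total += x
    return total / len(samples)


def is_exploration_time(t, num_explored, n_arms, w):
    """Rule of Theorem 4: t joins the exploration sequence if fewer than
    N * ceil(w * log t) exploration times have been used so far."""
    return num_explored < n_arms * math.ceil(w * math.log(t))


def mth_largest_arm(estimates, m):
    """Index of the arm whose estimate is the m-th largest (m = 1 is the best)."""
    order = sorted(range(len(estimates)), key=lambda n: estimates[n], reverse=True)
    return order[m - 1]


def dsee(sample_arm, n_arms, horizon, w, m=1, estimator=None):
    """Run DSEE for `horizon` steps; return the list of arms played.

    `sample_arm(n)` draws one reward from arm n. `estimator` maps the list
    of exploration samples of one arm to a mean estimate (plain sample mean
    by default; pass a wrapper around `truncated_mean` for heavy tails).
    """
    if estimator is None:
        estimator = lambda xs: sum(xs) / len(xs) if xs else 0.0
    samples = [[] for _ in range(n_arms)]  # exploration observations only
    num_explored = 0
    played = []
    for t in range(1, horizon + 1):
        # Assumption: t = 1 is always explored (the rule is stated for t > 1).
        if t == 1 or is_exploration_time(t, num_explored, n_arms, w):
            arm = num_explored % n_arms  # assumed round-robin over arms
            samples[arm].append(sample_arm(arm))
            num_explored += 1
        else:
            estimates = [estimator(xs) for xs in samples]
            arm = mth_largest_arm(estimates, m)
            sample_arm(arm)  # reward collected but not used for learning
        played.append(arm)
    return played
```

For the heavy-tailed setting, one would call `dsee` with an estimator such as `lambda xs: truncated_mean(xs, u, p, theorem4_eps(len(xs), u, p, delta))`, where `u`, `p`, and `delta` are chosen as in Theorem 4. Only exploration samples enter the estimates, which is why the memory footprint grows with the $O(\log T)$ cardinality of the exploration sequence rather than with $T$.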
Specifically, assume that a cost $C_j > 0$ ($j \ne m$, $1 \le j \le N$) is incurred when the player plays the $j$th best arm. Define the regret $R_T^{\pi}(F, m)$ as the expected total cost over time $T$ under policy $\pi$.

Theorem 5: By choosing the parameter $c$ in Theorem 1 to satisfy $0 < c < \min\{\Delta_m - \Delta_{m-1}, \Delta_{m+1} - \Delta_m\}$, or a parameter $\delta$ in Theorems 3 and 4 to satisfy $0 < \delta < \frac{1}{2} \min\{\Delta_m - \Delta_{m-1}, \Delta_{m+1} - \Delta_m\}$, and letting the player select the arm with the $m$th largest sample mean (or truncated sample mean in the case of Theorem 4) in the exploitation sequence, Theorems 1-4 hold for $R_T^{\pi}(F, m)$.

Proof: The proof is similar to those of the previous theorems. The key observation is that after all arms have been played sufficiently many times during the exploration sequence, the probability that the sample mean of any arm deviates from its true mean by more than its non-overlapping neighborhood is small enough to ensure a properly bounded regret incurred in the exploitation sequence.

We now consider an alternative scenario in which the player targets a set of best arms, say the $M$ best arms. We assume that a cost is incurred whenever the player plays an arm not in the set. Similarly, we define the regret $R_T^{\pi}(F, M)$ as the expected total cost over time $T$ under policy $\pi$.

Theorem 6: By choosing the parameter $c$ in Theorem 1 to satisfy $0 < c < \Delta_{M+1} - \Delta_M$, or a parameter $\delta$ in Theorems 3 and 4 to satisfy $0 < \delta < \frac{1}{2}(\Delta_{M+1} - \Delta_M)$, and letting the player select one of the $M$ arms with the largest sample means (or truncated sample means in the case of Theorem 4) in the exploitation sequence, Theorems 1-4 hold for $R_T^{\pi}(F, M)$.

Proof: The proof is similar to those of the previous theorems. Compared to Theorem 5, the condition on $c$ for applying Theorem 1 is more relaxed: we only need to know a lower bound on the mean difference between the $M$th best and the $(M+1)$th best arms.
This is due to the fact that we only need to distinguish the $M$ best arms from the others instead of specifying their ranks.

By selecting arms with different ranks of the sample mean in the exploitation sequence, it is not difficult to see that Theorem 5 and Theorem 6 can be applied to cases with time-varying objectives. In the next subsection, we use these extensions of DSEE to solve a class of decentralized MAB with incomplete reward observations.

B. Decentralized MAB with Incomplete Reward Observations

1) Distributed Learning under Incomplete Observations: Consider $M$ distributed players. At each time $t$, each player chooses one arm to play. When multiple players choose the same arm (say, arm $n$) to play at time $t$, a player (say, player $m$) involved in this collision obtains a potentially reduced reward $Y_{n,m}(t)$ with $\sum_{m=1}^{M} Y_{n,m}(t) \le X_n(t)$. We focus on the case where the $M$ best arms have positive reward means and collisions cause reward loss. The distribution of the partial reward $Y_{n,m}(t)$ under collisions can take any unknown form and have any dependency on $n$, $m$, and $t$. Players make decisions solely based on their partial reward observations $Y_{n,m}(t)$ without information exchange. Consequently, a player does not know whether it is involved in a collision, or equivalently, whether the received reward reflects the true state $X_n(t)$ of the arm.

A local arm selection policy $\pi_m$ of player $m$ is a function that maps from the player's observation and decision history to the arm to play. A decentralized arm selection policy $\pi_d$ is thus given by the concatenation of the local policies of all players: $\pi_d \triangleq [\pi_1, \cdots, \pi_M]$.
The system performance under policy $\pi_d$ is measured by the system regret $R_T^{\pi_d}(F)$, defined as the expected total reward loss up to time $T$ under policy $\pi_d$ compared to the ideal scenario in which the players are centralized and $F$ is known to all players (thus the $M$ best arms with the highest means are played at each time). We have
$$R_T^{\pi_d}(F) \triangleq T \sum_{n=1}^{M} \theta_{\sigma(n)} - E_{\pi}\left[ \sum_{t=1}^{T} Y_{\pi_d}(t) \right],$$
where $Y_{\pi_d}(t)$ is the total random reward obtained at time $t$ under the decentralized policy $\pi_d$. Similar to the single-player case, any policy with a sublinear order of regret would achieve the maximum average reward given by the sum of the $M$ highest reward means.

2) Decentralized Policies under DSEE: In order to minimize the system regret, it is crucial that each player extracts reliable information for learning the arm rank. This requires that each player obtains and recognizes sufficient observations that were received without collisions. As shown in Sec. III, efficient learning can be achieved in DSEE by solely utilizing the observations from the deterministic exploration sequence. Based on this property, a decentralized arm selection policy can be constructed as follows. In the exploration sequence, players play all arms in a round-robin fashion with different offsets, which can be predetermined based on, for example, the players' IDs, to eliminate collisions. In the exploitation sequence, each player plays the $M$ arms with the largest sample means, calculated using only observations from the exploration sequence, under either a prioritized or a fair sharing scheme. While collisions still occur in the exploitation sequence due to differences in the estimated arm rank across players caused by the randomness of the sample means, their effect on the total reward can be limited through a carefully designed cardinality of the exploration sequence.
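The collision-free exploration and shared exploitation described above can be illustrated with a short Python sketch. It is only one plausible reading of the scheme: the modular offset rule in `exploration_arm`, the rotation rule in `fair_share_arm`, and the 0-based player and arm indices are assumptions made for illustration and are not specified in the text.

```python
def exploration_arm(player_id, k, n_arms):
    """Arm played by `player_id` at the k-th exploration time (k = 0, 1, ...).

    Assumed offset rule: each player shifts the round-robin by its own ID,
    so players pick distinct arms whenever there are at most n_arms players.
    """
    return (k + player_id) % n_arms


def top_m_set(estimates, m_players):
    """The M arms with the largest estimates, sorted by (common) arm index."""
    order = sorted(range(len(estimates)), key=lambda n: estimates[n], reverse=True)
    return sorted(order[:m_players])


def fair_share_arm(player_id, j, estimates, m_players):
    """Arm played at the j-th exploitation time under an assumed round-robin
    fair sharing: players rotate through their own estimated top-M set."""
    best = top_m_set(estimates, m_players)
    return best[(j + player_id) % m_players]


if __name__ == "__main__":
    n_arms, m_players = 3, 2
    # Exploration: the two players never meet on the same arm.
    for k in range(6):
        print("explore", k, [exploration_arm(pid, k, n_arms) for pid in range(m_players)])
    # Exploitation: identical estimates give no collision ...
    agreed = [0.2, 0.9, 0.5]
    print("exploit", [fair_share_arm(pid, 0, agreed, m_players) for pid in range(m_players)])
    # ... while a player with a wrong top-M set may collide with the other.
    wrong = [0.6, 0.9, 0.5]
    print("exploit", [fair_share_arm(0, 0, agreed, m_players),
                      fair_share_arm(1, 0, wrong, m_players)])
```

Under these assumptions, a collision in the exploitation sequence requires that at least one player hold an incorrect estimate of the set of the $M$ best arms, which is the event the regret analysis needs to control.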
Note that under a prioritized scheme, each player needs to learn the specific rank of one or multiple of the $M$ best arms, and Theorem 5 can be applied. Under a fair sharing scheme, by contrast, a player only needs to learn the set of the $M$ best arms (as addressed in Theorem 6) and use the common arm index for fair sharing. An example based on a round-robin fair sharing scheme is illustrated in Fig. 2. We point out that under a fair sharing scheme, each player achieves the same average reward at the same rate.

Theorem 7: Under a decentralized policy based on DSEE, Theorems 1-4 hold for $R_T^{\pi_d}(F)$.

Proof: It is not difficult to see that the regret in the decentralized policy is completely determined by the learning efficiency of the $M$ best arms at each player. The key is to notice that during the exploitation sequence, collisions can only happen if at least one player incorrectly identifies the $M$ best arms. As a consequence, to analyze the regret in the exploitation sequence, we only need to consider such events. The proof is thus similar to those of the previous theorems.

[Figure 2 graphic: timelines of Player 1 and Player 2 over exploration and exploitation periods, showing the arm index played at each time and each player's estimated best arms.]

Fig. 2. An example of decentralized policies based on DSEE ($M = 2$, $N = 3$; the index of the selected arm at each time is given).

C. Combinatorial MAB with Dependent Arms

In the classical MAB formulation, arms are independent. Reward observations from one arm do not provide information about the quality of other arms. As a result, regret grows linearly with the number of arms.
However, many network optimization problems (such as optimal activation for online detection, shortest-path, minimum spanning, and dominating set under unknown and time-varying edge weights) lead to MAB with a large number of arms (e.g., the number of paths) dependent through a small number of unknowns (e.g., the number of edges). While the dependency across arms can be ignored in learning so that existing learning algorithms directly apply, such a naive approach often yields a regret growing exponentially with the problem size.

In [8], we have shown that the DSEE approach can be extended to such combinatorial MAB problems to achieve a regret that grows polynomially (rather than exponentially) with the problem size while maintaining its optimal logarithmic order with time. The basic idea is to construct a set of properly chosen basis functions of the underlying network and explore only the basis in the exploration sequence. The detailed regret analysis is rather involved and is given in a separate study [8].

D. Restless MAB under Unknown Markov Dynamics

The classical MAB formulation assumes an i.i.d. or a rested Markov reward model (see, e.g., [21], [22]), which applies only to systems without memory or systems whose dynamics only result from the player's action. In many practical applications such as target tracking and scheduling in queueing and communication networks, the system has memory and continues to evolve even when it is not engaged by the player. For example, channels continue to evolve even when they are not sensed; queues continue to grow due to new arrivals even when they are not served; targets continue to move even when they are not monitored. Such applications can be formulated as restless MAB, where the state of each arm continues to evolve (with memory) even when it is not played.
More specifically, the state of each arm changes according to an unknown Markovian transition rule when the arm is played and according to an arbitrary unknown random process when the arm is not played. In [7], we have extended the DSEE approach to the restless MAB under both the centralized (or equivalently, the single-player) setting and the decentralized setting with multiple distributed players. We have shown that the DSEE approach offers a logarithmic order of the so-called weak regret. The detailed derivation is rather involved and is given in a separate study [7].

V. CONCLUSION

The DSEE approach addresses the fundamental tradeoff between exploration and exploitation in MAB by separating, in time, the two often conflicting objectives. It has a clearly defined tunable parameter, the cardinality of the exploration sequence, which can be adjusted to handle any reward distributions and the lack of any prior knowledge on the reward models. Furthermore, the deterministic separation of exploration from exploitation allows easy extensions to variations of MAB, including decentralized MAB with multiple players and incomplete reward observations under collisions, MAB with unknown Markov dynamics, and combinatorial MAB with dependent arms that often arise in network optimization problems such as the shortest path, the minimum spanning, and the dominating set problems under unknown random weights.

In algorithm design, there is often a tension between performance and generality. The generality of the DSEE approach comes at a price of finite-time performance. Even though DSEE offers the optimal regret order for any distribution, simulations show that the leading constant in the regret offered by DSEE is often inferior to that of the classic policies proposed in [4]–[6] that target specific types of distributions.
Whether one can improve the finite-time performance of DSEE without sacrificing its generality is an interesting future research direction.

REFERENCES

[1] H. Robbins, "Some Aspects of the Sequential Design of Experiments," Bull. Amer. Math. Soc., vol. 58, no. 5, pp. 527-535, 1952.
[2] T. Santner and A. Tamhane, Design of Experiments: Ranking and Selection, CRC Press, 1984.
[3] A. Mahajan and D. Teneketzis, "Multi-armed Bandit Problems," in Foundations and Applications of Sensor Management, A. O. Hero III, D. A. Castanon, D. Cochran, and K. Kastella, Eds., Springer-Verlag, 2007.
[4] T. Lai and H. Robbins, "Asymptotically Efficient Adaptive Allocation Rules," Advances in Applied Mathematics, vol. 6, no. 1, pp. 4-22, 1985.
[5] R. Agrawal, "Sample Mean Based Index Policies with O(log n) Regret for the Multi-armed Bandit Problem," Advances in Applied Probability, vol. 27, pp. 1054-1078, 1995.
[6] P. Auer, N. Cesa-Bianchi, and P. Fischer, "Finite-time Analysis of the Multiarmed Bandit Problem," Machine Learning, vol. 47, pp. 235-256, 2002.
[7] H. Liu, K. Liu, and Q. Zhao, "Learning in A Changing World: Restless Multi-Armed Bandit with Unknown Dynamics," to appear in IEEE Transactions on Information Theory.
[8] K. Liu and Q. Zhao, "Adaptive Shortest-Path Routing under Unknown and Stochastically Varying Link States," Proc. of the 10th International Symposium on Modeling and Optimization in Mobile, Ad Hoc, and Wireless Networks (WiOpt), May 2012.
[9] S. Bubeck, N. Cesa-Bianchi, and G. Lugosi, "Bandits with heavy tail," arXiv:1209.1727 [stat.ML], September 2012.
[10] K. Liu and Q. Zhao, "Distributed Learning in Multi-Armed Bandit with Multiple Players," IEEE Transactions on Signal Processing, vol. 58, no. 11, pp. 5667-5681, Nov. 2010.
[11] A. Anandkumar, N. Michael, A. K. Tang, and A. Swami, "Distributed Algorithms for Learning and Cognitive Medium Access with Logarithmic Regret," IEEE JSAC on Advances in Cognitive Radio Networking and Communications, vol. 29, no. 4, pp. 731-745, Mar. 2011.
[12] Y. Gai and B. Krishnamachari, "Decentralized Online Learning Algorithms for Opportunistic Spectrum Access," IEEE Global Communications Conference (GLOBECOM 2011), Houston, USA, Dec. 2011.
[13] C. Tekin and M. Liu, "Performance and Convergence of Multiuser Online Learning," Proc. of International Conference on Game Theory for Networks (GameNets), Apr. 2011.
[14] D. Kalathil, N. Nayyar, and R. Jain, "Decentralized learning for multi-player multi-armed bandits," Proc. of IEEE Conference on Decision and Control (CDC), Dec. 2012.
[15] D. Kalathil, N. Nayyar, and R. Jain, "Decentralized learning for multi-player multi-armed bandits," submitted to IEEE Trans. on Information Theory, April 2012. Available at http://arxiv.org/abs/1206.3582.
[16] P. Chareka, O. Chareka, and S. Kennendy, "Locally Sub-Gaussian Random Variable and the Strong Law of Large Numbers," Atlantic Electronic Journal of Mathematics, vol. 1, no. 1, pp. 75-81, 2006.
[17] R. Agrawal, "The Continuum-Armed Bandit Problem," SIAM J. Control and Optimization, vol. 33, no. 6, pp. 1926-1951, November 1995.
[18] W. Hoeffding, "Probability Inequalities for Sums of Bounded Random Variables," Journal of the American Statistical Association, vol. 58, no. 301, pp. 13-30, March 1963.
[19] Y. Ren and H. Liang, "On the best constant in Marcinkiewicz-Zygmund inequality," Statistics and Probability Letters, vol. 53, pp. 227-233, June 2001.
[20] Y. Gai and B. Krishnamachari, "Decentralized Online Learning Algorithms for Opportunistic Spectrum Access," Technical Report, March 2011. Available at http://anrg.usc.edu/www/publications/papers/DMAB2011.pdf.
[21] V. Anantharam, P. Varaiya, and J. Walrand, "Asymptotically Efficient Allocation Rules for the Multiarmed Bandit Problem with Multiple Plays-Part II: Markovian Rewards," IEEE Transactions on Automatic Control, vol. AC-32, no. 11, pp. 977-982, Nov. 1987.
[22] C. Tekin and M. Liu, "Online Algorithms for the Multi-Armed Bandit Problem With Markovian Rewards," Proc. of Allerton Conference on Communications, Control, and Computing, Sep. 2010.
