Non-simplifying Graph Rewriting Termination

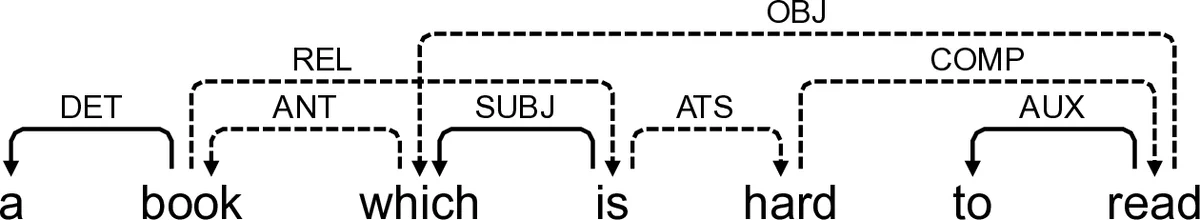

So far, a very large amount of work in Natural Language Processing (NLP) relies on trees as the core mathematical structure to represent linguistic information (e.g. in Chomsky’s work). However, some linguistic phenomena do not cope properly with trees. In a former paper, we showed the benefit of encoding linguistic structures by graphs and of using graph rewriting rules to compute on those structures. Justified by some linguistic considerations, graph rewriting is characterized by two features: first, there is no node creation along computations and second, there are non-local edge modifications. Under these hypotheses, we show that uniform termination is undecidable and that non-uniform termination is decidable. We describe two termination techniques based on weights and we give complexity bounds on the derivation length for these rewriting systems.

💡 Research Summary

The paper investigates graph rewriting systems (GRS) tailored to natural language processing (NLP) where the underlying linguistic structures are represented as labeled directed graphs rather than trees. The authors argue that many linguistic phenomena—such as shared subjects, cyclic dependencies, and long‑distance relations—cannot be adequately captured by tree‑based formalisms. Consequently, they propose a GRS framework that respects two linguistic constraints: (1) no node creation during rewriting (node‑preserving transformations) and (2) non‑local edge modifications realized through a dedicated “shift” operation that moves all incident edges from one node to another. Additionally, the framework supports negative conditions on patterns, allowing rules to fire only when certain edges are absent, which is essential for distinguishing, for example, passive from active constructions.

Formally, a graph G is a triple (N, ℓ, E) where N is a finite set of nodes, ℓ labels nodes with symbols from a finite set Σ_N, and E ⊆ N × Σ_E × N is a set of labeled edges with the restriction that for any pair of nodes there is at most one edge of a given label. A pattern P is a quadruple (B, \(\bar{E}\), \(\bar{I}\), \(\bar{O}\)) consisting of a basic graph B (the structural part) together with three sets of forbidden edges: internal forbidden edges \(\bar{E}\), forbidden incoming edges \(\bar{I}\) (edges from the context into the pattern), and forbidden outgoing edges \(\bar{O}\) (edges from the pattern to the context). A matching µ of P into a host graph G is an injective graph morphism from B to G that respects node labels and edge labels and additionally satisfies the three negative conditions (no forbidden internal edge appears, no forbidden incoming edge from outside the pattern, and no forbidden outgoing edge to the outside).
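The definitions above can be made concrete with a small sketch. The class names `Graph` and `Pattern`, the function `matchings`, and the encoding of forbidden incoming and outgoing edges as (label, node) and (node, label) pairs are assumptions made for illustration, not the paper's notation. The search is a naive enumeration of injective maps, meant only to show what a matching has to satisfy.

```python
from dataclasses import dataclass, field
from itertools import permutations


@dataclass
class Graph:
    labels: dict  # node -> node label (the map ℓ)
    edges: set    # set of (source, edge_label, target) triples


@dataclass
class Pattern:
    basic: Graph                                          # the basic graph B
    forbidden_internal: set = field(default_factory=set)  # (p, e, q), p and q in B
    forbidden_in: set = field(default_factory=set)        # (e, q): no e-edge into q from outside
    forbidden_out: set = field(default_factory=set)       # (p, e): no e-edge from p to outside


def matchings(pattern, graph):
    """Yield every injective map from pattern nodes to host nodes that matches."""
    p_nodes = list(pattern.basic.labels)
    for image in permutations(graph.labels, len(p_nodes)):
        mu = dict(zip(p_nodes, image))
        outside = set(graph.labels) - set(image)
        if any(pattern.basic.labels[p] != graph.labels[mu[p]] for p in p_nodes):
            continue  # node labels must agree
        if any((mu[s], e, mu[t]) not in graph.edges for s, e, t in pattern.basic.edges):
            continue  # every edge of B must be present in the host
        if any((mu[s], e, mu[t]) in graph.edges for s, e, t in pattern.forbidden_internal):
            continue  # a forbidden internal edge is present
        if any((x, e, mu[q]) in graph.edges for e, q in pattern.forbidden_in for x in outside):
            continue  # a forbidden incoming edge is present
        if any((mu[p], e, x) in graph.edges for p, e in pattern.forbidden_out for x in outside):
            continue  # a forbidden outgoing edge is present
        yield mu


# Hypothetical example: find verbs that receive no "aux" edge from outside the pattern.
host = Graph(labels={1: "V", 2: "V", 3: "AUX"}, edges={(3, "aux", 1)})
verb_without_aux = Pattern(basic=Graph(labels={"v": "V"}, edges=set()),
                           forbidden_in={("aux", "v")})
found = list(matchings(verb_without_aux, host))
```

Here node 1 is rejected because of its incoming `aux` edge and node 3 because of its label, so only node 2 matches.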

Given a matching, the host graph is partitioned into three node sets: the pattern image (nodes matched by µ), the crown (nodes outside the image that are directly adjacent to it), and the context (all remaining nodes). Edges are similarly partitioned into pattern edges, crown edges, context edges, and pattern‑glued edges (edges between pattern nodes that are not part of the basic pattern). This decomposition isolates the part of the graph that a rule may affect.
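A minimal sketch of this decomposition follows. The function name `decompose` and the exact edge classification are assumptions: an edge with exactly one endpoint in the pattern image is counted as a crown edge, and every edge with no endpoint in the image as a context edge, which is one plausible reading of the description above.

```python
def decompose(nodes, edges, image_nodes, image_edges):
    """Split host nodes and edges relative to the image of a matching."""
    image = set(image_nodes)
    # Crown: nodes outside the image that share an edge with an image node.
    crown = {n for s, _, t in edges for n in (s, t)
             if n not in image and (s in image or t in image)}
    context = set(nodes) - image - crown

    edge_classes = {"pattern": set(), "glued": set(), "crown": set(), "context": set()}
    for edge in edges:
        s, _, t = edge
        if s in image and t in image:
            # Inside the image: either matched by B or merely "glued" onto it.
            kind = "pattern" if edge in image_edges else "glued"
        elif s in image or t in image:
            kind = "crown"
        else:
            kind = "context"
        edge_classes[kind].add(edge)
    return image, crown, context, edge_classes


# Hypothetical host graph; the pattern matched nodes 1 and 2 and the edge (1, "a", 2).
nodes = {1, 2, 3, 4, 5}
edges = {(1, "a", 2), (2, "x", 1), (2, "b", 3), (3, "c", 4)}
image, crown, context, classes = decompose(nodes, edges, {1, 2}, {(1, "a", 2)})
```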

A rule R = ⟨P, c⟩ consists of a pattern P and a consistent sequence c of atomic commands. The command set includes:

- label(a,α): relabel the node identified by a with α,

- del_edge(a,e,b): delete an edge of label e between a and b,

- add_edge(a,e,b): add such an edge,

- del_node(a): delete node a (the paper mainly focuses on node‑preserving rules, so this command is rarely used),

- shift(a,b): move all incident edges of node a to node b (the key operation for non‑local edge modifications).

Consistency means that once a del_node(a) command appears, the identifier a does not occur in any later command, preventing references to a deleted node.

The semantics of a rule application is defined inductively on the command sequence. Each command updates the current graph, preserving the graph‑type constraints (e.g., at most one edge of a given label between two nodes). The result of applying rule R at matching µ to graph G is denoted G·µc.
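The command-by-command semantics can be sketched as a simple interpreter. The function name `apply_commands`, the tuple encoding of commands (written with underscores, e.g. `del_edge`), and the treatment of `shift` as redirecting every edge endpoint at `a` to `b` are assumptions based on the description above; the paper's precise definition of `shift` may restrict which edges are moved. Storing edges as a set of triples keeps at most one edge of a given label between two nodes automatically.

```python
def apply_commands(labels, edges, mu, commands):
    """Apply a command sequence at matching mu; returns new (labels, edges)."""
    labels, edges = dict(labels), set(edges)
    for name, *args in commands:
        if name == "label":
            a, alpha = args
            labels[mu[a]] = alpha
        elif name == "del_edge":
            a, e, b = args
            edges.discard((mu[a], e, mu[b]))
        elif name == "add_edge":
            a, e, b = args
            edges.add((mu[a], e, mu[b]))
        elif name == "del_node":
            (a,) = args
            node = mu[a]
            del labels[node]
            # Remove every edge that touches the deleted node.
            edges = {(s, e, t) for s, e, t in edges if node not in (s, t)}
        elif name == "shift":
            a, b = args
            src, dst = mu[a], mu[b]
            # Redirect every endpoint equal to src towards dst.
            edges = {(dst if s == src else s, e, dst if t == src else t)
                     for s, e, t in edges}
        else:
            raise ValueError(f"unknown command: {name}")
    return labels, edges


# Hypothetical rule: drop the auxiliary link, then let the verb inherit
# the auxiliary's remaining edges and relabel the verb.
labels = {1: "V", 2: "AUX", 3: "N"}
edges = {(2, "aux", 1), (2, "subj", 3)}
mu = {"v": 1, "aux": 2}
commands = [("del_edge", "aux", "aux", "v"),
            ("shift", "aux", "v"),
            ("label", "v", "V_fin")]
new_labels, new_edges = apply_commands(labels, edges, mu, commands)
```

The node set is unchanged by this rule; only labels and edges move, which is the node-preserving setting the paper studies.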

The central theoretical contribution concerns termination. The authors distinguish two notions:

- Uniform termination: does every possible derivation from any initial graph terminate?

- Non‑uniform termination: given a specific initial graph, does every derivation from that graph terminate?
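To give some intuition for the second notion, note that when no node is created, only finitely many graphs can be built over a fixed node set and finite label alphabets. The sketch below relies on that observation, which is an assumption of this illustration rather than a reproduction of the paper's proof: it explores the finite set of graphs reachable from one start graph and reports whether a cycle exists. The names `terminates_from`, `relabel`, and `both_ways` are hypothetical.

```python
def terminates_from(start, successors):
    """True iff no infinite derivation starts at `start`.

    Assumes the set of states reachable from `start` is finite and that
    states are hashable; an infinite derivation then implies a cycle.
    """
    GREY, BLACK = 1, 2           # GREY: on the current path, BLACK: fully explored
    colour = {}

    def visit(state):
        colour[state] = GREY
        for nxt in successors(state):
            seen = colour.get(nxt)
            if seen == GREY:
                return False     # back edge: a derivation can loop forever
            if seen is None and not visit(nxt):
                return False
        colour[state] = BLACK
        return True

    return visit(start)


def relabel(old, new):
    """Toy rule: rewrite one edge labelled `old` into an edge labelled `new`."""
    def step(state):
        return [(state - {edge}) | {(edge[0], new, edge[2])}
                for edge in state if edge[1] == old]
    return step


def both_ways(state):
    # Two rules that undo each other, so derivations can cycle.
    return relabel("x", "y")(state) + relabel("y", "x")(state)


start = frozenset({(1, "x", 2)})
one_way_ok = terminates_from(start, relabel("x", "y"))
cyclic_ok = terminates_from(start, both_ways)
```

This brute-force exploration is exponential in general, which is one reason static criteria such as weights are attractive in practice.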

They prove that uniform termination is undecidable for the class of graph rewriting systems under study. The proof reduces the halting problem of a Turing machine to the uniform termination problem by encoding machine configurations as graphs and simulating transitions with rewriting rules that respect the node‑preserving and shift constraints.

In contrast, non‑uniform termination is decidable. The authors present two static analysis techniques based on assigning weights to edge labels.

First technique (single integer weight): each edge label e receives an integer weight w(e). For a rule R, the sum of weights of edges deleted minus the sum of weights of edges added must be strictly positive (i.e., the total weight strictly decreases). If all rules satisfy this condition, any derivation must terminate: no node is created and two nodes carry at most one edge of a given label, so the total weight of a graph on a fixed node set is bounded below, and an integer quantity that is bounded below cannot decrease indefinitely. They show that under this condition the maximal length of a derivation is bounded by a quadratic function of the size of the initial graph (specifically O(|G|²)).
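The scalar criterion can be sketched as follows. Rules are summarized here by the labels of the edges they delete and add, which is a simplifying assumption (the effect of `shift` is not modelled), and the names `rule_decrease`, `all_rules_decrease`, and `crude_length_bound` are hypothetical. The bound is a rough estimate derived for this sketch, not the paper's proof: with n nodes there are at most n² ordered pairs, each carrying at most one edge per label, so the total weight stays within a range of width n² · Σ|w(e)|, and each step lowers it by at least 1.

```python
def rule_decrease(weights, deleted, added):
    """Weight removed minus weight added by one rule application."""
    return sum(weights[e] for e in deleted) - sum(weights[e] for e in added)


def all_rules_decrease(weights, rules):
    """True iff every rule strictly decreases the total edge weight."""
    return all(rule_decrease(weights, d, a) > 0 for d, a in rules)


def crude_length_bound(weights, n_nodes):
    """Upper bound on derivation length for a graph with n_nodes nodes.

    The total weight lies between n^2 * (sum of negative weights) and
    n^2 * (sum of positive weights); every step decreases it by >= 1.
    """
    return n_nodes ** 2 * sum(abs(w) for w in weights.values())


# Hypothetical weights and rules: (deleted labels, added labels).
weights = {"a": 2, "b": 1}
rules = [(["a"], ["b"]),   # replace an a-edge by a b-edge: decrease 1
         (["b"], [])]      # delete a b-edge: decrease 1
ok = all_rules_decrease(weights, rules)
bound = crude_length_bound(weights, n_nodes=3)
```

The bound grows with the square of the number of nodes, in line with the quadratic bound stated above.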

Second technique (vector of integer weights): each label receives a d‑dimensional integer vector w(e) ∈ ℤ^d. The rule must be decreasing in the lexicographic order: there exists a dimension i such that the i‑th component strictly decreases while all earlier components are unchanged, and later components are non‑increasing. This more expressive scheme can capture systems where a single scalar weight is insufficient. The authors prove that if such a weight vector exists for all rules, termination follows, and the derivation length is bounded by a polynomial whose degree depends on d and the maximal absolute weight.
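A small sketch of the vector criterion, implementing the condition as worded above: the first changed component must decrease, and the later ones must not increase. Setting the hypothetical flag `tail_nonincreasing=False` gives the plain lexicographic comparison, which leaves later components unconstrained. As in the scalar case, rules are summarized by the edge labels they delete and add; that summary and the function names are assumptions of this illustration.

```python
def net_change(weights, deleted, added):
    """Component-wise change of the total weight vector caused by one rule."""
    dim = len(next(iter(weights.values())))
    change = [0] * dim
    for label in added:
        for i, x in enumerate(weights[label]):
            change[i] += x
    for label in deleted:
        for i, x in enumerate(weights[label]):
            change[i] -= x
    return tuple(change)


def is_decreasing(change, tail_nonincreasing=True):
    """Check the decrease condition on a net change vector."""
    for i, x in enumerate(change):
        if x > 0:
            return False          # first changed component went up
        if x < 0:
            # Earlier components are all zero at this point.
            tail = change[i + 1:]
            return not tail_nonincreasing or all(y <= 0 for y in tail)
    return False                  # nothing changed: no strict decrease


# Hypothetical two-dimensional weights.
weights = {"a": (1, 0), "b": (0, 1), "c": (0, 0)}
a_to_b = net_change(weights, deleted=["a"], added=["b"])
b_to_c = net_change(weights, deleted=["b"], added=["c"])
```

The rule turning an `a` edge into a `b` edge lowers the first component but raises the second, so it passes the plain lexicographic test and fails the stricter wording.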

Both techniques are automatically computable: given a finite set of rules, one can formulate a system of linear (or linear‑integer) inequalities and solve it using standard linear programming or integer programming tools. Hence the termination analysis can be integrated into a tool chain for NLP pipelines.
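To illustrate what "solving the inequalities" means, the sketch below searches for scalar weights satisfying one inequality per rule: weight deleted minus weight added is at least 1. It deliberately swaps the linear or integer programming solver mentioned above for a brute-force search over small non-negative integers, to stay dependency-free; the function name `find_weights`, the bound `max_weight`, and the rule encoding are assumptions of this illustration.

```python
from itertools import product


def find_weights(labels, rules, max_weight=3):
    """Return a dict label -> weight making every rule decreasing, or None.

    Each rule (deleted, added) contributes the inequality
        sum(w[e] for e in deleted) - sum(w[e] for e in added) >= 1.
    Brute force over weights in 0..max_weight stands in for an LP/ILP solver.
    """
    for values in product(range(max_weight + 1), repeat=len(labels)):
        w = dict(zip(labels, values))
        if all(sum(w[e] for e in d) - sum(w[e] for e in a) >= 1 for d, a in rules):
            return w
    return None


# Hypothetical rule sets: (deleted labels, added labels).
terminating = [(["a"], ["b"]), (["b"], [])]
looping = [(["a"], ["b"]), (["b"], ["a"])]
solution = find_weights(["a", "b"], terminating)
no_solution = find_weights(["a", "b"], looping)
```

Failure to find weights does not prove non-termination; it only means this particular criterion, within the searched range, does not apply.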

The paper also discusses practical motivations. In their own NLP application, they have several hundred graph rewriting rules organized into modules. Each module is verified for termination using the weight‑based analysis before being deployed. Termination is not only a safety property (preventing infinite loops) but also a prerequisite for applying Newman’s Lemma to prove confluence, which in turn guarantees that the order of rule applications does not affect the final semantic representation.

Finally, the authors outline future work: extending the framework with a categorical semantics (e.g., using double‑pushout or sesqui‑pushout approaches), improving the completeness of the weight‑based methods, and optimizing the implementation of pattern matching and shift operations for large‑scale graph rewriting in NLP. The paper demonstrates that, despite the higher expressive power and apparent computational overhead of graph rewriting compared to term rewriting, careful restriction of operations and static analysis make it a viable and powerful tool for linguistic computation.
