Gravitational Wave Astronomy: Needle in a Haystack

A world-wide array of highly sensitive interferometers stands poised to usher in a new era in astronomy with the first direct detection of gravitational waves. The data from these instruments will provide a unique perspective on extreme astrophysical phenomena such as neutron stars and black holes, and will allow us to test Einstein’s theory of gravity in the strong field, dynamical regime. To fully realize these goals we need to solve some challenging problems in signal processing and inference, such as finding rare and weak signals that are buried in non-stationary and non-Gaussian instrument noise, dealing with high-dimensional model spaces, and locating what are often extremely tight concentrations of posterior mass within the prior volume. Gravitational wave detection using space-based detectors and Pulsar Timing Arrays brings with it the additional challenge of having to isolate individual signals that overlap one another in both time and frequency. Promising solutions to these problems will be discussed, along with some of the challenges that remain.

💡 Research Summary

The paper provides a comprehensive overview of the current and emerging challenges in gravitational‑wave (GW) astronomy, focusing on the signal‑processing and statistical‑inference problems that must be solved to fully exploit the capabilities of the worldwide network of ground‑based interferometers (LIGO, Virgo, KAGRA) as well as future space‑based detectors (LISA) and pulsar‑timing arrays (PTAs). It begins by emphasizing that the direct detection of GWs will open an unprecedented window onto extreme astrophysical objects such as neutron‑star binaries and black‑hole mergers, and will allow stringent tests of general relativity in the strong‑field, dynamical regime.

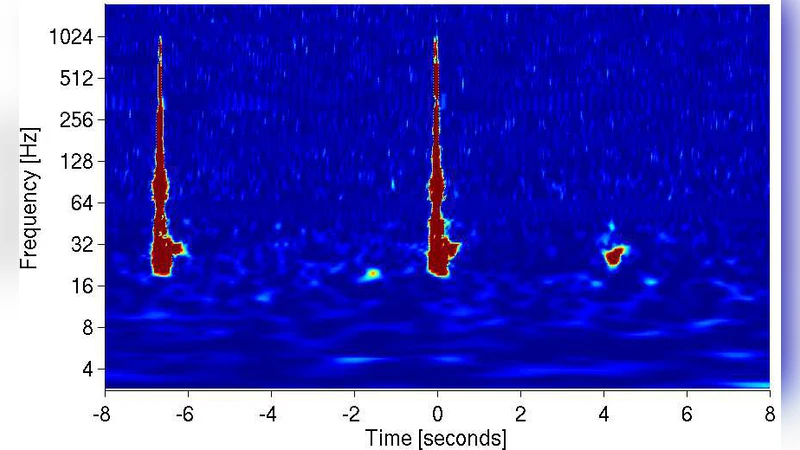

A central theme is that GW signals are extremely weak and rare, often buried in instrument noise that is neither stationary nor Gaussian. The authors review traditional matched‑filtering techniques, pointing out their reliance on the assumption of stationary, Gaussian noise, and then describe more robust alternatives. These include time‑frequency methods based on wavelet transforms, adaptive estimation of the noise power spectral density, and higher‑order statistical measures that capture non‑Gaussian “glitches.” Continuously updating the noise model makes the signal‑to‑noise ratio (SNR) estimates more reliable and reduces false‑alarm rates.
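To make the matched-filtering step concrete, here is a minimal sketch in Python. It is not the authors' pipeline: the function name `matched_filter_snr`, the cutoff `f_low`, the toy chirp template, and the white-noise data are all assumptions chosen for illustration. The noise power spectral density is estimated from the data with Welch's method, so re-running the function on successive data segments is one simple way to track a slowly drifting noise floor.

```python
import numpy as np
from scipy.signal import welch


def matched_filter_snr(data, template, fs, f_low=20.0, nperseg=1024):
    """Matched-filter SNR time series of `template` in `data` (hypothetical helper).

    The one-sided noise PSD is estimated from `data` itself with Welch's method.
    """
    n = len(data)
    freqs = np.fft.rfftfreq(n, d=1.0 / fs)

    # Welch PSD estimate, interpolated onto the full-length FFT frequency grid.
    f_psd, psd = welch(data, fs=fs, nperseg=nperseg)
    psd = np.interp(freqs, f_psd, psd)

    # Discrete approximations to the continuous Fourier transforms.
    d_f = np.fft.rfft(data) / fs
    h_f = np.fft.rfft(template, n) / fs  # template is zero-padded to length n

    # Inverse-PSD weights, set to zero below the low-frequency cutoff.
    weight = np.zeros_like(psd)
    band = freqs >= f_low
    weight[band] = 1.0 / psd[band]

    # z(t) = 4 Re int d(f) h*(f) / S(f) exp(2 pi i f t) df, for all lags at once.
    z = 2.0 * fs * np.fft.irfft(d_f * np.conj(h_f) * weight, n)
    # Template norm <h|h>, computed with the same discretization.
    sigma2 = 2.0 * fs * np.fft.irfft(np.abs(h_f) ** 2 * weight, n)[0]
    return z / np.sqrt(sigma2)


# Toy data (assumption): a tapered linear chirp, 50 to 300 Hz, in white noise.
rng = np.random.default_rng(1)
fs, n, shift = 1024, 16 * 1024, 5000
tt = np.arange(fs) / fs  # one second of samples
chirp = np.hanning(tt.size) * np.sin(2 * np.pi * (50.0 * tt + 0.5 * 250.0 * tt**2))

data = rng.standard_normal(n)
data[shift:shift + chirp.size] += chirp  # inject the signal at a known offset

snr = matched_filter_snr(data, chirp, fs)
peak = int(np.argmax(snr))
print(f"peak lag = {peak} samples, peak SNR = {snr.max():.1f}")
```

The sketch still assumes the noise is stationary and Gaussian within each analyzed segment, which is exactly the limitation the summary points out; glitches would have to be handled by the more robust methods listed above.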

The second major difficulty is the high dimensionality of the parameter space. A typical compact‑binary coalescence waveform depends on 15–20 physical parameters (masses, spins, sky location, distance, orbital phase, etc.). Sampling the posterior distribution in such a space is computationally expensive because each likelihood evaluation requires generating a full waveform. The paper surveys state‑of‑the‑art Bayesian inference tools: parallel tempering Markov‑Chain Monte Carlo (PT‑MCMC), nested sampling, and variational Bayesian approximations. A novel hybrid approach is highlighted, where a deep neural network trained on a large bank of simulated waveforms provides a fast surrogate for the likelihood, allowing the sampler to focus on the most promising regions. This “learn‑and‑sample” strategy dramatically reduces the number of costly waveform evaluations and makes near‑real‑time inference feasible.
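Of the samplers named above, parallel tempering is the easiest to illustrate in a few lines. The following is a minimal sketch under stated assumptions, not the implementation discussed in the paper: the two-dimensional bimodal `log_like`, the uniform `log_prior`, the temperature ladder, and the step size are all hypothetical stand-ins for a real waveform likelihood. Chains at high temperature see a flattened likelihood and cross between modes easily; swaps between adjacent temperatures pass those jumps down to the cold chain, which samples the actual posterior.

```python
import numpy as np


def log_like(theta):
    """Toy bimodal log-likelihood: narrow Gaussians at (2, 2) and (-2, -2)."""
    a = -0.5 * np.sum((theta - 2.0) ** 2) / 0.2**2
    b = -0.5 * np.sum((theta + 2.0) ** 2) / 0.2**2
    return np.logaddexp(a, b)


def log_prior(theta):
    """Uniform prior on the square [-5, 5]^2."""
    return 0.0 if np.all(np.abs(theta) < 5.0) else -np.inf


def parallel_tempering(log_like, log_prior, x0, temps, n_steps, step, rng):
    """Return the samples of the coldest chain (temps[0] should be 1)."""
    betas = 1.0 / np.asarray(temps, dtype=float)
    n_chains, dim = len(betas), len(x0)
    x = np.tile(np.asarray(x0, dtype=float), (n_chains, 1))
    logl = np.array([log_like(xi) for xi in x])
    logp = np.array([log_prior(xi) for xi in x])
    cold = np.empty((n_steps, dim))

    for it in range(n_steps):
        # Metropolis update within each chain; hotter chains take larger steps.
        for i in range(n_chains):
            prop = x[i] + step / np.sqrt(betas[i]) * rng.standard_normal(dim)
            lp = log_prior(prop)
            if not np.isfinite(lp):
                continue  # proposal outside the prior support: reject
            ll = log_like(prop)
            if np.log(rng.uniform()) < betas[i] * (ll - logl[i]) + lp - logp[i]:
                x[i], logl[i], logp[i] = prop, ll, lp

        # Propose swaps between every pair of adjacent temperatures.
        for i in range(n_chains - 1):
            log_swap = (betas[i] - betas[i + 1]) * (logl[i + 1] - logl[i])
            if np.log(rng.uniform()) < log_swap:
                x[[i, i + 1]] = x[[i + 1, i]]
                logl[[i, i + 1]] = logl[[i + 1, i]]
                logp[[i, i + 1]] = logp[[i + 1, i]]

        cold[it] = x[0]
    return cold


rng = np.random.default_rng(0)
temps = np.geomspace(1.0, 50.0, 8)  # geometric temperature ladder
cold = parallel_tempering(log_like, log_prior, [2.0, 2.0], temps, 10000, 0.2, rng)

# Fraction of post-burn-in cold-chain samples found in the mode at (-2, -2).
frac = np.mean(cold[1000:, 0] < 0.0)
print(f"fraction of cold-chain samples in the second mode: {frac:.2f}")
```

A single Metropolis chain started at (2, 2) with the same step size would essentially never reach the second mode, which is the "tight concentration of posterior mass" problem in miniature. In a realistic analysis each `log_like` call would require a waveform evaluation, which is where the cost discussed above comes from.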

The third challenge addressed is signal overlap, which becomes acute for space‑based detectors and PTAs. In the millihertz band, LISA will observe thousands of simultaneously active binary systems, many of which overlap in both time and frequency. PTAs, which monitor pulse‑arrival times over decades, also contend with a superposition of low‑frequency GW backgrounds and individual sources. To disentangle these, the authors propose a Bayesian blind‑source‑separation framework that combines independent component analysis (ICA) with hierarchical models for the number of sources. Non‑parametric priors such as Dirichlet‑process mixtures allow the algorithm to infer the appropriate model complexity directly from the data, while sparse Bayesian regression enforces parsimony, preventing over‑fitting.
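The inference problem behind source separation can be written compactly. As a sketch, in notation assumed here rather than taken from the paper: let $d$ be the data, $N$ the number of sources, $h(\theta_k)$ the waveform of source $k$, and $\langle \cdot \mid \cdot \rangle$ the noise-weighted inner product, with the noise taken to be stationary and Gaussian for simplicity.

$$
p\big(d \mid \{\theta_k\}_{k=1}^{N}, N\big) \propto
\exp\!\left[-\frac{1}{2}
\Big\langle d - \sum_{k=1}^{N} h(\theta_k) \,\Big|\, d - \sum_{k=1}^{N} h(\theta_k) \Big\rangle\right],
\qquad
p(N \mid d) \propto p(N) \int p\big(d \mid \{\theta_k\}, N\big)\,
p\big(\{\theta_k\} \mid N\big)\, d\theta_1 \cdots d\theta_N .
$$

Because all sources enter the residual jointly, the parameters of overlapping sources cannot be inferred one at a time. The integral over the source parameters also penalizes any extra source that does not improve the fit enough to offset the added prior volume, which is how the data themselves can set the model complexity.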

Beyond algorithmic advances, the paper discusses the need for an end‑to‑end, low‑latency detection pipeline. Current pipelines operate offline, taking hours to days to produce alerts. By integrating GPU‑accelerated convolutional neural‑network triggers with streaming Bayesian updates, the authors outline a path toward sub‑second alert generation, which is crucial for multimessenger follow‑up. They also stress the importance of distributed inference across the detector network, enabling consistent global posterior estimates and optimal use of heterogeneous data streams.

Finally, the authors enumerate open problems: (1) developing physics‑based models for non‑stationary, non‑Gaussian noise; (2) further improving sampling efficiency in ultra‑high‑dimensional spaces; (3) robust model selection for overlapping sources; and (4) scaling the computational infrastructure to handle the data deluge expected from next‑generation observatories. They conclude that the convergence of high‑performance computing, machine learning, and advanced statistical methods will be the key to unlocking the full scientific potential of gravitational‑wave astronomy.