Transfer Topic Modeling with Ease and Scalability

The increasing volume of short texts generated on social media sites, such as Twitter or Facebook, creates a great demand for effective and efficient topic modeling approaches. While latent Dirichlet allocation (LDA) can be applied, it is not optimal…

Authors: Jeon-Hyung Kang, Jun Ma, Yan Liu

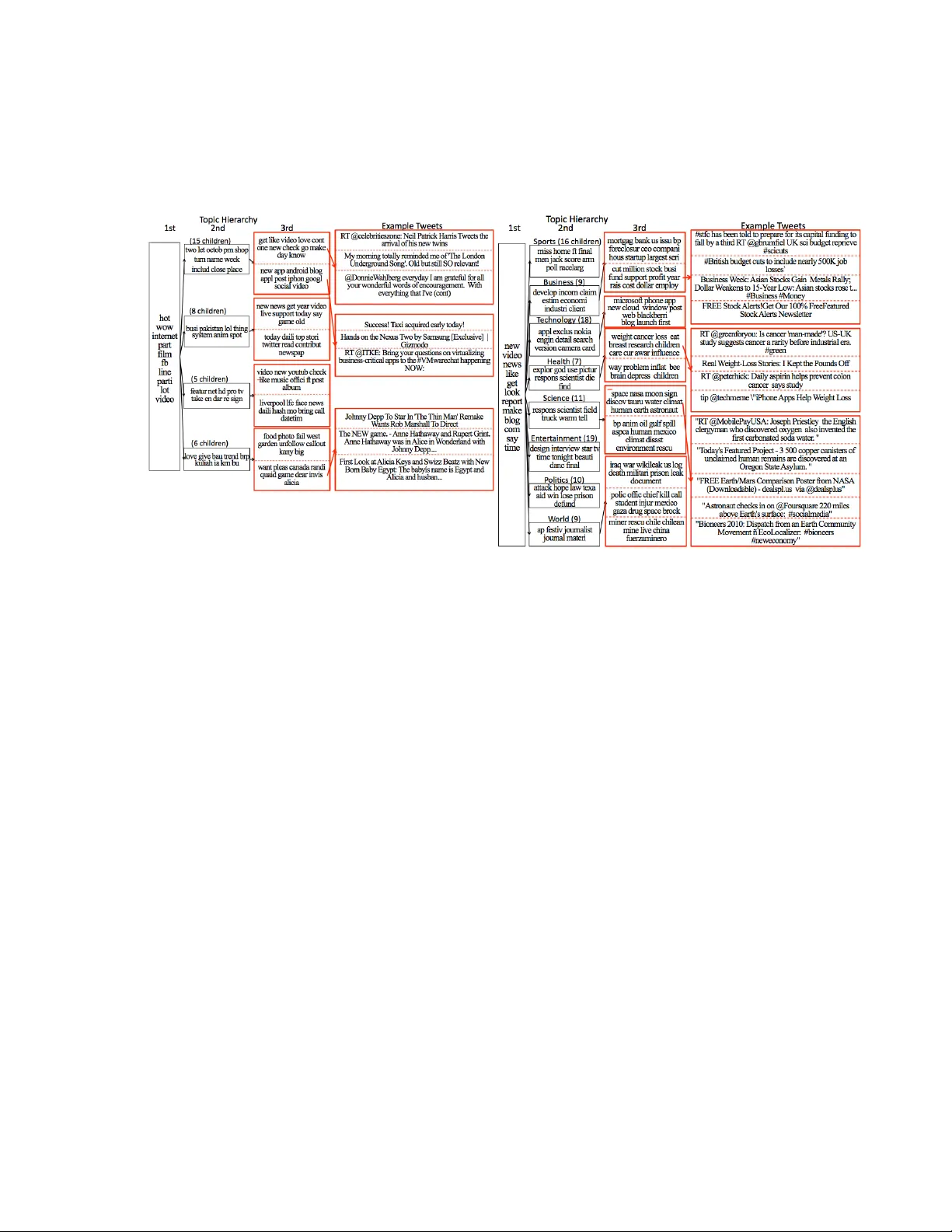

T ransfer T opic Mo deling with Ease and Scalabilit y Jeon-Hyung Kang, Jun Ma, Y an Liu Computer Science Departmen t Univ ersity of Southern California Los Angeles, CA 90089 jeonhyuk,junma,yanliu@usc.edu Abstract The increasing v olume of short texts generated on so- cial media sites, such as Twitter or F acebo ok, creates a great demand for effectiv e and efficient topic mod- eling approac hes. While laten t Diric hlet allocation (LD A) can b e applied, it is not optimal due to its w eakness in handling short texts with fast-changing topics and scalabilit y concerns. In this paper, we pro- p ose a transfer learning approach that utilizes abun- dan t labeled documents from other domains (such as Y aho o! News or Wikip edia) to improv e topic mo d- eling, with b etter model fitting and result interpre- tation. Sp ecifically , w e dev elop T r ansfer Hier ar chic al LD A (thLD A) mo del, which incorp orates the lab el information from other domains via informative pri- ors. In addition, w e develop a parallel implementa- tion of our mo del for large-scale applications. W e demonstrate the effectiv eness of our thLDA model on b oth a microblogging dataset and standard text collections including AP and R CV1 datasets. 1 In tro duction So cial-media w ebsites, suc h as Twitter and F aceb ook, ha ve b ecome a no vel real-time channel for p eople to share information on a broad range of sub jects. With millions of messages and up dates posted daily , there is an obvious need for effective wa ys to organize the data tsunami. Latent Diric hlet Allo cation (LDA), as a Ba yesian hierarc hical mo del to capture the text gen- eration pro cess [5], has b een sho wn to b e very pow er- ful in mo deling text corpus. How ev er, several ma jor c haracteristics distinguish so cial media data from tra- ditional text corpus and raise great challenges to the LD A mo del. First, each p ost or tw eet is limited to a certain n umber of characters, and as a result abbre- viated syn tax is often introduced. Second, the texts are very noisy , with broad topics and rep etitiv e, less meaningful conten t. Third, the input data t ypically arriv es in high-volume streams. It is kno wn that the topic outputs by LDA com- pletely depend on the w ord distributions in the train- ing do cumen ts. Therefore, LDA results on blog and microblogging data would naturally b e p o or, with a cluster of words that co-o ccur in man y do cuments without actual seman tic meanings [18, 24]. In tu- itiv ely , the generative pro cess of LDA mo del can be guided by the do cumen t labels so that the learned hidden topics can b e more meaningful. Therefore, in [4, 12, 21], discriminativ e training of LDA has b een explored and in particular, [20] applied lab ele d LD A (lLD A) to analyze Twitter data b y using hash tags as lab els. This approach addresses our c hallenges to a certain extent. Even though sup ervised LDA giv es more comprehensible topics than LDA, it has the general limitation of the LDA framework in that eac h do cumen t is represen ted b y a single topic dis- tribution. This makes comparing do cumen ts diffi- cult when w e hav e sparse, noisy do cuments with con- stan tly c hanging topics. F urthermore, it is very dif- ficult to obtain the labels (e.g. hash tags) for con- tin uously gro wing text collections like so cial media, not to mention the fact that on Twitter application, man y hashtags refer to very broad topics (e.g. “#in- 1 ternet”, “#sales”) and therefore could even b e mis- leading when used to guide the topic mo dels. [3, 2] prop osed hierarchical LDA model (hLD A), that gen- erates topic hierarchies from an infinite num b er of topics, whic h are represented b y a topic tree, and eac h do cument is assigned a path from tree ro ot to one of the tree lea ves. hLD A has the capabilit y to enco de the semantic topic hierarchies, but when fed with noisy and sparse data suc h as the user generated short tw eet messages, it is not robust and its results lac k meaningful interpretations [18, 24]. Recen tly , hLDA has b een studied in [9] to sum- marize m ultiple do cumen ts. They built a t wo-lev el learning mo del using hLDA mo del to disco ver simi- lar sentences to the given summary sentences using hidden topic distributions of the do cumen t. The dis- tance dep endent CRP [6] studied several t yp es of de- ca y: windo w decay , exp onential deca y , and logistic deca y , where customers’ table assignmen ts depend on external distances b etw een them. Our pap er aims to bridge the gap b et ween short, noisy texts and their actual generation process without additional lab eling efforts. A t the same time, we dev elop parallel algo- rithms to speed up inference so that our mo del can b e applicable to large-scale applications. In this pap er, we prop ose a simple solution mo del, transfer hierarhicial LDA (thLDA) mo del. The basic idea is extracting human knowledge on topics from source domain corpus in the form of representativ e w ords that are consistently meaning across con texts or media and enco de them as priors to learn the topic hierarc hies in target domain corpus of the hLDA mo del. T o extract source domain corpus, thLDA mo del utilizes related lab eled do cumen ts from other sources (suc h as Y aho o! news or so cially tagged w eb pages) and to enco de the laels, we mo dified a nested Chinese Restaurant Pro cess (nCRP) as guidance for inferring latent topics of target domains. W e base our mo del on hierarc hical LDA (hLDA) [3, 2] mainly b ecause hLDA has the natural capability to enco de the semantic topic hierarchies with document clus- ters. In addition, recent study suggests that hierar- c hical Dirichlet process pro vides an effective explana- tion mo del for human transfer learning [8]. The rest of the pap er is organized as follo ws: In Section 2, we describ e the prop osed metho dology in detail and discuss its relationship with existing work. In Section 3, w e demonstrate the effectiveness of our mo del, and summarize the work and discuss future directions in Section 4. 2 Related W ork 2.1 T opic Mo dels Laten t Dirichlet Allo cation (LDA) [5] has gained p op- ularit y for automatically extracting a represen tation of corpus. LD A is a completely unsup ervised mo del that views do cumen ts as a mixture of probabilistic topics that is represented as a K dimensional ran- dom v ariable θ . In generative story , each do cumen t is generated b y first picking a topic distribution θ from the Dirichlet prior and then use each do cumen t’s topic distribution θ to sample latent topic v ariables z i . LDA makes the assumption that eac h word is generated from one topic where z i is a latent v ariable indicating the hidden topic assignment for word w i . The probabilit y of choosing a word w i under topic z i , p ( w i | z i , β ), dep ends on different do cuments. LDA is not appropriate for lab eled corp ora, so it has b een extended in several wa ys to incorp orate a sup ervised lab el set into its learning pro cess. In [21], Ramage et al. introduced L ab ele d LDA, a nov el mo del that use m ulti-lab eled corp ora to address the credit assign- men t problem. Unlike LDA, L ab ele d LD A constrains topics of do cumen ts to a given lab el set. Instead of using symmetric Dirichlet distribution with a single h yp er-parameter α as a Dirichlet prior on the topic distribution θ ( d ) , it restricted θ ( d ) to only the topics that correspond to observed lab els Λ ( d ) . In [3, 2], the authors proposed a sto chastic pro cesses, where the Ba yesian inference are no longer restricted to fi- nite dimensional spaces. Unlike LD A, it do es not re- strict the giv en n umber of topics and allows arbitrary breadth and depth of topic hierarchies. The topics in hLD A mo del are represented by a topic tree, and each do cumen t is assigned a path from tree ro ot to one of the tree leav es. Eac h do cumen t is generated by first sampling a path along the topic tree, and then sam- pling topic z i among all the topic no des in the path for eac h word w i . The authors proposed a nested 2 Chinese restauran t pro cess prior on path selection. p ( occupied table i | pr ev ious customer s ) = n i γ + n − 1 (1) p ( unoccupied table | pr ev ious customer s ) = γ γ + n − 1 (2) The nested Chinese restaurant pro cess implies that the first customer sits at the first table and the nth customer sits at a table i whic h is drawn from the ab o ve equations. When a path of depth d is sam- pled, there are d n umber of topic no des along the path, and the do cument sample the topic z i among all topic no des in the path for each w ord w i based on GEM distribution. The experiment result of hLDA sho ws that the ab ov e document generating story can actually enco de the semantic topic hierarchies. 2.2 T ransfer Learning T ransfer learning has b een extensiv ely studied in the past decade to leverage the data (either lab eled or un- lab eled) from one task (or domain) to help another [17]. In summary , there are tw o t yp es of transfer learning problems, i.e. shared lab el space or shared feature space. F or shared lab el space [1], the main ob jective is to transfer the lab el information b etw een observ ations from differen t distributions (i.e. domain adaptation) or uncov er the relations b et ween m ulti- ple lab els for b etter prediction (i.e. multi-task learn- ing); for shared feature space, one of the most rep- resen tative w orks is self-taugh t learning [19], which uses sparse coding [13] to construct higher-level fea- tures via abundan t unlab eled data to help improv e the p erformance of the classification task with a lim- ited n umber of lab eled examples. As an unsup ervised generativ e model, LDA p os- sesses the adv antage of mo deling the generative pro- cedure of the whole dataset by establishing the rela- tionships b etw een the do cuments and their asso ciated hidden topics, and b etw een the hidden topics and the concrete w ords, in an un-sup ervised wa y . In tu- itiv ely , transfer learning on this generative model can b e realized in tw o w a ys: one is utilizing the do cument lab els from the other domain (with the assumption that the target domain and source domain share the same lab el space) so that the learned hidden topics can be m uch more meaningful, and the other is utiliz- ing the do cumen ts from other domains to enrich the con tents so that we can learn a more robust shared laten t space. In [4, 12, 21], the authors prop osed the discrimina- tiv e LDA, whic h adds sup ervised information to the original LDA mo del, and guides the generativ e pro- cess of do cumen ts by using the labels. These methods clearly demonstrate the adv an tages of the discrimina- tiv e training of generativ e mo dels. How ev er, this is differen t from transfer learning since they simply uti- lize the lab eled do cuments in the same domain to help build the generativ e mo del. But the same motiv ation can b e applied to transfer learning, in which the su- p ervised information is used to guide the generation of the common latent semantic space shared by b oth the source domain and the target domain. T rans- ferring information from source to target domain is extremely desirable in so cial media analysis, in whic h the target domain example features are very sparse, with a lot of missing features. Based on the shared common latent semantic space, the missing features can b e recov ered to some extent, which is helpful in b etter representing these examples. 3 T ransfer T opic Mo dels Con tent analysis on so cial media data is a c halleng- ing problem due to its unique language c haracteris- tics, which preven ts standard text mining to ols from b eing used to their full p otential. Sev eral mo dels ha ve b een developed to o vercome this barrier b y ag- gregating many messages [11], applying temp oral at- tributes [15, 22, 23], examining entities [15] or ap- plying manual annotation to guide the topic genera- tion [20]. The main motiv ation of our work is that previous unsup ervised approaches for analyzing so- cial media data fail to ac hieve high accuracy due to the noise and sparseness, while sup ervised approaches require annotated data, whic h often requires a sig- nifican t amount of human effort. Even though hi- 3 er ar chic al LD A has the natural capability to enco de the seman tic topic hierarchies with clusters of similar do cumen ts based on that hierarchies, it still cannot pro vide robust result for noise and sparseness (Fig 2(a)) b ecause of the exchangeabilit y assumption in Diric hlet Pro cess mixture [6] . Exchangeabilit y is es- sen tial for CRP mixture to b e equiv alent to a DP mixture; thus customers are exchangeable. Ho wev er this assumption is not reasonable when it is applied to microblogging dataset. Based on our exp erimen t, when the data set is noisy and sparse, unrelated w ords tends to cluster with other word b ecause of co-o ccurrences.(Fig 2(a)) 3.1 Kno wledge Extraction from Source Domain Consider the task of modeling short and sparse docu- men ts based on sp ecific source domain structure. F or example, a user who is interested in browsing do cu- men ts with particular categories of topics might pre- fer to see clusters of other do cumen ts based on his category . Clustering target domain b y transferring his topic hierarc hy category in the source domain, w e could pro duce b etter document clusters and topic hi- erarc hies by lev eraging the context information from the source domain. User generated categories c an b e found from v ar- ious source domains: Twitter list, Flickr collection and set, Del.icio.us hierarc hy and Wikipedia or News categories. W e transfer source domain knowledge to target domain do cumen ts by assigning a prior path, sequence of assigned topic no des from ro ot to leaf no de. The prior paths of the do cuments can b e used to iden tify whether tw o target do cuments are similar or not, so that our mo del could group similar do cu- men ts cluster together while keep different do cumen ts separate based on the lab el. T o lab el each do cumen t’s prior path on the source domain hierarch y , w e gen- erate word vectors of no des in the source domain hi- erarc hy . Each lab el is generated by measuring the similarit y betw een source domain topic hierarch y and do cumen t. There are many wa ys to measure similar- it y b etw een tw o vectors: cosine similarit y , euclidean distance, Jaccard index and so on. F or simplicit y , in this pap er, we label our prior knowledge of the (a) (b) Figure 1: (a)Y aho o! news home page and the cate- gories of news (b) The gr aphic al mo del r epr esentation of t r ansfer Hier ar chic al LDA with a mo difie d-neste d CRP prior target do cument b y computing cosine similarity b e- t ween the target do cument and a no de in the source domain hierarch y . W e start from the ro ot no de of the hierarch y and keep assigning only the most sim- ilar topic no de at eac h level while only considering c hild no des of currently assigned topic no des as next lev el candidates. 3.2 T ransfer Hierarchical LD A W e incorp orated the lab el hierarc hies into the mo del b y c hanging the prior of the path in hLDA in a w ay that the path selection fa vors the ones in the existing lab el hierarc hies. Similar to original hLDA, thLDA mo dels each do cument, a mixture of topics on the path, and generates each word from one of the topics. In the original hLDA mo del, the path prior is nested Chinese R estaur ant Pr o c ess (nCRP), where the prob- abilit y of choosing one topic in one topic la yer de- p ends on the n umber of documents already assigned to each no de in that lay er. That is, no des assigned with more documents will hav e a higher probability 4 of generating new do cumen ts. Ho wev er, thLD A in- corp orates supervision by mo difying path prior using follo wing equation: p ( table i w ithout pr ior | pr ev ious customer s ) = n i γ + k λ + n (3) p ( table i w ith pr ior | pr ev ious customer s ) = n i + λ γ + k λ + n (4) p ( unoccupied table | pr ev ious customer s ) = γ γ + k λ + n (5) where k is the total n umber of lab els for the do c- umen t, d is the total n umber of do cumen ts, and λ is the weigh ted indicator v ariable that con trols the strength of a prior added into the original nested Chi- nese restaurant pro cess. The graphical mo del of the new mo del is shown in Fig 1 (b). Whether the cus- tomer sits at a sp ecific table is not only related to ho w many customers are currently sitting at table i ( n i ) and ho w often a new customer chooses a new table ( γ ), but is also related to how close the current customer is to customers at table i ( λ ). Note that, in this work we use same λ for different topic for simplicit y; how ev er table sp ecific λ or different prior for differen t table can b e applied for a sophisticated mo del. In the transfer hierarc hical LD A model, the do cu- men ts in a corpus are assumed to b e drawn from the follo wing generative pro cess: (1) F or eac h table k in the infinite tree (a) Generate β ( k ) ∼ D irichl et ( η ) (2) F or eac h do cument d (a) Generate c ( d ) ∼ mo dified nCRP ( γ , λ ) (b) Generate θ ( d ) |{ m, π } ∼ GE M ( m, π ) (c) F or eac h word, i. Cho ose lev el Z d,n | θ d ∼ D iscrete ( θ d ) ii. Cho ose w ord W d,n |{ z d,n , c d , β } ∼ D iscrete ( β c d [ z d,n ]) The v ariables notation are: z d,n , the topic assign- men ts of the n th w ord in the d th do cument ov er L topics; w d,n , the n th word in the d th document. In Fig 1 (b), T represents a collection of an infinite n umber of L level paths which is drawn from mo di- fied nested CRP . c m,l represen ts the restauran t cor- rep onding to the l th topic distribution in m th do cu- men t and distribution of c m,l will b e defined b y the mo dified nested CRP conditioned on all the previous c n,l where n < m . W e assume that eac h table in a restauran t is assigned a parameter v ector β that is a multinomial topic distribution o ver vocabulary for eac h topic k from a Diric hlet prior η . W e also assume that the words are generated from a mixture model whic h is a sp ecific random distribution of each do c- umen t. A do cumen t is drawn by first choosing an L lev el path through the mo dified-nested CRP and then drawing the words from the L topics which are asso ciated with the restaurants along the path. Λ ( d ) = ( l 1 , ..., l k ) refers binary label presence indicators and Φ k is the lab el prior for lab el k. thLD A is able to transfer source domain knowl- edge to topic hierarch y by making an assignment re- lated to not only how many do cumen ts are assigned in topic i ( n i ) but also how close current topic is to the do cuments in topic i based on the source do- main knowledge ( λ ). F or unseen topics from source domain, w e do not hav e knowledge to transfer from source domain, so we assign probability prop ortional to the num ber of documents already assigned in topic i. Unlik e transferring kno wledge only for lab eled en- tities or given source domain kno wledge, our model learns b oth unlab eled and lab eled data based on dif- feren t prior probability equations (4), (5) and (6). A mo dified nested Chinese restaurant pro cess can b e imagined through the following scenario. As in [3, 2], suppose that there are an infinite num b er of infinite-table Chinese restaurants in a city and there is only one headquarter restauran t. There is a note on each table in every restauran t which refers to other restaurants and each restaurant is referred to once. So, starting from the headquarter restaurant, all other restaurants are connected as a branching tree. One can think of the table as a do or to other restauran ts unless the current restaurant is the leaf no de restaurant of the tree. So, starting from the ro ot restauran t, one can reach the final destination table of leaf no de restaurant, and the customers in the same restauran t share the same path. When a new cus- tomer arriv es, instead of follo wing the original nested 5 c hinese restaurant process, we put a higher weigh t on the table where similar customers are seated. 3.3 Gibbs Sampling for Inference The inference pro cedure in our thLDA mo del is sim- ilar to hLDA except for the mo dification of the path prior. W e use the gibbs sampling scheme: for each do cumen t in the corpus, we first sample a path c d in the topic tree based on the path sampling of the rest of the do cumen ts c − d in the corpus: (6) p ( c d | w , c − d , z , η , γ , λ ) ∝ p ( c d | c − d , γ , λ ) p ( w d | c , w − d , z , η ) Here the p ( c d | c − d , γ , λ ) is the prior probability on paths implied by the mo dified nested CRP , and p ( w d | c , w − d , z , η ) is the probability of the data given for a particular path. The second step in the Gibbs sampling inference for eac h do cument is to sample the lev el assignments for eac h word in the do cument given the sampled path: (7) p ( z d,n | z − ( d , n ) , c , w , m , π , η ) ∝ p ( z d,n | z d , − n , m , π ) p ( w d , n | z , c , w − ( d , n ) , η ) The first term is the distribution ov er levels from GEM, and the second term is the word emission prob- abilit y given a topic assignment for each word. The only thing w e need to change in the inference scheme is the path prior probability in the path sampling step. 3.4 P arallel Inference Algorithm W e dev elop ed thLDA parallel approximate inference algorithm on indep endent P processors to facilitate learning efficiency . In other words, we split the data in to P parts, and implemen t thLDA on each pro cessor p erforming Gibbs sampling on partial data. Ho wev er, the gibbs sampler requires that eac h sample step is conditioned on the rest of the sampling states, hence w e introduce a tree merge stage to help P Gibbs sam- plers share the sampling states p erio dically during the P indep enden t inference pro cesses. First, giv en the curren t global state of the CRP , w e sample the topic assignment for word n in do cumen t d from pro cessor p: (8) p ( z d,n,p | z − ( d , n , p ) , c , w , m , π , η ) ∝ p ( z d,n,p | z d , − n , p , m , π ) p ( w d , n , p | z , c , w − ( d , n , p ) , η ) Here z − ( d , n , p ) is the vectors of topic allo cations on pro cess p excluding z ( d , n , p ) and w − ( d , n , p ) is the nth w ord in do cument d on pro cess p excluding w ( d , n , p ) . Note that on a separate processor, we need to use total vocabulary size and the num b er of words that ha ve been assigned to the topic in the global state of the CRP . W e merge the P topic assignment count table to a single set of coun ts after each LDA Gibbs iteration so that the global sampling state is shared among P pro cesses. [16]. Second, on P separate pro cessors, we sample path selection, conditional distribution for c d,p giv en w and c v ariables for all do cuments other than d. (9) p ( c d , p | w , c − d , p , z , η , γ , λ ) ∝ p ( c d , p | c − d , p , γ , λ ) p ( w d , p | c , w − d , p , z , η ) The conditional distribution for both prior and the lik eliho o d of the data given a particular c hoice of c d,p are computed lo cally . Note that, to compute the sec- ond term it needs to b e known the global state of the CRP: do cuments’ path assignment and the n umber of instances of a word that hav e b een assigned to the topic index b y c d on the tree. T o merge topic trees for the global state of the CRP , w e first define the similarit y betw een t w o topics as the cosine similarity of tw o topics’ word distribu- tion v ector: (10) similar ity β i β j = β i · β j / || β i || || β j || F or giv en P num b er of infinite trees of Chinese restau- ran ts, we pick one tree as the base tree, and recur- siv ely merge topics in the remaining P-1 trees in to the base tree in a top-down manner. F or each topic no de t i b eing merged, we find the most similar no de t j in the base tree where t i and t j ha ve same parent no de. 6 foreac h iter ation do p ar al lel foreac h do cument d do foreac h wor d n do sample the topic assignmen t p ( z d,n,p | z − ( d , n , p ) , c , w , m , π , η ) end end foreac h pr o c ess p do merge topic assignmen t count table to a single set of coun ts end p ar al lel foreac h do cument d do sample path p ( c d , p | w , c − d , p , z , η , γ , λ ) using global state of the CRP end Pic k one tree q as a base tree in every merge stage, foreac h tr e e fr om pr o c ess p ∈ P- { q } do foreac h depth do foreac h topic no de i do find most similar topic no de j ∈ q if parent(i)==paren t(j) merge topics i to topic j else add topic no de i to tree q end end end end Algorithm 1: The parallel inference algorithm 3.5 Discussion Our thLD A model is differen t from existing topic mo dels. In lLDA, they incorporate sup ervision by restricting θ d to each do cumen t’s lab el set. So word- topic Z d,n assignmen ts are restricted by its given la- b els. The num b er of unique lab els K in lLDA is the same as the num b er of topics K in LDA. Unlike lLDA, thLD A do es not directly correlate lab el and topic by mo difying θ d , so the n umber of topics are not deter- mined or set by the num b er of labels, that n umber serv es as a guidance for inferring latent topics. The prop osed thLDA model is significan t in that it can o vercome the barrier that unsup ervised mo dels hav e when it is applied to noisy and sparse data. By trans- ferring differen t domain kno wledge, thLD A also sav es time and the cumbersome annotation efforts required for sup ervised models. thLDA has an adv an tage ov er LD A mo del by pro ducing a topic hierarch y and do c- umen t clusters without additionally computing sim- ilarit y among topic distribution of do cumen ts. F ur- thermore, thLDA offers ma jor adv an tages ov er other sup ervised or semisup ervised LDA mo dels by pro- viding mixture of detailed a topic hierarch y b elo w a certain level in an unsup ervised wa y , while pro vid- ing the ab o ve that level topic structure guided by giv en prior knowledge. By applying prior knowledge in b oth supervised and unsupervised wa ys, we can apply thLDA to learn deep er lev el of topic hierarch y than the giv en depth of source domain prior hierar- c hy . In the following exp eriment, w e will sho w the p erformance of thLD A a com bination of sup ervised prior knowledge upto a certain level and unsup ervised b elo w that level. 4 Exp erimen t Results In our experiments, w e used one source domain and three target domain text data sets to show the effec- tiv eness of our transfer hierarc hical LDA mo del. W e used tw o well known text collections: the Asso ciated Press (AP) Data set[10] and Reuters Corpus V olume 1(R CV1) Data set[14] and one sparse and noisy Mi- croblog data from Twitter for target domains and Y aho o! News categories for source domain. 7 (a) hLDA (b) thLDA Figure 2: The topic hierarch y learned from (A) hLDA and (B) thLDA as well as example tw eets assigned to the topic path. 4.1 Dataset Description W e used the web crawler to fetc h news titles on 8 categories in Y aho o! News: science, business, health, sp orts, p olitics, w orld, technology and en tertainmen t, as sho wn in Fig 1 (a). W e parsed and stemmed news titles and computed the tf-idf score to gener- ate weigh ted w ord vector for each topic category . W e computed each topic category score using the top 50 tf-idf w eighted word vector and pic ked one optimal category p er lev el as a lab el for each target docu- men t. T ext Retriev al Conference AP (TREC-AP) [10] con tains Asso ciated Press news stories from 1988 to 1990. The original data includes o ver 200,000 do cu- men ts with 20 categories. The sample AP data set from [3], which is sampled from a subset of the TREC AP corpus contains D= 2,246 do cumen ts with a vo- cabulary size V = 10,473 unique terms. W e divided do cumen ts in to 1,796 observed do cumen ts and 450 held-out do cumen ts to measure the held-out predic- tiv e log likelihoo d. R CV1[14] is an archiv e of o ver 800,000 manually categorized newswire stories provided by Reuters, Ltd. distributed as a set of on-line app endices to a JMLR article. It also includes 126 categories, asso- ciated industries and regions. F or this w ork a subset of RCV1 data set is used. Sample R CV1 data set has D = 55,606 do cuments with a v o cabulary size V = 8,625 unique terms. W e randomly divided it in to 44,486 observed do cumen ts and 11,120 held-out do cumen ts for exp erimen ts. W e hav e crawled the Twitter data for t wo w eeks and obtained around 58,000 user profiles and 2,000,000 t weets. Twitter users use some structure con ven tions such as a user-to-message relation (i.e. initial tw eet authors, via, cc, by), t yp e of message (i.e. Broadcast, con versation, or retw eet messages), t yp e of resources (i.e. URLs, hashtags, keyw ords) to o vercome the 140 character limit. T o capture trend- ing topics, many applications analyze twitter data and group similar trending topics using structure in- 8 formation (i.e. hash tag or url) and shows a list of top N trending topics. Ho wev er according to [7], only 5% of tw eets con tain a hashtag with 41% of these con- taining a URL, and also only 22% of tw eets include a URL. In this work, instead of using structure infor- mation (i.e. hash tag or url) of a tw eet, we used only w ords. W e remov ed structural information suc h as initial t weet authors, via, cc, b y , and url and stemmed w ord to transform any word into its base form using the P orter Stemmer. F or the exp erimen t, we ran- domly sampled and used D = 21,364 documents with a vocabulary size V = 31,181 unique terms. W e ran- domly divided it into 16,023 observ ed do cumen ts and 5,341 held-out do cumen ts. 4.2 P erformance Comparison on T opic Mo deling LD A and Lab eled LD A W e implemen ted LD A and lLDA using the standard collapsed Gibbs Sam- pling metho d. T o compare the learned topic results b et ween sup ervised LDA and unsup ervised LDA topic mo dels, we ran standard LDA with 9, 20 and 50 topics and lLDA with 9 topics: Y aho o news 8 top lev el categories and 1 topic as freedom of topic. The results show tw o main observ ations: first, multiple topics from the standard LD A mapp ed to p opular topics (i.e. technology , entertainmen t and sp orts) in lLD A; second, not all lLDA topics w ere disco vered b y standard LDA (i.e. topics such as p olitics, world, and science are not disco vered). Hierarc hical LD A W e used HLDA-C [3, 2] with a fixed depth tree and a stic k breaking prior on the depth w eights. The topic hierarch y generated by hLD A is shown in Fig 2(a). Being an unsup ervised mo del, the hLDA giv es the result that totally de- p ends on term co-occurance in the do cumen ts. hLD A giv es a topic hierarc hy that is not easily understo o d b y h uman b eings, because each t weet con tains only small n umber of terms and co-o ccurance w ould b e v ery sparse and less relev an t compared to long do cu- men ts. No des in the topic hierarc hy capture some clusters of words from the input documents, such as the 2nd topic in 3rd column in Fig 2(a), which has key w ords fo cusing on smart phones (Android, iPhone, and Apple) and the 5th no des in 3rd column that co vers online multimedia resources. How ever, the 2nd level topic nodes are less informativ e and the relationship b et ween child no des in the 3rd level and their parent no des in the 2nd lev el is less semantically meaningful. The topics b elong to the same parent in lev el 3 usually do not relate to eac h other in our run result. Ideally , this should work for do cuments that are long in length and dense in word distribution and o verlapping. Ho wev er, hLDA gives less interpretable results on noisy and sparse data. T ransfer Hierarc hical LD A W e implemented standard thLD A b y mo difying HLDA-C. Fig 2(b) sho ws tw o imp ortant adv antages of our model: first, topic no des are b etter interpretable by transferring our source domain knowledge. Second, all the c hild topics reflect a strong semantic relationship with their parent. F or example, in the topic ”world”, the first c hild ”iraq war wikileak death” relates to the Iraq w ar topic, the second child ”p olice kill injury drup” relates to criminals and more in terestingly , the 3rd child topic ”chile rescue miner” denotes the recent ev ent of 33 traped miners in Chile. Note that we only imp ose prior kno wledge on the 2nd level and all 3rd lev el topics are automatically emerged from the data set. In ”science” topics the 1st child topic ”space Nasa moon” is ab out astronom y and the 2nd c hild topic ”BP oil gulf spill environmen t” is ab out the recen t Gulf Oil Spill. F urthermore, example t weets assigned to the topic no des show a strong asso ciation with t weet clustering. T o quantitativ ely measure p erformance of thLDA, w e used predictive held-out likelihoo d. W e divided the corpus in to the observ ed and the held-out set, and approximate the conditional probabilit y of the held-out set given the training set. T o make a fair comparison we applied same hyper parameters that exist in all three mo dels while applied a different hy- p er parameter η to obtain a similar num b er of topics for hLDA and thLDA mo dels. F ollowing [2], we used outer samples: taking samples 100 iterations apart using a burn-in of 200 samples. W e collected 800 samples of the latent v ariables given the held-out do c- 9 (a) Twitter (b) AP (c) R CV1 Figure 3: The held-out predictive log lik eliho od comparison for LDA, hLD A, and thLDA mo del on three differen t data set umen ts and computed their conditional probability giv en the outer sample with the harmonic mean. Fig 3 illustrates the held-out likelihoo d for LDA, hLDA, and thLD A on Twitter, AP , and R CV1 corpus. In the figure, we applied a set of fixed topic cardinality on LDA and fixed depth 3 of the hierarch y on hLDA and thLD A. W e see that thLDA alw a ys provides bet- ter predictive p erformance than LDA or hLD A on all three cases (Fig 3). Interestingly , thLDA pro- vides significantly b etter p erformance on (a) Twit- ter data set, while hLD A sho ws p oor p erformance than LDA. As Blei et al [3, 2] show ed that even- tually with large num b ers of topics LDA will dom- inate hLDA and thLD A in predictiv e performance, ho wev er thLDA p erforms b etter in predictive p erfor- mance with reasonable n umbers of topics. F or man ual ev aluation of tw eet assignments on learned topics, w e randomly selected 100 tw eets and man ually annotate correctness. F or LDA, w e pick the highest assigned topic and for hLDA, thLDA, and parallel-thLDA, third level topic no de is used and their accuracy were 41% 46%, 71%, and 56% re- sp ectiv ely . T able 1 shows example tw eets with their assigned topic from LD A, hLDA, and thLDA. 4.3 P erformance Comparison on Scal- ablit y W e ev aluate our approximate parallel learning metho d on the t witter dataset with 5000 tw eets. The log likelihoo d of training data during the Gibbs sam- pling iterations is sho wn in Fig 4 (a). In all cases, the gibbs sampling con verges to the distribution that has similar log likelyhoo d. Fig 4 (b) shows the sp eedup from the parallel inference metho d. In addition to the o verhead of topic tree merging stage, the system also suffers from the ov erhead of state loading and saving time, which is similar since they o ccur everytime we need to update the global tree. Because of these ov er- heads, the system performs b etter when merging step is large. When w e merge topic trees in ev ery 50 gibbs iterations, the sp eedup with 4 pro cesses is 2.36 times faster than with 1 pro cess, but when we merge topic trees in every 200 gibbs iterations, the sp eedup is 3.25 times. The ov erhead can be roughly seen if we extend the lines in Fig 4 (b) to in tersect with y-axes. Since the merging stage complexit y is linear to the num b er of topic trees b eing merged, we see a greater o ver- head in 4 pro cess exp erimen t. Ho wev er, the merging algorithm complexit y do es not dep end on the size of dataset, whic h means the merging ov erhead will b e ignorable when run with h uge datasets. 5 Conclusion In this pap er, we proposed a transfer learning ap- proac h for effective and efficient topic mo deling anal- ysis on so cial media data. More sp ecifically , we de- v elop ed transfer hierarc hical LD A mo del, an exten- 10 (a) (b) Figure 4: (a)P arallel thLD A approximation inference p erformance comparison. (b)Parallel thLDA approxi- mation inference sp eedup comparison. Tweet LDA hLDA thLDA usnoaago v: NO AA scientists monitor servic switc h roll tcot teaparti palin climat discov c heck earth ozone lev els ab ov e broadband resid tea sgp parti gop water chang sea found An tarctic: voip ip chang asterisk democrat obama nvsen scien tist arctic w arm FB R T: Breaking News: NA TO official: call edit via limit one game yank e minist foreign pakistan Osama bin Laden is hiding duti topic liv e know new video israel un us gaza in north west Pakistan. - playstat novel cafe find ranger make man secur aid call T able 1: Example Tweets and their assigned topic with top 10 w ords from LDA, hLDA, and thLDA mo del. sion of hierarchical LDA model, which inferred the topic distributions of do cuments while incorp orating kno wledge from other domains. In addition, w e de- signed a parallel inference framew ork to run paral- lel Gibbs sampler synchronously on multi-core ma- c hines so as to p erform topic mo deling on large-scale datasets. Our work is significant in that it is among the frontier approac hes to explore knowledge trans- fer from other domains to topic mo deling for large- scale microblog analysis. F or future work, we are in terested in exploring other effective approaches to transfer domain knowledge in addition to topic priors as examined in the curren t pap er. Ac kno wledgmen t Researc h w as sp onsored b y the U.S. Defense Ad- v anced Researc h Pro jects Agency (DARP A) under the Anomaly Detection at Multiple Scales (AD AMS) program, Agreemen t Num b er W911NF-11-C-0200. The views and conclusions con tained in this do cu- men t are those of the author(s) and should not be in terpreted as representing the official p olicies, either expressed or implied, of the U.S. Defense Adv anced Researc h Pro jects Agency or the U.S. Go vernmen t. The U.S. Go vernmen t is authorized to reproduce and distribute reprin ts for Gov ernmen t purposes not with- standing an y copyrigh t notation hereon. References [1] A. Arnold, R. Nallapati, and W. Cohen. A comparativ e study of metho ds for transductiv e transfer learning. In ICDM Workshops , 2008. [2] D. Blei, T. Griffiths, and M. Jordan. The nested c hinese restauran t pro cess and ba yesian non- parametric inference of topic hierarchies. Jour- nal of the ACM (JACM) , 57(2):1–30, 2010. [3] D. Blei, T. Griffiths, M. Jordan, and J. T enen- baum. Hierarchical topic mo dels and the nested 11 Chinese restauran t pro cess. A dvanc es in neur al information pr o c essing systems , 16:106, 2004. [4] D. Blei and J. McAuliffe. Sup ervised topic mo d- els. A dvanc es in Neur al Information Pr o c essing Systems , 2008. [5] D. Blei, A. Ng, and M. Jordan. Latent dirich- let allo cation. The Journal of Machine L e arning R ese ar ch , 3:993–1022, 2003. [6] D. M. Blei and P . F razier. Distance dep enden t c hinese restaurant pro cesses. [7] D. Bo yd, S. Golder, and G. Lotan. Tweet, t w eet, ret weet: Conv ersational aspects of retw eeting on t witter. In In Pr o c e e dings of the Hawaii Inter- national Confer enc e on System Scienc es , 2010. [8] K. R. Canini, M. M. Shashko v, and T. L. Grif- fiths. Mo deling transfer learning in human cate- gorization with the hierarchical dirichlet pro cess. [9] A. Celikyilmaz and D. Hakk ani-T ur. A hybrid hierarc hical mo del for m ulti-do cumen t summa- rization. ACL ’10, pages 815–824, Stroudsburg, P A, USA, 2010. [10] D. Harman. First text r etrieval c onfer enc e (TREC-1): pr o c e e dings . DIANE Publishing, 1993. [11] L. Hong and B. D. Davison. Empirical study of topic mo deling in twitter, 2010. [12] S. Lacoste-Julien, F. Sha, and M. Jordan. Dis- cLD A: Discriminative learning for dimensional- it y reduction and classification. NIPS , 2008. [13] H. Lee, A. Battle, R. Raina, and A. Ng. Efficient sparse co ding algorithms. NIPS , 2007. [14] D. Lewis, Y. Y ang, T. Rose, and F. Li. Rcv1: A new benchmark collection for text categoriza- tion research. The Journal of Machine L e arning R ese ar ch , 5:361–397, 2004. [15] M. Michelson and S. A. Macsk assy . Discov ering users’ topics of interest on twitter: a first lo ok. In Pr o c e e dings of the fourth workshop on Analytics for noisy unstructur e d text data , AND ’10, pages 73–80, New Y ork, NY, USA, Oct. 2010. ACM Press. [16] D. Newman, A. Asuncion, P . Smyth, and M. W elling. Distributed inference for latent diric hlet allo cation. A dvanc es in Neur al Infor- mation Pr o c essing Systems , 20(1081-1088):17– 24, 2007. [17] S. Pan and Q. Y ang. A survey on transfer learn- ing. TKDE , 2009. [18] K. Puniy ani, J. Eisenstein, S. Cohen, and E. Xing. So cial links from latent topics in Mi- croblogs. In Pr o c e e dings of the NAA CL HL T 2010 Workshop on Computational Linguistics in a World of S o cial Me dia , pages 19–20. Asso cia- tion for Computational Linguistics, 2010. [19] R. Raina, A. Battle, H. Lee, B. Pac k er, and A. Ng. Self-taugh t learning: transfer learning from unlab eled data. In ICML , 2007. [20] D. Ramage, S. Dumais, and D. Liebling. Char- acterizing microblogs with topic mo dels. In Pr o- c e e dings of the F ourth International AAAI Con- fer enc e on Weblo gs and So cial Me dia . AAAI, 2010. [21] D. Ramage, D. Hall, R. Nallapati, and C. Man- ning. Lab eled LD A: A sup ervised topic mo del for credit attribution in multi-labeled corpora. In Pr o c e e dings of the 2009 Confer enc e on Em- piric al Metho ds in Natur al L anguage Pr o c essing: V olume 1-V olume 1 , 2009. [22] A. Ritter, C. Cherry , and B. Dolan. Unsuper- vised mo deling of twitter conv ersations, 2010. [23] Y. Tian, W. W ang, X. W ang, J. Rao, C. Chen, and J. Ma. T opic detection and organization of mobile text messages. In Pr o c e e dings of the 19th A CM international c onfer enc e on Information and know le dge management , CIKM ’10, pages 1877–1880, New Y ork, NY, USA, 2010. A CM. [24] H. W allach, D. Mimno, and A. McCallum. Re- thinking LDA: Why priors matter. Pr o c e e dings of NIPS-09, V anc ouver, BC , 2009. 12

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment