Multi-class Generalized Binary Search for Active Inverse Reinforcement Learning

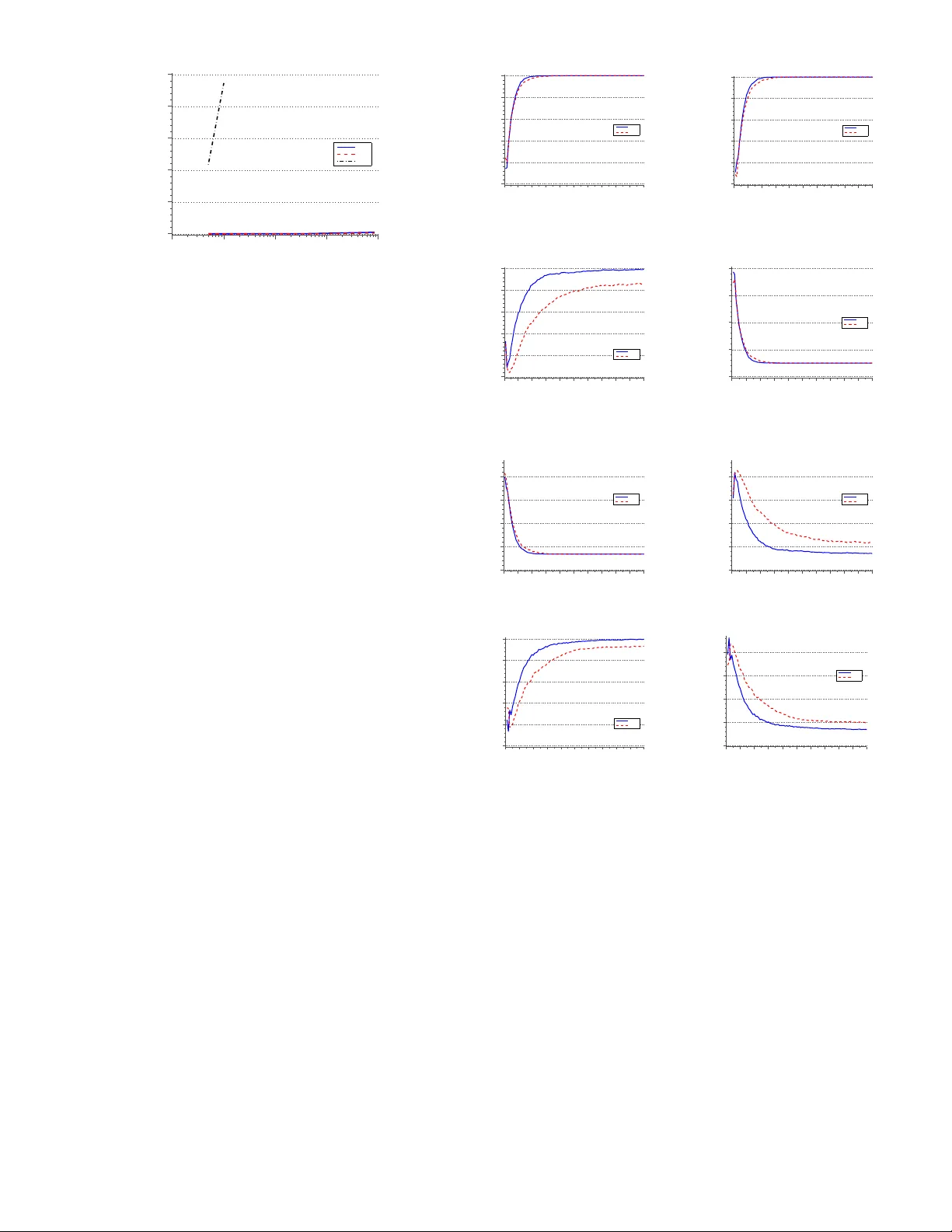

This paper addresses the problem of learning a task from demonstration. We adopt the framework of inverse reinforcement learning, in which each task is represented by a reward function. Our contribution is a novel active learning algorithm that…

Authors: Francisco Melo, Manuel Lopes