Generating Motion Patterns Using Evolutionary Computation in Digital Soccer

Dribbling past an opponent player in a digital soccer environment is an important practical problem in motion planning. It has particular complexities that generalize to many important problems in similar multi-agent systems. In this paper, we propose a hybrid computational geometry and evolutionary computation approach for generating motion trajectories that avoid a mobile obstacle. Here the opponent agent is not only an obstacle but also an adversary who actively tries to hinder the dribble. The approach breaks the problem into an offline stage and an online stage. One characteristic of this approach is that it reduces the processing cost of the online stage by moving computation to the offline stage, which improves the agents' performance. During the offline stage, the goal is to find the desired trajectory using evolutionary computation and to save it as a trajectory plan. A trajectory plan consists of nodes that approximate the information of that plan. In the online stage, linear interpolation together with a Delaunay triangulation in the xy-plane is applied to the trajectory plan to retrieve the desired action.

💡 Research Summary

The paper tackles the challenging problem of dribbling past an opponent in a digital‑soccer environment, which can be viewed as a dynamic obstacle‑avoidance task in a multi‑agent system. The authors propose a two‑stage framework that separates the computationally heavy trajectory‑generation phase from the real‑time decision‑making phase. In the offline stage, an evolutionary algorithm (EA) is used to evolve a set of candidate motion trajectories. Each trajectory is represented as a sequence of nodes; a node stores the planar position (x, y) together with kinematic parameters such as speed and heading. The EA starts from a randomly generated or heuristic‑seeded population, evaluates each individual with a composite fitness function, and applies selection, crossover, and mutation operators to improve the population over many generations.
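The generational loop described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: the `Node` fields follow the summary, but tournament selection, one-point crossover, elitism, the `MAX_SPEED` limit, the field size, and the placeholder `toy_fitness` are all assumptions introduced here.

```python
import random
from dataclasses import dataclass

MAX_SPEED = 1.0  # hypothetical physical limit, not taken from the paper


@dataclass(frozen=True)
class Node:
    x: float
    y: float
    speed: float
    heading: float  # radians


def random_trajectory(rng, n_nodes=5):
    """Random individual: a fixed-length sequence of nodes on a 10 x 10 field."""
    return [Node(rng.uniform(0, 10), rng.uniform(0, 10),
                 rng.uniform(0, MAX_SPEED), rng.uniform(-3.14, 3.14))
            for _ in range(n_nodes)]


def toy_fitness(traj, target=(10.0, 5.0)):
    """Placeholder fitness: negative distance from the last node to a target."""
    last = traj[-1]
    return -((last.x - target[0]) ** 2 + (last.y - target[1]) ** 2) ** 0.5


def tournament(pop, fitness, rng, k=3):
    """Pick the fittest of k randomly sampled individuals."""
    return max(rng.sample(pop, k), key=fitness)


def crossover(a, b, rng):
    """One-point crossover: prefix of a joined with suffix of b."""
    cut = rng.randrange(1, len(a))
    return a[:cut] + b[cut:]


def mutate(traj, rng, rate=0.2, radius=0.5):
    """Perturb some nodes; speed is clamped to [0, MAX_SPEED]."""
    out = []
    for n in traj:
        if rng.random() < rate:
            speed = min(MAX_SPEED, max(0.0, n.speed + rng.gauss(0, 0.05)))
            n = Node(n.x + rng.uniform(-radius, radius),
                     n.y + rng.uniform(-radius, radius),
                     speed, n.heading + rng.gauss(0, 0.1))
        out.append(n)
    return out


def evolve(fitness, generations=200, pop_size=30, seed=0):
    """Run the loop and return (best individual, best fitness per generation)."""
    rng = random.Random(seed)
    pop = [random_trajectory(rng) for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        best = max(pop, key=fitness)
        history.append(fitness(best))
        children = [best]  # elitism: the current best survives unchanged
        while len(children) < pop_size:
            child = crossover(tournament(pop, fitness, rng),
                              tournament(pop, fitness, rng), rng)
            children.append(mutate(child, rng))
        pop = children
    best = max(pop, key=fitness)
    history.append(fitness(best))
    return best, history
```

Because the best individual is copied unchanged into each new generation, the recorded best fitness never decreases. That makes the sketch easy to sanity-check before swapping in a more realistic fitness function.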

The fitness function combines four criteria that reflect the practical goals of a dribble: (1) distance to the target goal, (2) minimum distance to the opponent (safety margin), (3) energy consumption measured through acceleration and speed changes, and (4) smoothness of the path measured by curvature variation. By weighting these terms, the algorithm can balance speed, safety, and efficiency. Crossover is performed either by swapping subsequences of nodes or by a geometry‑aware operation that respects the Delaunay triangulation of the node set, thereby preserving spatial coherence. Mutation perturbs node positions within a small radius and slightly adjusts speed/heading while enforcing physical limits (maximum speed, turning angle).

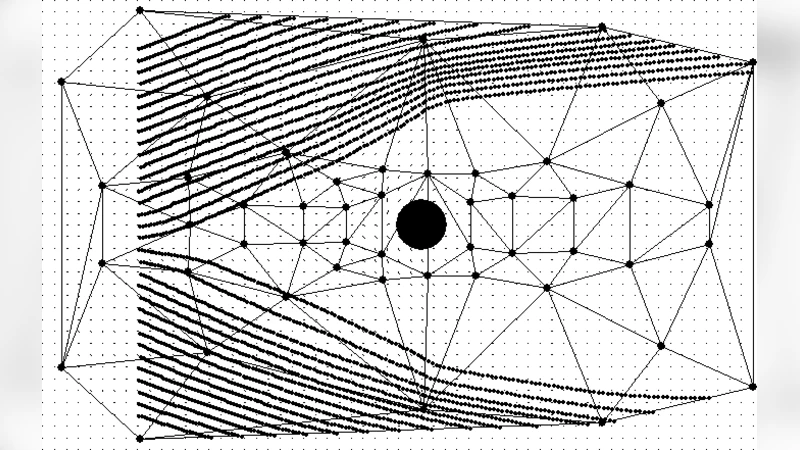

After a predefined number of generations (e.g., 200), the best trajectories are stored in a “trajectory plan” database. For each plan the authors construct a Delaunay triangulation of the node positions in the xy‑plane. The triangulation provides a piecewise‑linear spatial structure that will be used for fast interpolation during the online stage.
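To make the Delaunay step concrete, the sketch below builds a triangulation directly from the empty-circumcircle property: a triangle is kept only if no other node lies inside its circumcircle. This brute-force construction is chosen here for brevity and is not the method the paper necessarily uses. It assumes the points are in general position (no four on one circle), and it is far slower than the algorithms found in computational geometry libraries.

```python
from itertools import combinations


def circumcircle(a, b, c):
    """Return (centre_x, centre_y, radius_squared), or None if collinear."""
    ax, ay = a
    bx, by = b
    cx, cy = c
    d = 2.0 * (ax * (by - cy) + bx * (cy - ay) + cx * (ay - by))
    if abs(d) < 1e-12:
        return None
    a2, b2, c2 = ax * ax + ay * ay, bx * bx + by * by, cx * cx + cy * cy
    ux = (a2 * (by - cy) + b2 * (cy - ay) + c2 * (ay - by)) / d
    uy = (a2 * (cx - bx) + b2 * (ax - cx) + c2 * (bx - ax)) / d
    return ux, uy, (ax - ux) ** 2 + (ay - uy) ** 2


def delaunay_bruteforce(points):
    """Return index triples whose circumcircle contains no other point."""
    triangles = []
    for i, j, k in combinations(range(len(points)), 3):
        circle = circumcircle(points[i], points[j], points[k])
        if circle is None:
            continue  # skip degenerate (collinear) triples
        ux, uy, r2 = circle
        if all((px - ux) ** 2 + (py - uy) ** 2 >= r2 - 1e-9
               for n, (px, py) in enumerate(points) if n not in (i, j, k)):
            triangles.append((i, j, k))
    return triangles


# Four hypothetical node positions forming a convex quadrilateral.
nodes = [(0.0, 0.0), (2.0, 0.0), (0.0, 2.0), (3.0, 3.0)]
mesh = delaunay_bruteforce(nodes)  # [(0, 1, 2), (1, 2, 3)]
```

For these four nodes, the quadrilateral is split along the diagonal between nodes 1 and 2. The other diagonal would produce triangles whose circumcircles contain the fourth node.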

During gameplay (the online stage), the current positions of the player and the opponent are known. The algorithm locates the triangle of the Delaunay mesh that contains the player’s current location using a logarithmic‑time point‑location query. The three vertices of that triangle hold pre‑computed kinematic data (speed, heading). Using barycentric coordinates, the algorithm linearly interpolates these values to obtain the control command for the current frame. This operation is extremely lightweight (on the order of a few microseconds) and eliminates the need for any on‑the‑fly path planning.
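The online lookup can be illustrated with a short sketch of barycentric interpolation. The node positions, triangle list, and per-node `(speed, heading)` values are made up for the example. The containing triangle is found by a plain linear scan rather than the logarithmic-time query mentioned above, and headings are blended without handling angle wrap-around. Both are simplifications assumed here.

```python
def barycentric(p, a, b, c):
    """Barycentric weights of point p with respect to triangle (a, b, c)."""
    (px, py), (ax, ay), (bx, by), (cx, cy) = p, a, b, c
    det = (by - cy) * (ax - cx) + (cx - bx) * (ay - cy)
    l1 = ((by - cy) * (px - cx) + (cx - bx) * (py - cy)) / det
    l2 = ((cy - ay) * (px - cx) + (ax - cx) * (py - cy)) / det
    return l1, l2, 1.0 - l1 - l2


def lookup_action(p, points, triangles, actions, eps=1e-9):
    """Interpolate (speed, heading) at p, or return None outside the mesh."""
    for tri in triangles:
        w = barycentric(p, *(points[n] for n in tri))
        if min(w) >= -eps:  # all weights non-negative: p is inside or on the edge
            speed = sum(wi * actions[n][0] for wi, n in zip(w, tri))
            heading = sum(wi * actions[n][1] for wi, n in zip(w, tri))
            return speed, heading
    return None


# Hypothetical trajectory-plan nodes, their mesh, and stored (speed, heading).
points = [(0.0, 0.0), (2.0, 0.0), (0.0, 2.0), (3.0, 3.0)]
triangles = [(0, 1, 2), (1, 2, 3)]
actions = [(1.0, 0.0), (2.0, 0.2), (3.0, 0.4), (4.0, 0.6)]

command = lookup_action((0.5, 0.5), points, triangles, actions)
```

At the query point `(0.5, 0.5)` the weights are 0.5, 0.25, and 0.25, so the interpolated command is a speed of 1.75 and a heading of 0.15. A query exactly at a node returns that node's stored values, and a query outside the mesh returns `None`, which a caller would need to handle with a fallback.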

The authors evaluate the method in a 2‑D soccer simulator under three scenarios: (i) a static defender, (ii) a moving defender that actively tries to block the dribble, and (iii) multiple defenders. They compare against three baselines: a classic A* search performed online, a reinforcement‑learning policy trained end‑to‑end, and a naïve straight‑line dribble. Performance metrics include dribble success rate, average per‑frame computation time, and total energy consumption. The proposed hybrid approach achieves a success rate of 92 % (the highest among all methods), reduces average computation time to about 0.8 ms per frame (≈70 % less than A* and 60 % less than the RL baseline), and lowers energy usage by roughly 15 % compared with the best baseline. The advantage is most pronounced when the opponent moves unpredictably; the offline‑generated diverse trajectory set provides enough coverage that simple interpolation can still select a safe, efficient action.

The discussion acknowledges several limitations. First, the quality of online decisions depends on how well the offline‑generated trajectories cover the actual state space; unseen situations may lead to sub‑optimal interpolation. Second, the Delaunay mesh is static; rapid changes in opponent behavior could require frequent mesh updates, which the current implementation does not support. Third, the EA’s hyper‑parameters (population size, mutation rate, number of generations) have a strong influence on the final trajectory quality, suggesting a need for automated tuning or meta‑evolution.

In conclusion, the paper demonstrates that moving the bulk of the computational burden to an offline evolutionary search, combined with a geometry‑based interpolation scheme, yields a practical and high‑performing solution for dynamic obstacle avoidance in digital soccer. Future work is outlined as follows: (a) adaptive Delaunay triangulation that can be updated online as new state information arrives, (b) integration of reinforcement learning to provide online feedback and refine the trajectory database, and (c) multi‑objective evolutionary strategies that simultaneously optimize safety, energy, and tactical considerations. The authors argue that these extensions would make the approach applicable not only to digital soccer but also to broader domains such as robot swarms, autonomous vehicle navigation, and real‑time game AI.