IPF for Discrete Chain Factor Graphs

Iterative Proportional Fitting (IPF), combined with EM, is commonly used as an algorithm for likelihood maximization in undirected graphical models. In this paper, we present two iterative algorithms that generalize IPF. The first one is for likelihood maximization in discrete chain factor graphs, which we define as a wide class of discrete-variable models including undirected graphical models and Bayesian networks, but also chain graphs and sigmoid belief networks. The second one is for conditional likelihood maximization in standard undirected models and Bayesian networks. In both algorithms, the iteration steps are expressed in closed form. Numerical simulations show that the algorithms are competitive with state-of-the-art methods.
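For reference, here is a minimal sketch of the classic complete-data IPF that the paper generalizes. It assumes a small pairwise Markov random field over binary variables and brute-force inference. Each clique potential ψ_c is rescaled in turn by the ratio of the empirical marginal p̂(x_c) to the current model marginal p(x_c). The helper names (`joint`, `marginal`, `ipf`) are hypothetical. This is plain IPF with no EM step and no hidden variables, not the paper's generalized algorithm.

```python
import itertools

import numpy as np


def joint(potentials, cliques, n_vars, card=2):
    """Normalized joint p(x) proportional to prod_c psi_c(x_c), by brute-force enumeration."""
    p = np.ones((card,) * n_vars)
    for x in itertools.product(range(card), repeat=n_vars):
        for psi, clique in zip(potentials, cliques):
            p[x] *= psi[tuple(x[i] for i in clique)]
    return p / p.sum()


def marginal(p, clique):
    """Marginal of p over the (sorted) variable indices in clique."""
    other_axes = tuple(i for i in range(p.ndim) if i not in clique)
    return p.sum(axis=other_axes)


def ipf(data, cliques, n_vars, n_iter=100, card=2):
    """Classic complete-data IPF for a discrete MRF with the given cliques."""
    # Empirical distribution p_hat(x) from fully observed samples.
    emp = np.zeros((card,) * n_vars)
    for row in np.asarray(data):
        emp[tuple(row)] += 1.0
    emp /= emp.sum()

    # Start from uniform (all-ones) potentials.
    potentials = [np.ones((card,) * len(c)) for c in cliques]
    for _ in range(n_iter):
        for k, clique in enumerate(cliques):
            model_marg = marginal(joint(potentials, cliques, n_vars, card), clique)
            emp_marg = marginal(emp, clique)
            # IPF update: psi_c(x_c) <- psi_c(x_c) * p_hat(x_c) / p(x_c),
            # guarding against division by zero.
            ratio = np.divide(emp_marg, model_marg,
                              out=np.zeros_like(emp_marg), where=model_marg > 0)
            potentials[k] = potentials[k] * ratio
    return potentials, emp


# Usage: a 3-cycle of binary variables fitted to random complete data.
rng = np.random.default_rng(0)
data = rng.integers(0, 2, size=(200, 3))
cliques = [(0, 1), (1, 2), (0, 2)]
potentials, emp = ipf(data, cliques, n_vars=3)
p = joint(potentials, cliques, n_vars=3)
for c in cliques:
    print(c, np.abs(marginal(p, c) - marginal(emp, c)).max())
```

After enough sweeps the model's clique marginals match the empirical ones, which is the moment-matching condition that characterizes the complete-data ML estimate for a model with these cliques. Brute-force enumeration is exponential in the number of variables and is only used here to keep the sketch self-contained.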

💡 Research Summary

The paper introduces two novel iterative algorithms that generalize the classic Iterative Proportional Fitting (IPF) procedure. The first handles likelihood maximization in a much broader class of discrete probabilistic models, termed Discrete Chain Factor Graphs (DCFGs); the second handles conditional likelihood maximization in standard undirected models and Bayesian networks. A DCFG is defined as a collection of factors, each of which may represent either a conditional probability table (as in Bayesian networks) or an undirected potential (as in Markov random fields). Because factors can be arranged in directed‑acyclic, undirected, or mixed (chain‑graph) structures, DCFGs subsume traditional undirected graphical models, Bayesian networks, chain graphs, and even sigmoid belief networks under a single formalism.
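To make the idea of two kinds of factors concrete, the following minimal sketch evaluates the normalizer Z of a product of factors by brute force. It assumes a hypothetical `(scope, table)` representation of a factor. A model whose factors are all conditional probability tables normalizes automatically (Z = 1), while undirected potentials need an explicit Z. The sketch uses a single global normalizer, so it does not capture the per-component normalization that chain graphs require; it illustrates the idea and is not the paper's DCFG definition.

```python
import itertools

import numpy as np

# Hypothetical representation: a factor is (scope, table), where scope is a
# tuple of variable indices and table[x_scope] is a nonnegative number.


def unnormalized(factors, x):
    """Product of all factor values at the joint assignment x."""
    value = 1.0
    for scope, table in factors:
        value *= table[tuple(x[i] for i in scope)]
    return value


def partition_function(factors, n_vars, card=2):
    """Z = sum over all assignments of the factor product (brute force)."""
    return sum(unnormalized(factors, x)
               for x in itertools.product(range(card), repeat=n_vars))


# Bayesian network A -> B: both factors are CPTs, so Z is 1.
p_a = np.array([0.3, 0.7])                    # P(A)
p_b_given_a = np.array([[0.9, 0.1],           # P(B | A=0)
                        [0.2, 0.8]])          # P(B | A=1)
bn_factors = [((0,), p_a), ((0, 1), p_b_given_a)]

# Markov random field over (A, B): one potential favoring agreement,
# so an explicit normalizer Z is required.
psi_ab = np.array([[2.0, 1.0],
                   [1.0, 2.0]])
mrf_factors = [((0, 1), psi_ab)]

print(partition_function(bn_factors, n_vars=2))   # approximately 1.0
print(partition_function(mrf_factors, n_vars=2))  # 6.0
```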

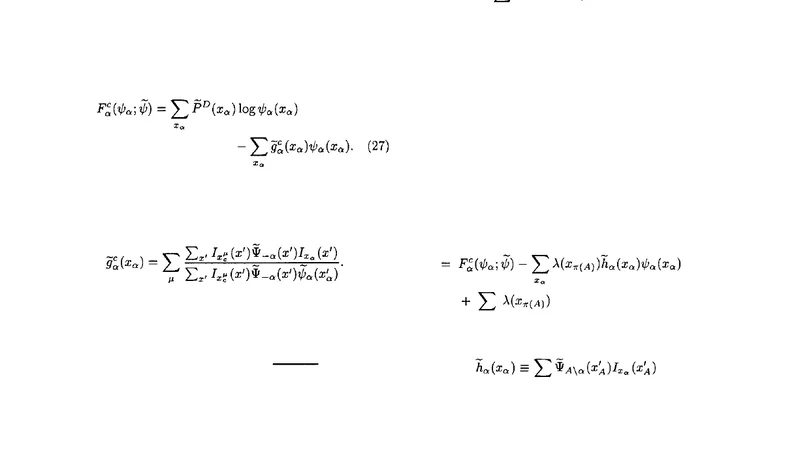

The first algorithm targets maximum‑likelihood (ML) estimation of the joint distribution, as opposed to the conditional likelihood handled by the second. It combines the Expectation‑Maximization (EM) framework with an IPF‑style multiplicative update. In the E‑step, the current parameters θ⁽ᵗ⁾ are used to compute expected sufficient statistics for each factor, typically via message passing or variational inference. In the M‑step, each factor's parameters are updated by a closed‑form proportional correction:

θ_f⁽ᵗ⁺¹⁾(x_f) ∝ θ_f⁽ᵗ⁾(x_f) ×