Decision Principles to Justify Carnap's Updating Method and to Suggest Corrections of Probability Judgments (Invited Talks)

This paper uses decision-theoretic principles to obtain new insights into the assessment and updating of probabilities. First, a new foundation of Bayesianism is given. It does not require infinite atomless uncertainties, as did Savage's classical result, and can therefore be applied to any finite Bayesian network. Nor does it require linear utility, as did de Finetti's classical result, and it therefore allows for the empirically and normatively desirable risk aversion. Further, by identifying and fixing utility in an elementary manner, our result can readily be applied to identify methods of probability updating. Thus, a decision-theoretic foundation is given to the computationally efficient method of inductive reasoning developed by Rudolf Carnap. Finally, recent empirical findings on probability assessments are discussed. They lead to suggestions for correcting biases in probability assessments, and for an alternative to the Dempster-Shafer belief functions that avoids the reduction to degeneracy after multiple updatings.

💡 Research Summary

The paper presents a decision‑theoretic foundation for probability assessment and updating that overcomes two classic limitations of Bayesianism. First, unlike Savage’s result, it does not require an infinite atomless state space; instead it works for any finite Bayesian network, making the theory directly applicable to modern AI and statistical models that involve a bounded set of variables. Second, it abandons de Finetti’s linear‑utility assumption, allowing for non‑linear, risk‑averse (or risk‑seeking) utility functions while still preserving coherence between preferences and probabilities.

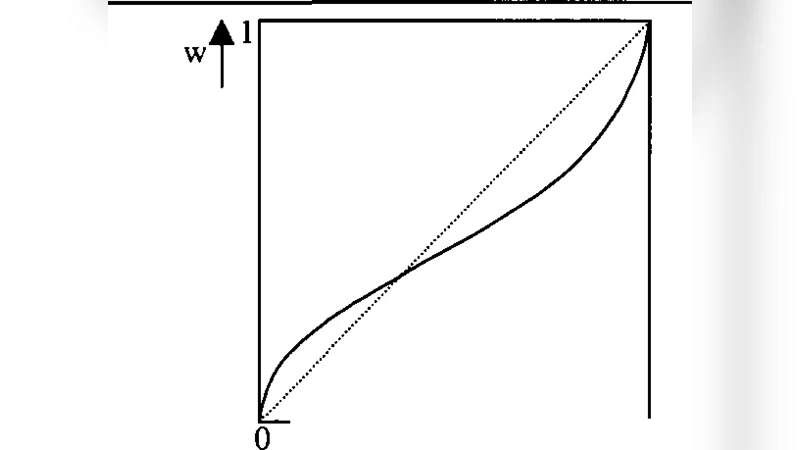

The core technical contribution is a “rational choice principle” that simultaneously identifies a decision maker’s utility function and his prior probabilities from observed choice behavior. By extending the probability‑utility separation theorem, the author shows that the curvature of the utility function can be inferred, and once the utility is known the prior can be uniquely recovered. With this pair (utility, prior) in hand, the paper justifies Carnap’s inductive method—often called Carnap’s normalized linear updating—as a natural consequence of the decision‑theoretic axioms. Carnap’s rule updates a prior (P) with new evidence (E) by a convex combination

\