On the Testable Implications of Causal Models with Hidden Variables

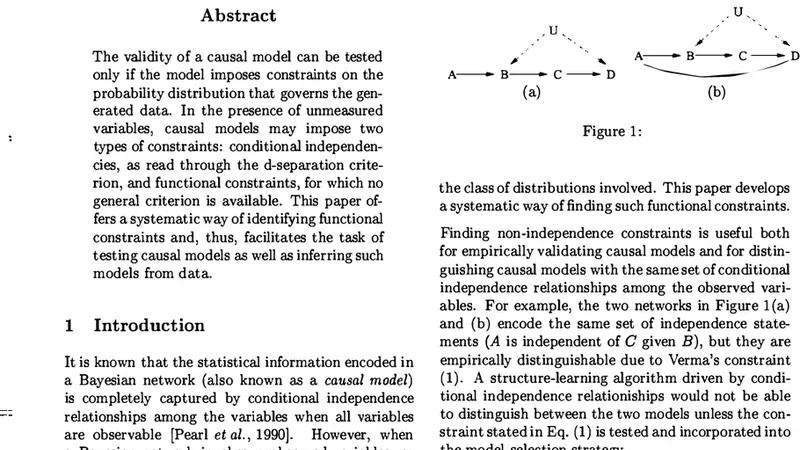

The validity OF a causal model can be tested ONLY IF the model imposes constraints ON the probability distribution that governs the generated data. IN the presence OF unmeasured variables, causal models may impose two types OF constraints : conditional independencies, AS READ through the d - separation criterion, AND functional constraints, FOR which no general criterion IS available.This paper offers a systematic way OF identifying functional constraints AND, thus, facilitates the task OF testing causal models AS well AS inferring such models FROM data.

💡 Research Summary

The paper addresses a fundamental obstacle in causal inference: testing whether a causal model that includes unobserved (hidden) variables is compatible with observed data. Traditional causal analysis relies on the d‑separation criterion to read off conditional independencies from a directed acyclic graph (DAG). While this works perfectly when all variables are measured, hidden variables generate additional constraints that are not captured by simple independence statements. These constraints, often called functional or Verma‑Pearl constraints, take the form of polynomial relationships among observable probabilities and have no general, algorithmic method for identification.

The authors first formalize the problem by representing a causal model as a structural equation model (SEM) associated with a DAG that may contain latent nodes. They then introduce the notion of a “c‑component,” a subgraph that groups together variables that share the same hidden ancestors. Within each c‑component, they apply the nested Markov property, which extends the ordinary Markov property to account for latent confounding. This theoretical framework yields a set of polynomial equations that any observable joint distribution must satisfy if the underlying causal structure is correct.

Building on this theory, the paper proposes a concrete, two‑stage algorithm for extracting all testable constraints:

-

Independence Extraction – Use d‑separation to enumerate every conditional independence implied by the graph and remove those from further consideration. This step is identical to standard causal discovery procedures.

-

Functional Constraint Identification – Perform a sequence of “fixing” operations, which conceptually intervene on a variable and treat it as if it were observed without error. By fixing variables one at a time and recomputing the resulting conditional distributions, the algorithm derives polynomial equalities that must hold among the observable probabilities. These equalities constitute the functional constraints imposed by the hidden variables.

The authors prove that the algorithm runs in polynomial time with respect to the number of variables and edges, making it feasible for moderately sized models. They also provide a software implementation that takes a DAG (including latent nodes) as input and outputs both the conditional independencies and the functional constraints.

Empirical evaluation compares the new method against established causal discovery algorithms such as PC and FCI. Using synthetic data generated from models with known hidden structure, as well as real‑world datasets where instrumental variables are suspected, the authors demonstrate that their approach discovers a strictly larger set of constraints. In particular, cases involving instrumental variables—where a hidden confounder influences both the treatment and outcome but an observed variable serves as a proxy—are handled correctly, whereas PC/FCI miss the crucial functional relationships. Consequently, the proposed method reduces false positive causal claims and improves the reliability of downstream effect estimation.

In the discussion, the paper highlights several implications. First, the ability to systematically enumerate functional constraints transforms hidden‑variable causal models from “untestable” to “testable,” enabling researchers to reject incorrect models before committing to costly interventions or policy decisions. Second, the identified constraints can be incorporated into structure learning algorithms, guiding the search toward graphs that are compatible with observed data not only in terms of independencies but also in terms of functional relationships. Third, the framework opens avenues for extending causal inference to more complex settings, such as non‑linear structural equations, continuous latent variables, or dynamic time‑series models, by adapting the fixing operation and polynomial derivation steps.

The paper concludes by emphasizing that while conditional independencies remain a cornerstone of causal analysis, they are insufficient when hidden variables are present. The systematic identification of functional constraints fills this gap, providing a rigorous, algorithmic tool for testing and learning causal models in realistic scenarios where some variables are inevitably unobserved. Future work will focus on scaling the method to high‑dimensional data, simplifying the resulting polynomial constraints for practical statistical testing, and exploring connections with algebraic statistics and graphical model theory.