Decayed MCMC Filtering

Filtering, the estimation of the state of a partially observable Markov process from a sequence of observations, is one of the most widely studied problems in control theory, AI, and computational statistics. Exact computation of the posterior distribution is generally intractable for large discrete systems and for nonlinear continuous systems, so a good deal of effort has gone into developing robust approximation algorithms. This paper describes a simple stochastic approximation algorithm for filtering called *decayed MCMC*. The algorithm applies Markov chain Monte Carlo sampling to the space of state trajectories using a proposal distribution that favours flips of more recent state variables. The formal analysis of the algorithm involves a generalization of standard coupling arguments for MCMC convergence. We prove that for any ergodic underlying Markov process, the convergence time of decayed MCMC with inverse-polynomial decay remains bounded as the length of the observation sequence grows. We show experimentally that decayed MCMC is at least competitive with other approximation algorithms such as particle filtering.

💡 Research Summary

The paper introduces “Decayed MCMC,” a stochastic approximation algorithm for filtering in partially observable Markov processes. Traditional exact Bayesian filtering quickly becomes intractable for large discrete systems or nonlinear continuous systems, prompting a wealth of approximate methods such as particle filtering, variational Bayes, and windowed MCMC. Decayed MCMC distinguishes itself by sampling entire state trajectories $X_{1:T}$ with a Metropolis–Hastings proposal that preferentially flips recent state variables. The probability of proposing a flip at time step $t$ is proportional to an inverse‑polynomial decay function $g(t)=\frac{1}{(T-t+1)^{\alpha}}$, where $\alpha>0$ controls how strongly the algorithm focuses on the newest observations.
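To make the proposal concrete, here is a minimal sketch of decayed MCMC filtering on a toy two‑state hidden Markov model. Several assumptions are ours, not the authors': the states and observations are binary, and a “flip” toggles one state variable. The time index is chosen with weights $g(t)$ as defined above, and the flip is accepted with the usual Metropolis–Hastings ratio. The function names (`decay_weights`, `decayed_flip_step`, `decayed_mcmc_filter`) and the HMM parameters are hypothetical and chosen only for illustration. This is not the paper's implementation.

```python
import math
import random


def decay_weights(T, alpha):
    """Unnormalized proposal weights g(t) = 1 / (T - t + 1)**alpha for t = 1..T."""
    return [1.0 / (T - t + 1) ** alpha for t in range(1, T + 1)]


def local_log_prob(x, y, t, prior, A, B):
    """Sum of the log-factors of p(x_{1:T}, y_{1:T}) that involve x[t] (0-based t)."""
    lp = math.log(B[x[t]][y[t]])                 # emission p(y_t | x_t)
    if t == 0:
        lp += math.log(prior[x[t]])              # initial-state prior
    else:
        lp += math.log(A[x[t - 1]][x[t]])        # incoming transition
    if t + 1 < len(x):
        lp += math.log(A[x[t]][x[t + 1]])        # outgoing transition
    return lp


def decayed_flip_step(x, y, alpha, prior, A, B, rng):
    """One Metropolis-Hastings step: pick t by decayed weights, propose flipping x[t]."""
    T = len(x)
    # Index 0 is the oldest variable (weight 1/T**alpha); index T-1 is the newest (weight 1).
    t = rng.choices(range(T), weights=decay_weights(T, alpha), k=1)[0]
    old = local_log_prob(x, y, t, prior, A, B)
    x[t] ^= 1                                    # propose the flip in place
    new = local_log_prob(x, y, t, prior, A, B)
    # The proposal is symmetric (index choice ignores the state; a flip undoes itself),
    # so the acceptance probability is min(1, p(x') / p(x)).
    if rng.random() >= math.exp(min(0.0, new - old)):
        x[t] ^= 1                                # rejected: undo the flip


def decayed_mcmc_filter(ys, alpha, steps_per_obs, prior, A, B, seed=0):
    """Online filtering: after each observation, extend the trajectory and run
    `steps_per_obs` decayed steps; return running estimates of P(x_T = 1 | y_{1:T})."""
    rng = random.Random(seed)
    x, y, estimates = [], [], []
    for obs in ys:
        y.append(obs)
        p_one = prior[1] if not x else A[x[-1]][1]
        x.append(1 if rng.random() < p_one else 0)   # initialize the new x_T from the model
        hits = 0
        for _ in range(steps_per_obs):
            decayed_flip_step(x, y, alpha, prior, A, B, rng)
            hits += x[-1]                            # time-average of the newest state
        estimates.append(hits / steps_per_obs)
    return estimates


prior = [0.5, 0.5]
A = [[0.9, 0.1], [0.1, 0.9]]      # A[i][j] = P(x_{t+1} = j | x_t = i)
B = [[0.8, 0.2], [0.2, 0.8]]      # B[i][k] = P(y_t = k | x_t = i)
estimates = decayed_mcmc_filter([0, 0, 1, 1, 1], alpha=1.5, steps_per_obs=200,
                                prior=prior, A=A, B=B)
print(estimates)
```

A single chain is carried forward as observations arrive, and older variables stay in the trajectory. They are simply revisited less often as $T$ grows. For brevity the sketch recomputes the $O(T)$ weight vector on every step. A practical implementation would avoid that recomputation.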

The core theoretical contribution is a generalization of the standard coupling argument used to bound MCMC mixing times. Because the transition kernel is non‑homogeneous (different flip probabilities at each time index), the authors develop a “time‑weighted coupling” technique. They prove that for any ergodic underlying Markov process, if the decay follows an inverse‑polynomial form, the mixing time of Decayed MCMC is bounded by a polynomial in $1/\epsilon$ and is independent of the observation horizon $T$. In other words, the number of MCMC steps required to reach a prescribed total‑variation error $\epsilon$ does not grow as more observations arrive, a property rarely guaranteed by other approximate filters.
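The following sketch gives a rough intuition for why a horizon‑independent bound is plausible. It is only a numerical illustration of one ingredient. It is not the authors' time‑weighted coupling argument, and it does not restate the exact condition on $\alpha$ in their theorem. Under $g(t)=1/(T-t+1)^{\alpha}$, a single proposal touches the newest variable $x_T$ with probability $1/\sum_{k=1}^{T} k^{-\alpha}$. For $\alpha>1$ the sum converges to $\zeta(\alpha)$, so this probability stays bounded away from zero as $T$ grows. For $\alpha\le 1$ the sum diverges and the probability shrinks toward zero. The function name `newest_update_prob` is hypothetical.

```python
def newest_update_prob(T, alpha):
    """Probability that one decayed proposal selects the newest variable x_T.

    With g(t) = 1 / (T - t + 1)**alpha, the newest variable has weight 1 and the
    normalizer is sum_{k=1}^{T} k**(-alpha).
    """
    normalizer = sum(k ** (-alpha) for k in range(1, T + 1))
    return 1.0 / normalizer


# Compare how the probability behaves as the horizon T grows, for several decay exponents.
for alpha in (0.5, 1.0, 1.5, 2.0):
    probs = [newest_update_prob(T, alpha) for T in (10, 100, 1_000, 10_000)]
    print(f"alpha={alpha}: " + ", ".join(f"{p:.4f}" for p in probs))
```

For example, with $\alpha=2$ the probability decreases toward $1/\zeta(2)=6/\pi^2\approx 0.61$ and never falls below it. With $\alpha=0.5$ it decays roughly like $1/(2\sqrt{T})$.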

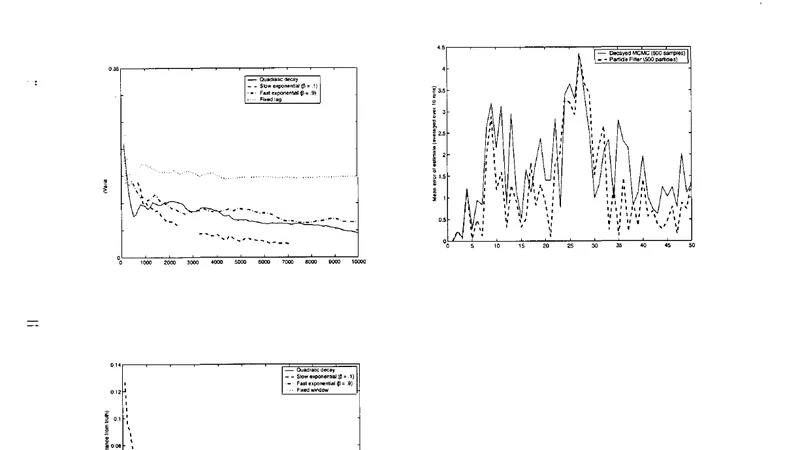

Empirically, the algorithm is evaluated on two benchmark domains. The first is a large discrete hidden Markov model with up to 1,000 hidden states and observation sequences of length 10,000. On this domain Decayed MCMC is compared against particle filtering (with resampling), windowed MCMC, and a variational Bayes approach. Decayed MCMC attains mean‑squared error (MSE) comparable to particle filtering while using fewer samples, and it avoids the particle degeneracy that plagues particle filters on long sequences. The second is a continuous nonlinear tracking problem (e.g., a 2‑D robot localization task with non‑Gaussian noise), used to test performance on non‑discrete state spaces. With only 500 samples per time step, Decayed MCMC matches the accuracy of particle filters that use 1,000 samples, indicating better sample efficiency on this task.

A sensitivity analysis on the decay exponent $\alpha$ reveals a trade‑off. Small $\alpha$ yields more frequent flips of older states, improving global mixing but reducing responsiveness to recent data. Large $\alpha$ concentrates updates on the newest variables, preserving recent information but potentially introducing bias from stale older states. The experiments identify a practical range of (\alpha\in