Hyperplane Arrangements and Locality-Sensitive Hashing with Lift

Locality-sensitive hashing converts high-dimensional feature vectors, such as image and speech features, into bit arrays and allows high-speed similarity calculation using the Hamming distance. One hashing scheme maps feature vectors to bit arrays according to the signs of the inner products between the feature vectors and the normal vectors of hyperplanes placed in the feature space. This hashing can be seen as a discretization of the feature space by hyperplanes. If labels for the data are given, the hyperplanes can be determined by learning algorithms. However, many proposed learning methods do not consider the hyperplanes’ offsets. Omitting the offsets decreases the number of partitioned regions and weakens the correlation between Hamming distances and Euclidean distances. In this paper, we propose a lift map that converts learning algorithms without offsets into ones that take offsets into account. With this method, learning methods without offsets produce discretizations of the space as if they took the offsets into account. We applied the proposed method to several high-dimensional feature data sets and studied the relationship between the statistical characteristics of the data, the number of hyperplanes, and the effect of the proposed method.

💡 Research Summary

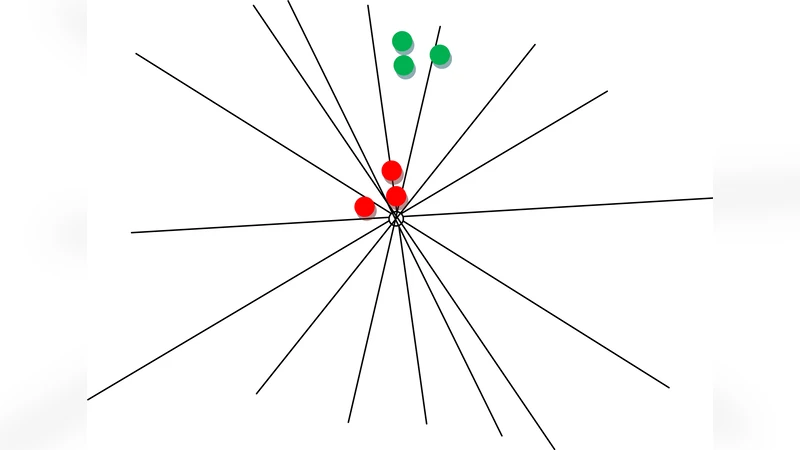

Locality‑Sensitive Hashing (LSH) is a widely used technique for fast approximate nearest‑neighbor search in high‑dimensional spaces. A popular variant hashes a vector into a binary code using a set of hyperplanes: each bit records on which side of one hyperplane the vector lies. In general a hyperplane is defined by a normal vector w and an offset (bias) b, so the decision boundary is w·x + b = 0 and the bit is 1 if w·x + b is positive and 0 otherwise. Many learning‑based approaches that adapt the hyperplanes to labeled data (e.g., Metric Learning for Hashing, Supervised LSH, Iterative Quantization) simplify the problem by fixing b = 0 and optimizing only w, so that each bit depends only on the sign of the inner product w·x. While this reduces the dimensionality of the optimization, it also limits the expressive power of the partitioning: without offsets, all hyperplanes pass through the origin, which reduces the number of distinct regions that can be created, especially when the data distribution is far from centered. Consequently, the correlation between Hamming distance in the binary space and Euclidean distance in the original space deteriorates, leading to lower recall and precision in retrieval tasks.
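To make the effect of the offsets concrete, here is a minimal sketch of sign‑based hashing in two dimensions. It counts how many distinct binary codes (that is, regions) three hyperplanes produce with and without offsets. The normal vectors, offsets, grid, and the helper name `hash_code` are illustrative assumptions, not values or code from the paper.

```python
from itertools import product


def hash_code(x, normals, offsets):
    """Return a bit tuple: bit k is 1 if w_k . x + b_k > 0, else 0."""
    return tuple(
        1 if sum(wi * xi for wi, xi in zip(w, x)) + b > 0 else 0
        for w, b in zip(normals, offsets)
    )


# Hypothetical hyperplanes (lines in 2-D); values chosen only for illustration.
normals = [(1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
no_offsets = [0.0, 0.0, 0.0]       # all three lines pass through the origin
with_offsets = [-1.0, -2.0, 0.5]   # lines x = 1, y = 2, x + y = -0.5

# Sample the square [-5, 5] x [-5, 5] on a grid with step 0.25.
grid = [(i * 0.25, j * 0.25) for i, j in product(range(-20, 21), repeat=2)]

codes_origin = {hash_code(p, normals, no_offsets) for p in grid}
codes_offset = {hash_code(p, normals, with_offsets) for p in grid}

print(len(codes_origin))  # 6 regions: three lines through the origin
print(len(codes_offset))  # 7 regions: three lines in general position
```

In this toy setup the offset‑free arrangement yields 6 regions while the arrangement with offsets yields 7, and the gap widens as more hyperplanes are added: n lines through the origin give at most 2n regions in the plane, whereas n lines in general position give 1 + n + n(n − 1)/2.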

The authors propose a “lift” transformation that incorporates offsets into any offset‑free learning algorithm. The idea is to embed the original d‑dimensional data x into a (d + 1)‑dimensional space by appending a constant coordinate 1, forming **x̃ = (x, 1)**.
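The lift idea described here (appending a constant coordinate 1) can be illustrated with a short sketch. It checks that a hyperplane through the origin in the lifted space, with normal (w, b), assigns the same bit as the hyperplane w·x + b = 0 in the original space. The function names and numeric values are hypothetical, and the exact form of the lift used in the paper may differ from this simplified version.

```python
def lift(x):
    """Append a constant coordinate 1 to x (assumed form of the lift)."""
    return tuple(x) + (1.0,)


def bit_without_offset(w, x):
    """Sign bit for a hyperplane through the origin: 1 if w . x > 0."""
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) > 0 else 0


def bit_with_offset(w, b, x):
    """Sign bit for a hyperplane with offset: 1 if w . x + b > 0."""
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b > 0 else 0


# Illustrative hyperplane and points in 2-D (values are assumptions).
w, b = (2.0, -1.0), 0.5
w_lifted = w + (b,)  # the offset becomes the last component of the normal

points = [(0.0, 0.0), (1.0, 3.0), (-1.0, -1.0), (0.25, 1.0)]

bits_offset = [bit_with_offset(w, b, p) for p in points]
bits_lifted = [bit_without_offset(w_lifted, lift(p)) for p in points]

print(bits_offset)  # [1, 0, 0, 0]
print(bits_lifted)  # [1, 0, 0, 0]
```

Because w̃·x̃ = w·x + b when w̃ = (w, b) and x̃ = (x, 1), an algorithm that only learns origin‑passing hyperplanes on the lifted data implicitly learns an offset for each hyperplane in the original space.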
