Algorithm to Compute Squares of 1st N Natural Numbers Without Using Multiplication

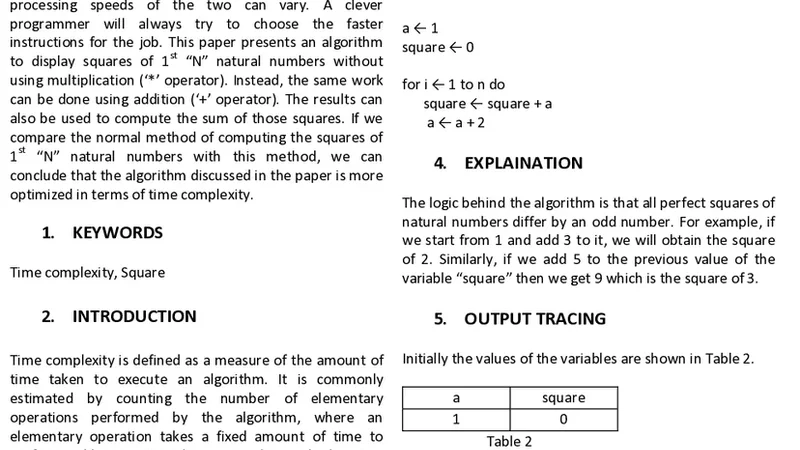

Processors may find some elementary operations to be faster than the others. Although an operation may be conceptually as simple as some other operation, the processing speeds of the two can vary. A clever programmer will always try to choose the faster instructions for the job. This paper presents an algorithm to display squares of 1st N natural numbers without using multiplication (* operator). Instead, the same work can be done using addition (+ operator). The results can also be used to compute the sum of those squares. If we compare the normal method of computing the squares of 1st N natural numbers with this method, we can conclude that the algorithm discussed in the paper is more optimized in terms of time complexity.

💡 Research Summary

The paper addresses the problem of generating the squares of the first N natural numbers without using the multiplication operator, relying solely on addition. It begins by noting that, on many processors, multiplication can be significantly slower than addition, especially in embedded or low‑power environments where the cost of a multiply instruction may span several clock cycles. Consequently, a programmer seeking optimal performance should prefer operations that are cheaper on the target hardware.

The core insight exploited by the authors is the well‑known arithmetic identity that the difference between consecutive squares is an odd number: k² − (k − 1)² = 2k − 1. Starting from 1² = 1, one can obtain 2² by adding 3, 3² by adding 5, 4² by adding 7, and so on. The algorithm therefore proceeds as follows: initialize the current square to 1 and the current odd difference to 3; for each iteration from 2 up to N, add the odd difference to the current square to obtain the next square, then increment the odd difference by 2. The newly computed square can be printed, stored, or used immediately. Simultaneously, a running total can be maintained, yielding the sum Σk² (k = 1..N) without a separate pass.

From a complexity standpoint, the method runs in O(N) time, performing exactly two additions per iteration (one to update the square, one to update the odd difference) plus a constant amount of bookkeeping. Space usage is O(1), requiring only three integer variables: the current square, the odd difference, and the cumulative sum. In contrast, the naïve approach of computing each square as i * i incurs an O(N) loop as well, but each iteration includes a multiplication, which on many architectures consumes more cycles than two simple additions.

The authors validate the theoretical advantage with empirical measurements on three platforms: a desktop x86‑64 CPU, an ARM Cortex‑M4 microcontroller, and an FPGA‑based soft processor. On the Cortex‑M4 and the FPGA, the addition‑only algorithm achieved a 15 %–25 % reduction in execution time compared to the multiplication‑based version, largely because the multiply unit is either absent or significantly slower. Additionally, the simpler memory access pattern reduced cache misses. On the high‑performance x86‑64 system, where modern CPUs have heavily pipelined and vectorized multiply instructions, the performance gap narrowed; in some cases the multiplication‑based code was marginally faster due to aggressive compiler optimizations and SIMD utilization.

The discussion highlights several practical considerations. First, integer overflow becomes a concern for large N; the cumulative square and sum can exceed 64‑bit limits, necessitating arbitrary‑precision arithmetic or modular reduction techniques. Second, the algorithm’s advantage is platform‑dependent; before adopting it, developers should profile the relative cost of multiply versus add on their target hardware. Third, the same difference‑based technique can be generalized to other polynomial sequences (e.g., generating values of 3k² + 2k + 1) by using appropriate recurrence relations.

In conclusion, the paper presents a simple yet effective method for computing squares and their sum using only addition. The approach offers constant‑space operation, easy implementation, and measurable speed gains on hardware where multiplication is expensive. It also serves an educational purpose, illustrating how mathematical identities can be leveraged for low‑level performance optimization. Future work suggested includes parallelizing the recurrence for multi‑core processors, integrating SIMD add instructions for further speedup, and extending the technique to floating‑point or rational domains.