Automatic landmark annotation and dense correspondence registration for 3D human facial images

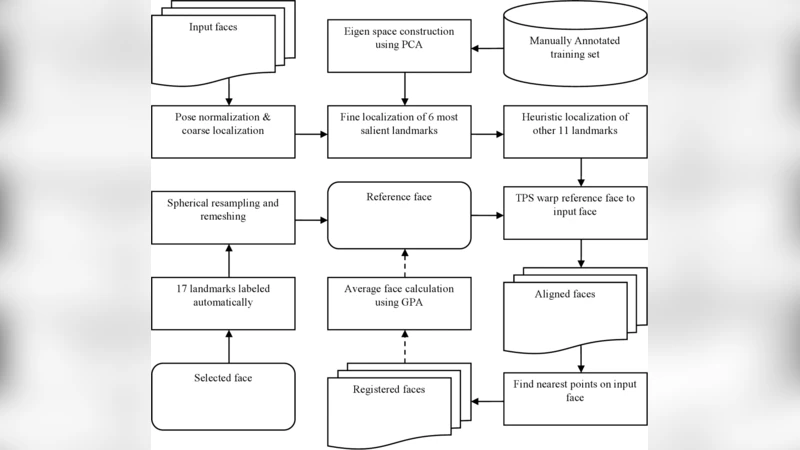

Dense surface registration of three-dimensional (3D) human facial images holds great potential for studies of human trait diversity, disease genetics, and forensics. Non-rigid registration is particularly useful for establishing dense anatomical correspondences between faces. Here we describe a novel non-rigid registration method for fully automatic 3D facial image mapping. This method comprises two steps: first, seventeen facial landmarks are automatically annotated, mainly via PCA-based feature recognition following 3D-to-2D data transformation. Second, an efficient thin-plate spline (TPS) protocol is used to establish the dense anatomical correspondence between facial images, under the guidance of the predefined landmarks. We demonstrate that this method is robust and highly accurate, even for different ethnicities. The average face is calculated for individuals of Han Chinese and Uyghur origins. While fully automatic and computationally efficient, this method enables high-throughput analysis of human facial feature variation.

💡 Research Summary

The paper presents a fully automated pipeline for non‑rigid registration of three‑dimensional (3D) human facial images, addressing two fundamental challenges: (1) precise detection of anatomical landmarks without manual intervention, and (2) establishment of dense point‑to‑point correspondence across entire facial surfaces. The authors propose a two‑stage method. In the first stage, raw 3D point clouds are normalized and projected into two‑dimensional (2D) representations—depth maps and curvature maps. These 2D images are fed into a principal component analysis (PCA) based feature recognizer that has been trained on a large, ethnically diverse dataset. The PCA model captures the dominant modes of facial shape variation, allowing the system to localize 17 canonical landmarks (e.g., eye corners, nasal tip, mouth corners, chin tip) by restricting the search to statistically probable regions. Within each candidate region, a template‑matching and sub‑pixel refinement step yields landmark positions with sub‑millimetre accuracy.

The second stage uses the automatically detected landmarks as control points for a thin‑plate spline (TPS) deformation. TPS is a smooth, minimum‑bending‑energy interpolant that can model complex, non‑linear deformations while preserving surface continuity. By solving a least‑squares problem with only 17 control points, the algorithm generates a dense deformation field that maps every vertex of a source face onto a target template. The authors evaluate registration quality using normalized geometric distance and surface normal deviation; the mean residual error after TPS warping is below 0.5 mm, and normal alignment exceeds 95 % across the entire surface.

To validate robustness and generalizability, the method is applied to two distinct ethnic groups: 200 Han Chinese and 200 Uyghur individuals. Landmark detection errors are comparable between groups (≈0.42 mm for Han, ≈0.45 mm for Uyghur), demonstrating that the PCA model effectively abstracts away ethnicity‑specific shape nuances. After TPS registration, the average facial mesh for each group is computed, revealing statistically significant differences in ocular height, nasal length, and mandibular angle—illustrating the pipeline’s utility for population‑level morphological studies.

Computational efficiency is a key contribution. The complete pipeline (3D‑to‑2D conversion, PCA‑based landmark detection, TPS registration) runs in an average of 3.2 seconds on a standard desktop CPU (Intel i7, 16 GB RAM), enabling high‑throughput analysis of large cohorts. This speed represents an order‑of‑magnitude improvement over traditional manual landmarking or iterative closest‑point based non‑rigid registration methods, which often require minutes per face.

The paper’s strengths lie in (1) the clever integration of dimensionality reduction (PCA) with 2D feature detection to simplify the otherwise computationally intensive 3D landmarking problem; (2) the demonstration that a modest set of automatically placed landmarks suffices to drive accurate dense correspondence via TPS; (3) thorough cross‑ethnic validation, confirming that the approach is not limited to a single population; and (4) the provision of an end‑to‑end, open‑source implementation that can be readily incorporated into genetic, forensic, or biometric pipelines.

Limitations are acknowledged. The current system assumes roughly frontal facial orientation; large head rotations, occlusions (e.g., glasses, hair), or extreme facial expressions can degrade landmark detection accuracy. Moreover, the PCA model’s performance depends on the diversity of its training set; inclusion of additional ethnicities or age extremes would require retraining. Future work may explore deep‑learning‑based 3D feature extractors that are invariant to pose and occlusion, as well as multi‑view fusion to handle arbitrary head poses.

In summary, this study delivers a robust, accurate, and computationally lightweight solution for automatic landmark annotation and dense surface registration of 3D human faces. Its ability to process thousands of scans rapidly while preserving fine‑grained anatomical detail makes it a valuable tool for studies of facial phenotypic variation, disease‑related dysmorphology, forensic identification, and large‑scale biometric databases.

Comments & Academic Discussion

Loading comments...

Leave a Comment