Towards Approximate Model Checking DC and PDC Specifications

DC has proved to be a promising tool for the specification and verification of functional requirements in the design of hard real-time systems. Much work has been devoted to developing effective techniques for checking models of hard real-time systems against DC specifications. However, DC model-checking theory is still evolving, and no tools yet support practical verification, owing to the high undecidability of the calculus and the great complexity of model checking. The situation for PDC model checking is much worse than that for DC. In view of the results achieved so far, it is desirable to develop approximate model-checking techniques for DC and PDC specifications. This work aims to develop approximate techniques for checking automata models of hard real-time systems against DC and PDC specifications. Unlike previous work, which deals only with decidable formulas, we aim to develop approximate techniques that cover all DC and PDC formulas. This paper describes the first results of our work, namely approximate techniques for checking real-time automata models of systems against LDI and PLDI specifications.

💡 Research Summary

The paper addresses the long-standing challenge of verifying hard real-time systems against specifications written in Duration Calculus (DC) and its probabilistic extension, Probabilistic Duration Calculus (PDC). DC is expressive enough to capture quantitative timing constraints such as response-time bounds, resource-consumption limits, and inter-event delays, but its model-checking problem is highly undecidable and computationally prohibitive. Existing verification techniques are therefore limited to decidable fragments of DC, leaving most realistic specifications unchecked. PDC, which extends DC to reason about the probability that a formula holds, is in an even worse position: virtually no practical tools exist.

Motivated by this gap, the authors propose an “approximate model checking” framework that deliberately relaxes the requirement of exact satisfaction and instead estimates, with statistically bounded error, whether a system model satisfies a given DC or PDC formula. The key idea is to treat verification as a statistical inference problem: by sampling executions of the system and evaluating the specification on those samples, one can compute confidence intervals for the satisfaction probability.
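To illustrate how sampled verdicts become a bounded-error estimate, the sketch below uses the two-sided Hoeffding bound, n ≥ ln(2/δ) / (2ε²). With that many samples, the empirical satisfaction rate lies within ε of the true probability with confidence at least 1 − δ. Choosing Hoeffding, and the helper names `hoeffding_sample_size` and `hoeffding_interval`, are assumptions made for illustration. The paper's own estimator may differ.

```python
import math


def hoeffding_sample_size(epsilon: float, delta: float) -> int:
    """Samples needed so the empirical satisfaction rate is within epsilon of the
    true probability with confidence >= 1 - delta (two-sided Hoeffding bound)."""
    if not (0 < epsilon < 1 and 0 < delta < 1):
        raise ValueError("epsilon and delta must lie in (0, 1)")
    return math.ceil(math.log(2 / delta) / (2 * epsilon ** 2))


def hoeffding_interval(successes: int, n: int, delta: float) -> tuple[float, float]:
    """Two-sided (1 - delta) confidence interval for the satisfaction probability,
    given `successes` satisfying runs out of `n`, clipped to [0, 1]."""
    p_hat = successes / n
    half_width = math.sqrt(math.log(2 / delta) / (2 * n))
    return max(0.0, p_hat - half_width), min(1.0, p_hat + half_width)
```

For example, ε = δ = 0.05 requires 738 sampled executions. The Hoeffding bound is distribution-free but conservative. Exact binomial intervals such as Clopper-Pearson are often narrower for the same number of samples.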

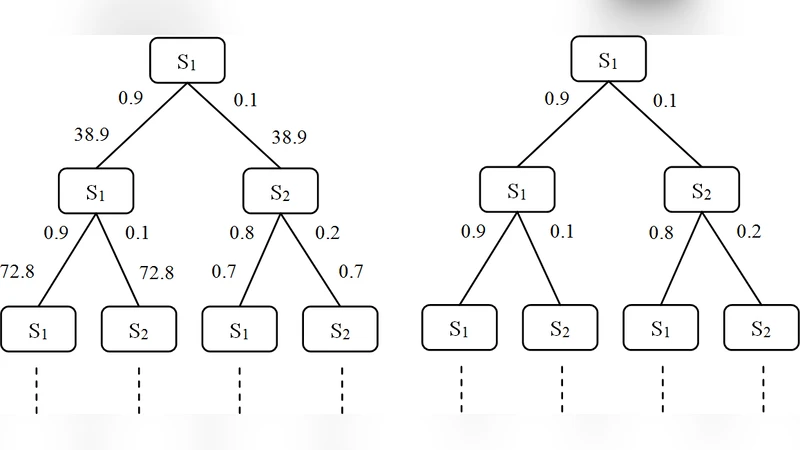

The technical contributions are organized around three pillars. (1) Real-time systems are represented as real-time automata, i.e., timed automata with explicit time intervals on transitions. (2) The work focuses on two expressive specification classes, Linear Duration Invariants (LDI) and Probabilistic Linear Duration Invariants (PLDI). An LDI has the form a ≤ ℓ ≤ b ⇒ Σ cᵢ·∫Sᵢ ≤ M. For every observation interval whose length ℓ lies between a and b, a weighted sum of the accumulated durations ∫Sᵢ of system states must not exceed M (e.g., "in any observation window of at most 10 ms, the time spent Busy minus the time spent Idle never exceeds 5 ms"). PLDI extends LDI with a probability threshold (e.g., "with probability at least 0.9, the LDI holds"). These classes cover many practical timing requirements while remaining amenable to statistical treatment. (3) A suite of approximation techniques makes the inference tractable.
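To make the LDI form concrete, here is a minimal sketch that evaluates an LDI a ≤ ℓ ≤ b ⇒ Σ c_s·∫s ≤ M on a single observation interval of a finite, piecewise-constant trace. Several details are hypothetical and used only for illustration: the trace encoding (a list of `(state, length)` segments starting at time 0) and the names `LDI`, `durations` and `holds_on`. A real checker must quantify over all observation intervals, not just one.

```python
from dataclasses import dataclass


@dataclass
class LDI:
    """Hypothetical encoding of  a <= len <= b  ==>  sum_s coeffs[s] * dur(s) <= m."""
    a: float
    b: float
    coeffs: dict[str, float]
    m: float


def durations(trace: list[tuple[str, float]], begin: float, end: float) -> dict[str, float]:
    """Time spent in each state during [begin, end]; `trace` is a list of
    (state, length) segments laid end to end starting at time 0."""
    acc: dict[str, float] = {}
    t = 0.0
    for state, length in trace:
        lo, hi = max(t, begin), min(t + length, end)  # overlap of segment and window
        if hi > lo:
            acc[state] = acc.get(state, 0.0) + (hi - lo)
        t += length
    return acc


def holds_on(ldi: LDI, trace: list[tuple[str, float]], begin: float, end: float) -> bool:
    """Truth value of the LDI on the single observation interval [begin, end]."""
    if not (ldi.a <= end - begin <= ldi.b):
        return True  # length outside [a, b]: the implication holds vacuously
    dur = durations(trace, begin, end)
    total = sum(c * dur.get(s, 0.0) for s, c in ldi.coeffs.items())
    return total <= ldi.m
```

Consider the trace Busy 4, Idle 2, Busy 5, Idle 1 (ms) with the LDI 0 ≤ ℓ ≤ 10 ⇒ ∫Busy − ∫Idle ≤ 5. The window [0, 10] spends 8 ms Busy and 2 ms Idle, so the difference is 6 and the LDI fails there. The window [2, 12] gives 7 − 3 = 4, so it passes.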

First, continuous time is discretized by partitioning each transition's time interval into a finite set of sub-intervals. For each sub-interval, the authors compute the best-case and worst-case values of the LDI's linear expression. These bound its value for any concrete execution that traverses that sub-interval. The bounding step reduces the infinite-state verification problem to a finite one without sacrificing soundness. Consider an LDI of the form Σ cᵢ·∫Sᵢ ≤ M. If even the lower bound exceeds M, the specification is definitively violated. If even the upper bound is at most M, it is definitively satisfied. Otherwise the result is inconclusive and the partition must be refined.
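The three-valued bounding step can be sketched as interval arithmetic over a box of possible state durations. The sketch assumes that each sub-interval of the partition has already been translated into a per-state duration range `(lo, hi)`. That translation is hypothetical, as are the names `expression_bounds`, `classify` and `bisect_widest`. The box ignores correlations between durations. As long as it over-approximates the reachable durations, the computed range can only be wider than the true one, so SATISFIED and VIOLATED verdicts remain sound.

```python
from enum import Enum


class Verdict(Enum):
    SATISFIED = "satisfied"
    VIOLATED = "violated"
    INCONCLUSIVE = "inconclusive"


def expression_bounds(coeffs: dict[str, float],
                      dur_bounds: dict[str, tuple[float, float]]) -> tuple[float, float]:
    """Range of sum_s c_s * d_s when each d_s lies in dur_bounds[s] = (lo, hi).
    Positive coefficients use lo for the minimum and hi for the maximum;
    negative coefficients use them the other way round."""
    lo_total = hi_total = 0.0
    for s, c in coeffs.items():
        lo, hi = dur_bounds[s]
        if c >= 0:
            lo_total += c * lo
            hi_total += c * hi
        else:
            lo_total += c * hi
            hi_total += c * lo
    return lo_total, hi_total


def classify(coeffs: dict[str, float],
             dur_bounds: dict[str, tuple[float, float]], m: float) -> Verdict:
    """Three-valued check of  sum_s c_s * d_s <= m  over a whole box of durations."""
    lo_total, hi_total = expression_bounds(coeffs, dur_bounds)
    if hi_total <= m:
        return Verdict.SATISFIED      # even the worst case meets the bound
    if lo_total > m:
        return Verdict.VIOLATED       # even the best case exceeds the bound
    return Verdict.INCONCLUSIVE       # bounds straddle m: refine further


def bisect_widest(dur_bounds: dict[str, tuple[float, float]]):
    """Refinement step: split the widest duration range in half, giving two boxes."""
    s = max(dur_bounds, key=lambda k: dur_bounds[k][1] - dur_bounds[k][0])
    lo, hi = dur_bounds[s]
    mid = (lo + hi) / 2
    return {**dur_bounds, s: (lo, mid)}, {**dur_bounds, s: (mid, hi)}
```

Take Busy ∈ [4, 8] and Idle ∈ [1, 2], with coefficients +1 and −1 and M = 5. The expression ranges over [2, 7], which is inconclusive. Bisecting Busy yields one box that is satisfied (range [2, 5]) and one that is still inconclusive (range [4, 7]).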

Second, the framework resolves inconclusive cases with Monte-Carlo simulation combined with adaptive sampling. An initial set of random executions is generated. Its outcomes are used to identify "critical" sub-intervals, where the bounds straddle the threshold and the verdict is still open. Sampling density is then increased selectively in those regions. This improves the precision of the estimated satisfaction probability while keeping the total number of simulations modest.
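One simple way to realize adaptive sampling is stratified Monte-Carlo. After a uniform pilot round, each further batch of simulations goes to the region whose weighted estimate currently has the largest variance. Here a region stands in for a critical sub-interval. This allocation rule, the region weights and the function `adaptive_estimate` are assumptions for illustration, not the paper's algorithm.

```python
import random
from typing import Callable


def adaptive_estimate(
    regions: list[Callable[[random.Random], bool]],
    weights: list[float],
    budget: int,
    pilot: int = 50,
    batch: int = 50,
    seed: int = 0,
) -> tuple[float, list[int]]:
    """Stratified Monte-Carlo estimate of P(spec holds) = sum_r weights[r] * p_r.

    regions[r](rng) simulates one execution inside region r and reports whether
    the specification holds; weights[r] is the probability of landing in region r.
    After a uniform pilot round, each further batch goes to the region whose
    weighted estimate currently has the largest variance.
    """
    if pilot < 1:
        raise ValueError("pilot must be at least 1")
    rng = random.Random(seed)
    hits = [0] * len(regions)
    counts = [0] * len(regions)

    def sample(r: int, n: int) -> None:
        for _ in range(n):
            hits[r] += int(regions[r](rng))
        counts[r] += n

    def weighted_variance(r: int) -> float:
        p = (hits[r] + 1) / (counts[r] + 2)  # Laplace smoothing avoids zero variance
        return weights[r] ** 2 * p * (1 - p) / counts[r]

    for r in range(len(regions)):
        sample(r, pilot)
    remaining = budget - len(regions) * pilot
    while remaining > 0:
        r = max(range(len(regions)), key=weighted_variance)  # least certain region
        n = min(batch, remaining)
        sample(r, n)
        remaining -= n

    estimate = sum(w * h / c for w, h, c in zip(weights, hits, counts))
    return estimate, counts
```

Suppose there is one always-satisfied region (weight 0.5), one always-violated region (0.2) and one fair-coin region (0.3). The true probability is then 0.65. Most of a 2000-sample budget ends up in the coin-flip region, the only one whose outcome is genuinely uncertain.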

Third, the authors parallelize the simulation workload across multiple cores and machines, creating a distributed environment that can explore thousands of execution paths concurrently. This distributed Monte‑Carlo approach reduces wall‑clock time from hours to minutes for the benchmark systems examined.
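Sampled executions are independent, so the simulation workload is embarrassingly parallel. The sketch below is a single-machine Python stand-in for the authors' multi-core, multi-machine setup. It splits the work into fixed-size chunks, gives each chunk its own seeded generator, and maps the chunks over a `concurrent.futures` executor. The toy model in `simulate_once` (Busy ~ U(3, 7) ms, Idle ~ U(1, 3) ms, check Busy − Idle ≤ 3, whose true probability is 0.5) is hypothetical, as are all the function names.

```python
import random
from concurrent.futures import ProcessPoolExecutor, ThreadPoolExecutor


def simulate_once(rng: random.Random) -> bool:
    """Hypothetical toy model: one cycle is Busy for U(3, 7) ms and Idle for
    U(1, 3) ms; the sampled check Busy - Idle <= 3 holds with probability 0.5."""
    busy = rng.uniform(3.0, 7.0)
    idle = rng.uniform(1.0, 3.0)
    return busy - idle <= 3.0


def run_chunk(job: tuple[str, int]) -> int:
    """Simulate n executions with a generator seeded by chunk_seed; count successes."""
    chunk_seed, n = job
    rng = random.Random(chunk_seed)  # str seeds are deterministic across runs
    return sum(simulate_once(rng) for _ in range(n))


def parallel_estimate(n_samples: int, chunk_size: int = 10_000, seed: int = 0,
                      workers: int = 4, executor_cls=ProcessPoolExecutor) -> float:
    """Estimate the satisfaction probability by mapping fixed-size chunks over a pool.

    Each chunk's seed depends only on (seed, chunk index), so the result does not
    depend on the number of workers or the order in which chunks finish.
    """
    if n_samples <= 0 or chunk_size <= 0:
        raise ValueError("n_samples and chunk_size must be positive")
    jobs = [(f"{seed}:{i}", min(chunk_size, n_samples - start))
            for i, start in enumerate(range(0, n_samples, chunk_size))]
    with executor_cls(max_workers=workers) as pool:
        satisfied = sum(pool.map(run_chunk, jobs))
    return satisfied / n_samples
```

Chunk seeds depend only on the base seed and the chunk index, so the estimate is identical for any worker count. With the default `ProcessPoolExecutor`, call `parallel_estimate` from under an `if __name__ == "__main__":` guard, as spawn-based platforms require. A `ThreadPoolExecutor` works as a drop-in for testing. Because of the GIL, however, it gives little speedup for CPU-bound pure-Python simulation.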

The experimental evaluation uses two representative hard real-time case studies: (a) a fixed-priority real-time scheduler with sporadic tasks, and (b) a network router that processes packets with stochastic loss and variable service times. Both systems are modeled as timed automata and checked against a suite of LDI and PLDI specifications derived from realistic design requirements. Results show that the approximate checker achieves over 95 % empirical accuracy on LDI formulas, with an average absolute error below 5 %. For PLDI specifications, the method produces confidence intervals that contain the true satisfaction probability with at least 95 % confidence. Moreover, increasing the number of samples tenfold increases total verification time by less than a factor of two, indicating good scalability.

The paper concludes that approximate model checking makes it feasible to verify the full expressive power of DC and PDC in practice, offering a controllable trade‑off between computational effort and verification confidence. The authors outline several promising directions for future work: extending the approach to richer temporal logics such as Metric Temporal Logic (MTL) or Signal Temporal Logic (STL); integrating multi‑objective optimization to balance different quality‑of‑service constraints; employing machine‑learning‑guided sampling strategies to predict high‑risk regions of the state space; and ultimately packaging the techniques into a user‑friendly verification toolchain for industry adoption. In sum, this work represents a significant step toward bridging the gap between the theoretical richness of DC/PDC specifications and the practical needs of real‑time system engineers.
