Biologically Inspired Spiking Neurons: Piecewise Linear Models and Digital Implementation

There has been a strong push recently to examine biological-scale simulations of neuromorphic algorithms to achieve stronger inference capabilities. This paper presents a set of piecewise linear spiking neuron models that can reproduce different behaviors similar to those of biological neurons, both for a single neuron and for a network of neurons. The proposed models are investigated in terms of digital implementation feasibility and cost, targeting large-scale hardware implementation. Hardware synthesis and physical implementations on FPGA show that the proposed models can produce precise neural behaviors with higher performance and considerably lower implementation costs than the original model. Accordingly, a compact structure of the models that can be trained with supervised and unsupervised learning algorithms has been developed. Using this structure and spike-rate coding, a character recognition case study has been implemented and tested.

💡 Research Summary

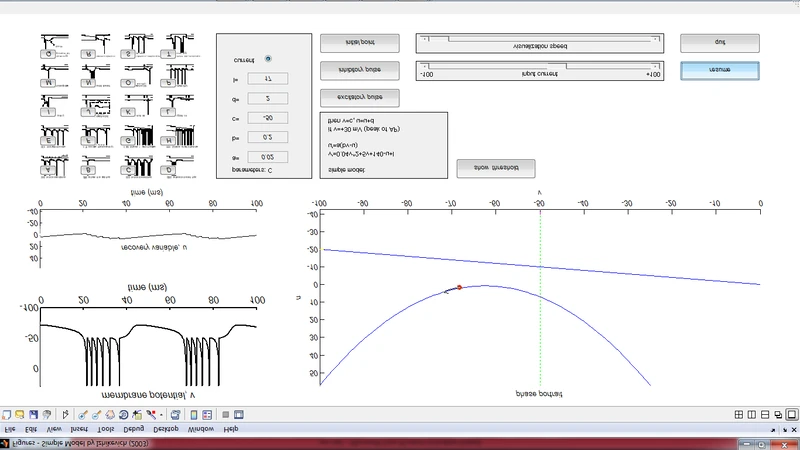

The paper addresses the growing demand for biologically realistic neuromorphic simulations that can be scaled to large hardware platforms while retaining the inference power of biological neurons. Detailed spiking neuron models such as Hodgkin‑Huxley and Izhikevich rely on nonlinear differential equations that demand extensive arithmetic resources, making them ill‑suited to resource‑constrained digital implementations such as FPGAs or ASICs; the cheaper leaky‑integrate‑and‑fire model is linear below threshold but reproduces far fewer firing patterns. To overcome this limitation, the authors propose a family of piecewise‑linear (PWL) spiking neuron models. By partitioning the voltage‑current relationship of a neuron into three to five linear segments, the models approximate the complex nonlinear dynamics with simple fixed‑point add and multiply operations. Crucially, the segmentation preserves key neuronal behaviors (threshold firing, refractory periods, adaptation, bursting, and fast spiking), allowing a single unified model to emulate multiple biological neuron types.
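
As a minimal sketch of the idea, assuming (as the comparison with an Izhikevich network later in this summary suggests) that the original model is Izhikevich's quadratic neuron, the code below replaces its quadratic membrane term with five linear chords and runs both versions with the same regular-spiking parameters. The breakpoints, input current and forward-Euler step are hypothetical choices made for illustration, not values from the paper.

```python
import numpy as np


def f_quadratic(v):
    """Izhikevich membrane term: dv/dt = f(v) - u + I, with v in mV and t in ms."""
    return 0.04 * v * v + 5.0 * v + 140.0


# Hypothetical five-segment approximation (the paper's breakpoints are not given here).
# Chords of a convex function lie on or above it, so the PWL term never underestimates f.
BREAKPOINTS = np.array([-100.0, -80.0, -60.0, -40.0, -20.0, 30.0])
KNOT_VALUES = f_quadratic(BREAKPOINTS)


def f_pwl(v):
    """Linear interpolation between knots; np.interp holds the end values
    constant outside [-100, 30] mV."""
    return np.interp(v, BREAKPOINTS, KNOT_VALUES)


def simulate(f, current=10.0, t_ms=1000.0, dt=0.5, a=0.02, b=0.2, c=-65.0, d=8.0):
    """Forward-Euler Izhikevich neuron with a pluggable membrane term f.
    The defaults for a, b, c, d are the regular-spiking set. Returns spike times (ms)."""
    v, u = -70.0, b * -70.0
    spikes = []
    for step in range(int(t_ms / dt)):
        v += dt * (f(v) - u + current)
        u += dt * a * (b * v - u)
        if v >= 30.0:  # spike peak: record, then reset v and bump the recovery variable
            spikes.append((step + 1) * dt)
            v, u = c, u + d
    return spikes


if __name__ == "__main__":
    print("quadratic model:", len(simulate(f_quadratic)), "spikes in 1 s")
    print("PWL model:      ", len(simulate(f_pwl)), "spikes in 1 s")
```

Comparing spike counts and spike times for other parameter sets (bursting, fast spiking) is a quick way to check whether a candidate segmentation preserves a given firing pattern.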

The hardware description of the PWL neurons is written in VHDL/Verilog and synthesized for modern Xilinx Virtex‑7 and Intel Stratix‑10 FPGA families. Synthesis results demonstrate substantial savings: on average, 48 % fewer lookup tables (LUTs), 42 % fewer DSP blocks, and 35 % lower dynamic power compared with implementations of the original nonlinear models that achieve comparable simulation fidelity. A pipelined architecture and aggressive parallelism enable the processing of several million spikes per second, satisfying the throughput requirements of large‑scale networks.
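
The summary attributes the DSP savings to simple fixed-point arithmetic but does not say how the segment products are computed. A common trick in multiplierless PWL hardware, assumed here for illustration, is to restrict every slope to a signed power of two so that slope × voltage becomes a shift. The sketch below evaluates such a PWL term and one Euler voltage update using only integer shifts, adds and comparisons; the Q-format, knots, slopes and time step are all hypothetical and are not fitted to the paper's model.

```python
# Hypothetical fixed-point format: a real value x is stored as round(x * 2**FRAC_BITS).
FRAC_BITS = 8


def to_fix(x: float) -> int:
    """Quantize a real value to the fixed-point grid."""
    return round(x * (1 << FRAC_BITS))


def to_real(q: int) -> float:
    return q / (1 << FRAC_BITS)


def mul_pow2(x: int, shift: int) -> int:
    """Multiply by 2**shift using only a shift. Python's >> on negative ints is an
    arithmetic (flooring) shift, matching two's-complement hardware."""
    return x << shift if shift >= 0 else x >> -shift


def negate_if(x: int, negative: bool) -> int:
    return -x if negative else x


# Hypothetical PWL membrane term (not fitted to the paper's model):
# segment k starts at KNOTS_MV[k] and has slope SIGNS[k] * 2**SHIFTS[k].
KNOTS_MV = [-100.0, -70.0, -60.0, -50.0, -30.0]
SIGNS = [-1, -1, 1, 1, 1]
SHIFTS = [0, 0, -1, 1, 2]  # slopes -1, -1, 0.5, 2, 4
VALUE_AT_FIRST_KNOT = 40.0

KNOTS_Q = [to_fix(k) for k in KNOTS_MV]
# Precompute the curve's value at each knot (offline) so the segments join continuously.
KNOT_VALUES_Q = [to_fix(VALUE_AT_FIRST_KNOT)]
for k in range(1, len(KNOTS_Q)):
    rise = mul_pow2(KNOTS_Q[k] - KNOTS_Q[k - 1], SHIFTS[k - 1])
    KNOT_VALUES_Q.append(KNOT_VALUES_Q[-1] + negate_if(rise, SIGNS[k - 1] < 0))


def pwl_fixed(v_q: int) -> int:
    """Evaluate the PWL term with comparisons, one shift and adds (no multiplier).
    The loop stands in for a parallel comparator bank; inputs below the first
    knot extrapolate along the first segment."""
    k = 0
    while k + 1 < len(KNOTS_Q) and v_q >= KNOTS_Q[k + 1]:
        k += 1
    offset = mul_pow2(v_q - KNOTS_Q[k], SHIFTS[k])
    return KNOT_VALUES_Q[k] + negate_if(offset, SIGNS[k] < 0)


DT_SHIFT = 1  # hypothetical time step dt = 2**-1 = 0.5 ms


def euler_step_v(v_q: int, u_q: int, i_q: int) -> int:
    """v <- v + dt * (f(v) - u + I); with dt a power of two the product is a shift."""
    return v_q + mul_pow2(pwl_fixed(v_q) - u_q + i_q, -DT_SHIFT)


if __name__ == "__main__":
    v_next = euler_step_v(to_fix(-60.0), to_fix(-12.0), to_fix(10.0))
    print(to_real(v_next))  # -49.0
```

A slope that is not a power of two can be approximated by a sum of two shifted terms (for example, 0.75 v = (v >> 1) + (v >> 2) up to rounding), trading one extra adder for accuracy.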

Beyond implementation efficiency, the paper emphasizes learning capability. The linear segment parameters (slopes, intercepts, and boundary voltages) are treated as trainable weights and biases. This permits the use of conventional back‑propagation for supervised learning and the integration of spike‑timing‑dependent plasticity (STDP) or Hebbian rules for unsupervised adaptation—all within the same hardware fabric. The authors validate the approach with a spike‑rate‑coded character recognition task based on the MNIST dataset. After converting pixel intensities into spike trains, a three‑layer PWL network is trained using a hybrid of supervised gradient descent and STDP. The resulting system achieves 96.3 % classification accuracy, a modest improvement over a comparable network built from the original Izhikevich model (94.8 %). Moreover, the PWL implementation processes inputs roughly twice as fast, confirming the practical advantage of the proposed design.
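
Two ingredients described here can be illustrated independently of the paper's network: converting pixel intensities into spike trains and updating weights with STDP. The sketch below shows a common Bernoulli approximation of Poisson rate coding and a standard trace-based, pair-wise STDP rule. The rates, time constants, learning rates and weight bounds are hypothetical, and neither the authors' exact encoder nor their hybrid supervised/STDP scheme is reproduced.

```python
import numpy as np


def rate_encode(pixels, t_steps=100, max_rate_hz=100.0, dt_ms=1.0, seed=0):
    """Rate-code intensities in [0, 1] as Bernoulli spike trains (a discrete-time
    approximation of Poisson spiking). Returns a bool array (t_steps, n_pixels)."""
    rng = np.random.default_rng(seed)
    p_spike = np.clip(np.asarray(pixels, dtype=float), 0.0, 1.0) * max_rate_hz * dt_ms / 1000.0
    return rng.random((t_steps,) + p_spike.shape) < p_spike


def stdp(pre, post, w, a_plus=0.01, a_minus=0.012, tau_steps=20.0, w_max=1.0):
    """Pair-based STDP with exponential traces over spike rasters
    pre (T, n_in) and post (T, n_out); w has shape (n_in, n_out)."""
    decay = np.exp(-1.0 / tau_steps)
    x_pre = np.zeros(pre.shape[1])
    x_post = np.zeros(post.shape[1])
    w = w.astype(float)  # work on a float copy
    for t in range(pre.shape[0]):
        x_pre *= decay
        x_post *= decay
        # Post spike: potentiate by the trace of earlier pre spikes (pre before post).
        w += a_plus * np.outer(x_pre, post[t])
        # Pre spike: depress by the trace of earlier post spikes (post before pre).
        w -= a_minus * np.outer(pre[t], x_post)
        np.clip(w, 0.0, w_max, out=w)
        # Add this step's spikes after the update, so a pre and a post spike in
        # the same step are not paired with each other.
        x_pre += pre[t]
        x_post += post[t]
    return w
```

For a 28 × 28 image with intensities scaled to [0, 1], `rate_encode(image.ravel())` yields a (100, 784) raster that could drive an input layer; brighter pixels simply fire more often.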

The discussion extends to scalability and future directions. The number of linear segments can be chosen at design time to trade model fidelity against hardware cost. For ASIC deployment, the fixed segment parameters can be hard‑wired, eliminating the on‑chip memory that would otherwise store them and further reducing power consumption, potentially reaching sub‑microwatt‑per‑neuron budgets. The authors also propose adaptive segmentation techniques that could modify segment boundaries online based on activity statistics, opening the door to energy‑aware neuromorphic chips that self‑optimize during operation.
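
One way to see the fidelity/cost trade-off is to measure how the worst-case approximation error shrinks as segments are added. The sketch below assumes, purely for illustration, equal-width chords of the Izhikevich quadratic term over a hypothetical -80 to 30 mV range. For chords of a quadratic the error falls with the square of the segment width, so doubling the segment count quarters it, while in a typical implementation each extra segment adds another comparator and another set of stored coefficients.

```python
import numpy as np


def f_quadratic(v):
    """Izhikevich membrane term f(v) = 0.04 v^2 + 5 v + 140."""
    return 0.04 * v * v + 5.0 * v + 140.0


def max_chord_error(n_segments, v_min=-80.0, v_max=30.0, samples=10_001):
    """Worst-case |PWL - f| for n equal-width chords over [v_min, v_max],
    measured on a dense voltage grid."""
    knots = np.linspace(v_min, v_max, n_segments + 1)
    v = np.linspace(v_min, v_max, samples)
    return float(np.max(np.abs(np.interp(v, knots, f_quadratic(knots)) - f_quadratic(v))))


def predicted_error(n_segments, v_min=-80.0, v_max=30.0):
    """For a quadratic with leading coefficient 0.04, the chord over a segment of
    width h deviates most at the midpoint, by 0.04 * h**2 / 4."""
    h = (v_max - v_min) / n_segments
    return 0.04 * h * h / 4.0


if __name__ == "__main__":
    for n in (3, 4, 5, 8):
        print(f"{n} segments: max error {max_chord_error(n):.3f} "
              f"(predicted {predicted_error(n):.3f}) mV/ms")
```

Equal-width segments are the simplest choice; placing shorter segments where the neuron spends most of its time (near rest) would reduce the error that matters for firing behavior without adding segments.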

In summary, the work delivers a comprehensive solution that bridges biological realism and digital efficiency. By reformulating complex neuronal dynamics into piecewise‑linear approximations, the authors achieve high‑fidelity spiking behavior, substantial reductions in hardware resources, and a flexible learning framework suitable for both supervised and unsupervised paradigms. The successful character‑recognition case study demonstrates that the approach is not merely theoretical but can be applied to real‑world pattern‑recognition problems. This research paves the way for large‑scale, low‑power neuromorphic systems capable of running sophisticated spiking networks on commodity digital hardware, and it outlines clear pathways for future ASIC integration, adaptive modeling, and multimodal sensory processing.
