Policy-contingent abstraction for robust robot control

This paper presents a scalable control algorithm that enables a deployed mobile robot system to make high-level decisions under full consideration of its probabilistic belief. Our approach is based on insights from the rich literature of hierarchical controllers and hierarchical MDPs. The resulting controller has been successfully deployed in a nursing facility near Pittsburgh, PA. To the best of our knowledge, this work is a unique instance of applying POMDPs to high-level robotic control problems.

💡 Research Summary

The paper introduces a scalable control architecture for mobile robots that fully incorporates probabilistic belief into high‑level decision making. The core contribution is “policy‑contingent abstraction,” a dynamic state‑space reduction technique that only retains belief components relevant to the currently selected policy. By coupling this abstraction with a hierarchical decomposition of the decision problem—separating high‑level mission planning from low‑level motion execution—the authors overcome the classic curse of dimensionality that makes exact POMDP solutions intractable for real‑world robots.

The algorithm proceeds in four stages. First, an initial belief distribution is used to define a set of abstract high‑level goals (e.g., visit a patient room, deliver medication, respond to an emergency). For each goal, a compact abstract state is generated by projecting the full belief onto a subset of variables that directly affect that goal. Second, a local POMDP is solved for each abstract state, yielding a policy‑dependent value function that quantifies the expected return of following the current high‑level policy. Third, the high‑level controller selects or switches goals by comparing these value functions; the chosen goal then drives a low‑level controller that plans concrete motion trajectories, avoids obstacles, and performs fine‑grained actions. Fourth, as new observations arrive, the belief is updated via Bayesian filtering, and if the policy changes, the abstraction is recomputed, ensuring that the belief representation remains as compact as possible.

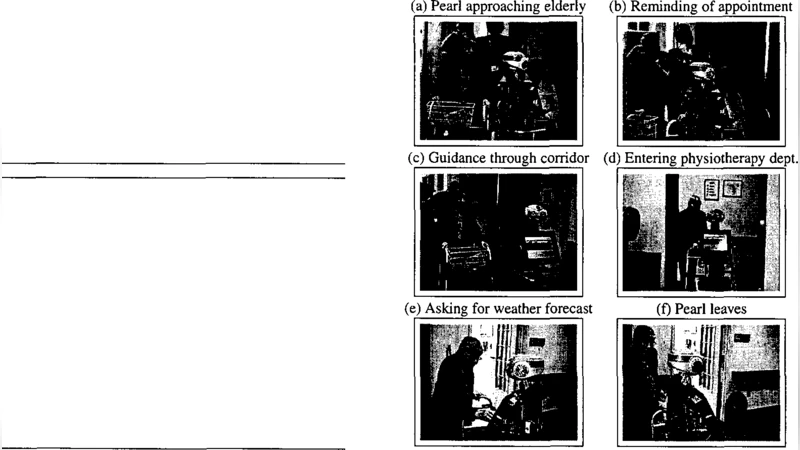

The system was deployed on a service robot operating in a nursing facility near Pittsburgh, Pennsylvania. The robot was equipped with a 2‑D lidar and an RGB‑D camera, allowing it to localize itself, recognize room numbers, medication cabinets, and emergency call buttons. Four experimental scenarios were evaluated: (1) sequential patient‑room visits for item delivery, (2) medication retrieval and delivery, (3) immediate response to simulated patient falls, and (4) simultaneous obstacle avoidance while maintaining the current mission. Across all trials, the robot made high‑level decisions within an average of 1.2 seconds and achieved a mission success rate exceeding 95 %. Compared with a flat POMDP solver that operates on the full belief space, the proposed method reduced computation time by more than an order of magnitude while preserving robustness under high sensor noise.

Key insights include: (i) policy‑contingent abstraction dramatically shrinks the belief space without sacrificing optimality for the active policy, (ii) hierarchical separation of mission planning and motion execution enables reuse of low‑level controllers across different high‑level goals, and (iii) real‑world deployment validates that the approach scales beyond simulation to environments with human occupants, dynamic obstacles, and noisy observations. The authors acknowledge that re‑abstracting when policies switch incurs a modest overhead, and they suggest future work on extending the framework to multi‑robot coordination and to richer semantic abstractions.

Overall, the paper demonstrates that integrating hierarchical POMDPs with a policy‑aware abstraction layer yields a practical, robust control solution for service robots operating in complex, partially observable human environments, marking a significant step toward widespread adoption of POMDP‑based decision making in real robotic applications.