Decentralized Sensor Fusion With Distributed Particle Filters

This paper presents a scalable Bayesian technique for decentralized state estimation from multiple platforms in dynamic environments. As has long been recognized, centralized architectures impose severe scaling limitations for distributed systems due to the enormous communication overheads. We propose a strictly decentralized approach in which only nearby platforms exchange information. They do so through an interactive communication protocol aimed at maximizing information flow. Our approach is evaluated in the context of a distributed surveillance scenario that arises in a robotic system for playing the game of laser tag. Our results, both from simulation and using physical robots, illustrate an unprecedented scaling capability to large teams of vehicles.

💡 Research Summary

The paper tackles the fundamental scalability problem of multi‑robot state estimation in dynamic environments. Traditional centralized Bayesian filters require every robot to transmit raw sensor data or full particle sets to a central server, which quickly becomes infeasible as the number of platforms grows. Bandwidth consumption, processing latency, and the single‑point‑of‑failure nature of a central node all limit the size of deployable fleets. To overcome these constraints, the authors propose a strictly decentralized framework built on distributed particle filters (DPF) combined with an interactive communication protocol that limits exchanges to neighboring robots only.

Core methodology

Each robot maintains its own particle set \(\{x_i^k, w_i^k\}_{k=1}^K\) and updates the weights using its local observations. After the local update, the robot computes an information‑gain metric, approximated as the reduction in entropy of its particle distribution caused by the new measurement. If this gain exceeds a pre‑defined threshold \(\tau\), the robot initiates a communication round with its current neighbors (determined by a dynamic proximity graph). Rather than sending the entire particle cloud, the robot transmits a compact summary: the weighted mean \(\mu_i\), covariance \(\Sigma_i\), and a scalar importance score. Receiving robots incorporate this summary by re‑weighting their own particles with the likelihood of the transmitted information, optionally drawing new particles from the received Gaussian approximation. This re‑weighting step is equivalent to a Bayesian update that fuses remote information without ever reconstructing the full remote particle set.
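The sender and receiver steps described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the authors' implementation: the state is one‑dimensional, the entropy of the normalized weights stands in for the entropy of the particle distribution (which only makes sense if no resampling happens between the two entropy evaluations), the measurement model is Gaussian, and the names `gaussian_reweight`, `summarize`, `K`, and `TAU` are hypothetical. The scalar importance score and the optional drawing of new particles are omitted.

```python
import math
import random


def normalize(weights):
    """Scale weights to sum to 1; fall back to uniform if all are zero."""
    total = sum(weights)
    if total <= 0.0:
        return [1.0 / len(weights)] * len(weights)
    return [w / total for w in weights]


def weight_entropy(weights):
    """Shannon entropy (in nats) of a normalized weight vector."""
    return -sum(w * math.log(w) for w in weights if w > 0.0)


def gaussian_reweight(particles, weights, mean, var):
    """Multiply each weight by an unnormalized Gaussian likelihood N(x; mean, var)."""
    var = max(var, 1e-9)  # guard against a degenerate (zero-variance) summary
    lik = [math.exp(-0.5 * (x - mean) ** 2 / var) for x in particles]
    return normalize([w * l for w, l in zip(weights, lik)])


def summarize(particles, weights):
    """Compact summary: weighted mean and variance (1-D analogue of mu_i, Sigma_i)."""
    mu = sum(w * x for x, w in zip(particles, weights))
    var = sum(w * (x - mu) ** 2 for x, w in zip(particles, weights))
    return mu, var


random.seed(0)
K = 500    # particles per robot (assumed value)
TAU = 0.5  # information-gain threshold in nats (assumed value)

# Sender: local measurement update (z = 4.0, sigma_z = 0.5), then entropy-based gain.
sender = [random.uniform(0.0, 10.0) for _ in range(K)]
w_sender = [1.0 / K] * K
h_before = weight_entropy(w_sender)
w_sender = gaussian_reweight(sender, w_sender, mean=4.0, var=0.25)
gain = h_before - weight_entropy(w_sender)

if gain > TAU:
    # Only two numbers cross the network instead of K particles.
    mu, var = summarize(sender, w_sender)
    # Receiver: fuse the summary by re-weighting its own particles.
    receiver = [random.uniform(0.0, 10.0) for _ in range(K)]
    w_receiver = gaussian_reweight(receiver, [1.0 / K] * K, mu, var)
    mu_r, var_r = summarize(receiver, w_receiver)
    print(f"gain={gain:.2f} nats, sent mu={mu:.2f}, receiver mean={mu_r:.2f}")
```

Reusing the same re‑weighting function for the local measurement and for the remote summary reflects the claim that fusion is itself a Bayesian update: the received Gaussian simply plays the role of a likelihood. In two or more dimensions the variance would become a covariance matrix and the exponent a Mahalanobis distance.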

Dynamic topology and asynchrony

Because robots move, the neighbor set changes over time. The protocol continuously recomputes the proximity graph, allowing each robot to select the most informative neighbor at any moment. Communication is asynchronous: a robot processes incoming summaries immediately, regardless of when they arrive, which makes the system tolerant to variable network delays and occasional packet loss. The threshold \(\tau\) controls the trade‑off between estimation quality and bandwidth usage; higher values reduce traffic but may delay the propagation of critical information.
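A small sketch can make the interplay between the moving topology and the threshold concrete. It assumes a fixed communication radius, planar positions, and a rule in which every robot whose gain exceeds the threshold sends one summary to each current neighbor; `proximity_graph`, `messages_sent`, `COMM_RADIUS`, and the sample gains are hypothetical, and the summary does not specify how the paper actually builds its graph or picks the most informative neighbor.

```python
import math

COMM_RADIUS = 2.0  # assumed communication range, in meters


def proximity_graph(positions, comm_radius):
    """Return {robot_id: set of ids of robots within comm_radius}."""
    ids = list(positions)
    graph = {i: set() for i in ids}
    for idx, a in enumerate(ids):
        for b in ids[idx + 1:]:
            if math.dist(positions[a], positions[b]) <= comm_radius:
                graph[a].add(b)
                graph[b].add(a)
    return graph


def messages_sent(gains, graph, tau):
    """Summaries sent in one round: each robot with gain > tau sends to all neighbors."""
    return sum(len(graph[r]) for r, g in gains.items() if g > tau)


positions = {"r1": (0.0, 0.0), "r2": (1.0, 0.0), "r3": (5.0, 5.0)}
gains = {"r1": 0.9, "r2": 0.2, "r3": 0.6}  # illustrative information gains

graph = proximity_graph(positions, COMM_RADIUS)  # r3 starts out of range
positions["r3"] = (1.0, 1.0)                     # r3 moves closer
graph = proximity_graph(positions, COMM_RADIUS)  # graph is recomputed after motion

low_tau = messages_sent(gains, graph, tau=0.5)   # r1 and r3 both transmit
high_tau = messages_sent(gains, graph, tau=0.8)  # only r1 transmits
print(graph, low_tau, high_tau)
```

Raising the threshold from 0.5 to 0.8 halves the traffic in this toy round, but it also silences `r3`, whose moderately informative update then never reaches its neighbors. That is the quality versus bandwidth trade‑off in miniature.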

Experimental validation

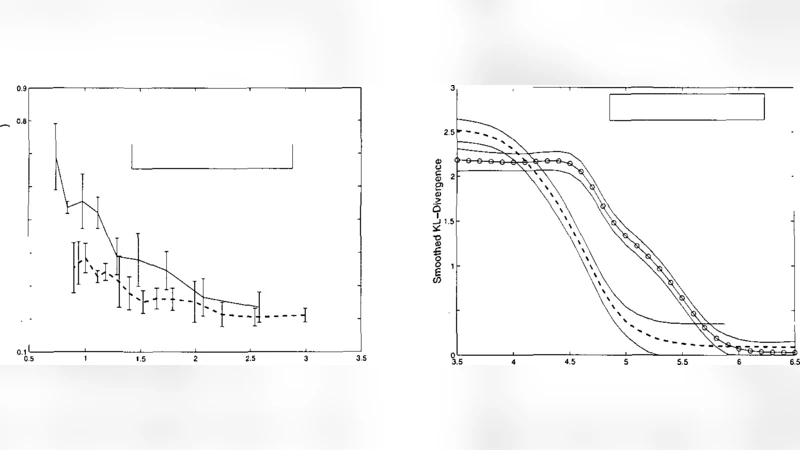

Two experimental tracks are presented. First, a large‑scale Monte‑Carlo simulation varies the fleet size from 5 to 100 robots tracking a moving target. The DPF maintains an average position error below 0.8 m across all sizes, while total transmitted data is reduced by more than 75 % compared to a centralized particle filter. Moreover, network latency stays under 60 ms even for 100 robots, whereas the centralized approach suffers latencies above 200 ms due to congestion.

Second, a physical implementation uses 20 small mobile robots playing a laser‑tag game. Each robot is equipped with a lidar and an infrared tag detector. Over a 5‑minute trial, the decentralized system achieves a mean localization error of 0.45 m (σ = 0.12 m) and a maximum communication delay of 48 ms. Total network usage is 1.2 MB per minute, a 78 % reduction relative to transmitting full particle sets. The system also demonstrates resilience: temporary loss of connectivity for a subset of robots does not cause a catastrophic error increase; once connectivity is restored, the affected robots quickly re‑synchronize with the rest of the team.
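As a rough sanity check on these figures, the reported numbers imply the following baseline and per‑robot rates. This is derived arithmetic rather than a result stated in the paper, and it assumes that the 1.2 MB per minute is the total across all 20 robots, that traffic is spread evenly among them, and that 1 MB = 1000 kB.

```latex
\[
B_{\text{full}} \approx \frac{1.2\ \text{MB/min}}{1 - 0.78} \approx 5.5\ \text{MB/min},
\qquad
\frac{1.2\ \text{MB/min}}{20\ \text{robots}} = 0.06\ \text{MB/min per robot} \approx 1\ \text{kB/s per robot}.
\]
```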

Insights and limitations

The work shows that a carefully designed information‑driven exchange protocol can preserve the benefits of particle‑filter based Bayesian inference while dramatically cutting communication overhead. By restricting exchanges to neighbors and sending only statistical summaries, the approach scales gracefully to large fleets and remains robust to network disruptions. However, summarizing a particle set with a single Gaussian may be insufficient for highly multimodal distributions, and the choice of the information‑gain threshold \(\tau\) is critical; adaptive tuning mechanisms are suggested for future work. Extensions to multiple simultaneous targets, non‑Gaussian or mixture‑based summaries, and reinforcement‑learning‑based threshold adaptation are identified as promising research directions.
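The single‑Gaussian limitation is easy to reproduce. The sketch below builds a hypothetical one‑dimensional bimodal belief (the target is near x = -5 or x = +5, with equally weighted particles) and computes the mean and standard deviation that would be transmitted; the scenario and the name `gaussian_summary` are illustrative assumptions, not taken from the paper.

```python
import random
import statistics


def gaussian_summary(particles):
    """Mean and standard deviation of equally weighted 1-D particles."""
    return statistics.fmean(particles), statistics.pstdev(particles)


random.seed(1)
# Two well-separated hypotheses about the target position.
particles = ([random.gauss(-5.0, 0.5) for _ in range(500)]
             + [random.gauss(5.0, 0.5) for _ in range(500)])

mu, sigma = gaussian_summary(particles)

# Share of particles that actually lie within 1 unit of the summary mean.
near_mean = sum(abs(x - mu) < 1.0 for x in particles) / len(particles)
print(f"mu={mu:.2f}, sigma={sigma:.2f}, fraction near mean={near_mean:.3f}")
```

The transmitted mean lands between the two modes, where essentially no particle lies, and the inflated standard deviation hides the fact that each hypothesis is individually sharp. A receiver that re‑weights with this summary would favor the empty region between the modes, which is why mixture‑style summaries are a natural extension.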

Conclusion

Overall, the paper delivers a compelling solution to decentralized sensor fusion for multi‑robot systems. It combines rigorous Bayesian theory with pragmatic engineering (compact summaries, dynamic neighbor selection, asynchronous updates) and validates the concept both in simulation and on real hardware. The demonstrated scalability and robustness position distributed particle filters as a viable backbone for future large‑scale autonomous fleets, ranging from warehouse swarms to disaster‑response teams and beyond.