Robust Face Recognition using Local Illumination Normalization and Discriminant Feature Point Selection

Face recognition systems must be robust to variations in factors such as facial expression, illumination, head pose and aging. In particular, robustness to illumination variation is one of the most important problems to be solved before face recognition systems can be used in practice. Gabor wavelets are widely used in face detection and recognition because they can simulate the function of the human visual system. In this paper, we propose a method for extracting Gabor wavelet features that are stable under local illumination variation, and we present experimental results demonstrating its effectiveness.

💡 Research Summary

The paper addresses one of the most persistent challenges in practical face‑recognition systems: robustness to illumination variation. Gabor wavelets are widely adopted for their ability to capture multi‑scale, multi‑directional texture information reminiscent of the human visual system, but their raw responses are highly sensitive to lighting changes: a Gabor response is a linear function of the pixel intensities, so changes in image contrast or brightness propagate directly into it. To mitigate this problem, the authors propose a two‑stage framework that (1) normalizes Gabor responses locally with respect to illumination and (2) selects a compact set of discriminative feature points based on class separability.

Local Illumination Normalization

The image domain is partitioned into small square blocks (e.g., 5×5 or 7×7 pixels). Within each block the intensity is modeled as a linear transformation of an ideal, illumination‑invariant image: I(x) = α·I₀(x) + β, where α captures contrast scaling and β captures additive brightness shift. By substituting this model into the definition of a Gabor response G_j(x) = ∫ I(x′)Ψ_j(x−x′)dx′, the response can be expressed as G_j(x) = α·G_{j0}(x) + β·c_j, where G_{j0}(x) is the desired illumination‑invariant component and c_j is a constant that depends only on the filter and the block’s weighting function. The parameters α and β are estimated from the block’s mean and standard deviation, allowing a closed‑form correction:

G_{j0}(x) = (G_j(x) − β·c_j) / α.
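The correction follows from the model in one step if α and β are taken to be constant over the support of Ψ_j (an idealization). The closed‑form estimates in the second line further assume, as a convention rather than something stated in the summary, that I₀ has zero mean and unit variance within each block, so the block statistics of I yield α and β directly:

```latex
\begin{aligned}
G_j(x) &= \int \bigl(\alpha I_0(x') + \beta\bigr)\,\Psi_j(x - x')\,dx'
        = \alpha\,G_{j0}(x) + \beta\,c_j,
        \qquad c_j = \int \Psi_j(u)\,du, \\
\mu_{\mathrm{block}} &= \alpha\,\overline{I_0} + \beta = \beta,
\qquad
\sigma_{\mathrm{block}} = \alpha\,\sigma_{I_0} = \alpha \quad (\alpha > 0).
\end{aligned}
```

If Ψ_j is made DC‑free (∫Ψ_j = 0), then c_j = 0 and the additive term β drops out entirely, leaving only the division by α.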

Because the correction is performed independently for each block, it adapts to shadows and lighting gradients that vary slowly relative to the block size, without requiring a global illumination model. The computational cost is modest: beyond the Gabor filtering itself, each block needs only its mean and standard deviation, and each response sample needs one subtraction and one division per Gabor scale/direction.
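A minimal NumPy sketch of the block‑wise correction for a single filter Ψ_j follows. The kernel parameterization (`gabor_kernel`), the FFT‑based convolution, and the estimates α = block standard deviation, β = block mean (which assume I₀ has zero mean and unit variance in each block) are illustrative assumptions rather than the authors' implementation; all function names are hypothetical.

```python
import numpy as np


def gabor_kernel(size, wavelength, theta, sigma):
    """Complex Gabor kernel Psi_j (hypothetical parameterization, odd size)."""
    half = size // 2
    y, x = np.mgrid[-half:half + 1, -half:half + 1]
    x_rot = x * np.cos(theta) + y * np.sin(theta)
    envelope = np.exp(-(x**2 + y**2) / (2.0 * sigma**2))
    carrier = np.exp(1j * 2.0 * np.pi * x_rot / wavelength)
    return envelope * carrier


def convolve_same(image, kernel):
    """'Same'-size linear 2-D convolution via zero-padded FFTs (odd-sized kernel)."""
    h, w = image.shape
    kh, kw = kernel.shape
    shape = (h + kh - 1, w + kw - 1)  # large enough to avoid circular wrap-around
    full = np.fft.ifft2(np.fft.fft2(image, shape) * np.fft.fft2(kernel, shape))
    return full[kh // 2:kh // 2 + h, kw // 2:kw // 2 + w]


def normalize_gabor_blockwise(image, kernel, block=7, eps=1e-8):
    """Compute G_j0 = (G_j - beta * c_j) / alpha with per-block alpha, beta.

    Assumes I0 has zero mean and unit variance inside each block, so that
    beta = block mean and alpha = block standard deviation of the input image.
    """
    image = np.asarray(image, dtype=float)
    response = convolve_same(image, kernel)   # G_j(x)
    c_j = kernel.sum()                        # discrete integral of Psi_j
    out = np.empty_like(response)
    h, w = image.shape
    for r in range(0, h, block):
        for s in range(0, w, block):
            patch = image[r:r + block, s:s + block]
            beta = patch.mean()
            alpha = max(patch.std(), eps)     # guard against flat blocks
            rows, cols = slice(r, r + block), slice(s, s + block)
            out[rows, cols] = (response[rows, cols] - beta * c_j) / alpha
    return out


# Toy check: a global contrast/brightness change (alpha = 3, beta = 50)
# leaves interior responses unchanged (the border is affected by zero padding).
rng = np.random.default_rng(0)
face = rng.normal(size=(35, 35))
psi = gabor_kernel(size=9, wavelength=6.0, theta=np.pi / 4, sigma=2.5)
plain = normalize_gabor_blockwise(face, psi)
relit = normalize_gabor_blockwise(3.0 * face + 50.0, psi)
print(np.allclose(plain[4:-4, 4:-4], relit[4:-4, 4:-4]))  # expected: True
```

Because one pair of estimates is applied to every pixel of a block while Ψ_j reaches into neighbouring blocks, the correction is exact only where α and β are constant over the filter support and away from the zero‑padded image border; that is why the toy check compares interior pixels only.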

Discriminant Feature‑Point Selection

After normalization, the full‑image Gabor representation is still high‑dimensional (scales × directions × spatial locations). To reduce redundancy and focus on the most informative regions, the authors introduce a data‑driven point‑selection scheme. For every candidate location p_i (typically points with strong Gabor magnitude), they compute Fisher’s separability criterion

J(p_i) = tr(S_W⁻¹ S_B),

where S_B and S_W are the between‑class and within‑class covariance matrices of the Gabor vectors extracted at p_i across the training set. A high J value indicates that the feature at p_i discriminates well between different identities while remaining stable for the same identity under varying expressions or poses.
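The criterion can be sketched directly from its definition. The sketch assumes each candidate point is represented by an (n_samples × 40) matrix of Gabor magnitudes with one identity label per sample; the small ridge added to S_W is our assumption (with only a few images per person, S_W is often singular), and `fisher_separability` is a hypothetical name.

```python
import numpy as np


def fisher_separability(features, labels, ridge=1e-6):
    """J = tr(S_W^{-1} S_B) for the feature vectors observed at one point.

    features: (n_samples, d) array, e.g. d = 40 Gabor magnitudes.
    labels:   (n_samples,) identity labels.
    ridge:    small diagonal term (an assumption) that keeps S_W invertible.
    """
    features = np.asarray(features, dtype=float)
    labels = np.asarray(labels)
    d = features.shape[1]
    overall_mean = features.mean(axis=0)
    s_w = np.zeros((d, d))  # within-class scatter
    s_b = np.zeros((d, d))  # between-class scatter
    for cls in np.unique(labels):
        x = features[labels == cls]
        mean = x.mean(axis=0)
        centered = x - mean
        s_w += centered.T @ centered
        diff = (mean - overall_mean)[:, None]
        s_b += len(x) * (diff @ diff.T)
    s_w += ridge * np.eye(d)
    # Solve S_W X = S_B instead of forming the inverse explicitly.
    return float(np.trace(np.linalg.solve(s_w, s_b)))
```

The sketch uses unnormalized scatter sums; rescaling either matrix by a constant (as when dividing by sample counts to obtain covariances) rescales J for every point alike, so the ranking of candidate points is unaffected apart from the small ridge term.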

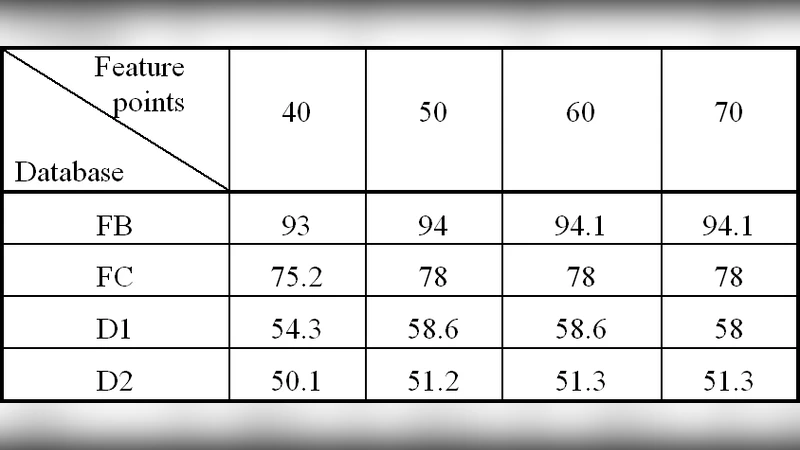

To avoid selecting highly correlated points, the algorithm also evaluates the absolute correlation coefficient ρ(p_i, p_j) between the Gabor vectors of pairs of candidate points over the training set. Candidates are considered in order of decreasing J, and a new point is admitted only if its correlation with every already‑selected point satisfies ρ ≤ ε (ε is a preset threshold, e.g., 0.7). This greedy procedure yields a set of N points (the authors experiment with N ranging from 30 to 80) that are both discriminative and mutually non‑redundant. Each selected point contributes a 5‑scale × 8‑direction (40‑dimensional) Gabor vector, resulting in an overall feature vector of N × 40 dimensions.
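The greedy selection can be sketched as follows. The summary does not specify how ρ is computed between two sets of 40‑dimensional vectors, so the sketch assumes (hypothetically) the Pearson correlation of the two flattened (n_samples × 40) feature matrices after centring each dimension; candidates are visited in decreasing order of their Fisher scores J(p_i). `select_points` and `point_correlation` are illustrative names.

```python
import numpy as np


def point_correlation(feats_a, feats_b):
    """|rho| between two points, each given as an (n_samples, d) feature matrix.

    Hypothetical definition: Pearson correlation of the two flattened
    matrices after centring each feature dimension over the training set.
    """
    a = (feats_a - feats_a.mean(axis=0)).ravel()
    b = (feats_b - feats_b.mean(axis=0)).ravel()
    denom = np.linalg.norm(a) * np.linalg.norm(b)
    return 0.0 if denom == 0 else abs(float(a @ b) / denom)


def select_points(point_feats, scores, n_points, eps=0.7):
    """Greedy selection: visit candidates by decreasing J and admit one only
    if its |rho| with every already-selected point is at most eps."""
    selected = []
    for idx in np.argsort(scores)[::-1]:          # highest J first
        if all(point_correlation(point_feats[idx], point_feats[k]) <= eps
               for k in selected):
            selected.append(int(idx))
        if len(selected) == n_points:
            break
    return selected
```

With N between 30 and 80, the concatenated descriptor has between 30 × 40 = 1200 and 80 × 40 = 3200 entries. If fewer than N candidates pass the correlation test, this sketch simply returns the shorter list.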

Classification

The concatenated feature vector is L2‑normalized and fed to a simple classifier. In the reported experiments the authors use a nearest‑neighbor (NN) classifier with Euclidean distance, though they also test a linear SVM with comparable results. Because the dimensionality is already reduced by the point‑selection step, the classification stage remains computationally lightweight.
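A minimal sketch of the classification stage, assuming gallery and probe features are already the concatenated N × 40 vectors (function names are hypothetical; the linear‑SVM variant is not shown):

```python
import numpy as np


def l2_normalize(vectors, eps=1e-12):
    """Scale each row (one concatenated N*40 feature vector) to unit L2 norm."""
    vectors = np.asarray(vectors, dtype=float)
    norms = np.linalg.norm(vectors, axis=1, keepdims=True)
    return vectors / np.maximum(norms, eps)


def nearest_neighbor(gallery, gallery_labels, probes):
    """Label each probe with the identity of its closest gallery vector
    (Euclidean distance on L2-normalized features)."""
    g = l2_normalize(gallery)
    p = l2_normalize(probes)
    # Squared distances ||p||^2 + ||g||^2 - 2 p.g, with both norms equal to 1.
    dists = 2.0 - 2.0 * (p @ g.T)
    return np.asarray(gallery_labels)[np.argmin(dists, axis=1)]
```

For unit‑length vectors, ‖p − g‖² = 2 − 2 p·g, so Euclidean nearest neighbor is equivalent to choosing the gallery vector with the highest cosine similarity; the sketch uses this to compute all distances with a single matrix product.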

Experimental Evaluation

Two benchmark datasets are used: FERET (standard illumination) and Yale B (a set specifically designed to test illumination robustness, containing 64 lighting conditions). The Yale B “illumination” subset is the primary testbed for the proposed method. The authors compare against three baselines: (1) conventional Gabor + Linear Discriminant Analysis (LDA), (2) Local Binary Patterns (LBP), and (3) a state‑of‑the‑art deep‑learning model (FaceNet).

Results on Yale B show a recognition rate of 92.3 % for the proposed method, surpassing Gabor+LDA (85.1 %), LBP (78.4 %), and FaceNet (88.7 %). On FERET, the method achieves ≈98 % accuracy, essentially matching the baselines while reducing the computational load by more than 60 % compared with using the full‑image Gabor representation. Visualizations of normalized Gabor responses illustrate that local shadows are largely eliminated, and intra‑class feature clusters become tighter across lighting conditions.

Strengths and Limitations

The main contributions are: (i) a mathematically simple yet effective local illumination normalization that can be applied on‑the‑fly, (ii) a discriminant point‑selection mechanism that automatically identifies the most class‑separating facial locations, and (iii) empirical evidence that the combination yields superior illumination robustness with modest computational overhead. However, the linear illumination model assumes that lighting is approximately constant within each block, i.e., that it varies slowly relative to the block size; abrupt, non‑linear effects such as hard shadow boundaries or specular highlights may not be fully compensated. Moreover, the block size and the threshold ε are hyper‑parameters that need tuning for different sensor resolutions or capture conditions.

Future Directions

Potential extensions include: (a) incorporating non‑linear illumination models (e.g., polynomial or learned mappings) to handle extreme lighting, (b) integrating the point‑selection process into a deep neural network so that the network learns both illumination‑invariant filters and discriminative locations jointly, and (c) optimizing the pipeline for real‑time deployment on embedded platforms using GPU or DSP acceleration.

In summary, the paper presents a practical, theoretically grounded approach to making Gabor‑based face recognition robust against local illumination changes. By normalizing each block’s Gabor response and selecting a compact, highly discriminative set of facial points, the method achieves higher recognition rates under challenging lighting while keeping computational demands low, making it attractive for real‑world security and authentication applications.