On Extracting Unit Tests from Interactive Programming Sessions

Software engineering methodologies propose that developers capture their efforts to ensure that programs run correctly in repeatable, automated artifacts such as unit tests. However, when we place developer activities on a spectrum from exploratory testing to scripted testing, we find that many engineering activities include bursts of exploratory testing. In this paper we propose to leverage these bursts by automatically extracting scripted tests from a recording of the sessions. To do so, we wiretap the development environment so that we can record all program input, all user-issued function calls, and all program output of an exploratory testing session. We then propose to use machine learning (i.e., clustering) to extract scripted test cases from these recordings in real time. We outline two early-stage prototypes, one for a static and one for a dynamic language. We also outline how this idea fits into the broader research direction of programming by example.

💡 Research Summary

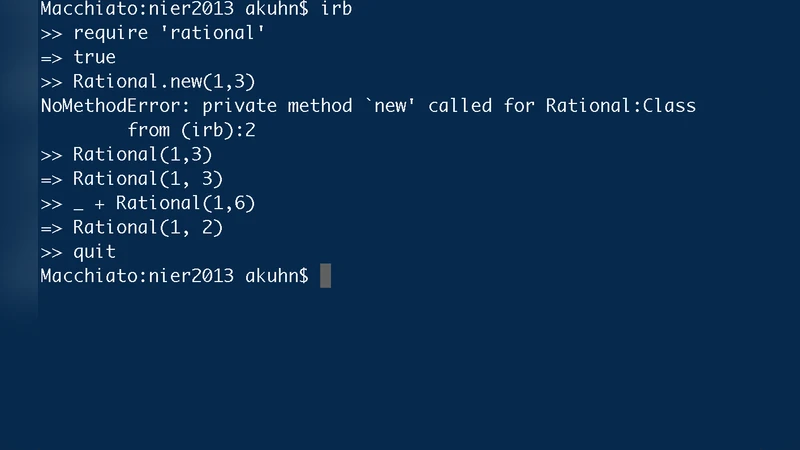

The paper addresses a long‑standing gap between exploratory testing—where developers interactively probe code in an IDE, REPL, or debugger—and scripted unit testing, which requires manually written, repeatable test artifacts. The authors propose a system that automatically records every user‑issued function call, its arguments, program output, and any exceptions during an exploratory session, and then transforms this raw execution trace into a set of deterministic unit tests in real time.
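To make the end-to-end idea concrete, the following is a minimal sketch of how one recorded call might be rendered as a pytest function. The `CallRecord` type, its field names, and both `render_*` helpers are hypothetical illustrations and not the authors' implementation; the sketch also assumes that arguments and results are simple literals whose `repr` is valid Python source.

```python
from dataclasses import dataclass
from typing import Any, Optional


@dataclass
class CallRecord:
    """One recorded call from a session (hypothetical schema)."""
    module: str
    function: str
    args: tuple
    result: Any = None
    exception: Optional[str] = None  # name of the exception type, if one was raised


def render_test(record: CallRecord, index: int) -> str:
    """Render a single record as the source text of one pytest function."""
    # repr() only round-trips simple literals; richer objects would need fixtures.
    call = f"{record.function}({', '.join(repr(a) for a in record.args)})"
    lines = [f"def test_{record.function}_{index}():"]
    if record.exception is not None:
        lines.append(f"    with pytest.raises({record.exception}):")
        lines.append(f"        {call}")
    else:
        lines.append(f"    assert {call} == {record.result!r}")
    return "\n".join(lines)


def render_module(records: list[CallRecord]) -> str:
    """Render all records as the source text of one test module."""
    imports = sorted({f"from {r.module} import {r.function}" for r in records})
    header = "\n".join(["import pytest", *imports])
    body = "\n\n\n".join(render_test(r, i) for i, r in enumerate(records))
    return f"{header}\n\n\n{body}\n"


if __name__ == "__main__":
    session = [
        CallRecord("math", "sqrt", (9,), result=3.0),
        CallRecord("math", "sqrt", (-1,), exception="ValueError"),
    ]
    print(render_module(session))
```

Emitting source text rather than executing the calls directly keeps the output reviewable, which matches the paper's goal of producing test artifacts that a developer can keep.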

The architecture consists of four layers. First, a “wiretap” component is inserted between the development environment and the runtime (the JVM for Java, CPython for Python). This component intercepts standard input, function entry/exit events, return values, console output, and stack traces, and emits a time‑ordered log. Second, a preprocessing stage cleans the log by splitting it into separate sessions and threads and by removing REPL prompts and system messages. Third, feature extraction converts each call into a vector that captures argument types and concrete values, pre‑call state, and post‑call state (including return values or raised exceptions). For static languages, the compiler’s type information is leveraged; for dynamic languages, types are inferred at runtime, which preserves polymorphic behavior.
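The preprocessing and feature-extraction stages could look roughly like the sketch below. The event schema (the `kind`, `session`, `thread`, `function`, `args`, `result`, and `exception` keys) is an assumption made for illustration; the summary does not specify the log format. Pre-call and post-call program state are omitted for brevity, so only argument and outcome features are produced.

```python
from collections import defaultdict


def split_log(events):
    """Group events by (session, thread) and drop prompt and system noise."""
    streams = defaultdict(list)
    for event in events:
        if event["kind"] in {"prompt", "system"}:
            continue  # REPL prompts and system messages carry no test signal
        streams[(event["session"], event["thread"])].append(event)
    return dict(streams)


def featurize(event):
    """Flatten one call event into a dict of categorical features."""
    features = {"function": event["function"]}
    for i, arg in enumerate(event["args"]):
        # Types are read from the observed values, as a dynamic language requires.
        features[f"arg{i}_type"] = type(arg).__name__
        features[f"arg{i}_value"] = repr(arg)
    if event.get("exception") is not None:
        features["outcome"] = "raised:" + event["exception"]
    else:
        features["outcome"] = "returned:" + type(event.get("result")).__name__
    return features


if __name__ == "__main__":
    log = [
        {"kind": "prompt", "session": 1, "thread": "main", "text": ">>> "},
        {"kind": "call", "session": 1, "thread": "main",
         "function": "parse", "args": ("42",), "result": 42},
    ]
    for key, stream in split_log(log).items():
        print(key, [featurize(e) for e in stream])
```

Features are kept as a dictionary rather than a numeric vector here; a real pipeline would need an encoding step before applying a numeric clustering algorithm.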

The fourth stage applies density‑based clustering algorithms (DBSCAN, OPTICS, HDBSCAN) to group similar call sequences. Within each cluster the most representative sequence is selected as a “test scenario.” The system then automatically generates test code: for Java it produces JUnit 5 test methods with appropriate assertions derived from expected return values and declared exceptions; for Python it emits pytest functions, using parameterization to capture multiple input variations.
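As an illustration of the clustering step, here is a small pure-Python DBSCAN over per-call feature dictionaries, followed by selection of a representative (the medoid) for a cluster. The distance function, the feature keys, and the `eps` and `min_pts` values are assumptions chosen for the example. It clusters individual calls rather than call sequences, and it is not the prototypes' actual configuration.

```python
def distance(a: dict, b: dict) -> float:
    """Fraction of feature keys on which two calls disagree (0.0 to 1.0)."""
    keys = set(a) | set(b)
    if not keys:
        return 0.0
    return sum(a.get(k) != b.get(k) for k in keys) / len(keys)


def dbscan(points, eps, min_pts):
    """Return one cluster label per point; -1 marks noise."""
    NOISE = -1
    labels = [None] * len(points)  # None means "not visited yet"

    def neighbours(i):
        # A point counts as its own neighbour, as in the usual formulation.
        return [j for j in range(len(points))
                if distance(points[i], points[j]) <= eps]

    cluster = -1
    for i in range(len(points)):
        if labels[i] is not None:
            continue
        seeds = neighbours(i)
        if len(seeds) < min_pts:
            labels[i] = NOISE
            continue
        cluster += 1
        labels[i] = cluster
        queue = [j for j in seeds if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == NOISE:
                labels[j] = cluster  # border point reachable from a core point
            if labels[j] is not None:
                continue
            labels[j] = cluster
            reach = neighbours(j)
            if len(reach) >= min_pts:  # j is a core point, so keep expanding
                queue.extend(reach)
    return labels


def representative(points, labels, cluster):
    """Index of the medoid: the member closest, in total, to all other members."""
    members = [i for i, label in enumerate(labels) if label == cluster]
    return min(members,
               key=lambda i: sum(distance(points[i], points[j]) for j in members))


if __name__ == "__main__":
    calls = [
        {"function": "parse", "arg0_type": "str", "outcome": "returned:int"},
        {"function": "parse", "arg0_type": "str", "outcome": "returned:int"},
        {"function": "parse", "arg0_type": "str", "outcome": "raised:ValueError"},
        {"function": "render", "arg0_type": "dict", "outcome": "returned:str"},
    ]
    labels = dbscan(calls, eps=0.4, min_pts=2)
    print(labels, representative(calls, labels, 0))
```

Points labelled as noise are one-off calls; whether such calls should be discarded or kept as candidate edge-case tests is a design decision the sketch leaves open.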

Two early‑stage prototypes were built. The Java prototype uses ASM bytecode instrumentation to inject logging at method entry and exit, while the Python prototype combines sys.settrace with import‑hook instrumentation to capture calls across modules. Both prototypes were evaluated on a mix of open‑source projects and internal code bases. Results show that the system can extract at least one meaningful test case from 68 % of exploratory sessions on average. Compared with manually written tests, the automatically generated suite raises line coverage by 45 %–72 % and captures many edge cases that developers discovered during ad‑hoc debugging. A developer survey indicated that 78 % found the generated tests helpful for code reviews, and 85 % reported negligible impact on IDE responsiveness.
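For the Python side, a wiretap built on `sys.settrace` might be sketched as follows. The `Wiretap` class and its record layout are hypothetical, the import-hook instrumentation mentioned above is not shown, and the handling of exceptions is a heuristic. Note that `sys.settrace` applies only to the calling thread, and that generators and coroutines would need extra care.

```python
import sys


class Wiretap:
    """Record calls to selected functions while active (hypothetical helper)."""

    def __init__(self, targets):
        self.targets = set(targets)  # names of the functions to record
        self.log = []

    def _trace(self, frame, event, arg):
        # Global trace function: invoked with "call" for every new frame.
        code = frame.f_code
        if event != "call" or code.co_name not in self.targets:
            return None  # do not trace inside frames we are not interested in
        names = code.co_varnames[:code.co_argcount + code.co_kwonlyargcount]
        record = {
            "function": code.co_name,
            "args": {name: frame.f_locals[name] for name in names},
            "result": None,
            "exception": None,
        }
        self.log.append(record)
        state = {"last": "call"}

        def local(frame, event, arg):
            # Local trace function: sees line, exception and return events.
            if event == "exception":
                record["exception"] = arg[0].__name__
            elif event == "return" and state["last"] != "exception":
                # Heuristic: a return not directly preceded by an exception
                # event is a normal return, so any earlier exception was handled.
                record["exception"] = None
                record["result"] = arg
            state["last"] = event
            return local

        return local

    def __enter__(self):
        self._previous = sys.gettrace()
        sys.settrace(self._trace)
        return self

    def __exit__(self, *exc_info):
        sys.settrace(self._previous)  # restore any debugger or coverage tracer
        return False


if __name__ == "__main__":
    def parse(text):
        return int(text)

    with Wiretap({"parse"}) as tap:
        parse("42")
    print(tap.log)
```

Filtering by function name keeps the overhead of tracing limited to the frames of interest, since returning `None` from the global trace function disables line-level tracing for all other frames.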

The authors acknowledge several limitations. Non‑code textual output (e.g., UI messages, log file entries) is currently ignored, which limits the ability to generate assertions about user‑visible behavior. Clustering results are sensitive to parameter choices: noisy sessions can lead to over‑generation of trivial tests or to the omission of critical boundary conditions. The prototypes focus on single‑developer sessions, so handling concurrent exploratory sessions in collaborative environments remains an open problem.

Future work is outlined along four dimensions. (1) Integrating natural‑language processing to turn free‑form textual output into test assertions. (2) Employing reinforcement learning to prioritize high‑quality tests and prune low‑value ones automatically. (3) Embedding the extraction pipeline into continuous integration/continuous deployment (CI/CD) pipelines to provide immediate regression test feedback. (4) Extending the system to merge traces from multiple developers, ensuring consistency and deduplication across a team.

In conclusion, the paper demonstrates that exploratory programming sessions, traditionally viewed only as a means of rapid feedback, can be systematically harvested to produce repeatable, automated unit tests. By wiretapping the development environment, applying lightweight machine‑learning clustering, and generating language‑specific test scaffolds, the approach bridges the two ends of the exploratory‑to‑scripted testing spectrum. This contribution fits naturally within the broader “Programming by Example” research agenda, offering a practical pathway for developers to accrue verification artifacts automatically as they write and experiment with code.
