PageRank and rank-reversal dependence on the damping factor

PageRank (PR) is an algorithm originally developed by Google to evaluate the importance of web pages. Given how central Google’s PR algorithm is to gathering relevant information and to the success of modern businesses, the question of rank-stability and of the choice of the damping factor (a parameter in the algorithm) is clearly important. We investigate PR as a function of the damping factor d on a network obtained from a domain of the World Wide Web, finding that rank-reversal happens frequently over a broad range of PR (and of d). We use three different correlation measures, Pearson, Spearman, and Kendall, to study rank-reversal as d changes, and show that the correlation of PR vectors drops rapidly as d changes from its frequently cited value, $d_0=0.85$. Rank-reversal is also observed in the Spearman and Kendall rank correlations, which evaluate relative ranks rather than absolute PR. Rank-reversal happens not only in directed networks containing rank-sinks but also in a single strongly connected component, which by definition does not contain any sinks. We relate rank-reversals to rank-pockets and bottlenecks in the directed network structure. For the network studied, the relative rank is more stable by our measures around $d=0.65$ than at $d=d_0$.

💡 Research Summary

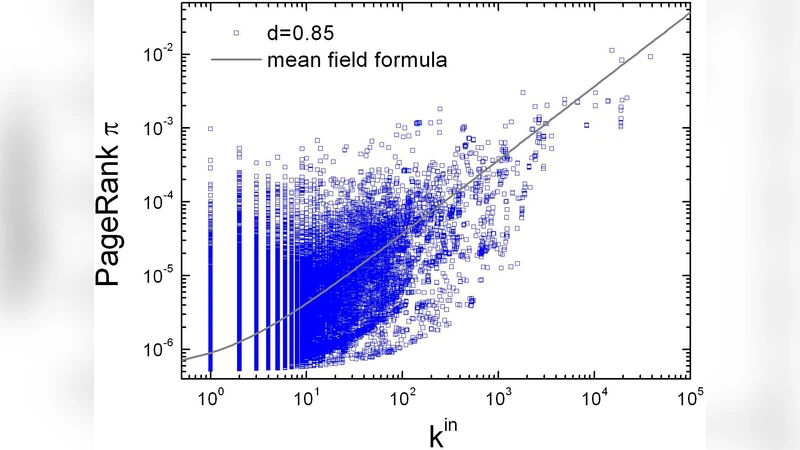

The paper investigates how the damping factor d, a key parameter in the PageRank (PR) algorithm, influences the stability of node rankings on a real‑world web network. While d = 0.85 is the most frequently cited value, following Google’s original work, the authors question whether this choice yields stable rankings across different network structures. They extract a directed graph from a specific web domain containing roughly 100k pages and 500k hyperlinks, then compute PageRank vectors for d ranging from 0.10 to 0.99 in increments of 0.01 using the standard power‑iteration method.
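The power-iteration step and the sweep over d can be sketched in plain Python. This is a minimal sketch under our own assumptions, not the authors’ code: the graph is a hypothetical adjacency list `out_links` (node `u` links to every node in `out_links[u]`), teleportation is uniform, and probability mass on dangling nodes (pages with no out-links) is spread uniformly, which is one common convention among several.

```python
def pagerank(out_links, d=0.85, tol=1e-10, max_iter=10_000):
    """PageRank by power iteration on an adjacency list (hypothetical helper).

    out_links[u] lists the nodes that u links to. Teleportation is uniform,
    and mass on dangling nodes (no out-links) is redistributed uniformly.
    """
    n = len(out_links)
    pr = [1.0 / n] * n
    for _ in range(max_iter):
        dangling = sum(p for p, targets in zip(pr, out_links) if not targets)
        # Every node gets the teleport share plus its share of dangling mass.
        new = [(1.0 - d + d * dangling) / n] * n
        # Link-following: each node splits d * PR evenly over its out-links.
        for u, targets in enumerate(out_links):
            for v in targets:
                new[v] += d * pr[u] / len(targets)
        # Stop once the L1 change between iterations falls below tol.
        if sum(abs(a - b) for a, b in zip(new, pr)) < tol:
            return new
        pr = new
    return pr


# Sweep d from 0.10 to 0.99 in steps of 0.01 (90 values).
ds = [round(0.10 + 0.01 * k, 2) for k in range(90)]
toy_graph = [[1, 2], [2], [0], [0, 2]]  # hypothetical 4-node graph
vectors = {d: pagerank(toy_graph, d) for d in ds}
```

Convergence slows as d approaches 1 (the error shrinks roughly by a factor d per iteration), so d = 0.99 needs far more iterations than d = 0.85. For a graph of around 100k nodes a sparse-matrix formulation would be much faster; plain lists only keep the logic visible.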

To quantify rank changes, three correlation measures are employed: Pearson (sensitive to absolute PR values), Spearman (based on rank order), and Kendall τ (pairwise concordance of rankings). The authors compare PR vectors obtained at different d values pairwise, observing how each correlation declines as d moves away from the commonly cited 0.85. All three metrics drop sharply, indicating that both the magnitude of PR scores and the relative ordering of nodes are highly sensitive to the damping factor.
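The three measures can be written out directly to make their differences explicit: Pearson works on the PR values themselves, while Spearman and Kendall only see the orderings. In practice `scipy.stats.pearsonr`, `spearmanr` and `kendalltau` provide these; the dependency-free versions below are a sketch for illustration, with tied values given averaged ranks (Spearman) and the tau-b tie correction (Kendall).

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation of two equal-length score vectors."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)  # undefined (ZeroDivisionError) if a vector is constant


def average_ranks(x):
    """Ranks 1..n; tied values share the mean of the positions they occupy."""
    order = sorted(range(len(x)), key=lambda i: x[i])
    ranks = [0.0] * len(x)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and x[order[j + 1]] == x[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman rho: the Pearson correlation of the two rank vectors."""
    return pearson(average_ranks(x), average_ranks(y))


def kendall_tau_b(x, y):
    """Kendall tau-b via an O(n^2) scan over all pairs."""
    concordant = discordant = ties_x = ties_y = 0
    n = len(x)
    for i in range(n):
        for j in range(i + 1, n):
            dx, dy = x[i] - x[j], y[i] - y[j]
            if dx == 0 and dy == 0:
                continue  # tied in both: excluded from both denominator terms
            if dx == 0:
                ties_x += 1
            elif dy == 0:
                ties_y += 1
            elif dx * dy > 0:
                concordant += 1
            else:
                discordant += 1
    denom = sqrt((concordant + discordant + ties_x) * (concordant + discordant + ties_y))
    return (concordant - discordant) / denom
```

Applied to two PR vectors `pr_a` and `pr_b` (for example, the vectors at d = 0.85 and d = 0.65), `pearson(pr_a, pr_b)` measures agreement of absolute scores, while `spearman` and `kendall_tau_b` drop below 1 only when the ordering changes.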

A striking finding is the prevalence of “rank‑reversal”: even a small change in d can cause a substantial fraction of top‑ranked pages to exchange positions. Approximately 30% of the top 10% of pages change order when d varies within a modest range. Importantly, this phenomenon is not confined to networks containing rank‑sinks (nodes, or groups of nodes, with no links leading back out to the rest of the network). The authors demonstrate that rank‑reversal also occurs inside a single strongly connected component (SCC), which by definition lacks sinks.
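Two checks implied by this paragraph can be sketched as follows: listing the pairs among the top-k nodes whose relative order flips between two PR vectors, and testing whether a graph forms a single SCC (and therefore has no sinks). The helper names and the adjacency-list format are our own assumptions for illustration, not the authors’ procedure.

```python
from collections import deque


def is_strongly_connected(out_links):
    """True if every node can reach every other node, i.e. one SCC."""
    n = len(out_links)
    if n == 0:
        return True
    in_links = [[] for _ in range(n)]
    for u, targets in enumerate(out_links):
        for v in targets:
            in_links[v].append(u)

    def reaches_all(adj):
        # Breadth-first search from node 0 along the given edge direction.
        seen = {0}
        queue = deque([0])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in seen:
                    seen.add(v)
                    queue.append(v)
        return len(seen) == n

    # Node 0 reaches all nodes and all nodes reach node 0, hence a single SCC.
    return reaches_all(out_links) and reaches_all(in_links)


def reversed_pairs(pr_a, pr_b, top_k=None):
    """Pairs (i, j) with i ranked above j by pr_a but below j by pr_b.

    Only the top_k nodes under pr_a are compared when top_k is given.
    """
    order = sorted(range(len(pr_a)), key=lambda i: -pr_a[i])
    nodes = order if top_k is None else order[:top_k]
    flips = []
    for pos, i in enumerate(nodes):
        for j in nodes[pos + 1:]:
            if pr_a[i] > pr_a[j] and pr_b[i] < pr_b[j]:
                flips.append((i, j))
    return flips
```

The share of top-k pairs that flip, `len(reversed_pairs(a, b, k)) / (k * (k - 1) / 2)`, is one simple way to summarize rank-reversal among the highest-ranked nodes; without ties it equals `(1 - tau) / 2`, where tau is Kendall’s tau computed over those nodes.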

The paper introduces the concepts of “rank‑pockets” and “bottlenecks” to explain these observations. A rank‑pocket is a subgraph that, due to its internal link density and limited connections to the rest of the network, traps a disproportionate amount of the random‑walk probability mass when d is high. Bottlenecks (the few edges that bridge large sub‑communities) amplify this effect: as d increases, random jumps become rarer, so probability moves between sub‑communities mainly through these few edges, which can cause abrupt shifts in the distribution of PageRank scores and consequently in the ranking order.
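The pocket-and-bottleneck mechanism can be illustrated on a toy graph of our own construction (not taken from the paper). The block includes a plain power-iteration PageRank helper (hypothetical) so it runs on its own, builds a five-node graph that is a single SCC, and prints how much PR mass sits in a densely linked pocket as d varies.

```python
def pagerank(out_links, d, tol=1e-12, max_iter=100_000):
    """Power-iteration PageRank with uniform teleportation (hypothetical helper)."""
    n = len(out_links)
    pr = [1.0 / n] * n
    for _ in range(max_iter):
        # Mass on dangling nodes is spread uniformly (none exist in this graph).
        dangling = sum(p for p, t in zip(pr, out_links) if not t)
        new = [(1.0 - d + d * dangling) / n] * n
        for u, targets in enumerate(out_links):
            for v in targets:
                new[v] += d * pr[u] / len(targets)
        if sum(abs(a - b) for a, b in zip(new, pr)) < tol:
            return new
        pr = new
    return pr


# Hypothetical 5-node graph, strongly connected via the cycle 0->1->2->3->4->0.
# Nodes {2, 3, 4} form a densely linked "pocket"; 1->2 is the only link in
# and 4->0 the only link out, so those two edges act as bottlenecks.
graph = [[1], [0, 2], [3, 4], [2, 4], [2, 3, 0]]
pocket = {2, 3, 4}

for d in (0.1, 0.5, 0.85, 0.95):
    pr = pagerank(graph, d)
    mass = sum(pr[i] for i in pocket)
    print(f"d={d:.2f}  pocket mass={mass:.3f}  PR[1]={pr[1]:.4f}  PR[3]={pr[3]:.4f}")
```

On this graph the pocket’s share of PR rises from about 0.60 at d = 0.1 to about 0.63 at d = 0.5, approaching roughly 0.69 as d tends to 1. Nodes 1 and 3 also swap order: node 1 ranks above node 3 at d = 0.1 but below it at d = 0.5, 0.85 and 0.95, a small instance of rank-reversal in a graph with no sinks.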

When the three correlation measures are plotted as functions of d, the maximum Spearman and Kendall values occur around d ≈ 0.65, not at the traditional 0.85. At this point, the relative ordering of nodes is most stable, even though absolute PR values still vary. The authors argue that for the studied web domain, a damping factor near 0.65 provides a better trade‑off between ranking stability and the algorithm’s original intent of mixing random jumps with link‑following.
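One plausible way to turn “rank stability as a function of d” into a number, assumed here rather than taken from the paper, is to correlate the PR vector at each d with the one at a slightly larger d + delta and look for where that correlation is highest. The helpers and the toy graph below are hypothetical and included so the block runs on its own.

```python
from math import sqrt


def pagerank(out_links, d, tol=1e-10, max_iter=100_000):
    """Power-iteration PageRank, uniform teleportation (hypothetical helper)."""
    n = len(out_links)
    pr = [1.0 / n] * n
    for _ in range(max_iter):
        dangling = sum(p for p, t in zip(pr, out_links) if not t)
        new = [(1.0 - d + d * dangling) / n] * n
        for u, targets in enumerate(out_links):
            for v in targets:
                new[v] += d * pr[u] / len(targets)
        if sum(abs(a - b) for a, b in zip(new, pr)) < tol:
            return new
        pr = new
    return pr


def kendall_tau_b(x, y):
    """Kendall tau-b over all pairs (O(n^2), fine for small examples)."""
    nc = nd = tx = ty = 0
    for i in range(len(x)):
        for j in range(i + 1, len(x)):
            dx, dy = x[i] - x[j], y[i] - y[j]
            if dx == 0 and dy == 0:
                continue  # tied in both vectors: ignored
            if dx == 0:
                tx += 1
            elif dy == 0:
                ty += 1
            elif dx * dy > 0:
                nc += 1
            else:
                nd += 1
    return (nc - nd) / sqrt((nc + nd + tx) * (nc + nd + ty))


def stability_curve(out_links, ds, delta=0.01):
    """Kendall tau-b between the PR vectors at d and at d + delta, per d."""
    return {
        d: kendall_tau_b(pagerank(out_links, d), pagerank(out_links, d + delta))
        for d in ds
    }


graph = [[1], [0, 2], [3, 4], [2, 4], [2, 3, 0]]  # hypothetical toy graph
ds = [round(0.01 * k, 2) for k in range(10, 99)]  # 0.10, 0.11, ..., 0.98
curve = stability_curve(graph, ds)
most_stable = max(curve, key=curve.get)  # first d attaining the highest tau
```

On a graph this small many values of d typically tie at tau = 1, so `max` just returns the first of them; a curve like this only becomes informative on a large network such as the one studied. Swapping `kendall_tau_b` for a Spearman or Pearson function gives the other two curves.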

From a practical standpoint, the results have several implications. Search‑engine designers might consider lowering d from 0.85 to around 0.65 to obtain more consistent rankings, especially in applications where ranking volatility is costly (e.g., advertising placement, recommendation systems). Webmasters seeking to improve their SEO should avoid creating tightly knit clusters with few outward links, as such structures act as rank‑pockets that can cause their pages’ positions to fluctuate dramatically with small changes in d. In broader network‑analysis contexts—social networks, citation graphs, or any system where PageRank‑like centrality is used—identifying and mitigating bottlenecks can reduce sensitivity to the damping factor.
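A simple screening heuristic for the “tightly knit cluster with few outward links” pattern is the fraction of a cluster’s out-links that point outside it. This is our own illustrative measure, not one defined in the paper, and it assumes the candidate cluster is already known; a low value flags a potential rank-pocket but does not by itself prove that its ranks are sensitive to d.

```python
def exit_fraction(out_links, cluster):
    """Fraction of a cluster's out-links that leave the cluster (hypothetical helper).

    A low value means link-following walkers tend to stay inside the cluster.
    """
    members = set(cluster)
    total = sum(len(out_links[u]) for u in members)
    leaving = sum(1 for u in members for v in out_links[u] if v not in members)
    return leaving / total if total else 0.0


graph = [[1], [0, 2], [3, 4], [2, 4], [2, 3, 0]]  # hypothetical toy graph
print(exit_fraction(graph, [2, 3, 4]))  # 1 of the pocket's 7 out-links leaves it
print(exit_fraction(graph, [0, 1]))     # 1 of this pair's 3 out-links leaves it
```

Adding outward links from a low-scoring cluster raises its exit fraction, which is one concrete reading of the advice to avoid structures that act as rank-pockets.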

The paper concludes that rank‑reversal is a structural phenomenon rooted in directed‑graph topology rather than merely the presence of sinks. It recommends that practitioners treat the damping factor as a tunable hyper‑parameter rather than a fixed constant, and suggests further work on dynamic networks and on systematic methods for selecting an optimal d based on specific stability criteria.
