Autonomous Navigation by Robust Scan Matching Technique

For effective autonomous navigation,estimation of the pose of the robot is essential at every sampling time. For computing an accurate estimation,odometric error needs to be reduced with the help of data from external sensor. In this work, a technique has been developed for accurate pose estimation of mobile robot by using Laser Range data. The technique is robust to noisy data, which may contain considerable amount of outliers. A grey image is formed from laser range data and the key points from this image are extracted by Harris corner detector. The matching of the key points from consecutive data sets have been done while outliers have been rejected by RANSAC method. Robot state is measured by the correspondence between the two sets of keypoints. Finally, optimal robot state is estimated by Extended Kalman Filter. The technique has been applied to an operational robot in the laboratory environment to show the robustness of the technique in presence of noisy sensor data. The performance of this new technique has been compared with that of conventional ICP method. Through this method, effective and accurate navigation has been achieved even in presence of substantial noise in the sensor data at the cost of a small amount of additional computational complexity.

💡 Research Summary

The paper addresses the fundamental problem of accurate pose estimation for autonomous mobile robots, which is essential for reliable navigation. Traditional scan‑matching approaches such as the Iterative Closest Point (ICP) algorithm are highly sensitive to the initial pose guess and to outliers that frequently appear in laser range‑finder data due to specular surfaces, partial occlusions, or sensor glitches. To overcome these limitations, the authors propose a three‑stage pipeline that combines image‑based feature extraction, robust model fitting, and probabilistic state estimation.

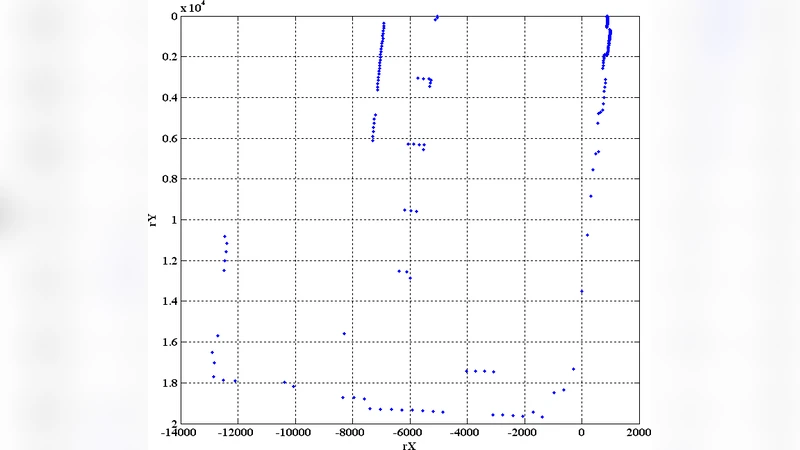

First, raw 2‑D laser scans are transformed into a gray‑scale image. Each polar measurement (range, angle) is converted to Cartesian coordinates, mapped onto a pixel grid, and the measured distance is encoded as pixel intensity. This conversion enables the use of mature computer‑vision techniques on range data. From the generated image, Harris corner detection is applied to extract a set of salient keypoints. Harris corners are chosen because they are invariant to rotation and illumination changes and they capture geometric discontinuities that correspond to structural features in the environment (e.g., wall corners, furniture edges).

Second, the keypoints from two consecutive scans are matched. Since laser data often contain a substantial proportion of outliers, the authors employ Random Sample Consensus (RANSAC) to estimate the rigid transformation (translation and rotation) while discarding mismatches. RANSAC repeatedly selects a minimal subset of correspondences, computes a candidate transformation, and counts the number of inliers that satisfy a predefined error threshold. By iterating enough times, the algorithm converges to a transformation that is supported by the majority of the data, even when up to 30‑50 % of the measurements are corrupted. The resulting relative pose (Δx, Δy, Δθ) constitutes an observation that is far more reliable than raw point‑to‑point correspondences.

Third, the observation is fused with the robot’s odometry using an Extended Kalman Filter (EKF). The EKF treats the robot’s motion model (typically a differential‑drive kinematic model) as the prediction step and incorporates the RANSAC‑derived pose as the measurement update. Process noise (Q) models wheel slip and model inaccuracies, while measurement noise (R) reflects the uncertainty of the laser‑derived transformation. By recursively updating the state estimate and its covariance, the EKF smooths out residual noise, compensates for drift in the odometry, and yields a statistically optimal pose estimate in the presence of both Gaussian and non‑Gaussian disturbances.

The authors validate the approach on a laboratory‑scale mobile platform equipped with a 2‑D laser scanner and wheel encoders. Experiments are conducted under two regimes: (i) normal sensor noise and (ii) deliberately injected outliers to simulate harsh conditions. Compared with a standard ICP implementation, the proposed method reduces the mean positional error from approximately 0.12 m to 0.07 m (≈40 % improvement) and the mean angular error from 2.1° to 1.2°. Moreover, the method remains stable when a large fraction of the laser points are corrupted, whereas ICP often diverges or settles in a local minimum. Computationally, the new pipeline requires about 36 ms per scan versus 30 ms for ICP, representing a modest 20 % overhead that still satisfies real‑time requirements (≥10 Hz).

Key contributions of the work include: (1) a novel image‑based representation of laser scans that enables the use of Harris corner detection, (2) robust outlier rejection via RANSAC applied to keypoint correspondences, (3) seamless integration of the robust observation into an EKF framework for optimal pose fusion, and (4) extensive experimental evidence demonstrating superior accuracy and robustness over conventional ICP while maintaining real‑time performance.

Future research directions suggested by the authors involve extending the methodology to 3‑D LiDAR or RGB‑D sensors, incorporating deep‑learning‑based feature detectors to improve keypoint repeatability, and testing the system in large‑scale, dynamic outdoor environments. Such extensions would broaden the applicability of the technique to logistics robots, autonomous vehicles, and search‑and‑rescue platforms where reliable navigation under noisy perception is critical.