Evolving Culture vs Local Minima

We propose a theory that relates the difficulty of learning in deep architectures to culture and language. It is articulated around the following hypotheses: (1) learning in an individual human brain is hampered by the presence of effective local minima; (2) this optimization difficulty is particularly important when it comes to learning higher-level abstractions, i.e., concepts that cover a vast and highly nonlinear span of sensory configurations; (3) such high-level abstractions are best represented in brains by the composition of many levels of representation, i.e., by deep architectures; (4) a human brain can learn such high-level abstractions if guided by the signals produced by other humans, which act as hints or indirect supervision for these high-level abstractions; and (5) language and the recombination and optimization of mental concepts provide an efficient evolutionary recombination operator, and this gives rise to rapid search in the space of communicable ideas that help humans build up better high-level internal representations of their world. Taken together, these hypotheses imply that human culture and the evolution of ideas have been crucial in countering an optimization difficulty that would otherwise make it very hard for human brains to capture high-level knowledge of the world. The theory is grounded in experimental observations of the difficulties of training deep artificial neural networks. Plausible consequences of this theory for the efficiency of cultural evolution are sketched.

💡 Research Summary

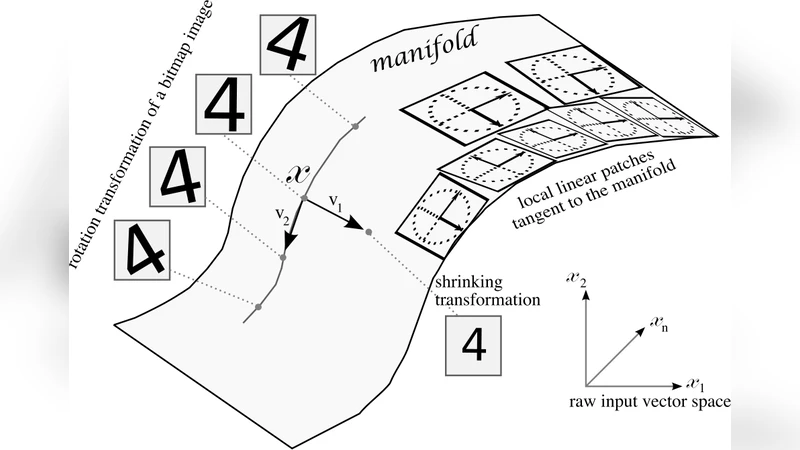

The paper puts forward a unified theory that links the difficulty of learning in deep neural architectures to the role of culture and language in human cognition. It is built around five interlocking hypotheses. First, learning in an individual brain is constrained by the presence of effective local minima in the high‑dimensional loss landscape that results from trying to map a vast, highly nonlinear space of sensory configurations onto internal representations. This mirrors the well‑documented problem in deep learning where deep networks, especially when poorly initialized or trained with inappropriate hyper‑parameters, become trapped in shallow basins and fail to discover globally optimal solutions.
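The trapping behaviour described above can be illustrated with a toy example. The sketch below is not taken from the paper: it assumes a hypothetical one-dimensional loss `f(x) = x**4 - 3*x**2 + x`, which has a shallow minimum near `x = 1.13` and a deeper one near `x = -1.30`, and it uses plain gradient descent with a fixed step size. The function names and constants are illustrative choices only.

```python
def f(x):
    """Hypothetical non-convex loss with two minima (illustration only)."""
    return x**4 - 3 * x**2 + x


def grad_f(x):
    """Analytic derivative of f."""
    return 4 * x**3 - 6 * x + 1


def descend(x0, lr=0.01, steps=2000):
    """Plain gradient descent from x0 with a fixed learning rate."""
    x = x0
    for _ in range(steps):
        x -= lr * grad_f(x)
    return x


if __name__ == "__main__":
    for start in (2.0, 0.5, -2.0):
        end = descend(start)
        print(f"start={start:+.1f}  end={end:+.3f}  loss={f(end):.3f}")
```

Under these assumptions, runs that start to the right of the hump between the two basins settle in the shallower minimum, while a run that starts on the left reaches the deeper one. The outcome depends only on initialization, which is the sense in which a purely local learner can be stuck with a poor solution.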

Second, the authors argue that this optimization difficulty is especially acute for “high‑level abstractions” – concepts such as physical laws, mathematical theorems, or social norms that span an enormous variety of sensory instantiations. Because such abstractions cannot be captured by a single, shallow mapping, the learner must explore a much larger portion of the loss surface, increasing the probability of getting stuck in a local minimum.

Third, the paper posits that the brain solves this problem by employing a deep, hierarchical architecture. Neuroscience evidence (cortical lamination, feed‑forward and feedback loops, progressive abstraction along the ventral stream) is presented as analogous to modern deep networks, in which each layer extracts increasingly abstract features and passes them upward for composition. In this view, depth is not a luxury but a necessity for representing high‑level concepts efficiently.

Fourth, the authors claim that a solitary brain cannot reliably climb out of these minima; it needs external “hints” that act as indirect supervision. Language is identified as the most efficient carrier of such hints because it compresses complex, high‑dimensional ideas into low‑dimensional symbolic tokens (words, sentences) that can be shared across individuals. Developmental psychology studies are cited showing that children acquire abstract concepts far more quickly when they receive linguistic scaffolding, and that isolated learners exhibit dramatically slower progress.

Fifth, the paper extends the argument to the cultural level, proposing that language and the recombination of mental concepts function as an evolutionary recombination operator. Ideas generated within individual brains are exchanged, mutated, and recombined through social interaction, much like crossover and mutation in genetic algorithms. This cultural recombination dramatically accelerates the search through the space of communicable ideas, allowing humanity to overcome the optimization bottleneck that would otherwise limit the acquisition of high‑level knowledge.
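The analogy with genetic algorithms can be made concrete with a minimal sketch. Everything here is an assumption made for illustration, not the paper's model: an "idea" is a list of bits, its quality is the number of ones (the classic OneMax toy objective), `crossover` splices two ideas at a random cut point, and `mutate` flips bits with a small probability. The best idea is carried over unchanged each generation, so the best score can never decrease.

```python
import random


def fitness(idea):
    """Toy quality measure (OneMax): count of 1-bits. Assumed for illustration."""
    return sum(idea)


def crossover(a, b, rng):
    """Single-point recombination: prefix of a followed by suffix of b."""
    cut = rng.randrange(1, len(a))
    return a[:cut] + b[cut:]


def mutate(idea, rng, rate=0.02):
    """Flip each bit independently with probability `rate`."""
    return [1 - bit if rng.random() < rate else bit for bit in idea]


def evolve(pop_size=30, length=40, generations=50, seed=0):
    """Return (best initial fitness, best final fitness) for a seeded run."""
    rng = random.Random(seed)
    population = [[rng.randint(0, 1) for _ in range(length)]
                  for _ in range(pop_size)]
    initial_best = max(map(fitness, population))
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        parents = population[: pop_size // 2]  # fitter half gets to "communicate"
        children = [
            mutate(crossover(rng.choice(parents), rng.choice(parents), rng), rng)
            for _ in range(pop_size - 1)
        ]
        population = [population[0]] + children  # elitism: keep the best idea
    return initial_best, max(map(fitness, population))


if __name__ == "__main__":
    before, after = evolve()
    print(f"best fitness before: {before}, after: {after}")
```

The point of the sketch is the division of labour: mutation alone explores locally, while recombination lets partial solutions found by different individuals be merged in a single step, which is the role the theory assigns to language.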

To support these hypotheses, the authors draw on three strands of evidence. (1) Empirical results from deep learning experiments demonstrate that deeper networks are harder to train and that careful initialization, curriculum learning, and auxiliary supervision can each substantially improve convergence; the authors treat these techniques as analogous to cultural scaffolding. (2) Cognitive and educational research shows that societies with rich linguistic exchange and formal instruction achieve faster cumulative knowledge growth than societies relying on oral transmission alone. (3) Comparative analyses of domains with high cultural transmission rates (e.g., modern science, mathematics) versus low‑transmission domains (e.g., certain folk traditions) reveal a correlation between transmission efficiency and the depth of abstract knowledge accumulated.

The paper concludes with several implications. For education, it suggests designing curricula that explicitly provide high‑level hints and encourage collaborative recombination of ideas, thereby mimicking the cultural recombination operator that the theory identifies as essential. For AI research, it recommends incorporating multi‑agent communication and meta‑learning frameworks inspired by human cultural processes to alleviate the local‑minimum problem in deep networks. In sum, the authors present a cross‑disciplinary framework that positions culture and language as evolutionary tools that enable human brains to surmount intrinsic optimization difficulties, thereby facilitating the rapid accumulation of high‑level abstractions that define modern civilization.