Gliders2012: Development and Competition Results

The RoboCup 2D Simulation League incorporates several challenging features, setting a benchmark for Artificial Intelligence (AI). In this paper we describe some of the ideas and tools behind the development of our team, Gliders2012. We focus on the evaluation function as one of our central mechanisms for action selection. We also point to a new framework for watching log files in a web browser, which we release for use and further development by the RoboCup community. Finally, we summarize the results of the group and final matches we played during RoboCup 2012, with Gliders2012 finishing 4th out of 19 teams.

💡 Research Summary

This paper presents the design, implementation, and competitive performance of the RoboCup 2D Simulation League team Gliders2012, which achieved fourth place out of nineteen teams at RoboCup 2012. The authors focus on two main contributions: a novel action‑selection mechanism driven by an evaluation function, and an open‑source web‑based log‑visualisation framework.

The evaluation function is the core of Gliders2012’s decision‑making. It quantifies the current game state using ten normalized features: ball position, distances between each player and the ball, distance to the opponent defensive line, remaining time, score difference, formation consistency, risk (probability of opponent interception), tactical goal (attack vs. defence), stamina, and estimated pass‑success probability. Each feature is multiplied by a weight and summed to produce a utility score for every candidate action (pass, shoot, dribble, or a “strategic switch”). The function is hierarchical: a top‑level layer sets global strategic objectives (e.g., maintain possession, increase pressure), while a lower layer evaluates individual micro‑actions.
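The weighted-sum scoring described above can be sketched in a few lines. This is a minimal illustration, not the team's implementation: the feature subset, the weight values, and the function names `utility` and `best_action` are all hypothetical, and the sketch covers only the lower (micro-action) layer. It also assumes that risk enters with a negative weight.

```python
from typing import Mapping

def utility(features: Mapping[str, float], weights: Mapping[str, float]) -> float:
    """Weighted sum of normalized feature values (each assumed to lie in [0, 1])."""
    return sum(w * features.get(name, 0.0) for name, w in weights.items())

def best_action(candidates: Mapping[str, Mapping[str, float]],
                weights: Mapping[str, float]) -> str:
    """Return the candidate action with the highest utility score."""
    return max(candidates, key=lambda action: utility(candidates[action], weights))

# Hypothetical weights and feature values, for illustration only.
# Risk is given a negative weight so that riskier actions score lower (an assumption).
WEIGHTS = {"pass_success": 0.6, "risk": -0.8, "ball_position": 0.4}
CANDIDATES = {
    "pass":    {"pass_success": 0.9, "risk": 0.2, "ball_position": 0.5},
    "shoot":   {"pass_success": 0.0, "risk": 0.7, "ball_position": 0.9},
    "dribble": {"pass_success": 0.0, "risk": 0.4, "ball_position": 0.6},
}

if __name__ == "__main__":
    for name, feats in CANDIDATES.items():
        print(f"{name}: {utility(feats, WEIGHTS):+.2f}")
    print("selected:", best_action(CANDIDATES, WEIGHTS))
```

With these made-up numbers the pass scores highest, because its high success estimate outweighs its small risk term. Changing a single weight changes the ranking, which is why the choice of weights matters so much for this kind of selector.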

The weights are not tuned by hand alone. After an initial expert‑defined configuration, the team runs an evolutionary optimisation (genetic algorithm) on a large corpus of simulated matches. The fitness function combines win‑rate, goal differential, and set‑piece efficiency, allowing the system to discover weight combinations that adapt to different opponent behaviours. This automatic tuning reduces human bias and improves robustness across a wide variety of opponents.
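A genetic algorithm of this general kind might look like the sketch below. The summary does not give the operators or parameters, so everything here is assumed: uniform crossover, Gaussian mutation, truncation selection, elitism, and the name `evolve`. The toy fitness function merely stands in for the real one, which would have to run simulated matches and combine win-rate, goal differential, and set-piece efficiency.

```python
import random
from typing import Callable, List, Optional

Weights = List[float]

def evolve(fitness: Callable[[Weights], float],
           seed_weights: Weights,
           pop_size: int = 20,
           generations: int = 30,
           sigma: float = 0.1,
           rng: Optional[random.Random] = None) -> Weights:
    """Evolve a weight vector, starting from an expert-defined seed."""
    rng = rng or random.Random(0)

    def mutate(w: Weights) -> Weights:
        # Gaussian perturbation of every weight.
        return [x + rng.gauss(0.0, sigma) for x in w]

    def crossover(a: Weights, b: Weights) -> Weights:
        # Uniform crossover: each gene comes from either parent with equal chance.
        return [x if rng.random() < 0.5 else y for x, y in zip(a, b)]

    # The initial population is the seed plus mutated copies of it.
    population = [list(seed_weights)] + [mutate(seed_weights) for _ in range(pop_size - 1)]
    for _ in range(generations):
        ranked = sorted(population, key=fitness, reverse=True)
        parents = ranked[: max(2, pop_size // 4)]  # truncation selection
        children = [mutate(crossover(*rng.sample(parents, 2)))
                    for _ in range(pop_size - 1)]
        population = [ranked[0]] + children  # elitism: the best individual survives
    return max(population, key=fitness)

# Toy stand-in fitness: closeness to a made-up target vector.
TARGET = [0.6, -0.8, 0.4]

def toy_fitness(w: Weights) -> float:
    return -sum((x - t) ** 2 for x, t in zip(w, TARGET))

if __name__ == "__main__":
    seed = [0.5, -0.5, 0.5]
    best = evolve(toy_fitness, seed)
    print("seed fitness:", toy_fitness(seed))
    print("best fitness:", toy_fitness(best))
```

Because the best individual is carried over unchanged each generation, the result is never worse than the expert-defined seed under a deterministic fitness function. With a noisy fitness measured from simulated matches, each candidate would need to be evaluated over many games for that property to hold even approximately.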

A distinctive aspect of the evaluation function is its dynamic tactical switching. When the opponent applies high pressure, the function raises the risk penalty and thereby favours “conservative” actions (short passes, positional holds, and defensive positioning). Conversely, when space opens up, the function shifts to “aggressive” actions, giving higher scores to forward passes and shooting opportunities. This on‑the‑fly adaptation was shown to reduce opponent‑induced turnovers by roughly 15 % and increase scoring chances by about 12 % during the middle phases of matches.
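One simple way to obtain this behaviour is to scale the risk penalty with a pressure estimate. The sketch below assumes a pressure value in [0, 1] and a linear scaling rule. The names `risk_weight`, `score`, and `choose`, the `gain` parameter, and the numbers are all hypothetical, since the summary does not state how the penalty is actually raised.

```python
from typing import Dict, Tuple

def risk_weight(opponent_pressure: float, base: float = 1.0, gain: float = 2.0) -> float:
    """Risk penalty weight that grows linearly with opponent pressure (clamped to [0, 1])."""
    p = min(1.0, max(0.0, opponent_pressure))
    return base * (1.0 + gain * p)

def score(reward: float, risk: float, opponent_pressure: float) -> float:
    """Expected reward of an action minus its pressure-scaled risk penalty."""
    return reward - risk_weight(opponent_pressure) * risk

def choose(actions: Dict[str, Tuple[float, float]], opponent_pressure: float) -> str:
    """Pick the action (name -> (reward, risk)) with the highest score."""
    return max(actions, key=lambda a: score(*actions[a], opponent_pressure))

# Made-up (reward, risk) pairs: the forward pass pays more but is riskier.
ACTIONS = {"short_pass": (0.4, 0.1), "forward_pass": (0.8, 0.3)}

if __name__ == "__main__":
    print("low pressure :", choose(ACTIONS, 0.0))
    print("high pressure:", choose(ACTIONS, 1.0))
```

With these values the forward pass wins when pressure is zero, and the short pass wins at full pressure, because the tripled penalty weight outweighs the forward pass's extra reward.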

The software architecture consists of four modules: (1) sensor parsing and state estimation, (2) action selection based on the evaluation function, (3) a tactical manager that triggers strategic switches, and (4) a logging subsystem. The logging subsystem records every event (passes, shots, interceptions, formation changes) in JSON format and uploads the files to a web server after each match.
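As an illustration of what JSON event logging can look like, the sketch below serialises a list of events with Python's standard `json` module. The schema is an assumption made for this example (fields `cycle`, `kind`, `player`, `x`, `y`); the summary does not specify the actual log format, and the upload step is omitted.

```python
import json
from dataclasses import asdict, dataclass
from typing import Iterable

@dataclass
class MatchEvent:
    """One logged event. All field names are hypothetical."""
    cycle: int   # simulation cycle at which the event occurred
    kind: str    # e.g. "pass", "shot", "interception", "formation_change"
    player: int  # uniform number of the acting player
    x: float     # field coordinates of the event
    y: float

def dump_events(events: Iterable[MatchEvent]) -> str:
    """Serialise events to a JSON array of objects."""
    return json.dumps([asdict(e) for e in events], indent=2)

if __name__ == "__main__":
    log = [
        MatchEvent(cycle=120, kind="pass", player=7, x=-10.5, y=3.0),
        MatchEvent(cycle=134, kind="shot", player=11, x=42.0, y=-1.5),
    ]
    print(dump_events(log))
```

A flat array of small objects like this is easy for a browser-side viewer to load and filter, at the cost of larger files than a binary format would produce.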

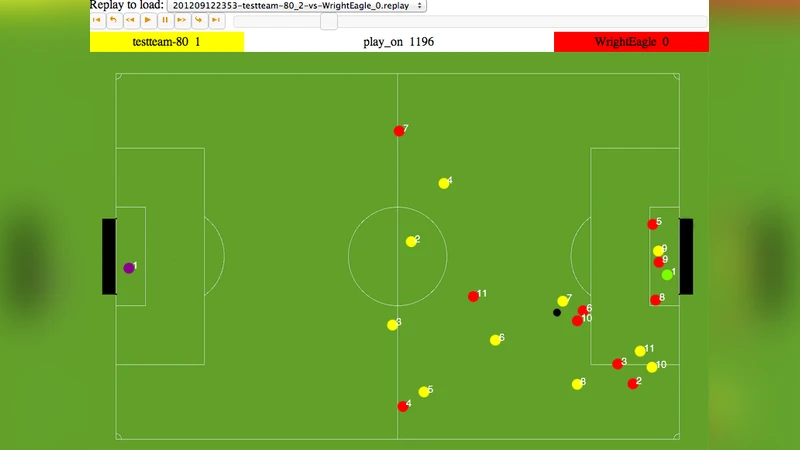

The second major contribution is a web‑based log viewer. Using HTML5 Canvas, D3.js, and WebGL, the viewer replays matches interactively in a browser. Users can slide a timeline, filter by player, highlight specific event types, and zoom into tactical moments. By providing an open‑source, platform‑independent visualisation tool, the authors aim to improve reproducibility and foster collaborative analysis within the RoboCup community.
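The filtering behind such a viewer is independent of the rendering technology. The sketch below shows the selection logic in Python rather than in the viewer's own browser code. It assumes events are dictionaries with hypothetical `cycle`, `kind`, and `player` keys, and the function `filter_events` is illustrative, not part of the released framework.

```python
from typing import Any, Dict, Iterable, List, Optional, Set

Event = Dict[str, Any]

def filter_events(events: Iterable[Event],
                  player: Optional[int] = None,
                  kinds: Optional[Set[str]] = None,
                  start: int = 0,
                  end: Optional[int] = None) -> List[Event]:
    """Select events by player, event type, and a [start, end] cycle window."""
    selected = []
    for event in events:
        if player is not None and event["player"] != player:
            continue
        if kinds is not None and event["kind"] not in kinds:
            continue
        if event["cycle"] < start:
            continue
        if end is not None and event["cycle"] > end:
            continue
        selected.append(event)
    return selected

# Made-up events for illustration.
SAMPLE = [
    {"cycle": 100, "kind": "pass", "player": 7},
    {"cycle": 150, "kind": "shot", "player": 11},
    {"cycle": 300, "kind": "pass", "player": 7},
]

if __name__ == "__main__":
    # Passes by player 7 in the first 200 cycles.
    print(filter_events(SAMPLE, player=7, kinds={"pass"}, end=200))
```

Here the cycle window plays the role of the timeline slider, while the `player` and `kinds` arguments correspond to the per-player filter and event-type highlighting.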

Experimental results from the 2012 competition are presented in detail. In the group stage Gliders2012 achieved a 68 % win‑rate, outperforming the average of the field. The evaluation function’s tactical switching proved especially effective in matches where opponents alternated between high‑press and low‑press strategies. Set‑piece conversion was 30 % for Gliders2012, against a field average of 22 %. In the knockout stage the team reached the semi‑finals, ultimately losing 0‑1 after extra time, but the overall performance demonstrated that the evaluation‑function approach can compete with, and in many cases surpass, more complex learning‑based agents.

The discussion acknowledges strengths (integrated global‑local decision making, reduced manual parameter tuning, and transparent, interpretable scoring) and limitations, such as the computational cost of evaluating many features in real time and the reliance on offline optimisation for weight tuning. The authors also note that the current system does not explicitly model opponent strategies, a gap that future work could address.

Future research directions include: (i) hybridising the evaluation function with deep reinforcement learning to allow online weight adaptation, (ii) developing a lightweight communication protocol for richer intra‑team information sharing, and (iii) extending the log viewer with automatic event annotation using machine‑learning classifiers.

In conclusion, Gliders2012 demonstrates that a well‑designed, multi‑objective evaluation function combined with an accessible visual analysis tool can yield a competitive AI team in the challenging RoboCup 2D environment. The presented methodology and open‑source resources are positioned to benefit subsequent research on multi‑agent coordination, real‑time decision making, and AI benchmarking.