Localization from Incomplete Noisy Distance Measurements

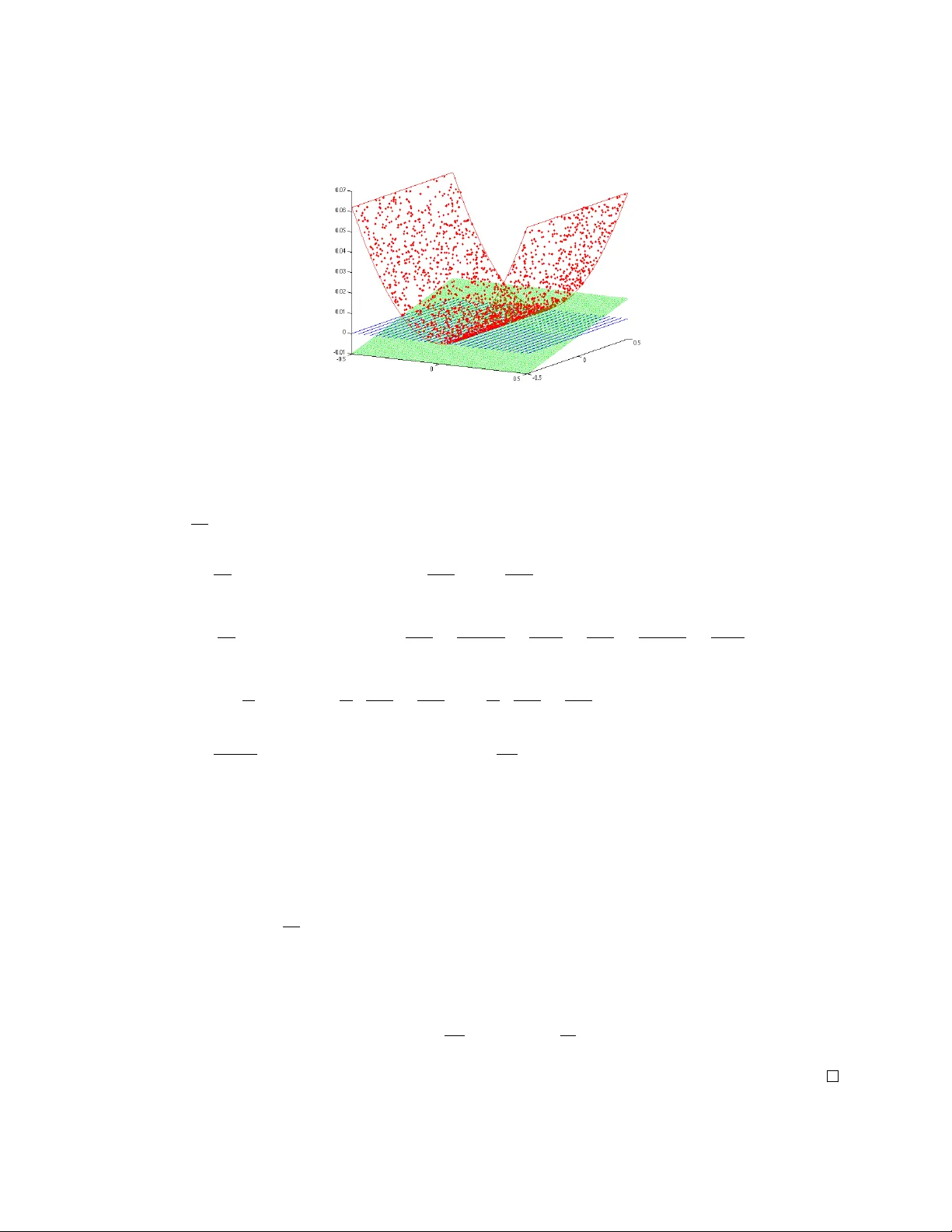

We consider the problem of positioning a cloud of points in the Euclidean space $\mathbb{R}^d$ using noisy measurements of a subset of the pairwise distances. This task has applications in areas such as sensor network localization and reconstru…

Authors: Adel Javanmard, Andrea Montanari