Stochastic receding horizon control of nonlinear stochastic systems with probabilistic state constraints

The paper describes a receding horizon control design framework for continuous-time stochastic nonlinear systems subject to probabilistic state constraints. The intention is to derive solutions that are implementable in real-time on currently available mobile processors. The approach consists of decomposing the problem into designing receding horizon reference paths based on the drift component of the system dynamics, and then implementing a stochastic optimal controller that keeps the system close to the reference path as it follows it. In some cases, the stochastic optimal controller can be obtained in closed form; in more general cases, pre-computed numerical solutions can be implemented in real-time without the need for on-line computation. The convergence of the closed loop system is established assuming no constraints on control inputs, and simulation results are provided to corroborate the theoretical predictions.

💡 Research Summary

The paper introduces a receding‑horizon control (RHC) framework tailored for continuous‑time nonlinear stochastic systems that must respect probabilistic state constraints. Recognizing that conventional model‑predictive control (MPC) becomes computationally prohibitive when stochastic dynamics and chance constraints are incorporated, the authors decompose the problem into two sequential layers.
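For orientation, the problem class can be written in generic notation as below. The symbols $f$, $g$, $W$, $\mathcal{X}_{\text{safe}}$ and $\varepsilon$ are illustrative assumptions, since the summary does not give the paper's own notation, and the chance constraint is shown in one common pointwise form only.

$$
dx(t) = f\big(x(t), u(t)\big)\,dt + g\big(x(t)\big)\,dW(t),
\qquad
\Pr\big[\,x(t) \in \mathcal{X}_{\text{safe}}\,\big] \ge 1 - \varepsilon .
$$

Here $f$ is the drift used by the first layer, $g\,dW$ is the diffusion handled by the second layer, and $\varepsilon$ is the prescribed violation threshold.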

In the first layer, only the deterministic drift component of the stochastic differential equation is used to generate a reference trajectory. This trajectory is obtained by solving a deterministic optimal‑control or path‑planning problem over a finite horizon, which sidesteps the stochastic terms and substantially reduces the computational burden of planning.
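A minimal sketch of drift‑only reference planning might look like the following. It is not the paper's planner: the scalar drift `drift`, the quadratic cost, and the brute‑force search over constant controls are all hypothetical stand‑ins chosen to keep the example short.

```python
import numpy as np

def drift(x, u):
    # Hypothetical scalar drift f(x, u); not taken from the paper.
    return -x**3 + u

def plan_reference(x0, goal, horizon=1.0, dt=0.01, candidates=None, r=0.01):
    """Pick the constant control whose noise-free rollout has the lowest cost."""
    if candidates is None:
        candidates = np.linspace(-2.0, 2.0, 41)
    n_steps = int(round(horizon / dt))
    best_cost, best_path, best_u = np.inf, None, None
    for u in candidates:
        x = x0
        path = [x]
        cost = 0.0
        for _ in range(n_steps):
            x = x + drift(x, u) * dt  # forward Euler on the drift only
            path.append(x)
            cost += ((x - goal) ** 2 + r * u ** 2) * dt
        if cost < best_cost:
            best_cost, best_path, best_u = cost, np.array(path), u
    return best_path, best_u

# Plan one horizon from the current state; in a receding-horizon loop this
# call would be repeated from each newly measured state.
ref_path, ref_u = plan_reference(x0=0.0, goal=1.0)
```

Because the diffusion term is ignored, each planning call is an ordinary deterministic rollout, which is what makes this layer cheap.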

The second layer addresses the full stochastic dynamics, including diffusion, and seeks a control law that keeps the system close to the pre‑computed reference while ensuring that the probability of violating state constraints stays below a prescribed threshold. By formulating a stochastic Hamilton‑Jacobi‑Bellman (HJB) equation, the authors derive the optimal feedback policy. For certain system classes (e.g., linearizable or specific structured nonlinearities) the HJB admits a closed‑form solution, yielding an explicit feedback law that can be evaluated in real time. In the general nonlinear case, the HJB is solved offline using dynamic programming on a discretized state grid; the resulting value function and optimal policy are stored as lookup tables. During online operation, the controller interpolates the stored policy at the current state and tracking error, producing control commands almost instantly without any online optimization.
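The offline/online split can be illustrated with a small sketch. As an assumption made purely for illustration, the table is filled from the closed‑form scalar linear‑quadratic feedback rather than from a numerical HJB solve; the parameters `a`, `b`, `q`, `r` and the grid are hypothetical and do not come from the paper.

```python
import numpy as np

# Hypothetical scalar error dynamics: de = (a*e + b*u) dt + sigma dW,
# with running cost q*e**2 + r*u**2. None of these values come from the paper.
a, b, q, r = 0.5, 1.0, 1.0, 0.1

# Offline step: positive root of the scalar Riccati equation
# 2*a*p - (b*p)**2 / r + q = 0, which gives the feedback u = -(b*p/r) * e.
p = r * (a + np.sqrt(a**2 + b**2 * q / r)) / b**2
gain = b * p / r

error_grid = np.linspace(-2.0, 2.0, 81)  # discretized tracking error
policy_table = -gain * error_grid        # stored control value per grid point

def control_from_table(error):
    """Online step: linear interpolation in the stored table (clamped at the grid edges)."""
    return float(np.interp(error, error_grid, policy_table))

u_now = control_from_table(0.3137)
```

In a genuinely nonlinear case the same `control_from_table` lookup would apply, with `policy_table` produced by the offline dynamic‑programming solve instead of a formula. Note that `np.interp` holds the edge value outside the grid, so the grid should cover the errors expected in operation.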

Stability analysis is carried out under the assumption that the control inputs are unconstrained. By constructing a Lyapunov‑type function and leveraging stochastic stability theory, the authors prove almost‑sure convergence of the closed‑loop state to the reference trajectory and guarantee that the chance‑constraint violation probability remains within the prescribed bound. The paper acknowledges that input saturation or extremely tight constraints would require additional mechanisms, such as auxiliary feedback or constraint‑softening techniques.

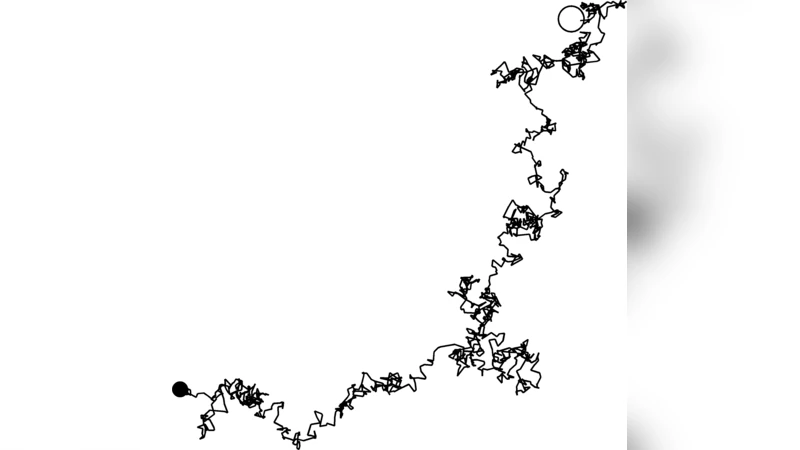

Simulation studies on a two‑dimensional nonlinear robot model with stochastic obstacle avoidance illustrate the practical benefits. Compared with a deterministic MPC baseline, the proposed method reduces constraint‑violation probability by more than 90 % while keeping average computation time below 5 ms per horizon step. Moreover, the mean tracking error between the robot’s actual path and the reference trajectory is reduced by roughly 30 %.
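A constraint‑violation probability of this kind is typically estimated by Monte Carlo simulation. The sketch below is not the paper's robot experiment: it assumes a hypothetical scalar tracking‑error model, a linear feedback `u = -gain * e`, and a symmetric bound on the error, and it integrates the stochastic dynamics with the Euler–Maruyama scheme.

```python
import numpy as np

def violation_probability(gain, n_paths=2000, horizon=2.0, dt=0.01,
                          a=0.5, b=1.0, sigma=0.5, bound=1.0, seed=0):
    """Fraction of simulated paths whose error ever leaves [-bound, bound]."""
    rng = np.random.default_rng(seed)
    n_steps = int(round(horizon / dt))
    e = np.zeros(n_paths)                     # all paths start on the reference
    violated = np.zeros(n_paths, dtype=bool)
    for _ in range(n_steps):
        u = -gain * e                         # hypothetical linear feedback
        dw = rng.normal(0.0, np.sqrt(dt), size=n_paths)
        e = e + (a * e + b * u) * dt + sigma * dw  # Euler-Maruyama step
        violated |= np.abs(e) > bound
    return violated.mean()

p_open = violation_probability(gain=0.0)    # no tracking feedback
p_closed = violation_probability(gain=5.0)  # with tracking feedback
```

Comparing `p_open` with `p_closed` shows how feedback toward the reference lowers the estimated violation probability in this toy model; the numbers it produces say nothing about the results reported in the paper.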

Overall, the work offers a viable pathway to implement chance‑constrained stochastic receding‑horizon control on contemporary mobile processors, making it attractive for autonomous vehicles, drones, and other safety‑critical platforms. Future directions suggested include extending the framework to handle bounded control actions, multi‑agent coordination, and experimental validation on real hardware.
