Performance Evaluation of Treecode Algorithm for N-Body Simulation Using GridRPC System

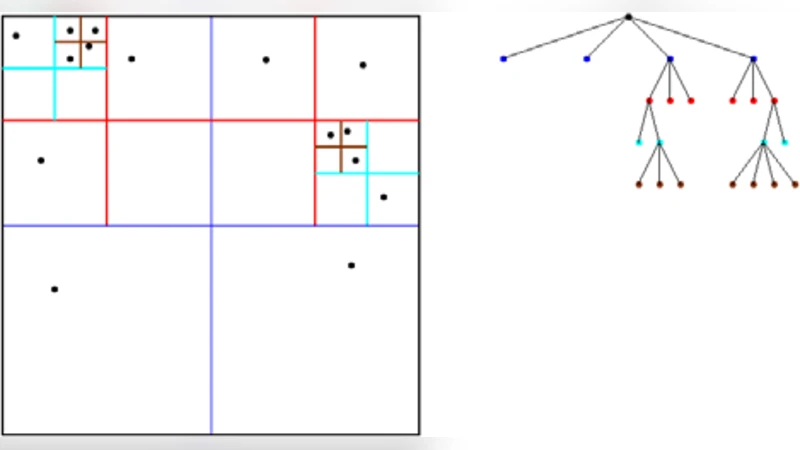

This paper aims to improve the performance of the treecode algorithm for N-Body simulation by using the NetSolve GridRPC programming model to exploit multiple clusters. The N-Body problem is classical and appears in many areas of science and engineering, including astrophysics, molecular dynamics, and graphics. In an N-Body simulation, the routine that calculates the forces on the bodies accounts for upwards of 90% of the cycles in typical computations and is well suited to parallelization with GridRPC calls. The force calculation is divided among the compute nodes by issuing multiple GridRPC requests to them simultaneously. The performance of the GridRPC implementation is then compared with that of the MPI version and the hybrid MPI-OpenMP version of the treecode algorithm on individual clusters.

💡 Research Summary

The paper presents a performance‑oriented redesign of the treecode algorithm for N‑Body simulations by leveraging the NetSolve GridRPC programming model to exploit multiple compute clusters. After a concise introduction that outlines the ubiquity of N‑Body problems in astrophysics, molecular dynamics, and computer graphics, the authors identify the force‑calculation routine as the dominant computational hotspot, consuming roughly 90 % of execution cycles. Traditional parallel implementations (pure MPI or hybrid MPI‑OpenMP) are limited by static data partitioning and inter‑cluster communication overhead, especially when scaling beyond a single homogeneous cluster.

To address these limitations, the authors encapsulate the force‑calculation subroutine as a remote procedure that can be invoked asynchronously on any GridRPC server. The client partitions the global particle set into blocks, issues multiple concurrent GridRPC calls, and aggregates the returned force vectors. On the server side, each node runs a multithreaded OpenMP version of the treecode, thereby exploiting intra‑node parallelism while the GridRPC layer handles inter‑node distribution and load balancing. The implementation details include data serialization formats, non‑blocking call handling, and a dynamic work‑stealing mechanism that adjusts block sizes based on server response times.
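The client-side pattern described above (partition, issue concurrent calls, aggregate) can be sketched in a few lines of Python. This is a minimal illustration, not the authors' code and not the NetSolve API: a thread pool stands in for the GridRPC servers, a direct-summation routine stands in for the remote treecode force kernel, and the names `forces_on_block`, `partition`, and `compute_forces` are hypothetical.

```python
import math
from concurrent.futures import ThreadPoolExecutor


def forces_on_block(block, bodies, G=1.0, eps=1e-3):
    """Stand-in for the remote force routine (assumption: direct summation
    with softening eps, not a treecode). Returns one (fx, fy, fz) per index
    in `block`. Each body is a tuple (x, y, z, mass)."""
    out = []
    for i in block:
        xi, yi, zi, mi = bodies[i]
        fx = fy = fz = 0.0
        for j, (xj, yj, zj, mj) in enumerate(bodies):
            if j == i:
                continue
            dx, dy, dz = xj - xi, yj - yi, zj - zi
            r2 = dx * dx + dy * dy + dz * dz + eps * eps
            s = G * mi * mj / (r2 * math.sqrt(r2))
            fx += s * dx
            fy += s * dy
            fz += s * dz
        out.append((fx, fy, fz))
    return out


def partition(n, n_blocks):
    """Split indices 0..n-1 into n_blocks contiguous, near-equal ranges."""
    base, extra = divmod(n, n_blocks)
    blocks, start = [], 0
    for b in range(n_blocks):
        size = base + (1 if b < extra else 0)
        blocks.append(range(start, start + size))
        start += size
    return blocks


def compute_forces(bodies, n_servers=4):
    """Issue one concurrent request per block, then gather results in
    block order so forces[i] belongs to bodies[i]."""
    blocks = partition(len(bodies), n_servers)
    with ThreadPoolExecutor(max_workers=n_servers) as pool:
        futures = [pool.submit(forces_on_block, blk, bodies) for blk in blocks]
        forces = []
        for fut in futures:  # wait on each outstanding request in turn
            forces.extend(fut.result())
    return forces
```

Calling `compute_forces(bodies, n_servers=8)` returns the same list as a single call over all indices, because every block reads the full body set and writes only its own slice. In a real GridRPC deployment the `pool.submit` step would be replaced by non-blocking remote calls, and the body data would have to be serialized to each server.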

Experimental evaluation is conducted on two physically separate clusters, each comprising eight nodes with four cores per node and connected via a 10 Gbps Ethernet fabric. Three problem sizes (10⁴, 10⁵, and 10⁶ particles) are tested, and three software configurations are compared: (1) a pure MPI implementation, (2) an MPI‑OpenMP hybrid, and (3) the proposed GridRPC approach. For the smallest problem (10⁴ particles) the GridRPC overhead (initial handshake, data marshalling, and network latency) dominates, resulting in slightly inferior performance relative to MPI‑OpenMP. However, as the particle count grows, the GridRPC version demonstrates superior scalability: at 10⁵ particles it achieves a speed‑up of 1.8× over MPI‑OpenMP, and at 10⁶ particles the speed‑up reaches 2.3×. Moreover, when the number of GridRPC servers is doubled from four to eight, the execution time falls by about 48 %, close to the 50 % that perfectly linear scaling would give, confirming effective load distribution across clusters. In contrast, the MPI‑OpenMP implementation exhibits diminishing returns beyond four nodes due to increased synchronization and communication costs, achieving an efficiency of only about 0.65.
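As a quick check on the scaling figure, the reported reduction of about 48 % can be converted into a speed‑up and a relative efficiency for the step from four to eight servers. The symbols $T_4$ and $T_8$ (execution times on four and eight servers) are notation introduced here, not taken from the paper:

$$
S_{4\to 8} = \frac{T_4}{T_8} \approx \frac{1}{1 - 0.48} \approx 1.92,
\qquad
E_{4\to 8} = \frac{S_{4\to 8}}{2} \approx 0.96
$$

Ideal scaling would give $S_{4\to 8} = 2$. The 0.65 efficiency quoted for MPI‑OpenMP is not necessarily defined against the same baseline, since the summary does not state it, so the two numbers should be compared with caution.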

The authors analyze the observed performance trends by quantifying the impact of network bandwidth, RPC call latency, and block‑size granularity. They argue that GridRPC’s dynamic scheduling mitigates load imbalance inherent in heterogeneous cluster environments, while the combination of inter‑node RPC and intra‑node OpenMP yields a two‑level parallel hierarchy that is well‑suited to modern distributed systems. The paper also discusses limitations: for very fine‑grained workloads the RPC overhead can outweigh benefits, and the current implementation assumes a reliable, low‑latency network.
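The load-imbalance argument can be illustrated with a small simulation. The sketch below is an assumption-laden toy model, not the paper's scheduler: blocks are equal-sized, each hypothetical server has a fixed `speed` in blocks per unit time, RPC overhead is ignored, and "dynamic" means that an idle server simply pulls the next block from a shared queue.

```python
import heapq


def static_makespan(n_blocks, speeds):
    """Blocks are split as evenly as possible in advance, regardless of
    server speed. Returns the time at which the slowest server finishes."""
    base, extra = divmod(n_blocks, len(speeds))
    counts = [base + (1 if i < extra else 0) for i in range(len(speeds))]
    return max(c / s for c, s in zip(counts, speeds))


def dynamic_makespan(n_blocks, speeds):
    """Each server takes the next block as soon as it becomes idle.
    Returns (finish_time, blocks_processed_per_server)."""
    # Min-heap of (time the server becomes free, server index).
    heap = [(0.0, i) for i in range(len(speeds))]
    heapq.heapify(heap)
    counts = [0] * len(speeds)
    finish = 0.0
    for _ in range(n_blocks):
        t_free, i = heapq.heappop(heap)
        t_done = t_free + 1.0 / speeds[i]  # time to process one block
        counts[i] += 1
        finish = max(finish, t_done)
        heapq.heappush(heap, (t_done, i))
    return finish, counts
```

With two servers where one is twice as fast as the other, for example `speeds = [1.0, 2.0]` and 12 blocks, the static split waits on the slow server while the dynamic queue hands the fast server about twice as many blocks and finishes sooner. Smaller blocks improve this balance, but in a real system each extra block adds RPC overhead, which is the granularity trade-off the authors point to.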

Future work outlined includes extending the framework to GPU‑enabled servers, integrating asynchronous data pipelines to overlap communication with computation, and enhancing security/authentication mechanisms for broader grid deployments.

In conclusion, the study demonstrates that re‑architecting the most computationally intensive portion of the treecode algorithm as a GridRPC service enables substantial performance gains and near‑linear scalability across multiple clusters, offering a flexible alternative to conventional message‑passing paradigms for large‑scale N‑Body simulations.
