Learning Monocular Reactive UAV Control in Cluttered Natural Environments

Autonomous navigation for large Unmanned Aerial Vehicles (UAVs) is fairly straightforward, as expensive sensors and monitoring devices can be employed. In contrast, obstacle avoidance remains a challenging task for Micro Aerial Vehicles (MAVs), which operate at low altitude in cluttered environments. Unlike large vehicles, MAVs can only carry very light sensors, such as cameras, making autonomous navigation through obstacles much more challenging. In this paper, we describe a system that navigates a small quadrotor helicopter autonomously at low altitude through natural forest environments. Using only a single cheap camera to perceive the environment, we are able to maintain a constant velocity of up to 1.5 m/s. Given a small set of human pilot demonstrations, we use recent state-of-the-art imitation learning techniques to train a controller that can avoid trees by adapting the MAV's heading. We demonstrate the performance of our system in a more controlled environment indoors, and in real natural forest environments outdoors.

💡 Research Summary


The paper addresses the problem of autonomous navigation for micro aerial vehicles (MAVs) that can carry only a lightweight, inexpensive sensor (a single monocular camera) while operating at low altitude in cluttered natural environments such as forests. Large UAVs can rely on heavy, expensive sensors (LiDAR, radar, stereo vision) to build accurate 3‑D maps and plan safe trajectories. MAVs cannot do this because of strict weight and power budgets. The authors therefore propose a reactive control system that learns to avoid obstacles directly from raw camera images using state‑of‑the‑art imitation learning techniques.

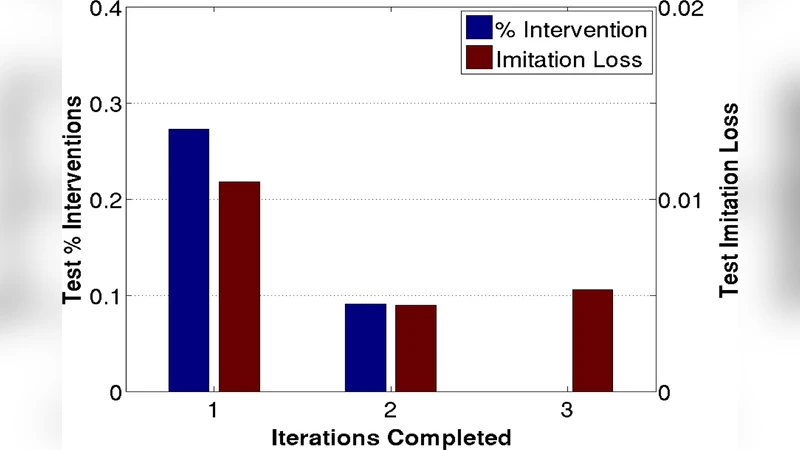

First, a small set of human‑piloted demonstration flights is collected, both in a controlled indoor setting (with artificial pillars and boxes) and in real outdoor forest scenes (with trees, branches, leaves, and varying illumination). Each recorded frame yields a training pair: the camera image and the corresponding pilot command (heading change and forward speed). The authors then apply DAgger (Dataset Aggregation), an iterative imitation learning method. DAgger mitigates the distribution shift inherent in plain behavior cloning, where a policy trained only on expert trajectories drifts into states the expert never visited. An initial policy π₀ is trained on the demonstration data via supervised regression. In each subsequent iteration, the current policy flies the MAV autonomously and the images it encounters are recorded. A human expert then labels those same images with the commands they would have given. These expert‑labeled pairs are added to the aggregated training set, and the policy is retrained on all data collected so far. Repeating this loop several times yields a policy that is robust to the states it actually encounters during autonomous operation.
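The DAgger loop can be sketched on a toy one‑dimensional problem. Everything here is a hypothetical stand‑in:

- the state `s` is a scalar lateral offset rather than a camera image;
- `expert_action` is a scripted function playing the role of the human pilot;
- the policy is a two‑parameter linear fit.

What the sketch does show is the structure of the algorithm. It trains on expert‑flown data first, then lets the learned policy fly. The expert labels the states that policy visits, the labels are aggregated, and the policy is retrained.

```python
import random


def expert_action(s):
    """Hypothetical stand-in for the human pilot: steer away from the obstacle.

    `s` is a toy 1-D state (lateral offset from the nearest tree). The command
    saturates at +/-0.5, so the expert is not exactly linear.
    """
    return max(-0.5, min(0.5, -0.8 * s))


def fit_linear(data):
    """Ordinary least squares for a = w0 + w1 * s over (state, action) pairs."""
    n = len(data)
    mean_s = sum(s for s, _ in data) / n
    mean_a = sum(a for _, a in data) / n
    var_s = sum((s - mean_s) ** 2 for s, _ in data)
    cov = sum((s - mean_s) * (a - mean_a) for s, a in data)
    w1 = cov / var_s
    return mean_a - w1 * mean_s, w1


def rollout(policy, rng, steps=20):
    """Fly one toy episode under `policy` and return the states it visits."""
    s = rng.uniform(-2.0, 2.0)  # random initial offset
    visited = []
    for _ in range(steps):
        visited.append(s)
        s = s + policy(s) + rng.gauss(0.0, 0.3)  # command plus a random disturbance
    return visited


def dagger(iterations=5, episodes=10, seed=0):
    rng = random.Random(seed)
    # Iteration 0 (behavior cloning): the expert flies and its commands are recorded.
    data = [(s, expert_action(s))
            for _ in range(episodes)
            for s in rollout(expert_action, rng)]
    w0, w1 = fit_linear(data)
    for _ in range(iterations):
        def policy(s, w0=w0, w1=w1):
            return w0 + w1 * s
        # The *learned* policy flies; the expert only labels the states it visits.
        for _ in range(episodes):
            data += [(s, expert_action(s)) for s in rollout(policy, rng)]
        # Retrain on the aggregated dataset (all iterations so far).
        w0, w1 = fit_linear(data)
    return (w0, w1), len(data)


(w0, w1), n = dagger()
print(f"w0={w0:.3f}  w1={w1:.3f}  training pairs={n}")
```

After the first iteration the expert never controls the vehicle; it only supplies labels. This is why the aggregated dataset covers the states the learned policy actually reaches.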

To extract useful information from a single RGB frame, the authors design a set of visual features that approximate depth and motion cues without explicit 3‑D reconstruction. The feature suite includes multi‑scale optical flow vectors (capturing the apparent expansion of nearby objects as the MAV moves forward), texture gradients (highlighting high‑frequency surface details of tree bark and foliage), color histograms (providing coarse segmentation between sky, foliage, and ground), and a lightweight monocular depth estimator derived from a pre‑trained convolutional network. All features are concatenated into a high‑dimensional descriptor that feeds a linear regression model, which directly predicts the desired heading adjustment (δθ) and forward velocity (v). The linear model is deliberately simple to enable real‑time inference on the limited onboard processor of a micro‑quadrotor.
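The following is a minimal sketch of the descriptor‑plus‑linear‑regression pipeline, in Python with no third‑party libraries. It is not the authors' feature set. The two feature groups are hypothetical simplifications:

- `band_gradient_energy` is a per‑column‑band texture proxy;
- `intensity_histogram` is a brightness histogram standing in for the color cue.

Optical flow and the CNN depth estimate are omitted. The weights are fit with ridge regression, one common way to train a linear model, assumed here rather than taken from the paper. The model maps one descriptor to the two outputs named above, (δθ, v).

```python
def band_gradient_energy(img, bands=4):
    """Mean absolute horizontal gradient inside each vertical band of the image.

    `img` is a list of rows of grayscale floats in [0, 1]; its width must be at
    least 2 * bands. Strong gradients are a crude proxy for bark/foliage texture.
    """
    h, w = len(img), len(img[0])
    width = w // bands
    feats = []
    for b in range(bands):
        lo, hi = b * width, (b + 1) * width
        total = sum(abs(row[x + 1] - row[x]) for row in img for x in range(lo, hi - 1))
        feats.append(total / (h * (width - 1)))
    return feats


def intensity_histogram(img, bins=4):
    """Normalized brightness histogram, a rough sky/foliage/ground cue."""
    pixels = [p for row in img for p in row]
    counts = [0] * bins
    for p in pixels:
        counts[min(int(p * bins), bins - 1)] += 1
    return [c / len(pixels) for c in counts]


def descriptor(img):
    """Concatenate the feature groups and append a constant bias term."""
    return band_gradient_energy(img) + intensity_histogram(img) + [1.0]


def ridge_fit(X, Y, lam=1e-3):
    """Solve (X^T X + lam*I) W = X^T Y by Gauss-Jordan elimination.

    X is n x d (descriptors); Y is n x k (here k = 2: heading change, speed).
    Returns W as a d x k list of lists.
    """
    d, k = len(X[0]), len(Y[0])
    A = [[sum(x[i] * x[j] for x in X) + (lam if i == j else 0.0) for j in range(d)]
         for i in range(d)]
    B = [[sum(x[i] * y[c] for x, y in zip(X, Y)) for c in range(k)] for i in range(d)]
    for col in range(d):
        piv = max(range(col, d), key=lambda r: abs(A[r][col]))  # partial pivoting
        A[col], A[piv] = A[piv], A[col]
        B[col], B[piv] = B[piv], B[col]
        for r in range(d):
            if r != col:  # eliminate this column from every other row
                f = A[r][col] / A[col][col]
                A[r] = [a - f * p for a, p in zip(A[r], A[col])]
                B[r] = [b - f * p for b, p in zip(B[r], B[col])]
    return [[B[i][c] / A[i][i] for c in range(k)] for i in range(d)]


def predict(W, x):
    """Linear policy: one dot product per output, returning (delta_theta, v)."""
    return tuple(sum(x[i] * W[i][c] for i in range(len(x))) for c in range(len(W[0])))


# Toy usage: an 8x8 frame with a striped "trunk" on the left and flat sky on the right.
frame = [[(x % 2) * 0.8 if x < 4 else 0.9 for x in range(8)] for _ in range(8)]
print([round(f, 2) for f in descriptor(frame)])
```

Prediction costs one dot product per output, so inference time grows only linearly with descriptor length. That property is what makes a linear model attractive on a small onboard processor.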

The system is evaluated in two stages. In the indoor environment, the learned controller achieves a 92 % obstacle‑avoidance success rate across ten test runs. Its trajectories are also 15 % smoother than those of a baseline behavior‑cloning controller. In the outdoor forest tests, the MAV holds a constant forward speed of up to 1.5 m/s and achieves an 85 % success rate in avoiding collisions with trees and branches over multiple 5‑meter courses. The controller copes with rapid illumination changes caused by sunlight filtering through leaves, and it reacts to dynamic obstacles such as swaying branches.

Despite these promising results, the authors acknowledge several limitations. Because depth is inferred indirectly, obstacles that appear suddenly at short range may not be detected early enough, which can lead to late evasive maneuvers. Color‑based features remain sensitive to extreme lighting conditions. The DAgger retraining loop is also computationally intensive and relies on offline data aggregation rather than true online learning. For future work, the paper suggests integrating a compact deep neural network to jointly learn feature extraction and control. It also proposes fusing additional ultra‑light sensors (e.g., tiny ultrasonic or infrared range finders) to provide complementary distance cues.
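The short‑range limitation can be quantified with a simple distance‑traveled bound. Suppose, purely for illustration, that the full perception‑to‑actuation delay totals $t_d$. This delay covers image capture, feature extraction, prediction, and the vehicle's response. At forward speed $v$, the MAV then covers the following distance before any evasive command takes effect:

$$
d = v\,t_d = 1.5\ \mathrm{m/s} \times 0.3\ \mathrm{s} = 0.45\ \mathrm{m}
$$

The value $t_d = 0.3$ s is an assumed example, not a latency reported for this system. The point is that some obstacles cannot be avoided by a purely reactive heading change: those first recognized closer than $d$ plus the lateral clearance needed to steer around them.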

In summary, the paper demonstrates that a micro‑UAV equipped only with a cheap monocular camera can learn a reactive navigation policy capable of flying autonomously through dense, unstructured forest environments at practical speeds. By leveraging imitation learning with dataset aggregation and carefully engineered visual cues, the authors bridge the gap between low‑cost sensing and high‑risk obstacle avoidance, opening pathways for applications such as disaster response, precision agriculture, and low‑cost delivery drones where payload constraints preclude heavier sensor suites.
