Brain-Computer Interface Technologies in the Coming Decades

As the proliferation of technology dramatically infiltrates all aspects of modern life, in many ways the world is becoming so dynamic and complex that technological capabilities are overwhelming human capabilities to optimally interact with and leverage those technologies. Fortunately, these technological advancements have also driven an explosion of neuroscience research over the past several decades, presenting engineers with a remarkable opportunity to design and develop flexible and adaptive brain-based neurotechnologies that integrate with and capitalize on human capabilities and limitations to improve human-system interactions. Major forerunners of this conception are brain-computer interfaces (BCIs), which to this point have been largely focused on improving the quality of life for particular clinical populations and include, for example, applications for advanced communications with paralyzed or locked-in patients as well as the direct control of prostheses and wheelchairs. Near-term applications are envisioned that are primarily task-oriented and are targeted to avoid the most difficult obstacles to development. In the farther term, a holistic approach to BCIs will enable a broad range of task-oriented and opportunistic applications by leveraging pervasive technologies and advanced analytical approaches to sense and merge critical brain, behavioral, task, and environmental information. Communications and other applications that are envisioned to be broadly impacted by BCIs are highlighted; however, these represent just a small sample of the potential of these technologies.

💡 Research Summary

The paper opens by observing that the rapid proliferation of digital technologies has created an environment of unprecedented complexity, outpacing the human brain’s capacity to process information, make decisions, and interact efficiently with machines. This mismatch, the authors argue, presents both a challenge and an opportunity: current systems can overwhelm users, yet the same technological momentum has driven a surge in neuroscience research, providing the data and methodological tools needed to build adaptive, brain‑based interfaces.

Brain‑computer interfaces (BCIs) are positioned as the most promising embodiment of this “human‑centric” approach. Historically, BCI development has been dominated by clinical applications—communication aids for locked‑in syndrome patients, direct control of prosthetic limbs, and wheelchair navigation for individuals with severe motor impairments. These early successes have demonstrated feasibility but have also highlighted the stringent safety, reliability, and regulatory requirements that confine BCI use to highly controlled, task‑specific scenarios.

The authors distinguish between near‑term, task‑oriented BCI deployments and a longer‑term, holistic vision. Near‑term efforts focus on three pragmatic levers: (1) optimizing signal acquisition by balancing non‑invasive high‑density EEG or optical sensors against minimally invasive flexible electrode arrays; (2) employing adaptive deep‑learning pipelines that can personalize feature extraction, compensate for intra‑subject variability, and filter environmental noise in real time; and (3) designing human‑machine interaction loops that minimize cognitive load, provide intuitive feedback, and quantify user satisfaction through psychometric and physiological metrics. By concentrating on these “low‑hanging fruit,” developers can sidestep the most formidable obstacles—long‑term stability, high‑bandwidth bidirectional communication, and seamless integration into everyday workflows.
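
As a rough illustration of the second lever, the sketch below shows one simple way to compensate for drift in a single user's features: a running z-score built from exponentially weighted estimates of mean and variance. It is a deliberately simple stand-in for the adaptive deep-learning pipelines described above, not the authors' method. The class name `AdaptiveNormalizer`, the adaptation rate `alpha`, and the choice of a scalar feature (for example, one band-power value) are all assumptions made for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class AdaptiveNormalizer:
    """Running z-score from exponentially weighted mean and variance.

    Hypothetical helper for illustration; not taken from the paper.
    """
    alpha: float = 0.05        # assumed adaptation rate, 0 < alpha < 1
    mean: float = 0.0
    var: float = 1.0
    seen_first: bool = False

    def update(self, x: float) -> float:
        """Fold one feature value into the statistics and return its z-score."""
        if not self.seen_first:
            # Seed the mean with the first sample and report a z-score of 0 for it.
            self.mean = x
            self.seen_first = True
            return 0.0
        delta = x - self.mean
        self.mean += self.alpha * delta
        # Standard incremental update for an exponentially weighted variance.
        self.var = (1.0 - self.alpha) * (self.var + self.alpha * delta * delta)
        # The z-score is measured against the freshly updated statistics.
        return (x - self.mean) / math.sqrt(self.var + 1e-12)
```

Fed a steady baseline followed by a sustained shift, the z-score spikes at the shift and then decays toward zero as the statistics adapt, which is the behavior one would want when a user's signal baseline drifts between sessions.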

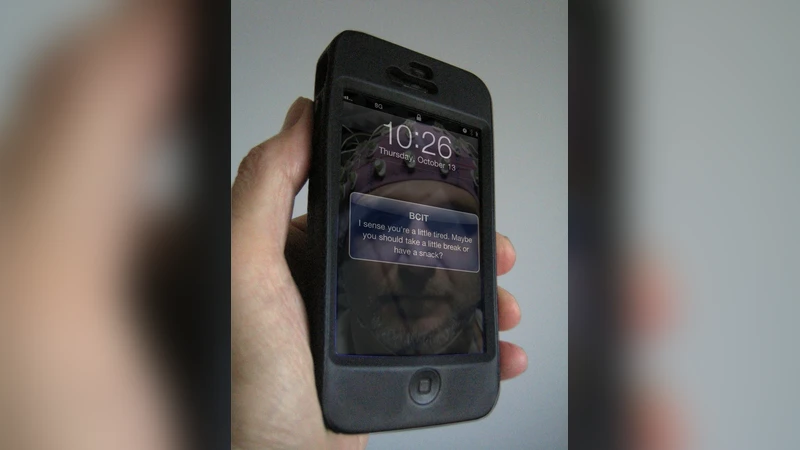

Beyond the immediate horizon, the paper proposes a “holistic BCI” paradigm that fuses neural signals with multimodal contextual data: behavioral logs, task parameters, and ambient information harvested from Internet‑of‑Things (IoT) devices, augmented‑reality (AR) headsets, and environmental sensors. This integrated platform would enable predictive, situation‑aware assistance, such as anticipating user intent, adjusting task difficulty, or delivering just‑in‑time information before the user explicitly requests it. In communications, for example, text or speech synthesized from decoded brain activity could complement or replace conventional input devices, offering a silent, hands‑free channel for both able‑bodied and disabled users. In AR/VR environments, a brain‑only control scheme could allow users to manipulate virtual objects, navigate menus, or trigger haptic feedback without any manual interaction, improving the ergonomics and accessibility of immersive experiences.
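
One way to picture the fusion step is as a probabilistic combination of evidence from separate sources. The sketch below multiplies a prior over candidate intents by per-source likelihoods and renormalizes, a naive-Bayes style fusion that assumes the sources are conditionally independent given the intent. The function name `fuse_intent`, the intent labels, and all numbers are hypothetical; the paper does not prescribe a particular fusion method.

```python
from typing import Dict, List


def fuse_intent(prior: Dict[str, float],
                likelihoods: List[Dict[str, float]],
                floor: float = 1e-9) -> Dict[str, float]:
    """Return a posterior over intents: prior times each source's likelihood."""
    scores = dict(prior)
    for source in likelihoods:
        for intent in scores:
            # A source that says nothing about an intent contributes a small
            # floor value instead of zeroing that intent out.
            scores[intent] *= source.get(intent, floor)
    total = sum(scores.values())
    if total <= 0.0:
        raise ValueError("all intents have zero probability")
    return {intent: score / total for intent, score in scores.items()}


# Illustrative values only: a neural decoder and an environmental context model.
prior = {"open_menu": 0.5, "dismiss": 0.5}
neural = {"open_menu": 0.6, "dismiss": 0.4}
context = {"open_menu": 0.8, "dismiss": 0.2}
posterior = fuse_intent(prior, [neural, context])
```

In this toy case the weak neural evidence and the stronger contextual evidence agree, so the fused estimate favors `open_menu` more strongly than either source does alone.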

The authors also explore broader societal implications. In education, real‑time monitoring of attention and mental workload could drive adaptive curricula that respond to each learner’s cognitive state. In industrial settings, BCI‑enhanced monitoring could detect fatigue or overload, prompting automated safety interventions or task reallocation to maintain productivity. Entertainment, military command, and collaborative robotics are identified as additional domains where brain‑centric interfaces could reshape interaction paradigms.

However, the paper does not overlook the substantial technical and ethical challenges that remain. Signal‑to‑noise ratios must improve to support reliable decoding over extended periods; long‑duration wearability demands materials that avoid skin irritation and maintain stable impedance. Massive multimodal data streams raise pressing privacy and security concerns, necessitating robust encryption, anonymization, and consent frameworks. Moreover, the authors stress the need for comprehensive legal and ethical guidelines governing the collection, storage, and use of neural data, arguing that interdisciplinary collaboration among neuroscientists, engineers, AI specialists, human‑factors experts, and policymakers is essential.
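
As one small piece of such a framework, subject identifiers can be replaced by keyed pseudonyms before recordings are stored. The sketch below uses HMAC-SHA256 from the Python standard library. The function name `pseudonymize`, the record fields, and the key handling are assumptions for illustration, and pseudonymizing an identifier does not by itself anonymize the neural data attached to it.

```python
import hashlib
import hmac


def pseudonymize(subject_id: str, secret_key: bytes) -> str:
    """Map a subject ID to a stable keyed pseudonym (HMAC-SHA256, hex-encoded)."""
    return hmac.new(secret_key, subject_id.encode("utf-8"), hashlib.sha256).hexdigest()


# Hypothetical record; in practice the key would come from a secrets store.
key = b"example-key-do-not-use"
record = {"subject": "patient-0042", "channel_count": 64}
stored = {**record, "subject": pseudonymize(record["subject"], key)}
```

The same identifier and key always yield the same pseudonym, so recordings from one subject can still be linked, while anyone without the key cannot recompute the mapping from a known identifier.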

In conclusion, the authors forecast that BCIs will transition from niche clinical tools to a foundational layer of future human‑machine ecosystems. As sensor technologies mature, analytical algorithms become more adaptive, and integration standards solidify, BCIs are poised to become the “brain‑centric cognitive infrastructure” that seamlessly merges neural, behavioral, and environmental information. This evolution promises to amplify human capabilities, reduce friction in everyday interactions, and ultimately reshape how society leverages technology—provided that the accompanying technical, ethical, and regulatory hurdles are addressed through coordinated, multidisciplinary effort.