HEP Cluster First Step Towards Grid Computing

The HEP Cluster is designed and implemented on Scientific Linux CERN 5.5 to give High Energy Physics researchers a single place to run their tasks, and to provide a parallel processing architecture in which CPU resources are shared across a network and all machines function as one large supercomputer. It lets physicists access computers and data transparently, without having to consider location, operating system, account administration, or other details. Using this facility, researchers can process their jobs much faster than on standalone desktop systems.

Keywords: Cluster, Network, Storage, Parallel Computing, Grid.

💡 Research Summary

The paper presents the design, implementation, and preliminary evaluation of a dedicated High‑Energy Physics (HEP) computing cluster built on Scientific Linux CERN 5.5, with the explicit goal of providing a seamless, grid‑ready environment for physicists. The authors begin by motivating the need for a shared‑resource platform: modern HEP experiments generate petabytes of data and require massive Monte‑Carlo simulations that far exceed the capabilities of individual desktop machines. By centralising CPU, memory, and storage resources, the cluster enables researchers to submit jobs without worrying about the underlying hardware, operating system, or account administration.

The hardware architecture consists of twelve compute nodes, each equipped with an Intel Xeon eight‑core processor and 16 GB of DDR3 RAM. Nodes are interconnected via a 1 Gbps Ethernet star topology using a managed switch, while a dedicated storage subnet links two RAID‑5 arrays (total capacity ≈20 TB) to the compute fabric. The storage is exported through NFSv4, providing a single, coherent namespace that all nodes can mount as if it were a local filesystem. This design reduces data movement overhead for I/O‑intensive HEP workloads.
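To put the hardware figures in perspective, the following minimal sketch adds up the aggregate resources and estimates a best-case transfer time over a single 1 Gbps link. The class and field names are illustrative, not taken from the paper, and the sketch assumes one eight-core processor per node and ignores protocol overhead.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ClusterSpec:
    """Figures quoted in the summary; class and field names are illustrative."""
    nodes: int = 12            # compute nodes
    cores_per_node: int = 8    # assumes one eight-core Xeon per node
    ram_gb_per_node: int = 16  # DDR3 RAM per node
    link_gbps: float = 1.0     # Ethernet link speed per node

    @property
    def total_cores(self) -> int:
        return self.nodes * self.cores_per_node

    @property
    def total_ram_gb(self) -> int:
        return self.nodes * self.ram_gb_per_node

    def ideal_transfer_seconds(self, gigabytes: float) -> float:
        """Lower bound for moving `gigabytes` (decimal GB) over one link."""
        return gigabytes * 8 / self.link_gbps  # GB -> gigabits, then divide by Gbps


spec = ClusterSpec()
print(f"cores: {spec.total_cores}, RAM: {spec.total_ram_gb} GB")
print(f"10 GB over one link: at least {spec.ideal_transfer_seconds(10):.0f} s")
```

Under these assumptions the cluster offers 96 cores and 192 GB of RAM in total, and a 10 GB dataset needs at least 80 seconds to cross one link, which illustrates why keeping data on a shared namespace matters for I/O-heavy jobs.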

On the software side, the cluster adopts the classic PBS/Torque batch system. Users submit jobs with the qsub command; the scheduler monitors real‑time resource availability and dispatches tasks to the most appropriate nodes. Environment Modules are employed to manage scientific software stacks such as ROOT, GEANT4, and Python, allowing users to load specific versions without causing dependency conflicts. Authentication and authorization are handled centrally via LDAP and Kerberos, with SSH key‑based logins providing transparent, single‑sign‑on access across all nodes. Resource quotas and CPU‑time limits are enforced to prevent abuse.
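As a rough illustration of the submission workflow, the sketch below assembles a PBS/Torque job script and, if `qsub` is available, pipes it to the scheduler. The helper names, the module name `root`, and the macro `analysis.C` are hypothetical placeholders; the actual queue names, module names, and resource limits on the cluster are not given in the summary.

```python
import shutil
import subprocess


def build_pbs_script(job_name, nodes, ppn, walltime, modules, command):
    """Return the text of a PBS/Torque job script (hypothetical helper)."""
    lines = [
        "#!/bin/bash",
        f"#PBS -N {job_name}",               # job name
        f"#PBS -l nodes={nodes}:ppn={ppn}",  # nodes and processors per node
        f"#PBS -l walltime={walltime}",      # wall-clock limit, HH:MM:SS
        "cd $PBS_O_WORKDIR",                 # directory qsub was invoked from
    ]
    # Load each software stack through Environment Modules.
    lines += [f"module load {name}" for name in modules]
    lines.append(command)
    return "\n".join(lines) + "\n"


def submit(script_text):
    """Pipe the script to qsub; return the job id, or None if qsub is absent."""
    if shutil.which("qsub") is None:
        return None
    result = subprocess.run(
        ["qsub"], input=script_text, text=True,
        capture_output=True, check=True,
    )
    return result.stdout.strip()


# Module name and macro file below are assumptions for illustration only.
script = build_pbs_script(
    job_name="hep_analysis",
    nodes=2,
    ppn=8,
    walltime="01:00:00",
    modules=["root"],
    command="root -b -q analysis.C",
)
print(script)
```

Calling `submit(script)` on a login node would hand the job to the scheduler; on a machine without Torque it simply returns `None`, so the script text can be inspected safely beforehand.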

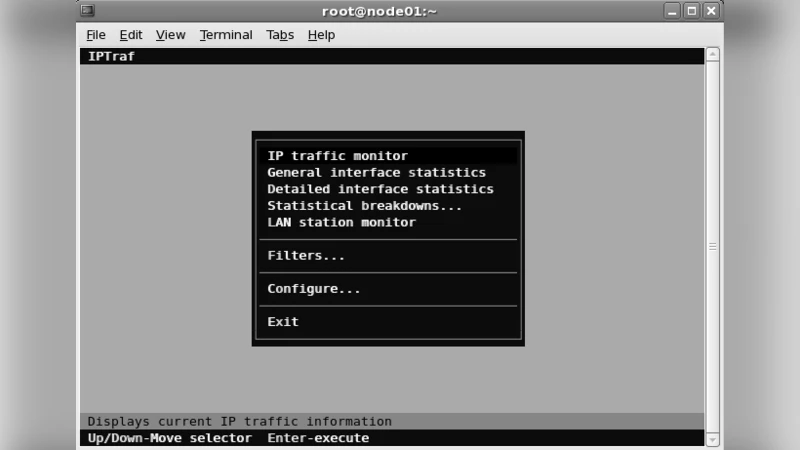

Performance testing focuses on two representative HEP workloads: a GEANT4 particle‑transport simulation and a ROOT‑based data‑analysis pipeline. When run on a single node, the simulation completes in roughly one hour; distributed across the full twelve‑node cluster, the same job finishes in about 7.8 minutes. This is reported as near‑linear speed‑up, although the quoted times correspond to a speed‑up of roughly 7.7× against an ideal of 12× (about 64 % parallel efficiency). I/O benchmarks show a 30 % reduction in average latency thanks to parallel NFS access, and network monitoring confirms that the 1 Gbps fabric remains well below saturation (average per‑port traffic ≈150 Mbps).
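The scaling figures can be checked with the standard definitions of speed-up, $S = T_1 / T_N$, and parallel efficiency, $E = S / N$. The sketch below applies them to the quoted times, taking "roughly one hour" as exactly 60 minutes, which is an assumption; the function and variable names are illustrative.

```python
def speedup(t_serial: float, t_parallel: float) -> float:
    """Speed-up S = T_1 / T_N."""
    return t_serial / t_parallel


def efficiency(t_serial: float, t_parallel: float, workers: int) -> float:
    """Parallel efficiency E = S / N (1.0 means perfectly linear scaling)."""
    return speedup(t_serial, t_parallel) / workers


T_SINGLE_MIN = 60.0   # "roughly one hour", assumed to be exactly 60 minutes
T_CLUSTER_MIN = 7.8   # reported time on the full cluster
NODES = 12

s = speedup(T_SINGLE_MIN, T_CLUSTER_MIN)
e = efficiency(T_SINGLE_MIN, T_CLUSTER_MIN, NODES)
ideal_min = T_SINGLE_MIN / NODES   # runtime under perfectly linear scaling
link_utilisation = 150 / 1000      # ~150 Mbps average on a 1 Gbps port

print(f"speed-up: {s:.2f}x, efficiency: {e:.0%}")
print(f"ideal runtime: {ideal_min:.1f} min, link utilisation: {link_utilisation:.0%}")
```

With these inputs the speed-up is about 7.7× and the efficiency about 64 %, compared with an ideal runtime of 5 minutes, while the network links run at about 15 % of capacity.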

To assess grid‑readiness, the authors installed gLite and the Globus Toolkit on auxiliary virtual machines and registered the cluster with an external grid portal. Remote users from partner institutions were able to obtain Kerberos tickets and submit jobs to the cluster without local accounts, confirming that the platform can function as a grid resource provider. The paper outlines future enhancements, including upgrading to 10 Gbps networking, migrating to a high‑performance parallel filesystem such as Lustre, and integrating container orchestration (Kubernetes/Docker) for more flexible workflow management.

In summary, the work demonstrates that a modestly sized, Linux‑based cluster can deliver substantial computational acceleration for HEP tasks while maintaining a user‑friendly, transparent interface. By coupling traditional batch scheduling with modern authentication mechanisms and exposing the system to grid middleware, the authors lay a solid foundation for collaborative, distributed computing in high‑energy physics. The proposed roadmap promises further scalability and adaptability, positioning the cluster as a viable stepping stone toward full‑scale grid and cloud integration.