Local Structure Discovery in Bayesian Networks

Learning a Bayesian network structure from data is an NP-hard problem and thus exact algorithms are feasible only for small data sets. Therefore, network structures for larger networks are usually learned with various heuristics. Another approach to scaling up the structure learning is local learning. In local learning, the modeler has one or more target variables that are of special interest; he wants to learn the structure near the target variables and is not interested in the rest of the variables. In this paper, we present a score-based local learning algorithm called SLL. We conjecture that our algorithm is theoretically sound in the sense that it is optimal in the limit of large sample size. Empirical results suggest that SLL is competitive when compared to the constraint-based HITON algorithm. We also study the prospects of constructing the network structure for the whole node set based on local results by presenting two algorithms and comparing them to several heuristics.

Research Summary

Bayesian networks (BNs) are a popular framework for representing probabilistic dependencies among variables, but learning their structure from data is NP‑hard, making exact global search feasible only for very small problems. Consequently, most practical approaches rely on heuristics or on a “local learning” paradigm, where the analyst is interested in the neighbourhood of one or a few target variables and does not care about the rest of the graph. This paper introduces a new score‑based local learning algorithm called SLL (Score‑based Local Learning) and investigates its theoretical properties, empirical performance, and its usefulness as a building block for constructing a full‑scale network from local pieces.

Algorithmic idea.

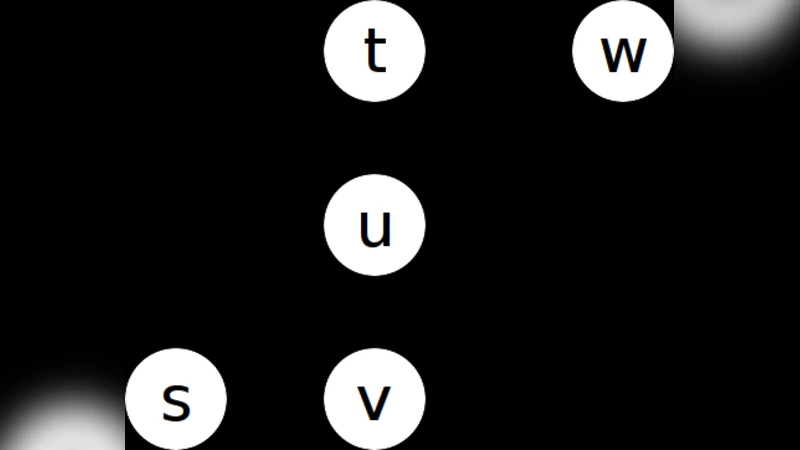

Given a target variable T, SLL first builds a candidate set C of variables that are likely to be directly connected to T. Unlike constraint‑based methods such as HITON, which rely on conditional independence tests, SLL uses a lightweight pre‑screening based on mutual information or simple correlation to select the top k variables. The candidate set is then refined in a score‑driven phase: every admissible parent‑child configuration between T and the members of C is evaluated using a decomposable scoring function (typically BIC or MDL), and the highest‑scoring configuration is retained as the local structure around T. Finally, a post‑processing step (cross‑validation, bootstrapping, or stability selection) assesses the reliability of the discovered edges and may add back variables that were omitted in the initial screening.
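The two phases described above can be illustrated with a minimal sketch. It is not the authors' implementation: it assumes discrete data stored as a dictionary of columns, uses empirical mutual information for the pre-screening, and simplifies the score-driven phase to an exhaustive search over parent sets of the target only (children are ignored). All function names and the `max_parents` cap are hypothetical choices made for illustration.

```python
import math
from collections import Counter
from itertools import combinations


def mutual_information(xs, ys):
    """Empirical mutual information (in nats) between two discrete sequences."""
    n = len(xs)
    joint = Counter(zip(xs, ys))
    px, py = Counter(xs), Counter(ys)
    return sum((c / n) * math.log(c * n / (px[x] * py[y]))
               for (x, y), c in joint.items())


def top_k_candidates(data, target, k):
    """Pre-screening: keep the k variables with the highest MI with the target."""
    others = [v for v in data if v != target]
    others.sort(key=lambda v: mutual_information(data[v], data[target]),
                reverse=True)
    return others[:k]


def bic_local(data, child, parents):
    """Local BIC term of `child` given `parents` (higher is better)."""
    n = len(data[child])
    # One tuple of parent values per sample; an empty tuple if there are no parents.
    parent_rows = list(zip(*(data[p] for p in parents))) if parents else [()] * n
    joint = Counter(zip(parent_rows, data[child]))
    marginal = Counter(parent_rows)
    loglik = sum(c * math.log(c / marginal[pa]) for (pa, _), c in joint.items())
    r = len(set(data[child]))            # observed states of the child
    q = 1                                # number of parent configurations
    for p in parents:
        q *= len(set(data[p]))
    return loglik - 0.5 * math.log(n) * q * (r - 1)


def best_parent_set(data, target, candidates, max_parents=2):
    """Exhaustively score every parent set of size <= max_parents (assumed cap)."""
    best = ((), bic_local(data, target, ()))
    for size in range(1, max_parents + 1):
        for parents in combinations(candidates, size):
            score = bic_local(data, target, parents)
            if score > best[1]:
                best = (parents, score)
    return best
```

A typical call would be `cands = top_k_candidates(data, "T", k)` followed by `best_parent_set(data, "T", cands)`. The number of subsets scored grows combinatorially with the candidate set, which is why the sketch caps the parent-set size.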

Theoretical claims.

The authors conjecture (Conjecture 1) that SLL is asymptotically consistent: if the candidate set eventually contains all true neighbours of T and the sample size tends to infinity, the score‑based search will recover the globally optimal parent‑child set for T. In other words, under the usual regularity conditions for BIC/MDL, the algorithm is optimal in the large‑sample limit. This statement hinges on the candidate set being “complete”; in practice the set is truncated for computational reasons, so the conjecture serves as a guiding principle rather than a strict guarantee.
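For reference, one common form of the BIC score for a network G over n discrete variables is written out below. The summary does not give the formula, so the notation is an assumption introduced here: N is the sample size, r_i the number of states of variable X_i, q_i the number of joint configurations of its parents in G, N_ijk the number of samples in which X_i takes its k-th state while its parents take their j-th configuration, and N_ij the sum of N_ijk over k.

```latex
\mathrm{BIC}(G \mid D) \;=\; \sum_{i=1}^{n} \left[ \sum_{j=1}^{q_i} \sum_{k=1}^{r_i} N_{ijk} \log \frac{N_{ijk}}{N_{ij}} \;-\; \frac{\log N}{2}\, q_i \,(r_i - 1) \right]
```

Because the score is a sum of per-variable terms, the term belonging to the target can be evaluated without scoring the rest of the graph, which is what makes a local search possible. The log-likelihood part grows linearly in N while the penalty grows only as log N, which is the usual intuition behind large-sample consistency of BIC-type scores.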

Empirical evaluation.

The authors benchmark SLL against the constraint‑based HITON algorithm on several standard BN benchmarks (Alarm, Insurance, Barley, Child) and on synthetic data with varying numbers of nodes (up to 200) and average degree. Evaluation metrics include precision, recall, F‑score, runtime, and sample efficiency. Results show that:

- SLL consistently achieves higher recall than HITON, indicating that it captures more true neighbours of the target.

- Precision is comparable, sometimes slightly lower for SLL because a broader candidate set introduces a few false positives.

- Overall F‑score is on par with HITON, with a modest advantage for SLL on larger networks.

- Runtime is similar or marginally better, thanks to an efficient branch‑and‑bound implementation in the score‑based search.
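The accuracy metrics listed above can be computed per target by comparing the learned neighbour set with the true one. The following is a small sketch; the function name and the convention of returning 0.0 when a denominator is empty are assumptions, not details taken from the paper.

```python
def neighbour_metrics(true_neighbours, learned_neighbours):
    """Precision, recall and F-score of a learned neighbour set for one target."""
    true_set, learned_set = set(true_neighbours), set(learned_neighbours)
    true_positives = len(true_set & learned_set)
    # Convention (assumed): an empty denominator yields 0.0 rather than an error.
    precision = true_positives / len(learned_set) if learned_set else 0.0
    recall = true_positives / len(true_set) if true_set else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f_score = 2 * precision * recall / (precision + recall)
    return precision, recall, f_score
```

For example, with true neighbours {A, B, C, D} and learned neighbours {A, B, E}, two of the three learned neighbours are correct and two of the four true neighbours are found, so precision is 2/3, recall is 1/2, and the F-score is 4/7.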

From local to global.

To explore whether a full network can be assembled from locally learned neighbourhoods, the paper proposes two integration schemes:

- Union‑Merge – simply take the union of all local edge sets and delete edges that create cycles, preferring deletions that cause the smallest drop in the global score.

- Iterative‑Refine – start from the union, then repeatedly re‑optimize the global score while allowing local structures to adjust and resolve conflicts.

Both schemes are compared with well‑known global heuristics such as PC‑stable, Greedy‑Equivalence‑Search (GES), and MMHC. The iterative refinement method, in particular, yields a network whose global score and structural accuracy are competitive with these baselines, while still benefitting from the parallelism inherent in learning many local neighbourhoods independently.
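A rough sketch of the Union‑Merge idea follows. It assumes that each local result is a set of directed `(parent, child)` edges and that the caller supplies a hypothetical `score_drop(edge)` function estimating how much the global score falls when that edge is deleted. In a real implementation this drop would depend on the current graph; treating it as a fixed per-edge value is a simplification made here.

```python
def find_cycle(edges):
    """Return the edges of one directed cycle, or None if the graph is acyclic."""
    children = {}
    for parent, child in edges:
        children.setdefault(parent, []).append(child)
    state = {}  # node -> "active" (on the DFS stack) or "done"

    def dfs(node, path):
        state[node] = "active"
        path.append(node)
        for nxt in children.get(node, []):
            if state.get(nxt) == "active":
                # Back edge found: the cycle runs from nxt along the path to nxt.
                cycle_nodes = path[path.index(nxt):] + [nxt]
                return list(zip(cycle_nodes, cycle_nodes[1:]))
            if nxt not in state:
                found = dfs(nxt, path)
                if found:
                    return found
        path.pop()
        state[node] = "done"
        return None

    for start in list(children):
        if start not in state:
            found = dfs(start, [])
            if found:
                return found
    return None


def union_merge(local_edge_sets, score_drop):
    """Union all local edges, then break cycles by deleting the cheapest edge."""
    edges = set().union(*local_edge_sets)
    while True:
        cycle = find_cycle(edges)
        if cycle is None:
            return edges
        # Remove the cycle edge whose deletion is estimated to cost the least.
        edges.remove(min(cycle, key=score_drop))
```

Each pass removes one edge from a detected cycle, so the loop terminates after at most as many iterations as there are edges. The recursive search is adequate for small graphs; a larger network would call for an iterative traversal.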

Limitations and future work.

The paper acknowledges several open issues:

- The performance of SLL depends heavily on the choice of the hyper‑parameter k that determines the size of the initial candidate set. No systematic method for selecting k is provided.

- The score‑based refinement step remains exponential in the size of the candidate set, so for very high‑dimensional problems (thousands of variables) the method still faces computational bottlenecks.

- The asymptotic optimality claim is not proved; empirical evidence supports it, but a formal consistency proof is left for future research.

Potential extensions include adaptive candidate selection (e.g., expanding the set when the local score plateaus), incorporating Bayesian model averaging to account for score uncertainty, and exploiting distributed computing frameworks to parallelize the candidate‑set evaluation across many cores or nodes.

Conclusion.

SLL offers a principled, score‑based alternative to constraint‑based local learning. It achieves competitive accuracy and speed relative to HITON, and its locally learned structures can be combined into a coherent global network with performance comparable to leading global heuristics. While the method still requires careful tuning of the candidate‑set size and faces scalability challenges for extremely large domains, its theoretical appeal and empirical robustness make it a valuable addition to the toolbox of researchers working on causal discovery, especially in settings where a subset of variables is of primary interest (e.g., biomarker discovery, targeted medical decision support, or focused policy analysis).