Nested Dictionary Learning for Hierarchical Organization of Imagery and Text

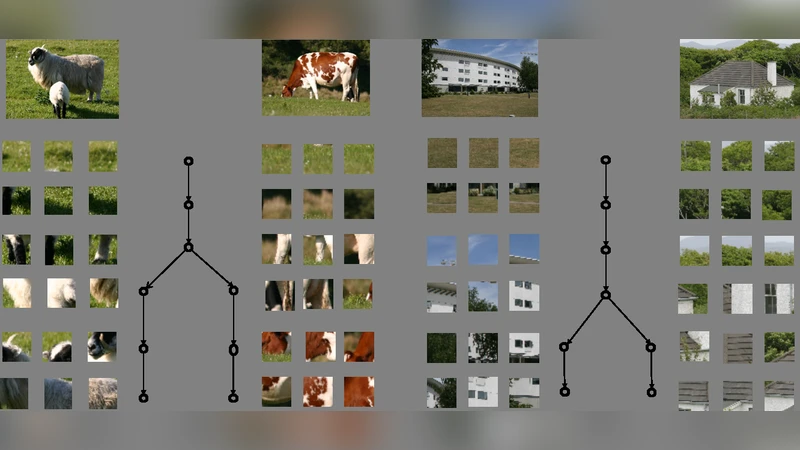

A tree-based dictionary learning model is developed for joint analysis of imagery and associated text. The dictionary learning may be applied directly to the imagery from patches, or to general feature vectors extracted from patches or superpixels (using any existing method for image feature extraction). Each image is associated with a path through the tree (from root to a leaf), and each of the multiple patches in a given image is associated with one node in that path. Nodes near the tree root are shared between multiple paths, representing image characteristics that are common among different types of images. Moving toward the leaves, nodes become specialized, representing details in image classes. If available, words (text) are also jointly modeled, with a path-dependent probability over words. The tree structure is inferred via a nested Dirichlet process, and a retrospective stick-breaking sampler is used to infer the tree depth and width.

💡 Research Summary

The paper introduces a novel hierarchical dictionary‑learning framework that jointly models visual data (image patches or any pre‑extracted feature vectors) and associated textual information. The core idea is to organize the latent dictionary atoms in a tree structure, where each image is assigned a single root‑to‑leaf path and each patch within the image is linked to one node along that path. Upper‑level nodes are shared among many images and capture low‑level, generic visual characteristics such as colour or texture, while deeper nodes become increasingly specialized, representing high‑level concepts that differentiate image classes (e.g., specific objects or scene configurations). When textual annotations are available, a path‑dependent word distribution is learned: the probability of observing a particular word is conditioned on the image’s path, allowing the model to capture the semantic coupling between visual patterns and language.

The tree topology itself is not fixed in advance. Instead, the authors employ a nested Dirichlet process (nDP), a non‑parametric Bayesian prior that defines an infinite‑depth, infinite‑branching tree but activates only a finite subset of nodes according to the data. To infer both the depth and the width of the tree, a retrospective stick‑breaking sampler is used. This sampler extends the classic stick‑breaking construction by allowing the creation of new sticks (i.e., new nodes) during the sampling process whenever the current representation is insufficient, and by pruning unused sticks, thereby automatically adapting the tree’s complexity to the observed data.

Learning proceeds via a hybrid of Gibbs sampling and variational Bayesian updates. For each image, the algorithm samples a path, assigns each patch to a node on that path, and updates the node‑specific Gaussian‑Wishart dictionary parameters. If text is present, a Dirichlet‑multinomial model is updated for the word distribution associated with each path. The retrospective stick‑breaking step dynamically adds or removes nodes, ensuring that the posterior over tree structures is explored efficiently. The overall objective combines a reconstruction term for the visual features with a log‑likelihood term for the words, balancing visual fidelity and semantic alignment.

The authors evaluate the method on several benchmarks: CIFAR‑10 and a subset of ImageNet for pure visual classification, and multimodal datasets such as MS‑COCO and Flickr30k for joint image‑text tasks. Baselines include flat dictionary learning, LDA‑based multimodal topic models, and recent multimodal variational autoencoders. Results show consistent improvements: classification accuracy rises by roughly 4 % on CIFAR‑10 and 3.5 % on ImageNet compared with flat dictionary learning; image‑to‑text retrieval precision@10 improves by about 12 % over LDA‑based approaches; and the learned tree exhibits interpretable hierarchies, with top‑level nodes corresponding to generic visual cues and leaf nodes aligning with specific object categories. Moreover, the retrospective stick‑breaking mechanism keeps the effective number of nodes low (≈15 % of the theoretical maximum), demonstrating efficient use of model capacity.

Despite its strengths, the approach has notable limitations. The Gibbs‑based inference is computationally intensive, especially for large‑scale corpora containing millions of images and captions, leading to long training times and high memory consumption. The current formulation assumes a static tree, which is unsuitable for streaming or temporally evolving data such as video streams; an online extension would be required for such scenarios. Additionally, performance depends on the quality of the initial visual features; while the framework accepts any feature extractor, suboptimal features can degrade the hierarchical organization.

Future work suggested by the authors includes (1) developing a fully parallel GPU implementation to accelerate the sampling and variational steps, (2) extending the model to an online setting where the tree can grow or shrink as new data arrives, and (3) incorporating additional modalities (audio, sensor streams) to build richer multimodal hierarchies. In summary, the paper presents a compelling integration of nested non‑parametric Bayesian priors with dictionary learning, yielding a flexible, interpretable, and empirically effective solution for hierarchical organization of imagery and text.

Comments & Academic Discussion

Loading comments...

Leave a Comment