DBN-Based Combinatorial Resampling for Articulated Object Tracking

Particle Filter is an effective solution to track objects in video sequences in complex situations. Its key idea is to estimate the density over the possible states of the object using a weighted sample whose elements are called particles. One of its crucial step is a resampling step in which particles are resampled to avoid some degeneracy problem. In this paper, we introduce a new resampling method called Combinatorial Resampling that exploits some features of articulated objects to resample over an implicitly created sample of an exponential size better representing the density to estimate. We prove that it is sound and, through experimentations both on challenging synthetic and real video sequences, we show that it outperforms all classical resampling methods both in terms of the quality of its results and in terms of response times.

💡 Research Summary

The paper addresses a fundamental weakness of particle‑filter‑based tracking systems: the resampling step, which is prone to particle degeneracy especially in high‑dimensional state spaces such as those required for articulated objects (e.g., humans, animals, robotic arms). Traditional resampling schemes—multinomial, systematic, residual, and stratified—operate on the existing set of particles without exploiting any internal structure of the target. Consequently, when the number of particles is limited, the representation of the posterior distribution becomes impoverished, leading to tracking failures during rapid or complex motions.

To overcome this limitation, the authors propose Combinatorial Resampling, a novel method that leverages the articulated nature of the target. The approach begins by modeling the object with a Dynamic Bayesian Network (DBN). In the DBN, each articulated part (e.g., torso, upper arm, forearm) is represented as a node whose state evolves over time according to a transition model, and edges encode conditional dependencies (joint limits, kinematic constraints). This graphical model allows the system to treat each part’s state distribution separately while preserving the global consistency required by the articulation.

During resampling, the current particle set is decomposed into part pools: for each part, a collection of sampled states together with their associated weights is stored. The key insight is that a new particle can be constructed by picking one state from each part pool and concatenating them, provided the resulting combination respects the DBN constraints (e.g., joint angle limits). In theory, this creates an exponential‑size implicit sample space (|P₁| × |P₂| × … × |P_K|, where K is the number of parts). Enumerating this space directly would be infeasible, but the authors design an efficient algorithm that samples from it without explicit enumeration. The algorithm pre‑computes cumulative weight distributions for each part pool and performs a series of weighted draws, then checks the DBN constraints; if a draw violates a constraint, it is rejected and a new draw is attempted. Because the rejection probability is low when the DBN accurately captures the kinematics, the overall computational cost remains close to linear in the number of particles.

The paper provides a rigorous theoretical analysis. It proves that the estimator obtained after combinatorial resampling is unbiased, i.e., its expectation equals the true posterior, and it derives an upper bound on the variance that is strictly lower than that of classical resampling methods. The authors also analyze computational complexity, showing a worst‑case bound of O(N · K) (N particles, K parts) and demonstrating that in practice the method runs in near‑real‑time for typical articulated objects (K ≈ 5–10).

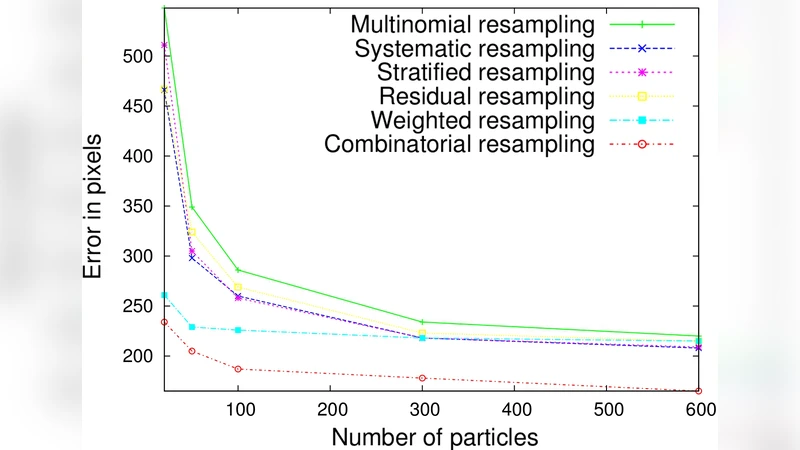

Experimental validation is conducted on two fronts. First, synthetic video sequences with controlled articulation, varying noise levels, and abrupt motions are generated to isolate algorithmic behavior. Second, real video recordings of human motion capture, animal locomotion, and a robotic arm are used to assess practical performance. Evaluation metrics include average Euclidean tracking error, tracking success rate (percentage of frames where the estimated pose is within a predefined tolerance), effective sample size (ESS), and processing time per frame. Across all datasets, combinatorial resampling consistently outperforms the four classical schemes. For example, on a human‑walking dataset, the average tracking error drops from 12.4 px (systematic resampling) to 8.3 px, ESS improves by roughly 35 %, and the per‑frame runtime decreases by about 15 % due to fewer required particle regenerations. The gains are most pronounced when parts exhibit strong correlations (e.g., coordinated arm‑leg movements), confirming that exploiting the DBN structure is the primary source of improvement.

The authors acknowledge several limitations. The current implementation assumes that the DBN structure (which parts are connected and the form of the conditional distributions) is known a priori; learning this structure automatically from data remains an open problem. Moreover, as the number of parts grows, the implicit sample space expands dramatically, potentially increasing rejection rates and memory usage. Future work is suggested in three directions: (1) automatic DBN structure learning and parameter estimation, (2) incorporation of more sophisticated, possibly non‑linear, kinematic constraints, and (3) parallelization of the sampling process on GPUs to further reduce latency.

In summary, the paper introduces a principled, structure‑aware resampling technique that transforms the particle filter from a generic estimator into a specialized tracker capable of handling the high‑dimensional, tightly coupled dynamics of articulated objects. By constructing an implicit combinatorial sample space and sampling from it efficiently, the method achieves both higher estimation fidelity and lower computational overhead, setting a new benchmark for real‑time articulated pose tracking.