Conducting Verification And Validation Of Multi-Agent Systems

Verification and Validation (V&V) is a series of technical and managerial activities performed by a system tester, not the system developer, to improve system quality and reliability and to assure that the product satisfies the users' operational needs. Verification is the assurance that the products of a particular development phase are consistent with the requirements of that phase and the preceding phase(s), while validation is the assurance that the final product meets the system requirements. V&V can be performed by an outside agency, in which case it is called Independent V&V (IV&V), or by a group within the organization other than the developer, referred to as Internal V&V. V&V often accompanies testing, can improve quality assurance, and can reduce risk. This paper puts forward guidelines for performing V&V of Multi-Agent Systems (MAS).

💡 Research Summary

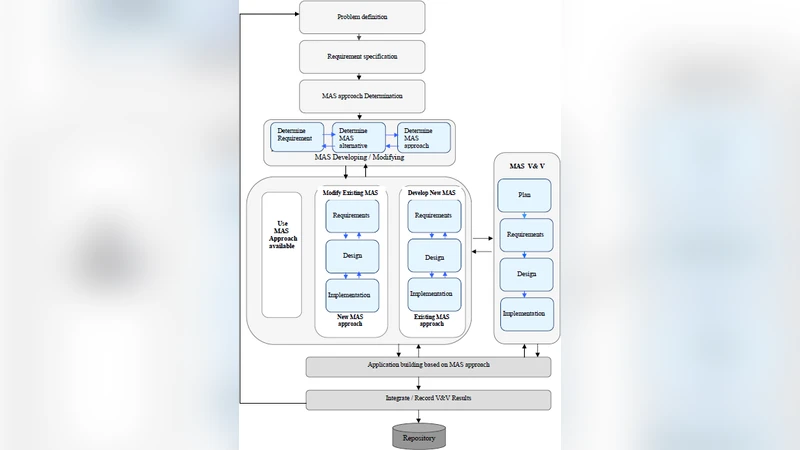

The paper presents a comprehensive methodology for conducting Verification and Validation (V&V) of Multi‑Agent Systems (MAS), aiming to improve system quality, reliability, and compliance with operational needs. It begins by distinguishing verification—the assurance that each development artifact (requirements, design, code) conforms to its predecessor—from validation—the assurance that the final system satisfies the intended user and system requirements. Recognizing that MAS differ fundamentally from monolithic software due to distributed autonomy, dynamic interaction, and collaborative behavior, the authors argue that traditional V&V practices must be adapted.

The core contribution is a structured framework that integrates both technical and managerial aspects of V&V for MAS. First, the paper redefines verification activities to include formal model checking, static code analysis, and unit testing of individual agent decision logic. Each agent’s behavior is modeled as a state machine or behavior tree, enabling automated consistency checks against design specifications. Second, validation is addressed through scenario‑based simulation and field testing that replicate real‑world operating conditions. The authors emphasize parameterizing simulation environments—varying agent counts, network latency, failure modes—to stress‑test the system and collect quantitative metrics (success rate, response time, resource utilization) as well as qualitative assessments of collaboration efficiency and decision appropriateness.
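As an illustration of the automated consistency checks described above, the following minimal sketch treats one agent's behavior as a state machine and looks for three common modeling defects: states that can never be reached, reachable states with no way out (potential deadlocks), and transitions that point to undeclared states. The state names, events, and the `check_model` helper are hypothetical and are not taken from the paper; a real project would more likely use a dedicated model checker.

```python
from collections import deque

# Hypothetical agent model (not from the paper): state -> {event: next_state}
TRANSITIONS = {
    "idle": {"task_received": "planning"},
    "planning": {"plan_ready": "executing", "plan_failed": "idle"},
    "executing": {"done": "idle", "error": "recovering"},
    "recovering": {},                        # no outgoing transitions
    "negotiating": {"agreed": "executing"},  # never entered from another state
}


def reachable_states(transitions, initial):
    """Breadth-first search over the transition graph from the initial state."""
    seen = {initial}
    queue = deque([initial])
    while queue:
        state = queue.popleft()
        for nxt in transitions.get(state, {}).values():
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def check_model(transitions, initial, terminal=frozenset()):
    """Report unreachable states, deadlock candidates, and undeclared targets."""
    reachable = reachable_states(transitions, initial)
    targets = {nxt for edges in transitions.values() for nxt in edges.values()}
    return {
        "unreachable": sorted(set(transitions) - reachable),
        "deadlocks": sorted(
            s for s in reachable if not transitions.get(s) and s not in terminal
        ),
        "undefined": sorted(targets - set(transitions)),
    }


report = check_model(TRANSITIONS, "idle")
print(report)
```

In this toy model the check flags `negotiating` as unreachable and `recovering` as a deadlock candidate. Passing `terminal={"recovering"}` would mark that state as an intended end state and suppress the deadlock finding.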

A significant portion of the work delineates two organizational models for V&V: Independent V&V (IV&V) performed by an external agency, and Internal V&V carried out by an independent team within the same organization but separate from developers. Both models employ risk‑based test prioritization, focusing early effort on high‑impact agent interactions that could jeopardize system safety or performance. The paper outlines how IV&V provides objectivity and satisfies regulatory certification requirements, while Internal V&V offers rapid feedback aligned with development schedules.

The proposed V&V process comprises six sequential steps: (1) requirements verification using a traceability matrix linking each requirement to test cases and acceptance criteria; (2) design verification through formal analysis of agent interaction protocols and system architecture; (3) implementation verification employing static analysis tools and automated unit tests; (4) integration verification that executes scenario‑driven tests of inter‑agent communication and protocol compliance in both simulated and real network environments; (5) system validation via large‑scale simulations and field trials that assess functional and non‑functional properties such as real‑time performance, scalability, and fault tolerance; and (6) comprehensive documentation and traceability, ensuring that every artifact—from requirement to test result—is linked and auditable. The authors recommend using V&V management tools (e.g., DOORS, JIRA) and automated reporting scripts to maintain this traceability.
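The traceability matrix in step (1) and the audit requirement in step (6) can be sketched as a small data structure plus a gap report. This is only an assumed representation: the requirement IDs, test IDs, result values, and the `trace_report` function are invented for illustration and do not reflect the data model of DOORS, JIRA, or the paper itself.

```python
from dataclasses import dataclass, field


@dataclass
class Requirement:
    req_id: str
    text: str
    test_ids: list = field(default_factory=list)


# Hypothetical requirements and test results, for illustration only.
REQUIREMENTS = [
    Requirement("R1", "Agents acknowledge every task message", ["TC-01"]),
    Requirement("R2", "System tolerates loss of one agent", ["TC-02", "TC-03"]),
    Requirement("R3", "Response time stays within the agreed bound"),
]
TEST_RESULTS = {"TC-01": "pass", "TC-02": "fail", "TC-04": "pass"}


def trace_report(requirements, results):
    """Find gaps in the requirement-to-test links and in test execution."""
    linked = {t for r in requirements for t in r.test_ids}
    return {
        # Requirements with no test case at all
        "uncovered": [r.req_id for r in requirements if not r.test_ids],
        # Executed tests that trace back to no requirement
        "orphan_tests": sorted(set(results) - linked),
        # Linked tests with no recorded result
        "not_run": sorted(linked - set(results)),
        # Requirements with at least one failing test
        "failing": [
            r.req_id
            for r in requirements
            if any(results.get(t) == "fail" for t in r.test_ids)
        ],
    }


report = trace_report(REQUIREMENTS, TEST_RESULTS)
for issue, items in report.items():
    print(f"{issue}: {items}")
```

A report like this could run after every test cycle, so that an unlinked requirement or an untraceable test surfaces before an audit rather than during one.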

Risk management is woven throughout the framework. The authors propose a risk identification phase that catalogs potential hazards such as agent deadlock, message loss, or cooperative failure. For each hazard, mitigation strategies (timeouts, retry mechanisms, fallback agents) are defined, and corresponding test cases are prioritized. The V&V outcomes feed into a continuous quality‑improvement loop: verification defects trigger redesign, while validation defects may lead to requirement refinement or system re‑architecture.
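One simple way to turn the hazard catalog into a test order is to score each hazard as likelihood times impact and test the highest scores first. The sketch below uses the hazards and mitigations named in the summary, but the pairing of each hazard with a mitigation, the 1 to 5 scales, and the numeric scores are assumptions made for illustration; the paper's actual scoring scheme is not described here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hazard:
    name: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (frequent)
    impact: int      # assumed scale: 1 (minor) to 5 (critical)
    mitigation: str

    @property
    def score(self) -> int:
        """Risk score as likelihood times impact."""
        return self.likelihood * self.impact


# Scores and hazard-to-mitigation pairings are illustrative assumptions.
HAZARDS = [
    Hazard("agent deadlock", likelihood=2, impact=5, mitigation="timeouts"),
    Hazard("message loss", likelihood=4, impact=3, mitigation="retry mechanisms"),
    Hazard("cooperative failure", likelihood=3, impact=5, mitigation="fallback agents"),
]

# Highest-risk hazards come first, so their test cases run earliest.
ranked = sorted(HAZARDS, key=lambda h: h.score, reverse=True)

for h in ranked:
    print(f"{h.score:>2}  {h.name:<20} mitigation under test: {h.mitigation}")
```

With these assumed scores, cooperative failure (15) is tested before message loss (12) and agent deadlock (10). Changing the likelihood or impact values reorders the test queue without touching the test cases themselves.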

To demonstrate applicability, the paper presents case studies in smart traffic management and collaborative robotics manufacturing. In both domains, applying the proposed guidelines resulted in earlier defect detection, reduced integration‑phase rework, and measurable improvements in system reliability. Moreover, the framework satisfies stringent certification demands in regulated sectors such as defense, aerospace, and healthcare, where independent V&V is often mandatory.

In conclusion, the authors argue that a MAS‑specific V&V methodology—combining formal verification of autonomous agents, scenario‑driven validation, risk‑based test prioritization, and rigorous documentation—substantially lowers development risk, enhances system trustworthiness, and facilitates regulatory compliance. The paper serves as a practical guide for engineers, project managers, and quality assurance professionals tasked with delivering robust multi‑agent solutions.
