Inferring the Underlying Structure of Information Cascades

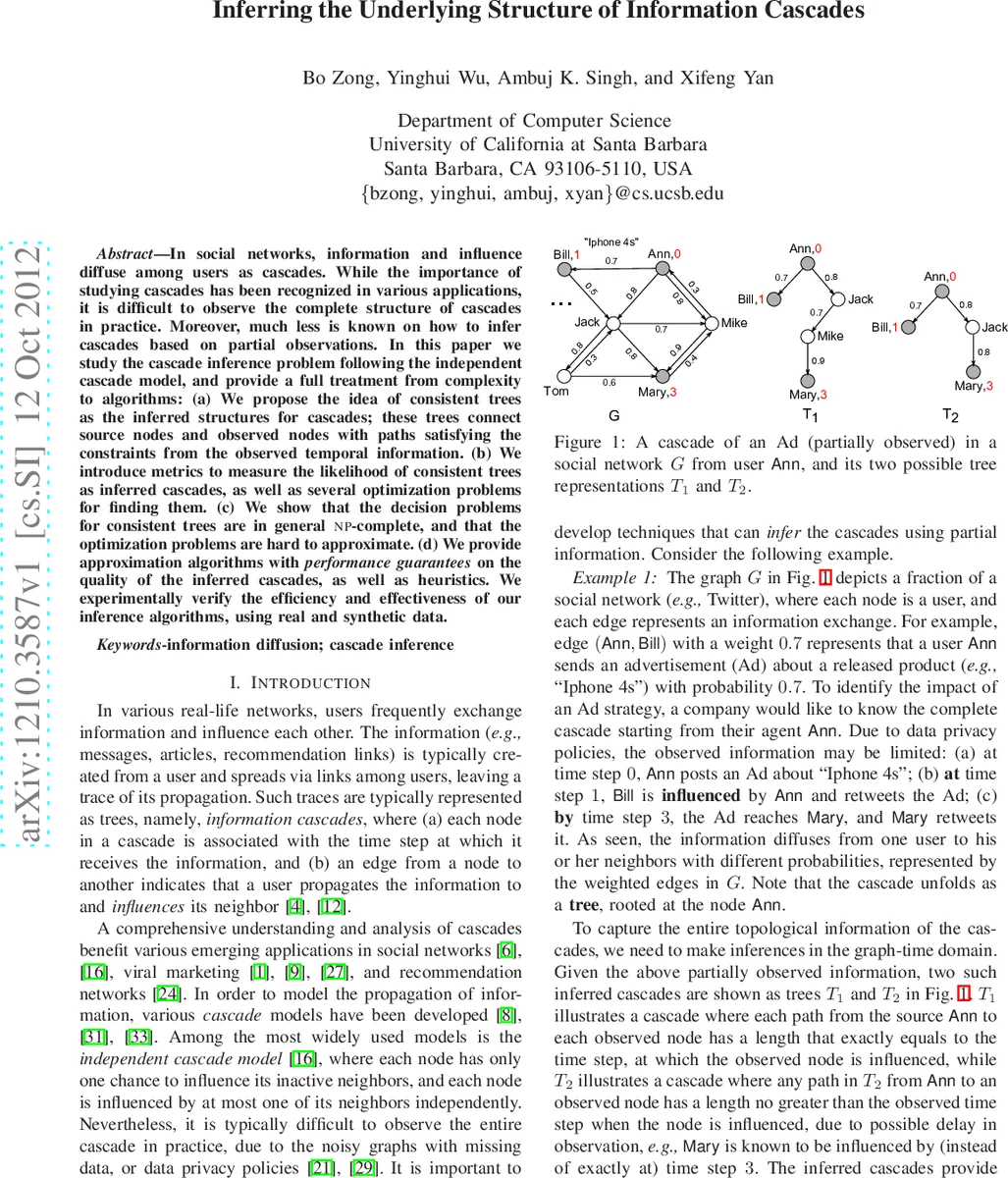

In social networks, information and influence diffuse among users as cascades. While the importance of studying cascades has been recognized in various applications, it is difficult to observe the complete structure of cascades in practice. Moreover, much less is known on how to infer cascades based on partial observations. In this paper we study the cascade inference problem following the independent cascade model, and provide a full treatment from complexity to algorithms: (a) We propose the idea of consistent trees as the inferred structures for cascades; these trees connect source nodes and observed nodes with paths satisfying the constraints from the observed temporal information. (b) We introduce metrics to measure the likelihood of consistent trees as inferred cascades, as well as several optimization problems for finding them. (c) We show that the decision problems for consistent trees are in general NP-complete, and that the optimization problems are hard to approximate. (d) We provide approximation algorithms with performance guarantees on the quality of the inferred cascades, as well as heuristics. We experimentally verify the efficiency and effectiveness of our inference algorithms, using real and synthetic data.

💡 Research Summary

The paper addresses the problem of reconstructing the full structure of information cascades in social networks when only partial observations are available. Assuming the widely used Independent Cascade (IC) model, the authors formalize a cascade as a directed tree rooted at a source node, where each node becomes newly active exactly once and attempts to activate each neighbor with a known probability on the corresponding edge. Observations consist of a set X of (node, time) pairs, indicating that a node was newly active at or before the reported time step.

To capture feasible cascade structures consistent with X, the authors introduce consistent trees. A bounded consistent tree requires that for every observed node v_i with timestamp t_i, there exists a directed path from the source to v_i of length at most t_i. A perfect consistent tree tightens this requirement to exactly t_i hops, reflecting precise timing. These definitions embed both the topological constraints of the IC model and the temporal uncertainty of real data.

Two quantitative objectives are defined for selecting the “best” consistent tree. The minimum consistent tree problem seeks a tree with the smallest number of edges that still satisfies all observations, effectively minimizing the total number of communication steps. The minimum weighted consistent tree problem incorporates edge activation probabilities f(u,v) by maximizing the likelihood of the observed data given the tree; equivalently, it minimizes the negative log‑likelihood, i.e., the sum of –log f(u,v) over the tree’s edges.

The authors first prove that deciding whether any consistent tree exists for a given X is NP‑complete. The reduction for perfect trees is from Hamiltonian Path, while bounded trees are shown hard via a Set‑Cover reduction. Moreover, they demonstrate that both optimization problems are hard to approximate: unless P = NP, no polynomial‑time algorithm can achieve any constant‑factor approximation.

Despite these hardness results, the paper provides practical algorithms with provable guarantees. For bounded consistent trees, they design polynomial‑time approximation algorithms that achieve an O(|X|·log f_min·log f_max) factor for both the edge‑count and weighted objectives, where |X| is the number of observations and f_min/f_max are the minimum and maximum edge probabilities. The algorithms rely on constructing a shallow Steiner‑like subgraph that respects the hop‑constraints and then pruning it to a tree. For perfect consistent trees, which are even harder, the authors propose a greedy heuristic: iteratively add the highest‑probability edge that does not violate any timestamp constraint, stopping when all observations are connected. When the underlying graph’s diameter d is small (as is typical in social networks), they show an O(d·log f_min·log f_max) approximation is attainable.

Experimental evaluation uses both real Twitter data (with known retweet timestamps) and synthetic graphs generated with varying size, density, and probability distributions. The approximation algorithms run in seconds on graphs with tens of thousands of nodes, and the inferred trees achieve high recall of observed nodes and timestamps. The weighted version consistently selects edges with higher activation probabilities, leading to cascades that closely match the ground‑truth diffusion paths. The greedy heuristic for perfect trees, while lacking formal guarantees, also produces plausible cascades and outperforms baseline methods that ignore temporal constraints.

In the related‑work discussion, the authors differentiate their contribution from two major strands: (1) cascade prediction, which focuses on forecasting global cascade properties (size, depth) rather than reconstructing the exact propagation tree; and (2) network inference, which aims to recover the underlying social graph from diffusion traces. Their work uniquely targets tree‑level reconstruction under the IC model, explicitly handling partial and noisy temporal observations.

Overall, the paper delivers a comprehensive theoretical and algorithmic treatment of cascade inference from partial data. It establishes fundamental hardness results, proposes approximation algorithms with clear performance bounds, and validates them empirically. The framework is directly applicable to domains such as viral marketing impact analysis, epidemic source tracing, and misinformation spread monitoring, where understanding the precise pathways of diffusion is crucial.

Comments & Academic Discussion

Loading comments...

Leave a Comment