Kinects and Human Kinetics: A New Approach for Studying Crowd Behavior

Modeling crowd behavior relies on accurate data on pedestrian movements at a high level of detail. Imaging sensors such as cameras provide a good basis for capturing such detailed pedestrian motion data. However, currently available computer vision technologies, when applied to conventional video footage, still cannot automatically extract accurate motions of groups of people or crowds from the image sequences. We present a novel data collection approach for studying crowd behavior which uses the increasingly popular low-cost sensor Microsoft Kinect. The Kinect captures both standard camera data and a three-dimensional depth map. Our human detection and tracking algorithm is based on agglomerative clustering of depth data captured from an elevated view, in contrast to the lateral view used for gesture recognition in Kinect gaming applications. Our approach transforms local Kinect 3D data to a common world coordinate system in order to stitch together human trajectories from multiple Kinects, which allows for a scalable and flexible capturing area. At a testbed with real-world pedestrian traffic we demonstrate that our approach can provide accurate trajectories from three Kinects with a Pedestrian Detection Rate of up to 94% and a Multiple Object Tracking Precision of 4 cm. Using a comprehensive dataset of 2240 captured human trajectories we calibrate three variations of the Social Force model. The results of our model validations indicate the models' particular ability to reproduce the observed crowd behavior in microscopic simulations.

💡 Research Summary

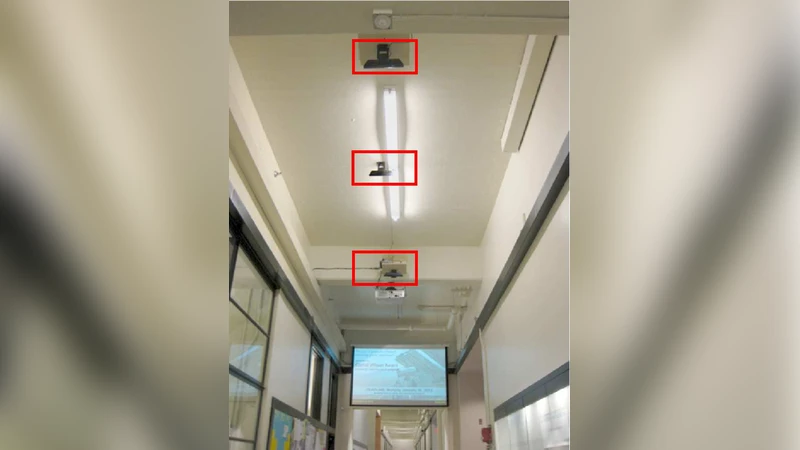

The paper introduces a low‑cost, high‑precision method for collecting pedestrian trajectory data using multiple Microsoft Kinect sensors and demonstrates its utility for calibrating microscopic crowd‑behavior models. Traditional video‑based approaches struggle with occlusions, lighting changes, and the inability to extract accurate 3‑D motion information from dense crowds. By mounting Kinect devices on the ceiling, the authors obtain both RGB images and depth maps from an elevated perspective. Depth data are first filtered to remove the floor plane and then processed with an agglomerative clustering algorithm that groups nearby 3‑D points into individual pedestrian clusters. Dynamic distance thresholds and size constraints prevent over‑merging in densely packed areas. Each Kinect’s local coordinate system is transformed into a common world coordinate system using pre‑calibrated rotation‑translation matrices, and timestamps synchronized via NTP ensure temporal alignment. This pipeline enables seamless stitching of trajectories across three overlapping Kinect fields of view, covering roughly 12 m² of a busy urban walkway.
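The two geometric steps described above, mapping sensor-local points into world coordinates and grouping nearby points into pedestrian candidates, can be illustrated with a minimal sketch. It is not the authors' implementation: the function names, the 0.3 m threshold, and the example rotation and translation are assumptions chosen for illustration. The clustering uses single linkage cut at a fixed distance, which is equivalent to finding connected components among points closer than the threshold; the dynamic thresholds and size constraints mentioned in the summary are omitted.

```python
import math


def to_world(point, rotation, translation):
    """Map one sensor-local 3D point to world coordinates: p_world = R * p_local + t."""
    return tuple(
        sum(rotation[i][j] * point[j] for j in range(3)) + translation[i]
        for i in range(3)
    )


def single_linkage_clusters(points, max_dist):
    """Agglomerative (single-linkage) clustering cut at max_dist.

    Two points end up in the same cluster if a chain of points connects
    them in which consecutive points are at most max_dist apart.
    """
    parent = list(range(len(points)))

    def find(i):
        # Follow parent links to the cluster representative, compressing the path.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    for a in range(len(points)):
        for b in range(a + 1, len(points)):
            if math.dist(points[a], points[b]) <= max_dist:
                parent[find(a)] = find(b)  # merge the two clusters

    clusters = {}
    for i, p in enumerate(points):
        clusters.setdefault(find(i), []).append(p)
    return list(clusters.values())


# Hypothetical calibration for a second sensor: rotated 90 degrees about the
# vertical axis and shifted 2 m along the world x axis.
ROT_Z_90 = [[0, -1, 0], [1, 0, 0], [0, 0, 1]]
SHIFT = (2, 0, 0)

# Illustrative depth points (metres) belonging to two people about 2 m apart.
sample_points = [(0.0, 0.0, 1.7), (0.1, 0.0, 1.6), (2.0, 0.0, 1.7), (2.1, 0.05, 1.65)]
people = single_linkage_clusters(sample_points, max_dist=0.3)
```

Here `people` holds one list of points per detected cluster. The pairwise distance loop is quadratic in the number of points, so a real-time pipeline would need a spatial index or a downsampled depth map.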

In a two‑hour data‑collection campaign the system captured 2,240 pedestrian trajectories, each averaging 150 frames. Detection performance reached a Pedestrian Detection Rate (PDR) of up to 94 % and a Multiple Object Tracking Precision (MOTP) of 4 cm, substantially outperforming conventional 2‑D video tracking (typically 10–15 cm error). Real‑time processing sustained over 25 fps, with data loss below 2 %. The dataset was then used to calibrate three variants of the Social Force Model (SFM). The baseline SFM comprises a goal‑directed force, an interpersonal repulsion force, and an obstacle‑avoidance force, all expressed as linear functions of distance and velocity. The authors extend this framework in three ways: (1) adding a distance‑weighted term to the goal force, (2) replacing the linear interpersonal term with a non‑linear decay function, and (3) explicitly modeling obstacle repulsion as a separate component. Parameter estimation combined least‑squares fitting with Bayesian optimization, using cross‑validation to avoid over‑fitting. In simulation, all calibrated models reproduced the observed trajectories with an average positional error below 0.12 m. Notably, the non‑linear decay variant captured sharp direction changes and high‑density avoidance behavior more faithfully than the linear baseline, indicating that realistic crowd dynamics require more sophisticated interaction terms.
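To make the structure of a social force model concrete, the following minimal sketch computes the acceleration on a single pedestrian from a goal-directed term and a distance-decaying repulsion from other pedestrians. The summary does not give the functional forms or the calibrated parameters used in the paper, so the sketch assumes the classic Helbing-Molnár style formulation with an exponential decay and unit mass. Every name and value in it (`desired_speed`, `tau`, `strength`, `decay_range`) is an illustrative assumption, not a result from the study; obstacle forces are left out.

```python
import math


def social_force(pos, vel, goal, others,
                 desired_speed=1.34, tau=0.5, strength=2.0, decay_range=0.3):
    """Return the 2D acceleration (fx, fy) on one pedestrian of unit mass.

    All parameter defaults are placeholders for illustration only.
    """
    # Goal-directed term: relax the current velocity toward the desired
    # velocity (desired_speed along the direction to the goal) within tau seconds.
    gx, gy = goal[0] - pos[0], goal[1] - pos[1]
    dist_goal = math.hypot(gx, gy) or 1.0  # avoid division by zero at the goal
    fx = (desired_speed * gx / dist_goal - vel[0]) / tau
    fy = (desired_speed * gy / dist_goal - vel[1]) / tau

    # Interpersonal term: repulsion that decays exponentially with distance,
    # directed away from each other pedestrian.
    for ox, oy in others:
        dx, dy = pos[0] - ox, pos[1] - oy
        d = math.hypot(dx, dy)
        if d == 0:
            continue  # coincident positions give no defined direction
        magnitude = strength * math.exp(-d / decay_range)
        fx += magnitude * dx / d
        fy += magnitude * dy / d
    return fx, fy


def euler_step(pos, vel, force, dt=0.1):
    """Advance position and velocity by one explicit Euler step of length dt."""
    new_vel = (vel[0] + force[0] * dt, vel[1] + force[1] * dt)
    new_pos = (pos[0] + new_vel[0] * dt, pos[1] + new_vel[1] * dt)
    return new_pos, new_vel


# A pedestrian at rest heading east, first alone, then with a neighbour 1 m to the north.
alone = social_force((0.0, 0.0), (0.0, 0.0), (10.0, 0.0), [])
with_neighbour = social_force((0.0, 0.0), (0.0, 0.0), (10.0, 0.0), [(0.0, 1.0)])
```

Calibration in this setting means choosing parameters such as `strength` and `decay_range` so that simulated positions stay close to the tracked trajectories, which is where a dataset like the one described above comes in.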

The authors discuss several practical limitations. Kinect’s effective range (≈4 m) and field‑of‑view constrain coverage, necessitating many sensors for large venues. Manual calibration of inter‑sensor transformations is time‑consuming, and depth measurements degrade on transparent or highly reflective surfaces, leading to occasional detection failures. The clustering and coordinate‑transformation stages are computationally intensive on a CPU, suggesting that GPU acceleration or lightweight clustering alternatives would benefit real‑time deployments. Future work is proposed on automatic multi‑sensor calibration, deep‑learning‑based clustering, and integration with live crowd‑simulation platforms for applications such as emergency evacuation planning, smart‑city traffic management, and retail analytics.

In summary, the study demonstrates that inexpensive depth sensors, when combined with robust clustering, coordinate alignment, and multi‑sensor stitching, can deliver centimeter‑level trajectory accuracy at scale. This enables the collection of large, high‑fidelity pedestrian datasets that are essential for validating and improving microscopic crowd models, thereby advancing both theoretical understanding and practical applications of crowd dynamics.
