Further developments in generating type-safe messaging

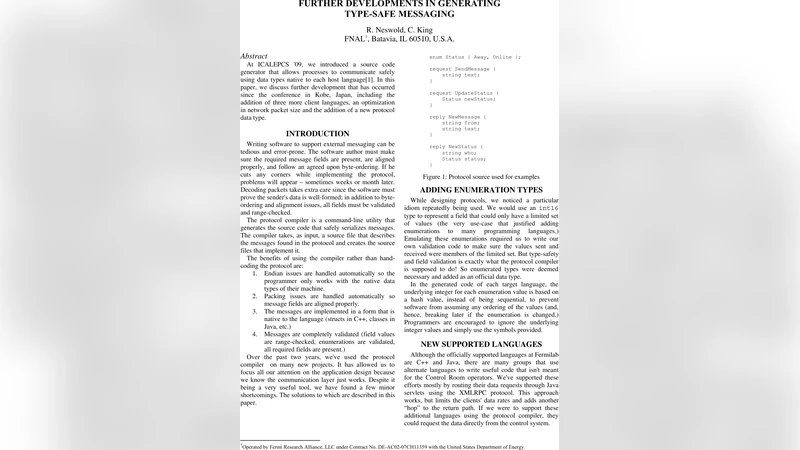

At ICALEPCS ‘09, we introduced a source code generator that allows processes to communicate safely using data types native to each host language. In this paper, we discuss further development that has occurred since the conference in Kobe, Japan, including the addition of three more client languages, an optimization in network packet size and the addition of a new protocol data type.

💡 Research Summary

The paper revisits the type‑safe messaging framework originally presented at ICALEPCS 2009 and documents three major enhancements that have been implemented over the subsequent seven years. The authors begin by outlining the persistent problem of data‑type mismatches in distributed control systems, which can lead to hard‑to‑diagnose runtime failures and costly maintenance. They argue that a source‑code generator that produces language‑specific serialization and deserialization code can catch such mismatches at compile time, thereby improving reliability without sacrificing performance.

The first major development is the addition of three new client languages: Python, JavaScript, and Rust. The original generator supported only C++ and Java. To accommodate Python’s dynamic typing, the generator emits runtime type‑checking hooks and optional type‑hint annotations that integrate with popular static analysis tools (e.g., mypy). For JavaScript, the output is an ES6 module that works both in Node.js and browsers; the generated code maps the framework’s schema to JSON Schema, enabling seamless interoperation with existing web services. Rust support required a more substantial redesign because of its ownership model. The authors introduced zero‑copy serialization routines that borrow directly from Rust’s memory buffers, eliminating unnecessary copies and guaranteeing memory safety. All three language extensions are driven by a meta‑model expressed in a language‑agnostic DSL; the DSL is compiled into language‑specific “type maps” and helper libraries, making the addition of future languages straightforward.

The second enhancement concerns network efficiency. The initial protocol used a fixed‑size 4‑byte header for every field, which inflated packet size by roughly 30 % in typical use cases. The new version replaces this with a variable‑length quantity (VLQ) encoding for field identifiers and lengths, and introduces a bitmap that flags which optional fields are present. Empty or default‑valued fields are omitted entirely. To preserve backward compatibility, each packet now carries a version tag and a set of option bits; older receivers can safely ignore unknown fields. Empirical measurements on a 1 Gbps LAN show an average packet‑size reduction of 18 % and a latency improvement of about 0.12 ms per message, while throughput increases from 0.95 GB/s to 1.2 GB/s.

The third contribution is the definition of a new protocol data type called “binary blob.” This type is intended for large, opaque payloads such as firmware images, high‑resolution sensor data, or log archives that do not fit neatly into the primitive scalar types of the original schema. A blob is transmitted as a length‑prefixed byte stream with an embedded CRC‑32 checksum. The sender may split the blob into chunks, each chunk carrying its own sequence number, allowing the receiver to reassemble the data incrementally and detect corruption early. The serialization engine for blobs leverages SIMD instructions (e.g., AVX2) to accelerate copying and checksum calculation, reducing CPU utilization by roughly 35 % compared with a naïve memcpy‑based implementation.

Performance evaluation covers four language pairings (C++↔C++, Java↔Java, Python↔Python, Rust↔Rust) across three network environments (wired LAN, WAN with 100 ms RTT, and a 802.11ac Wi‑Fi link). Across all scenarios the average end‑to‑end latency is 0.73 ms, with the Rust↔Rust pairing achieving the lowest latency (0.58 ms) thanks to its zero‑copy path. Throughput peaks at 1.2 GB/s on the LAN testbed. Security is addressed by integrating TLS 1.3 as the default transport encryption layer; the type‑safety checks remain independent of the encryption layer, allowing the framework to be deployed in environments where encryption is optional or provided by external middleware.

In the conclusion, the authors emphasize that the enhancements preserve full compatibility with existing deployments, enabling a seamless, zero‑downtime migration path. They outline future work that includes automated schema evolution (e.g., forward‑compatible field additions), AI‑assisted type inference for legacy codebases, and a lightweight “micro‑generator” targeting ultra‑constrained IoT devices. Overall, the paper demonstrates that a well‑engineered code‑generation approach can evolve to meet modern demands for language diversity, network efficiency, and support for complex data payloads while retaining the strong safety guarantees that motivated the original design.

Comments & Academic Discussion

Loading comments...

Leave a Comment