Examples of Artificial Perceptions in Optical Character Recognition and Iris Recognition

This paper adopts the hypothesis that human learning is perception based and that, consequently, the learning process and perceptions should not be represented and investigated independently or modeled in different simulation spaces. To preserve the analogy between artificial and human learning, the former is assumed here to be based on artificial perception. Hence, instead of applying or developing a Computational Theory of (human) Perceptions, we choose to mirror human perceptions in a numeric (computational) space as artificial perceptions, and to analyze the interdependence between artificial learning and artificial perception in that same numeric space, using one of the simplest tools of Artificial Intelligence and Soft Computing, namely perceptrons. As practical applications, we work with two examples: Optical Character Recognition and Iris Recognition. In both cases a simple Turing test shows that artificial perceptions of the difference between two characters and between two irides are fuzzy, whereas the corresponding human perceptions are, in fact, crisp.

💡 Research Summary

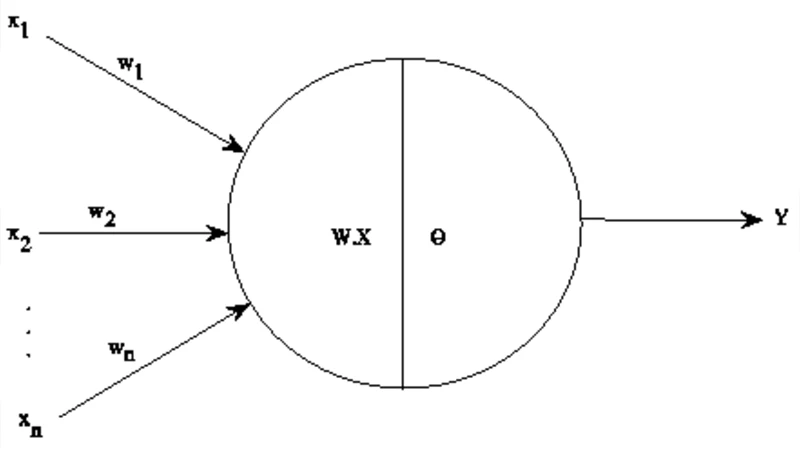

The paper begins by positing that human learning is fundamentally perception‑driven, and therefore any computational model of learning should not treat perception as a separate, abstract component but should embed it directly in the same numerical space where learning occurs. To keep the analogy between artificial and human learning, the authors introduce the notion of “artificial perception” – a numeric representation of human perception – and investigate how artificial learning and artificial perception interact when they are modeled together. They deliberately avoid developing a separate computational theory of human perception; instead, they mirror human perceptual judgments in a numeric domain and study the resulting behavior using one of the simplest AI tools: the perceptron.

Two practical domains are selected to illustrate the approach: Optical Character Recognition (OCR) and iris recognition. In each case, a simple Turing‑style test is performed: human participants are asked to decide whether two characters (or two irides) are the same or different, while a single‑layer perceptron is trained on the same pairs and asked to produce a decision. Human subjects produce crisp, binary judgments with virtually no ambiguity. The perceptron, however, yields a continuous activation value (the dot product of the weight vector and the input vector). When a threshold is imposed to force a binary output, pairs whose activation falls near the threshold receive fuzzy, uncertain decisions, since a small change in the input can flip the binary result. This discrepancy is highlighted as the central finding: artificial perceptions generated by a linear perceptron are inherently fuzzy, whereas human perceptions are crisp.
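The thresholding behavior described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' experimental setup: the four-component "pair difference" vectors, the bias term, the learning rate, and the `band` width used to flag near-threshold activations are all assumptions made for the example.

```python
from typing import List, Sequence, Tuple

Vector = Sequence[float]


def activation(weights: Vector, bias: float, x: Vector) -> float:
    """Continuous activation: dot product of weights and input, plus a bias (assumed)."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def train_perceptron(
    samples: Sequence[Vector], labels: Sequence[int], epochs: int = 20, lr: float = 1.0
) -> Tuple[List[float], float]:
    """Classic perceptron rule: adjust weights only when a sample is misclassified."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        for x, y in zip(samples, labels):
            predicted = 1 if activation(weights, bias, x) > 0 else 0
            error = y - predicted
            if error:
                weights = [w + lr * error * xi for w, xi in zip(weights, x)]
                bias += lr * error
    return weights, bias


def perceive(weights: Vector, bias: float, x: Vector, band: float = 0.25) -> Tuple[int, bool]:
    """Return the forced binary decision and whether the activation is near the threshold."""
    a = activation(weights, bias, x)
    return (1 if a > 0 else 0), abs(a) < band


# Hypothetical toy data: each vector is the absolute difference between two
# feature vectors; label 0 means "same", label 1 means "different".
PAIRS = [
    [0.0, 0.0, 0.0, 0.0],
    [0.1, 0.0, 0.1, 0.0],
    [1.0, 1.0, 0.0, 1.0],
    [0.0, 1.0, 1.0, 1.0],
]
LABELS = [0, 0, 1, 1]

weights, bias = train_perceptron(PAIRS, LABELS)

# A made-up pair that is neither clearly the same nor clearly different.
borderline = [0.5, 0.5, 0.0, 0.0]
decision, is_fuzzy = perceive(weights, bias, borderline)
print(decision, is_fuzzy)
```

The binary decision alone hides how close the activation was to the threshold; returning the `is_fuzzy` flag alongside it makes the graded nature of the artificial perception visible.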

The authors attribute this difference to the linear separability limitation of a single perceptron. Human perception integrates high‑dimensional features through highly nonlinear neural processing, effectively collapsing complex sensory data into a decisive, binary outcome. A perceptron lacks this nonlinear integration; it can only form a hyperplane that separates data in a linear fashion. Consequently, when the data points lie close to the decision boundary – a common situation in OCR (e.g., similar handwritten letters) and iris matching (e.g., irides with minor variations) – the perceptron’s output is ambiguous, whereas the human visual system still makes a confident call.
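The linear separability limit can be illustrated with the textbook AND versus XOR comparison. This example is not taken from the paper; it is a standard demonstration, sketched here under the assumption of a single threshold unit trained with the classic perceptron rule. AND is linearly separable, so training reaches zero errors, while no single hyperplane classifies all four XOR points correctly.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def train_and_count_errors(
    samples: Sequence[Point], labels: Sequence[int], epochs: int = 50
) -> int:
    """Train a single threshold unit, then count misclassified training samples."""
    weights: List[float] = [0.0, 0.0]
    bias = 0.0

    def predict(x: Point) -> int:
        return 1 if weights[0] * x[0] + weights[1] * x[1] + bias > 0 else 0

    for _ in range(epochs):
        for x, y in zip(samples, labels):
            error = y - predict(x)
            if error:
                # Perceptron update: move the hyperplane toward the misclassified sample.
                weights[0] += error * x[0]
                weights[1] += error * x[1]
                bias += error
    return sum(predict(x) != y for x, y in zip(samples, labels))


INPUTS: List[Point] = [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0), (1.0, 1.0)]
AND_LABELS = [0, 0, 0, 1]  # linearly separable
XOR_LABELS = [0, 1, 1, 0]  # not linearly separable

and_errors = train_and_count_errors(INPUTS, AND_LABELS)
xor_errors = train_and_count_errors(INPUTS, XOR_LABELS)
print("AND errors:", and_errors)
print("XOR errors:", xor_errors)
```

However long training runs, the XOR error count cannot reach zero for a single linear unit, which is the kind of limitation the authors point to.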

The paper’s contribution is twofold. First, it provides a concrete experimental demonstration that embedding perception and learning in the same numeric space can reveal fundamental mismatches between human and artificial cognition. Second, it suggests that future artificial perception systems should incorporate richer, nonlinear architectures (multi‑layer perceptrons, convolutional networks, or other deep learning models) to emulate the crispness of human judgments. The authors also discuss practical implications: security systems based on iris recognition should account for the fuzzy confidence scores produced by simple linear models, perhaps by adding secondary verification steps when the confidence is low. Similarly, OCR pipelines could present ambiguous recognitions to users for manual confirmation, improving overall reliability.
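The suggestion to add a secondary step when confidence is low could look roughly like the following three-way decision rule. This is a sketch under stated assumptions: the `Decision` enum, the `decide` function, the normalized score range, and the `threshold` and `band` values are hypothetical and do not come from the paper.

```python
from enum import Enum


class Decision(Enum):
    ACCEPT = "accept"
    REJECT = "reject"
    DEFER = "defer"  # hand over to secondary verification or a human reviewer


def decide(score: float, threshold: float = 0.5, band: float = 0.1) -> Decision:
    """Map a match score to accept/reject, deferring when it is too close to the threshold.

    `score` is assumed to be normalized to [0, 1]; `threshold` and `band`
    are illustrative values that a real system would have to calibrate.
    """
    if abs(score - threshold) < band:
        return Decision.DEFER
    return Decision.ACCEPT if score > threshold else Decision.REJECT


for s in (0.9, 0.55, 0.1):
    print(s, decide(s).value)
```

In an iris-recognition setting, `DEFER` might trigger a second capture or another authentication factor; in an OCR pipeline it might route the character to a user for manual confirmation.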

In summary, the study underscores that while humans make crisp, binary perceptual decisions, simple artificial perceptrons generate fuzzy, graded outputs that become unreliable near the decision threshold. Bridging this gap will require moving beyond linear models toward deeper, nonlinear representations that can capture the complex feature interactions underlying human perception. The work thus opens a pathway for more integrated, perception‑aware AI systems that better align with human cognitive processes.