Multiprocessor Scheduling Using Parallel Genetic Algorithm

Task scheduling is the most challenging problem in parallel computing, because inappropriate scheduling can reduce, or even prevent, the realization of the true potential of parallelization. Genetic algorithms (GAs) have been successfully applied to the scheduling problem. Fitness evaluation is the GA operation that consumes the most CPU time, and it therefore limits GA performance. The proposed synchronous master-slave algorithm outperforms the sequential algorithm on complex problems that require a high number of generations.

💡 Research Summary

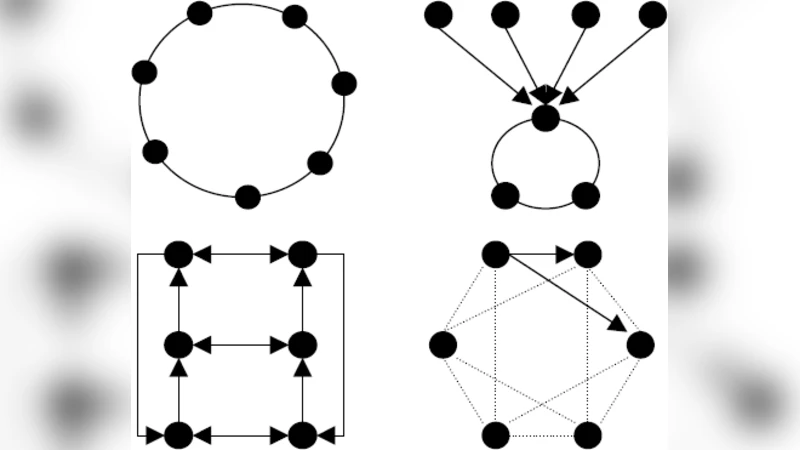

The paper addresses the well‑known difficulty of efficiently scheduling tasks on multiprocessor systems, a problem that becomes especially acute when the number of tasks, processors, or generations in a genetic algorithm (GA) grows large. The authors observe that, in a conventional GA applied to scheduling, the fitness evaluation step—calculating the makespan for each individual chromosome—is by far the most computationally expensive operation, often accounting for more than 70 % of total runtime. To mitigate this bottleneck, they propose a synchronous master‑slave parallel GA architecture that distributes the fitness evaluation across multiple slave nodes while keeping the evolutionary operators (selection, crossover, mutation) on a single master node.
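Amdahl's law gives a rough ceiling on what this split can gain. Assume, purely for illustration, that exactly a fraction $f = 0.7$ of the sequential runtime is fitness evaluation, that this part divides perfectly over $p$ slaves, and that communication is free. The speed-up $S(p)$ is then bounded by:

```latex
S(p) \le \frac{1}{(1 - f) + f/p},
\qquad
S(8) \le \frac{1}{0.3 + 0.0875} \approx 2.58,
\qquad
\lim_{p \to \infty} S(p) = \frac{1}{0.3} \approx 3.33 .
```

A larger fitness share raises this ceiling. Scaling close to linear therefore requires fitness evaluation to account for almost all of the runtime.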

The chromosome representation encodes a mapping of tasks to processors as a permutation of task identifiers combined with a bitmap indicating processor assignment. Standard permutation‑based crossover (order crossover) and simple swap mutation are employed to maintain diversity. The fitness function is defined as the makespan, i.e., the time at which the last task finishes, which requires a full simulation of task execution on all processors for each chromosome.
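The following minimal Python sketch makes the encoding and operators concrete. It simplifies the representation, which is an assumption: instead of a processor bitmap, each chromosome is a priority permutation `order` plus a list `proc_of` that gives each task's processor. The makespan simulation assumes that tasks form a precedence DAG with known durations and ignores communication delays. The function names are hypothetical. Order crossover follows the classic cut-and-fill scheme, and swap mutation exchanges two positions.

```python
import random
from typing import List, Sequence

# Hypothetical encoding (an assumption, simplifying the summary's bitmap):
#   order[k]   -> task id with the k-th highest scheduling priority
#   proc_of[t] -> processor index assigned to task t


def makespan(order: Sequence[int], proc_of: Sequence[int],
             durations: Sequence[float], preds: Sequence[Sequence[int]],
             n_procs: int) -> float:
    """Simulate the schedule and return the finish time of the last task.

    Assumes preds[t] lists the predecessors of task t (an acyclic graph) and
    ignores communication costs. At each step the first task in `order` whose
    predecessors have all finished is started on its assigned processor.
    """
    ready = [0.0] * n_procs           # time at which each processor becomes free
    finish = {}                       # task id -> finish time
    pending = list(order)
    while pending:
        idx = next((k for k, t in enumerate(pending)
                    if all(q in finish for q in preds[t])), None)
        if idx is None:
            raise ValueError("precedence graph contains a cycle")
        t = pending.pop(idx)
        p = proc_of[t]
        # Start when both the processor and all predecessors are done.
        start = max([ready[p]] + [finish[q] for q in preds[t]])
        finish[t] = start + durations[t]
        ready[p] = finish[t]
    return max(finish.values(), default=0.0)


def order_crossover(a: List[int], b: List[int], rng: random.Random) -> List[int]:
    """Order crossover (OX): keep a[i:j], fill the rest in b's cyclic order from j."""
    n = len(a)
    i, j = sorted(rng.sample(range(n + 1), 2))   # two distinct cut points, i < j
    child = [-1] * n                             # -1 marks an unfilled slot
    child[i:j] = a[i:j]
    kept = set(a[i:j])
    fill = [b[(j + k) % n] for k in range(n) if b[(j + k) % n] not in kept]
    for k, t in enumerate(fill):                 # wraps from j around to i - 1
        child[(j + k) % n] = t
    return child


def swap_mutation(order: List[int], rng: random.Random) -> List[int]:
    """Swap mutation: exchange the tasks at two random positions (needs len >= 2)."""
    child = order[:]
    i, j = rng.sample(range(len(child)), 2)
    child[i], child[j] = child[j], child[i]
    return child
```

Because the simulator always starts the first ready task in `order`, every permutation decodes to a valid schedule. Crossover and mutation therefore never produce infeasible offspring.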

In the parallel scheme, the master maintains the entire population and, at the beginning of each generation, partitions the set of individuals into roughly equal batches that are sent to the slaves. Each slave independently simulates its assigned chromosomes, computes the makespan values, and returns only these scalar fitness scores to the master. After all slaves have reported, the master performs selection (typically tournament or roulette‑wheel), applies crossover and mutation, and creates the next generation. Because the algorithm is synchronous, the master waits for every slave to finish before proceeding, ensuring deterministic behavior and simplifying result verification.
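The generation loop described above can be sketched with Python's standard `concurrent.futures` module. This is a minimal sketch under assumptions, not the authors' implementation. The helper names (`evaluate_batch`, `synchronous_evaluate`, `run_master`) are hypothetical, and a local executor stands in for the slave nodes. The sketch uses tournament selection, although the summary also mentions roulette-wheel selection. It applies no elitism beyond remembering the best individual seen. For this scheduling problem, `fitness` would be the makespan simulation of a chromosome.

```python
import random
from concurrent.futures import Executor
from typing import Callable, List, Sequence, Tuple, TypeVar

T = TypeVar("T")  # an individual, e.g. a (task order, processor assignment) pair


def evaluate_batch(fitness: Callable[[T], float], batch: List[T]) -> List[float]:
    """Slave side: score a batch and return only the scalar fitness values."""
    return [fitness(ind) for ind in batch]


def synchronous_evaluate(population: List[T], fitness: Callable[[T], float],
                         executor: Executor, n_slaves: int) -> List[float]:
    """Master side: split a non-empty population into ~equal batches, one per slave."""
    size = -(-len(population) // n_slaves)  # ceiling division
    batches = [population[i:i + size] for i in range(0, len(population), size)]
    # Consuming every result of executor.map is the synchronization barrier:
    # the master cannot continue until the slowest batch has finished.
    per_batch = executor.map(evaluate_batch, [fitness] * len(batches), batches)
    return [score for scores in per_batch for score in scores]


def tournament(pop: Sequence[T], scores: Sequence[float], k: int,
               rng: random.Random) -> T:
    """Tournament selection: the lowest fitness (e.g. makespan) of k contenders wins."""
    contenders = rng.sample(range(len(pop)), k)
    return pop[min(contenders, key=lambda i: scores[i])]


def run_master(init_pop: List[T], fitness: Callable[[T], float],
               crossover: Callable[[T, T, random.Random], T],
               mutate: Callable[[T, random.Random], T],
               executor: Executor, n_slaves: int, generations: int,
               rng: random.Random, k: int = 3) -> Tuple[T, float]:
    """Generational GA whose fitness evaluation is farmed out to slaves."""
    pop = list(init_pop)
    scores = synchronous_evaluate(pop, fitness, executor, n_slaves)
    best_i = min(range(len(pop)), key=lambda i: scores[i])
    best, best_score = pop[best_i], scores[best_i]
    for _ in range(generations):
        # Selection, crossover and mutation stay on the master.
        pop = [mutate(crossover(tournament(pop, scores, k, rng),
                                tournament(pop, scores, k, rng), rng), rng)
               for _ in range(len(pop))]
        scores = synchronous_evaluate(pop, fitness, executor, n_slaves)
        i = min(range(len(pop)), key=lambda j: scores[j])
        if scores[i] < best_score:
            best, best_score = pop[i], scores[i]
    return best, best_score
```

Any `concurrent.futures.Executor` can be passed in. A `ThreadPoolExecutor` is enough to exercise the logic. Because of CPython's global interpreter lock, however, CPU-bound fitness evaluation only runs in parallel with a `ProcessPoolExecutor(max_workers=n_slaves)`. In that case, `fitness` must be a picklable, module-level function.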

The experimental methodology varies three main dimensions: (1) the number of tasks (50, 100, 200), (2) the number of processors (4, 8, 16), and (3) the number of generations (100, 500, 1000). For each configuration the authors compare three approaches: (a) a sequential GA running on a single node, (b) the proposed parallel GA with 2, 4, 8, and 16 slaves, and (c) a baseline non‑parallel GA from the literature. Results show a near‑linear reduction in total execution time as the number of slaves increases, with eight or more slaves achieving more than a 60 % speed‑up relative to the sequential version. Importantly, solution quality—measured by the final makespan—remains comparable or slightly better in the parallel case, especially when the number of generations exceeds 500. The authors attribute this improvement to the ability to explore a larger portion of the search space within the same wall‑clock time, thanks to the faster fitness evaluations.
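Speed-up percentages can be read in more than one way. Let $T_1$ be the sequential runtime and $T_p$ the runtime with $p$ slaves. The conventional definitions of speed-up, efficiency, and runtime reduction are:

```latex
S(p) = \frac{T_1}{T_p},
\qquad
E(p) = \frac{S(p)}{p},
\qquad
\text{reduction}(p) = 1 - \frac{T_p}{T_1} = 1 - \frac{1}{S(p)} .
```

For example, a 60% reduction in runtime ($T_p = 0.4\,T_1$) corresponds to $S = 2.5$. With 8 slaves, that is an efficiency of about 0.31, whereas near-linear scaling would mean $S(8)$ close to 8.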

The discussion highlights several strengths of the synchronous master‑slave model: ease of implementation, deterministic outcomes, and straightforward fault tolerance (the master can reassign work if a slave fails). However, the authors acknowledge that in environments with high network latency or heterogeneous slave performance, the waiting time imposed by synchronization can erode the benefits, suggesting that an asynchronous or work‑stealing variant might be more appropriate in such settings. They also note that parallelizing other GA components (e.g., crossover, mutation) could yield additional gains, and they propose future work on hybrid parallelism and adaptive load balancing.
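One possible asynchronous alternative is a steady-state master that hands a new child to whichever slave finishes first, so the loop has no generation-wide barrier. The same loop also shows the master reassigning work when an evaluation raises an exception. This is a hedged illustration of one asynchronous scheme, not something evaluated in the paper. Several parts are assumptions: the replace-the-worst insertion policy, the evaluation budget `max_evals`, the retry limit `max_retries`, and the function name `async_master`. Work stealing is not shown.

```python
import random
from concurrent.futures import FIRST_COMPLETED, Executor, Future, wait
from typing import Callable, Dict, List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def tournament(pop: Sequence[T], scores: Sequence[float], k: int,
               rng: random.Random) -> T:
    """Tournament selection: the lowest fitness among k random contenders wins."""
    contenders = rng.sample(range(len(pop)), k)
    return pop[min(contenders, key=lambda i: scores[i])]


def async_master(init_pop: List[T], fitness: Callable[[T], float],
                 crossover: Callable[[T, T, random.Random], T],
                 mutate: Callable[[T, random.Random], T],
                 executor: Executor, n_slaves: int, max_evals: int,
                 rng: random.Random, k: int = 3,
                 max_retries: int = 2) -> Tuple[T, float]:
    """Steady-state GA: a new child is dispatched as soon as any slave is free."""
    pop: List[T] = []
    scores: List[float] = []
    backlog = list(init_pop)                       # initial individuals not yet sent
    in_flight: Dict[Future, Tuple[T, int]] = {}    # future -> (individual, retries)
    submitted = 0

    while True:
        # Keep every slave busy, within the evaluation budget.
        while (len(in_flight) < n_slaves and submitted < max_evals
               and (backlog or len(pop) >= k)):
            if backlog:
                ind = backlog.pop()
            else:
                ind = mutate(crossover(tournament(pop, scores, k, rng),
                                       tournament(pop, scores, k, rng), rng), rng)
            in_flight[executor.submit(fitness, ind)] = (ind, 0)
            submitted += 1
        if not in_flight:
            break
        # No generation barrier: react to whichever evaluation finishes first.
        finished, _ = wait(list(in_flight), return_when=FIRST_COMPLETED)
        for fut in finished:
            ind, retries = in_flight.pop(fut)
            try:
                score = fut.result()
            except Exception:
                if retries < max_retries:          # reassign the failed work
                    in_flight[executor.submit(fitness, ind)] = (ind, retries + 1)
                continue
            if len(pop) < len(init_pop):           # still filling the population
                pop.append(ind)
                scores.append(score)
            else:                                  # replace the worst if the child is better
                worst = max(range(len(pop)), key=lambda i: scores[i])
                if score < scores[worst]:
                    pop[worst], scores[worst] = ind, score
    if not pop:
        raise RuntimeError("no individual was evaluated successfully")
    best = min(range(len(pop)), key=lambda i: scores[i])
    return pop[best], scores[best]
```

The trade-off is reproducibility. Results depend on the order in which evaluations complete, so this variant loses the deterministic behavior that the synchronous design guarantees.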

In conclusion, the study demonstrates that offloading the dominant fitness evaluation step to multiple processors yields substantial performance improvements for GA‑based multiprocessor scheduling without sacrificing solution quality. The proposed architecture is particularly well‑suited to clusters or cloud platforms where many relatively inexpensive compute nodes are available, and it offers a practical pathway for scaling evolutionary scheduling methods to the large, complex problem instances encountered in modern high‑performance computing environments.
