Convex Graph Invariants

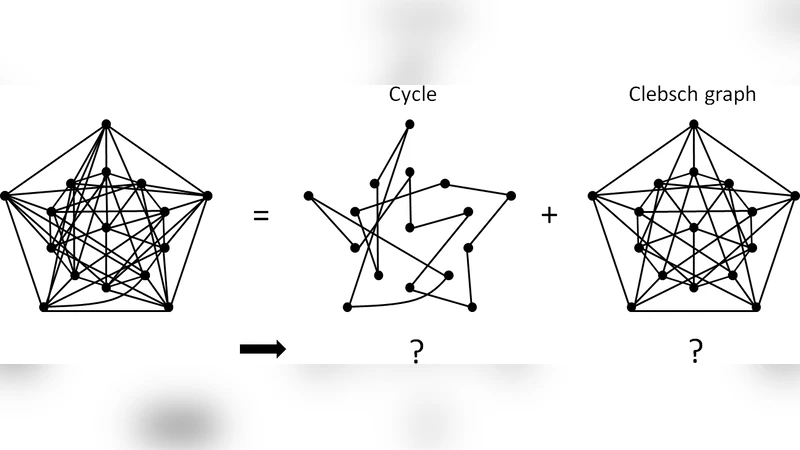

The structural properties of graphs are usually characterized in terms of invariants, which are functions of graphs that do not depend on the labeling of the nodes. In this paper we study convex graph invariants, which are graph invariants that are convex functions of the adjacency matrix of a graph. Some examples include functions of a graph such as the maximum degree, the MAXCUT value (and its semidefinite relaxation), and spectral invariants such as the sum of the $k$ largest eigenvalues. Such functions can be used to construct convex sets that impose various structural constraints on graphs, and thus provide a unified framework for solving a number of interesting graph problems via convex optimization. We give a representation of all convex graph invariants in terms of certain elementary invariants, and describe methods to compute or approximate convex graph invariants tractably. We also compare convex and non-convex invariants, and discuss connections to robust optimization. Finally we use convex graph invariants to provide efficient convex programming solutions to graph problems such as the deconvolution of the composition of two graphs into the individual components, hypothesis testing between graph families, and the generation of graphs with certain desired structural properties.

💡 Research Summary

The paper introduces a novel class of graph invariants—convex graph invariants—that are invariant under node permutations and, crucially, are convex functions of the adjacency matrix. Traditional graph invariants (e.g., degree distribution, clustering coefficient) are typically discrete and non‑convex, making them unsuitable for direct use in convex optimization frameworks. By focusing on convexity, the authors create a bridge between combinatorial graph theory and continuous optimization, enabling the formulation of structural graph constraints as convex sets and the solution of a variety of graph‑related problems via convex programming.

Definition and Basic Examples

A function $f:\mathbb{R}^{n\times n}\rightarrow\mathbb{R}$ is a convex graph invariant if (i) it is permutation-invariant, i.e., $f(PAP^{\top})=f(A)$ for every permutation matrix $P$, and (ii) it is convex in the matrix argument. The paper lists several canonical examples:

- Maximum degree $\Delta(G)=\max_i\sum_j A_{ij}$ – the maximum row sum of the adjacency matrix, which is convex because it is a pointwise maximum of linear functions of $A$.

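- MAXCUT value – the maximum over binary labels $x\in\{\pm1\}^n$ of $\frac{1}{4}\sum_{i,j}A_{ij}(1-x_i x_j)$, where the sum runs over all ordered pairs $(i,j)$ so that each cut edge contributes its weight once. As a pointwise maximum of functions that are linear in $A$, this value is convex in $A$, but computing it is NP-hard. Replacing each label $x_i$ by a unit vector $v_i$ (so that $x_i x_j$ becomes $v_i^{\top}v_j$) yields the well-known Goemans-Williamson SDP relaxation, which is itself a convex graph invariant and a tractable upper bound on the cut value.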

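- Sum of the top $k$ eigenvalues $\sum_{i=1}^k\lambda_i(A)$, where $\lambda_1(A)\ge\cdots\ge\lambda_n(A)$ are the eigenvalues of the symmetric adjacency matrix – convex by Ky Fan's maximum principle, which writes this sum as $\max\{\operatorname{tr}(V^{\top}AV): V\in\mathbb{R}^{n\times k},\ V^{\top}V=I_k\}$, again a pointwise maximum of linear functions of $A$.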
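The first two examples can be spot-checked numerically. The sketch below is a minimal pure-Python illustration under stated assumptions, not the paper's method: `max_degree`, `maxcut`, `permute` and `looks_like_convex_invariant` are hypothetical helper names, MAXCUT is computed exactly by brute force over all $2^n$ labelings (so only tiny graphs are feasible; the Goemans-Williamson SDP is not shown because it needs an SDP solver), and the random tests can only expose violations of the two defining properties, never prove them.

```python
# Hypothetical helpers: a sketch of the definition, not the paper's implementation.
import itertools
import random


def max_degree(A):
    """Maximum degree: the largest row sum of the adjacency matrix A."""
    return max(sum(row) for row in A)


def maxcut(A):
    """Exact MAXCUT value by brute force over all labelings x in {-1, +1}^n.

    Evaluates (1/4) * sum_{i,j} A[i][j] * (1 - x_i * x_j) over ordered pairs,
    so each cut edge contributes its weight once. Exponential in n.
    """
    n = len(A)
    best = 0.0
    for x in itertools.product((-1, 1), repeat=n):
        val = 0.25 * sum(A[i][j] * (1 - x[i] * x[j])
                         for i in range(n) for j in range(n))
        best = max(best, val)
    return best


def permute(A, perm):
    """Relabel nodes: returns the matrix P A P^T for the permutation perm."""
    n = len(A)
    return [[A[perm[i]][perm[j]] for j in range(n)] for i in range(n)]


def random_symmetric(n, rng):
    """Random symmetric weighted adjacency matrix with a zero diagonal."""
    A = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            A[i][j] = A[j][i] = rng.random()
    return A


def looks_like_convex_invariant(f, n=5, trials=50, seed=0, tol=1e-9):
    """Spot-check (not a proof) of permutation invariance and midpoint convexity."""
    rng = random.Random(seed)
    for _ in range(trials):
        A, B = random_symmetric(n, rng), random_symmetric(n, rng)
        perm = list(range(n))
        rng.shuffle(perm)
        # (i) relabeling the nodes must not change the value
        if abs(f(permute(A, perm)) - f(A)) > tol:
            return False
        # (ii) convexity along the midpoint of two random graphs
        mid = [[(a + b) / 2 for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]
        if f(mid) > (f(A) + f(B)) / 2 + tol:
            return False
    return True


if __name__ == "__main__":
    path = [[0, 1, 0], [1, 0, 1], [0, 1, 0]]  # path graph 0 - 1 - 2
    print(max_degree(path), maxcut(path))  # 2 2.0
    print(looks_like_convex_invariant(max_degree), looks_like_convex_invariant(maxcut))
```

Swapping in a function that is not convex, such as the minimum degree (a pointwise minimum of linear functions), will typically make the midpoint test fail.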

These examples illustrate that many quantities of interest in graph algorithms already possess a convex formulation, or admit a tight convex relaxation.
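To make the spectral example concrete without external libraries, the sketch below computes eigenvalues with the classical cyclic Jacobi rotation method (a swapped-in standard technique; any symmetric eigensolver would do) and spot-checks midpoint convexity of $\sum_{i=1}^k\lambda_i(A)$ on random symmetric matrices. The function names, the sweep limit and the tolerances are illustrative assumptions.

```python
# Hypothetical helpers: a dependency-free sketch, not the paper's implementation.
import math
import random


def symmetric_eigenvalues(A, max_sweeps=100, tol=1e-20):
    """Eigenvalues of a symmetric matrix, largest first, via cyclic Jacobi rotations."""
    n = len(A)
    M = [[float(v) for v in row] for row in A]
    for _ in range(max_sweeps):
        off = sum(M[i][j] ** 2 for i in range(n) for j in range(n) if i != j)
        if off < tol:  # off-diagonal mass is negligible: diagonal holds eigenvalues
            break
        for p in range(n - 1):
            for q in range(p + 1, n):
                if M[p][q] == 0.0:
                    continue
                # Choose the rotation angle that zeroes the (p, q) entry.
                theta = (M[q][q] - M[p][p]) / (2.0 * M[p][q])
                t = math.copysign(1.0, theta) / (abs(theta) + math.sqrt(theta * theta + 1.0))
                c = 1.0 / math.sqrt(t * t + 1.0)
                s = t * c
                # M <- J^T M J: update columns p, q, then rows p, q.
                for k in range(n):
                    mkp, mkq = M[k][p], M[k][q]
                    M[k][p] = c * mkp - s * mkq
                    M[k][q] = s * mkp + c * mkq
                for k in range(n):
                    mpk, mqk = M[p][k], M[q][k]
                    M[p][k] = c * mpk - s * mqk
                    M[q][k] = s * mpk + c * mqk
    return sorted((M[i][i] for i in range(n)), reverse=True)


def sum_top_k_eigenvalues(A, k):
    """Sum of the k largest eigenvalues of the symmetric matrix A."""
    return sum(symmetric_eigenvalues(A)[:k])


def random_symmetric(n, rng):
    """Random symmetric weighted adjacency matrix with a zero diagonal."""
    A = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            A[i][j] = A[j][i] = rng.random()
    return A


def midpoint_convex_on_samples(k, n=5, trials=30, seed=1, tol=1e-8):
    """Spot-check f((A+B)/2) <= (f(A)+f(B))/2 for f = sum of top-k eigenvalues."""
    rng = random.Random(seed)
    for _ in range(trials):
        A, B = random_symmetric(n, rng), random_symmetric(n, rng)
        mid = [[(a + b) / 2 for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]
        lhs = sum_top_k_eigenvalues(mid, k)
        rhs = (sum_top_k_eigenvalues(A, k) + sum_top_k_eigenvalues(B, k)) / 2
        if lhs > rhs + tol:
            return False
    return True


if __name__ == "__main__":
    triangle = [[0, 1, 1], [1, 0, 1], [1, 1, 0]]  # eigenvalues 2, -1, -1
    print(round(sum_top_k_eigenvalues(triangle, 1), 6))  # 2.0
    print(midpoint_convex_on_samples(k=2))
```

With NumPy available, `numpy.linalg.eigvalsh` could replace the hand-written solver; the pure-Python version is kept only so the sketch has no dependencies.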

Representation Theorem

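A central theoretical contribution is the representation theorem: every convex graph invariant can be expressed as a supremum of a family of elementary convex graph invariants. For a fixed matrix $P$, the elementary invariant $\Theta_P(A)=\max_{\Pi}\operatorname{tr}(P\,\Pi A\Pi^{\top})$ is the maximum, over permutation matrices $\Pi$, of a linear function of $A$; it is therefore convex, and it is permutation-invariant because relabeling $A$ only reorders the permutations being maximized over. The theorem follows by writing $f$ as the supremum of its affine minorants $\langle P,A\rangle-c_P$ and using permutation invariance to replace each minorant by its maximum over all relabelings of $A$. Formally, for any convex invariant $f$ there exist a set $\mathcal{P}$ of matrices and constants $c_P$ such that

$$f(A)=\sup_{P\in\mathcal{P}}\bigl(\Theta_P(A)-c_P\bigr).$$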
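Elementary invariants of this kind can be evaluated directly for very small graphs. The sketch below assumes the form $\Theta_P(A)=\max_{\Pi}\operatorname{tr}(P\,\Pi A\Pi^{\top})$ and brute-forces the maximum over all $n!$ permutations; in general this maximization is a quadratic assignment problem, so the brute force is for illustration only. The helper names `theta`, `first_row_ones` and `all_ones` are hypothetical. Choosing $P=e_1\mathbf{1}^{\top}$ recovers the maximum degree, and $P=\mathbf{1}\mathbf{1}^{\top}$ gives the sum of all entries (twice the number of edges), so both of these invariants are themselves elementary.

```python
# Hypothetical helpers: brute-force evaluation of Theta_P, for tiny graphs only.
import itertools


def theta(P, A):
    """Theta_P(A) = max over permutation matrices Pi of tr(P Pi A Pi^T).

    Brute force over all n! relabelings, so this is only usable for tiny n.
    """
    n = len(A)
    best = float("-inf")
    for s in itertools.permutations(range(n)):
        # M = Pi A Pi^T has entries M[i][j] = A[s[i]][s[j]];
        # tr(P M) = sum_{i,j} P[i][j] * M[j][i].
        val = sum(P[i][j] * A[s[j]][s[i]] for i in range(n) for j in range(n))
        best = max(best, val)
    return best


def first_row_ones(n):
    """The matrix e_1 1^T: ones in the first row, zeros elsewhere."""
    return [[1 if i == 0 else 0 for _ in range(n)] for i in range(n)]


def all_ones(n):
    """The all-ones matrix 1 1^T."""
    return [[1] * n for _ in range(n)]


if __name__ == "__main__":
    star = [[0, 1, 1, 1], [1, 0, 0, 0], [1, 0, 0, 0], [1, 0, 0, 0]]  # star K_{1,3}
    # Theta for e_1 1^T is the largest column sum, i.e. the maximum degree.
    # Theta for 1 1^T is the sum of all entries, i.e. twice the edge count.
    print(theta(first_row_ones(4), star), theta(all_ones(4), star))  # 3 6
```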
