Bayesian Inference with Optimal Maps

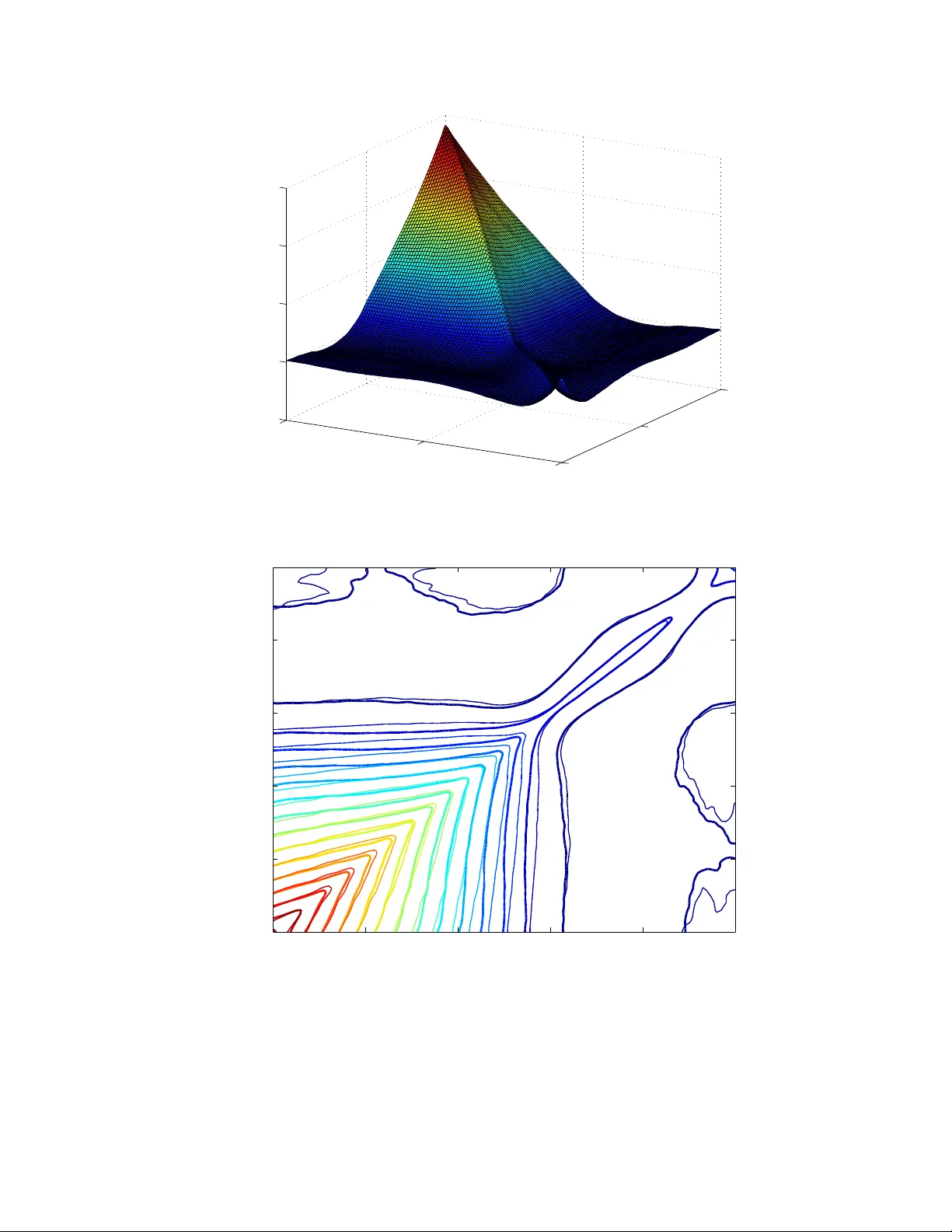

We present a new approach to Bayesian inference that entirely avoids Markov chain simulation, by constructing a map that pushes forward the prior measure to the posterior measure. Existence and uniqueness of a suitable measure-preserving map is estab…

Authors: Tarek A. El Moselhy, Youssef M. Marzouk
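
To make the idea of a map that pushes the prior forward to the posterior concrete, the following is a minimal sketch for a one-dimensional conjugate Gaussian problem, where such a map is known in closed form. It is not the construction used by the authors; it only illustrates what "pushing forward" means in a case simple enough to check by hand. The model (Gaussian prior, one Gaussian observation), the function names `posterior_params` and `make_map`, and all numerical values are assumptions made for illustration.

```python
import random
import statistics


def posterior_params(m0, s0, y, s):
    """Conjugate update for prior N(m0, s0^2) and one observation y ~ N(x, s^2).

    Returns the posterior mean and standard deviation.
    """
    precision = 1.0 / s0**2 + 1.0 / s**2
    var1 = 1.0 / precision
    m1 = var1 * (m0 / s0**2 + y / s**2)
    return m1, var1 ** 0.5


def make_map(m0, s0, m1, s1):
    """Increasing affine map T such that X ~ N(m0, s0^2) implies T(X) ~ N(m1, s1^2)."""
    def T(x):
        return m1 + (s1 / s0) * (x - m0)
    return T


# Hypothetical numbers, chosen only for illustration.
m0, s0 = 0.0, 2.0   # prior mean and standard deviation
y, s = 1.5, 1.0     # observed value and noise standard deviation

m1, s1 = posterior_params(m0, s0, y, s)
T = make_map(m0, s0, m1, s1)

# Draw independent prior samples and push them through the map.
# No Markov chain is simulated at any point.
rng = random.Random(0)
prior_samples = [rng.gauss(m0, s0) for _ in range(50_000)]
posterior_samples = [T(x) for x in prior_samples]

sample_mean = statistics.fmean(posterior_samples)
sample_std = statistics.pstdev(posterior_samples)
```

In this toy case the mapped samples are independent draws from the posterior, so `sample_mean` and `sample_std` should be close to `m1` and `s1`. For non-Gaussian or higher-dimensional posteriors no closed-form map is available in general, and the map has to be constructed numerically.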